Business vs. provider: AI software restrictions to know

How much control do businesses have over the use of AI platforms they buy? Vendor acceptable use contracts and policies can determine an AI deployment's success or failure.

Contractual services are routinely accompanied by terms-of-use stipulations that form a framework of mutual rights, responsibilities, limitations and restrictions governing a customer's use of a provider's AI systems, services, intellectual property and other assets. The framework is typically expressed within a contractual document, and the provider implements the underlying technical and procedural mechanisms needed to enforce it.

While terms of use isn't a new idea and applies to most industries, the explosive growth of AI has spotlighted the importance of AI platform restrictions. Consider the highly publicized dispute between AI provider Anthropic and the Pentagon in early 2026. Anthropic refused to let the U.S. Department of War (DoW) use its Claude AI platform for mass domestic surveillance or the development of autonomous weapons. The Pentagon demanded unrestricted use for broadly termed "defense operations." This disagreement ended any partnership between Anthropic and the DoW, which has subsequently established partnerships with more accommodating providers like OpenAI.

Anthropic's stance and its aftermath will provide public relations and business students with an important leadership case study to debate for years to come. However, this high-profile conflict underscores a far more immediate challenge for AI technology providers concerned with the ethical and societal safety issues tied to their AI platforms.

AI acceptable use restrictions

The operators of a service, such as an AI model or platform, can impose terms, conditions and limitations on its use. Chances are that the business using the AI service will, in turn, offer products and services that carry acceptable use restrictions or other formalized agreements. The focus of such agreements is typically on what the business can't do -- the exclusions -- because that's usually the shorter list.

This article is part of

What is enterprise AI? A complete guide for businesses

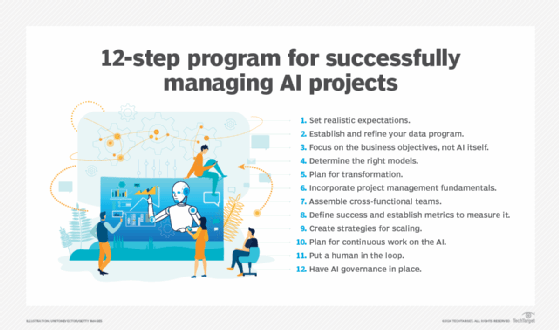

So, what kinds of restrictions exist for AI platforms? Major AI platform providers use policies include a variety of broad provisions, such as the following:

- Restricted content. The idea of "bad" content includes a swath of topics, but users are generally prohibited from using the AI platform to create content that's harmful, hateful, illegal or sexually explicit.

- Deliberately false information. The AI platform or service can't be used to create content that's intentionally designed to mislead, including fraudulent, deceptive or deliberately false.

- Illegal or malicious acts. An AI system can't be used to generate or implement illegal activities, such as generating code to exploit software or hardware vulnerabilities, hacking into a service or system, and creating malware.

- Do no harm. A classic prohibition is to not allow any AI use that's intended to cause physical harm, such as aiding in the development of autonomous weapons. Such prohibitions go to the heart of business and societal ethics.

- Data privacy or security violations. Although an AI system can't readily distinguish between personal, private and public information, the onus is on the user to refrain from applying sensitive data, personally identifiable information and proprietary business information, such as software code. Since it's common practice for AI systems to use new data for model training and refinement, any information a user inputs might soon be used as output by someone else.

- Intellectual property violations. The AI system can't distinguish between public information and IP, which is owned and protected by law. AI users can't input content that's trademarked, copyrighted or protected by other IP rights. Further, the AI system can't be used to create content that violates those legal IP protections.

- AI service re-engineering. This restriction prevents using an AI service to create competitive AI services or platforms, such as benchmarking the AI system's performance or reverse engineering the AI service to facilitate the creation or refinement of a competing AI service.

- AI service tampering. AI service providers implement guardrails intended to monitor and enforce the restrictions included in their use policies. Any effort to tamper with, override or bypass those measures is deemed a direct violation of the policies.

- Regulatory restrictions. Use policies might include broad language related to regulatory issues, reminding users to maintain regulatory or legal compliance based on their location or jurisdiction, such as when data sovereignty applies.

Implementing AI acceptable use restrictions

There are several ways to implement restrictions on the use of AI platforms and systems, including contractual terms, policy restrictions and technical infrastructure or tools.

Contractual terms

Contracts are classic mechanisms that establish a legally binding framework of understanding between a provider and user. Contracts outline how AI uses data, especially proprietary data; how AI outputs can and can't be used; and who accepts responsibility for AI errors. Consequently, contracts form the front line of AI use, and content typically includes the following stipulations:

- Purpose. The intended purpose of the AI platform or service.

- Usage restrictions. These limitations are often clear and deliberate prohibitions of the AI service, such as data input limits, security, tampering and competition.

- Output ownership and use limitations. These terms define who owns AI-generated output and typically impose strict limits on selling, publishing or otherwise using content that involves third-party IP.

- Training restrictions. Mutually accepted limitations often prevent a provider from using the client's inputs, prompts or resulting outputs to train or refine AI models.

- Human review requirements. Contracts can stipulate that some use cases require human review and acceptance to ensure accuracy and manage bias. Typical human-in-the-loop (HITL) requirements include hiring medical and legal practices.

- Liability and indemnification. These terms define who's liable or responsible for harmful, discriminatory or inaccurate AI outputs. Providers often seek to shift responsibility to the users, though users can negotiate to hold the provider responsible under certain circumstances.

- Security and compliance. Such terms and conditions are common and negotiable when users must ensure their AI providers adhere to prevailing data privacy, security and sovereignty regulations.

Policies

Contracts and policies can heavily overlap, but they're often used together as part of an AI acceptable use framework. While a contract is a legally binding and enforceable agreement between parties, a policy provides a far more flexible set of guidelines, rules or procedures.

A contract, for example, might stipulate adherence to HITL policies, but the actual HITL policies might be embodied in a separate policy document. This approach typically benefits AI providers because they can change policies unilaterally at any time, while contract changes require both parties to renegotiate and accept them.

Common policies that can be implemented as separate guidelines in conjunction with contracts often include the following stipulations:

- Data handling restrictions. As AI capabilities and legal landscapes evolve, keeping the actual list of data handling restrictions in a separate policy document lets providers change them at any time.

- Restricted uses. Similarly, a contract might ban restricted uses, but the specific list of restricted uses might be left to a policy document so providers can add or subtract restrictions as AI capabilities and uses change.

- HITL requirements. These policies list the AI use cases in which human review and acceptance of AI outputs are required.

- Approved tools. AI providers might only allow a limited set of tools to interact with AI systems. This policy lists the tools and often prohibits the use of free or public tools that can present security or governance risks.

AI usage policies of major platform providers

AI use policies typically contain vendor software standards, requirements, guidelines and expectations for responsible use by customers, as demonstrated by the following AI platform providers:

Technical elements

The AI provider will enforce its contract's underlying policies through the design and implementation of its infrastructure. Common technical elements of AI enforcement include the following:

- Access and authorization tools. AI providers use role-based access control to restrict AI access based on job function or account status, while authentication systems verify user identity. Access and authorization tools prevent unauthorized users from accessing the AI system. Other tools detect and prevent access from unauthorized applications, such as certain browsers.

- Content monitoring. Varied filters are used to monitor AI inputs and outputs. Input monitoring restrict queries to prevent unacceptable prompts, while output monitoring stop harmful or inappropriate AI system outcomes. Other tools, such as anomaly detection, check for indications of malicious or unusual use.

- Privacy and data protection tools. AI providers use data anonymization and redaction tools to safeguard potentially sensitive data. Anonymization tools alter sensitive data such as users' names and birth dates, while redaction tools block sensitive data outright. These tools help to ensure sensitive data never reaches the AI models.

- AI governance tools. Additional monitoring can log all types of AI activity, such as prompt queries and file exchanges, and lets AI providers handle AI governance by capturing and recording risks or ensuring no discernable risks have been identified.

Limitations of AI usage restrictions

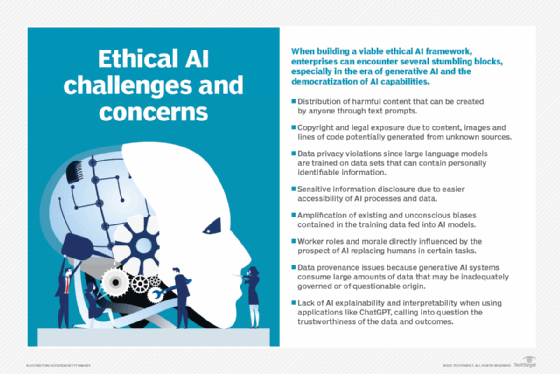

Although AI providers rely on acceptable use policies to govern users' interactions with AI services, applying or enforcing those restrictions objectively can be difficult. Some practical and conceptual limitations to AI use restrictions include the following:

- The definition of risk. AI providers might impose additional monitoring, review or safeguards for uses deemed high risk. But risk is often subjective and always changing. Subjective risk perceptions can lead to excessive restrictions or foregoing additional safeguards.

- The reality of bias. Bias exists in data, and it really doesn't matter how much policy and legislation are designed to prevent it. Even the most unintentional bias will be reflected in AI performance. AI providers can't be responsible for biases if all reasonable efforts are made to mitigate it, and AI platform users can't expect the provider to detect or fix flaws in users' data.

- The limits of monitoring. Monitoring requires extensive resources and staff skills an AI provider might lack. Also, responses to user violations could be blunted by human delay and review.

- Prompting and ambiguity. While filters are useful, they're not perfect. Skilled prompt engineers can construct complex or carefully phrased prompts that elicit an AI response without directly violating existing filters.

- Government exemptions in AI restrictions. AI providers generally accommodate government exemptions for "any lawful purpose" that typically involves national security, defense or intelligence. In practical terms, the provider lets the government use an AI system for purposes that would typically get a business user banned from the platform. Such uses are generally intended for internal consumption, such as within a particular government agency.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 30 years of technical writing experience in the PC and technology industry.