5 types of server virtualization explained

Hypervisor-based might be the most common form of server virtualization in organizations, but there are other options to consider, including hardware-assisted and OS-level.

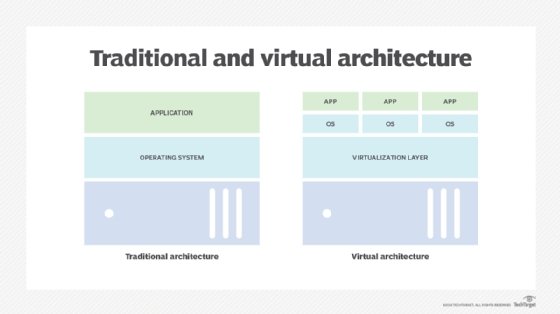

With virtualization, the network, storage and compute resources are abstracted, so applications, services and functions are less dependent on the physical hardware. IT administrators can provide applications, services and functions with their own environment -- which includes an operating system, support software, network and storage resources -- that is less prone to problems caused by other workloads running on the same underlying resources. Or they can share resources to reduce cost and improve overall utilization and performance.

From the early days of standard server-based virtualization -- excluding IBM's long-standing mainframe virtualization capabilities -- admins created virtual machines that contained everything the workload needed to run, including a full copy of an OS, all supporting software and emulated systems such as network interface cards. Some IT shops are moving to less bulky approaches, such as containerization, where the workload is minimized into a package that sits on top of virtualized resources and shares OS capabilities.

Compute resource virtualization is carried out in several ways, including hardware-based virtualization, hypervisors and software-based virtualization. Because each approach isolates workloads and shares resources differently, admins must choose their virtualization method carefully.

1. Hardware virtualization

In this case, the system provides virtualization by assigning parts of the CPU to different workloads. This is a major aspect of IBM's Power architecture, where portions of a core or whole cores can be carved out to create dedicated platforms for workloads, with dynamic allocation of additional resources as necessary. That way, different workloads can be provided with dedicated environments that can grow and shrink as the workload requires, and bad behavior by any single workload is isolated from other workloads.

Hardware virtualization also enables workloads that need greater availability -- VPN or antivirus engines, for example -- to have dedicated resources that can't be called on by other workloads. Intel and AMD have less of a focus on full hardware virtualization, instead using Intel Virtualization Technology and AMD Virtualization, respectively, in a hardware-assisted approach.
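The idea of carving dedicated, resizable shares out of a set of cores can be sketched with a toy model. This is only an illustration of the bookkeeping involved, not IBM's or any vendor's interface: the `CorePool` class, its `resize` and `free_units` methods and the workload names are all hypothetical, and capacity is counted in hundredths of a core as an assumption to keep the arithmetic exact.

```python
from dataclasses import dataclass, field


@dataclass
class CorePool:
    """Toy pool of CPU capacity, counted in hundredths of a core (hypothetical)."""
    total_units: int                      # e.g. 400 = 4 whole cores
    partitions: dict = field(default_factory=dict)

    def free_units(self) -> int:
        """Capacity not yet dedicated to any workload."""
        return self.total_units - sum(self.partitions.values())

    def resize(self, workload: str, units: int) -> None:
        """Create, grow or shrink a workload's dedicated share."""
        if units < 0:
            raise ValueError("units must be non-negative")
        current = self.partitions.get(workload, 0)
        # Only the extra capacity has to come out of the free pool, so one
        # workload can never take units already dedicated to another.
        if units - current > self.free_units():
            raise RuntimeError(f"not enough free capacity for {workload}")
        if units == 0:
            self.partitions.pop(workload, None)
        else:
            self.partitions[workload] = units


pool = CorePool(total_units=400)
pool.resize("database", 250)   # 2.5 cores
pool.resize("vpn", 50)         # half a core, dedicated
pool.resize("database", 300)   # grow as the workload requires
```

In this sketch, a request that would eat into another workload's dedicated share is refused rather than satisfied, which mirrors the isolation property described above.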

2. Hardware-assisted virtualization

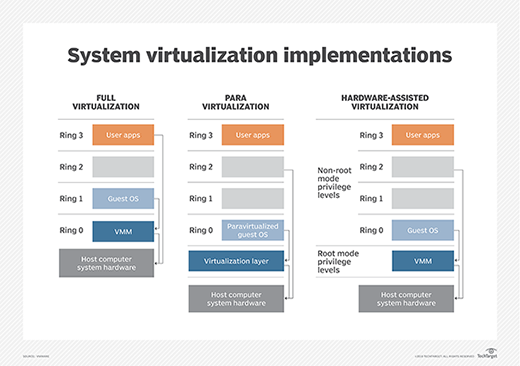

With hardware-assisted virtualization, the OS and other software do the heavy lifting, but the software calls upon hardware capabilities to provide optimized virtualization with minimal performance loss. APIs pass calls from the application layer down to the hardware, removing much of the intrusive emulation and call handling from the code execution path.

Hardware-assisted virtualization is generally considered a function of hypervisor-based virtualization in conjunction with the underlying available CPUs.
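On a Linux x86 host, one way to see whether the CPU advertises these extensions is to look at the feature flags in `/proc/cpuinfo`, where `vmx` indicates Intel Virtualization Technology and `svm` indicates AMD Virtualization. The following is a minimal sketch under that assumption; the function name `hw_virt_extension` is made up for illustration, and a missing flag can also mean the feature is disabled in firmware or hidden from a guest.

```python
from pathlib import Path
from typing import Optional


def hw_virt_extension(cpuinfo_text: str) -> Optional[str]:
    """Return the virtualization extension named by the CPU flags, if any."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags = set(value.split())
            if "vmx" in flags:
                return "Intel Virtualization Technology"
            if "svm" in flags:
                return "AMD Virtualization"
    return None


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")   # Linux-specific path
    if cpuinfo.exists():
        print(hw_virt_extension(cpuinfo.read_text()))
```

The parser takes the file contents as a string, so it can be exercised with sample text on systems that have no `/proc/cpuinfo`.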

3. Hypervisor-based virtualization

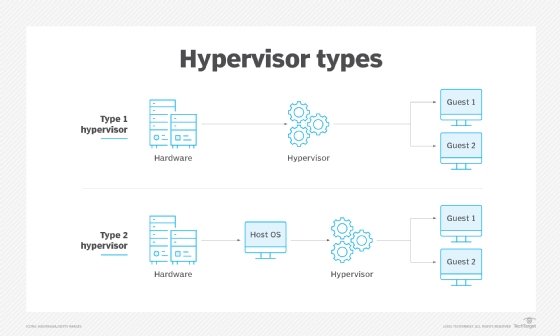

Hypervisor-based virtualization is the most common form of virtualization in organizations' data centers. Type 1 hypervisors, also known as bare-metal hypervisors, include VMware vSphere/ESXi, Microsoft Hyper-V and Linux KVM. With Type 1 hypervisors, the virtualization layer runs directly on the hardware rather than on top of a host OS, creating a virtualized hardware platform that multiple guest OS instances can interact with through the hypervisor layer.

Type 2 hypervisors, also known as hosted hypervisors, reside on top of the host OS. They are generally used on desktops to run guest OSes rather than for server virtualization. Type 2 hypervisor examples include Oracle VM VirtualBox, Parallels Desktop and VMware Fusion.

4. Paravirtualization

Full virtualization is when the workload placed in the environment is unaware that it isn't running directly on a physical platform. Paravirtualization takes a slightly different approach. The hardware environment isn't emulated with paravirtualization -- each workload operates in its own isolated domain.

Products such as Xen -- which supports both full virtualization and paravirtualization -- Oracle VM for x86 and IBM LPAR use a modified OS that understands the paravirtualization layer and optimizes functions such as privileged calls from the workload down to the hardware.

5. OS-level virtualization

OS-level virtualization -- otherwise known as containerization -- has gained a great deal of favor over the past few years. Containerization enables different workloads to share the same underlying resources in a mutually distrusting manner: Any problems caused by one workload shouldn't create problems for other workloads sharing the same underlying resources. This wasn't always the case. Early instances of Docker allowed privileged calls from one container to disrupt the physical environment, causing a domino effect of container corruption. Now, privileged calls to protected underlying resources are disabled by default.

As with hardware-assisted virtualization, performance is optimized as calls are made directly to the underlying OS without any need for emulation. With the emergence of Docker as an easy way to create workloads that can be moved from one platform to another, while minimizing the amount of resources used to provide virtualization, OS-level virtualization is embedded into many cloud platforms and supported by a majority of DevOps systems. Other platforms that offer OS-level virtualization include Linux Containers and IBM Workload Partitions for AIX.
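On Linux, container runtimes build this isolation from kernel namespaces, while every container still talks to the same kernel. The sketch below is one hedged way to observe that: it reads the namespace identifiers that Linux exposes as symbolic links under `/proc/<pid>/ns` (in the form `pid:[4026531836]`) and reports which namespaces two processes have in common. The helper names `parse_ns_link`, `namespaces_of` and `shared_namespaces` are invented for this example.

```python
import os
import re

# Namespace links look like "pid:[4026531836]" on Linux.
_NS_LINK = re.compile(r"^(\w+):\[(\d+)\]$")


def parse_ns_link(link: str) -> tuple:
    """Split a namespace link target into (kind, identifier)."""
    match = _NS_LINK.match(link)
    if match is None:
        raise ValueError(f"unexpected namespace link: {link!r}")
    return match.group(1), int(match.group(2))


def namespaces_of(pid="self") -> dict:
    """Map each entry in /proc/<pid>/ns to its identifier (Linux only)."""
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    for name in os.listdir(ns_dir):
        _, ident = parse_ns_link(os.readlink(os.path.join(ns_dir, name)))
        result[name] = ident
    return result


def shared_namespaces(a: dict, b: dict) -> list:
    """Names of the namespaces that two processes have in common."""
    return sorted(name for name in a.keys() & b.keys() if a[name] == b[name])
```

Calling `shared_namespaces(namespaces_of(), namespaces_of(1))` on a Linux host, with enough privileges to read the other process's entries, lists the namespaces the current process shares with PID 1. A process inside a container would typically share fewer of them with the host's processes.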

Cloud platforms tend to use either hypervisor-based or OS-level virtualization or will layer OS-level virtualization capabilities on top of their hypervisor-based platforms.

The choice of virtualization type comes down to which guest OSes must be supported, the number of workloads to be installed and managed, the overall performance required and the overall cost, as license fees can be high when looking at virtualizing a whole platform of hundreds of thousands of physical servers.