virtualization

What is virtualization?

Virtualization is the creation of a virtual version of an actual piece of technology, such as an operating system (OS), a server, a storage device or a network resource.

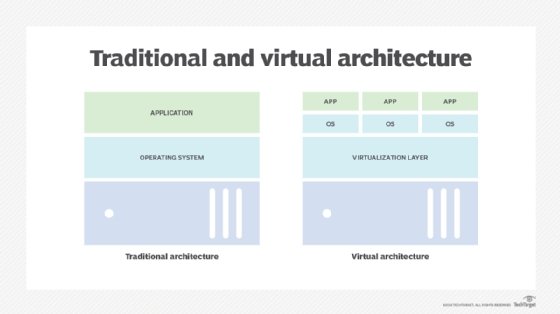

Virtualization uses software that simulates hardware functionality to create a virtual system. This practice lets IT organizations run multiple OSes, more than one virtual system and various applications on a single server. The benefits of virtualization include greater efficiency and economies of scale.

OS virtualization uses software that enables a piece of hardware to run multiple operating system images at the same time. The technology got its start on mainframes decades ago to save on expensive processing power.

How virtualization works

Virtualization technology abstracts an application, guest operating system or data storage away from its underlying hardware or software.

Organizations that divide their hard drives into different partitions already engage in virtualization. A partition is the logical division of a hard disk drive that, in effect, creates two or more separate hard drives.
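As a rough illustration of the partitioning idea, the sketch below splits one disk into logical drives and translates a partition-relative block number into a physical one. The names `Partition` and `split_disk`, and the 1,000-block disk, are hypothetical and chosen only for this example; real partition tables carry far more detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    name: str
    start: int  # first physical block owned by this partition
    size: int   # number of blocks in this partition

    def to_physical(self, logical_block: int) -> int:
        """Translate a partition-relative block number to a physical block."""
        if not 0 <= logical_block < self.size:
            raise IndexError(f"block {logical_block} is outside partition {self.name}")
        return self.start + logical_block


def split_disk(total_blocks: int, sizes: dict[str, int]) -> list[Partition]:
    """Carve one physical disk into consecutive, non-overlapping partitions."""
    if sum(sizes.values()) > total_blocks:
        raise ValueError("partitions do not fit on the disk")
    partitions, cursor = [], 0
    for name, size in sizes.items():
        partitions.append(Partition(name, cursor, size))
        cursor += size
    return partitions


# A hypothetical 1,000-block disk split into two logical drives.
system, data = split_disk(1000, {"system": 400, "data": 600})
print(system.to_physical(0))  # 0
print(data.to_physical(0))    # 400
```

Each partition sees its own block 0, even though both live on the same physical device.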

Server virtualization is a key use of virtualization technology. It uses a software layer called a hypervisor to emulate the underlying hardware. This includes the central processing unit (CPU), memory, input/output and network traffic.

Hypervisors take the physical resources and separate them for the virtual environment. They can sit on top of an OS or be directly installed onto the hardware.

Xen hypervisor is an open source software program that manages the low-level interactions that occur between virtual machines (VMs) and physical hardware. It enables the simultaneous creation, execution and management of various VMs in one physical environment. With the help of the hypervisor, the guest OS, which normally interacts with true hardware, does so with a software emulation of that hardware.

Although OSes running on true hardware often outperform those running on virtual systems, most guest OSes and applications don't fully use the underlying hardware. Virtualization removes dependency on a given hardware platform, creating greater flexibility, control and isolation for environments. Plus, virtualization has spread beyond servers to include applications, networks, data management and desktops.

The virtualization process follows these steps:

- Hypervisors detach physical resources from their physical environments.

- Resources are taken from the physical environment and divided among various virtual environments.

- System users work with and perform computations within the virtual environment.

Once the virtual environment is running, a user or program can send an instruction that requires extra resources from the physical environment. In response, the hypervisor relays the message to the physical machine and stores the changes.
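The steps above, including a later request for extra resources, can be sketched with a toy resource tracker. This is a simplified model under stated assumptions, not how any real hypervisor is implemented: the class `ToyHypervisor`, its methods and the host sizes are all hypothetical.

```python
class ToyHypervisor:
    """Tracks a host's physical CPUs and memory and hands shares to VMs."""

    def __init__(self, cpus: int, memory_gb: int):
        # Resources that are still unassigned on the physical host.
        self.free = {"cpus": cpus, "memory_gb": memory_gb}
        # Resources currently assigned to each virtual machine.
        self.vms: dict[str, dict[str, int]] = {}

    def create_vm(self, name: str, cpus: int, memory_gb: int) -> None:
        """Take resources from the host and assign them to a new VM."""
        if name in self.vms:
            raise ValueError(f"VM {name!r} already exists")
        self.vms[name] = {"cpus": 0, "memory_gb": 0}
        try:
            self.grow_vm(name, cpus=cpus, memory_gb=memory_gb)
        except RuntimeError:
            del self.vms[name]  # creation failed, so leave no empty VM behind
            raise

    def grow_vm(self, name: str, cpus: int = 0, memory_gb: int = 0) -> None:
        """Handle a running VM's request for extra physical resources."""
        request = {"cpus": cpus, "memory_gb": memory_gb}
        # Check everything first so a failed request changes nothing.
        for resource, amount in request.items():
            if amount > self.free[resource]:
                raise RuntimeError(f"not enough free {resource} on the host")
        # Record the change on both the host side and the VM side.
        for resource, amount in request.items():
            self.free[resource] -= amount
            self.vms[name][resource] += amount


host = ToyHypervisor(cpus=16, memory_gb=64)
host.create_vm("web", cpus=4, memory_gb=8)
host.create_vm("db", cpus=8, memory_gb=32)
host.grow_vm("web", memory_gb=8)  # the guest asks for more memory
print(host.free)  # {'cpus': 4, 'memory_gb': 16}
```

The guest never touches the host's bookkeeping directly; every request goes through the hypervisor object, which decides whether the physical machine can satisfy it.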

The virtual environment is often referred to as a guest machine or virtual machine. The VM acts like a single data file that can be transferred from one computer to another and opened in both. It should perform the same way on every computer.

Types of virtualization

Hypervisors are the technology that enables virtual abstraction. Type 1, the most common hypervisor, sits directly on bare metal and virtualizes the hardware platform. KVM virtualization is an open source, Linux kernel-based hypervisor that provides Type 1 virtualization benefits. A Type 2 hypervisor requires a host operating system and is more often used for testing and labs.

There are six areas of IT where virtualization is frequently used:

- Network virtualization combines the available resources in a network by splitting up the available bandwidth into connectivity channels, each of which runs independently from the others. With a virtual network, each channel can be assigned or reassigned to a particular server or device in real time. Much like a partitioned hard drive, virtualization separates the network into manageable parts, disguising the true complexity of the network.

- Storage virtualization pools physical storage from multiple network storage devices into a centrally managed console. Storage virtualization is commonly used in storage area networks.

- Server virtualization hides server resources, including the number and identity of individual physical servers, CPUs and OSes, from users. Virtual servers spare users from having to manage the complicated details of server resources. They also increase server, processor and OS sharing as well as resource use and expansion capacity.

- Data virtualization abstracts the technical details of data and data management, such as location, performance and format. Instead, data virtualization focuses on broader access and resiliency.

- Desktop virtualization virtualizes a workstation load rather than a server. This lets the user access a desktop remotely, typically using a thin client at the desk. With virtual desktop infrastructure, the workstation essentially runs in a data center server and access is more secure and portable. However, the OS license and infrastructure still must be accounted for.

- Application virtualization abstracts the application layer away from the OS. This lets the application run in an encapsulated form without dependence on the underlying OS. Application virtualization enables a Windows application to run on Linux and vice versa, and it adds a level of isolation.
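To make the storage virtualization item more concrete, here is a minimal sketch of pooling: several devices are presented as one logical volume, and a lookup finds which device actually holds a given block. The class `StoragePool`, the device names and the capacities are assumptions made up for this example; commercial storage virtualization products work at a much finer level of detail.

```python
from bisect import bisect_right
from itertools import accumulate


class StoragePool:
    """Presents several physical devices as one contiguous logical volume."""

    def __init__(self, devices: dict[str, int]):
        # devices maps a device name to its capacity in blocks.
        self.names = list(devices)
        # Running totals mark where each device's range ends in the pool.
        self.ends = list(accumulate(devices.values()))

    @property
    def capacity(self) -> int:
        """Total number of blocks the pool exposes."""
        return self.ends[-1] if self.ends else 0

    def locate(self, logical_block: int) -> tuple[str, int]:
        """Return (device name, block on that device) for a pooled block."""
        if not 0 <= logical_block < self.capacity:
            raise IndexError("block is outside the pool")
        index = bisect_right(self.ends, logical_block)
        start = self.ends[index - 1] if index else 0
        return self.names[index], logical_block - start


pool = StoragePool({"array-a": 500, "array-b": 300, "array-c": 200})
print(pool.capacity)     # 1000
print(pool.locate(499))  # ('array-a', 499)
print(pool.locate(500))  # ('array-b', 0)
print(pool.locate(950))  # ('array-c', 150)
```

Users of the pool address a single 1,000-block volume and never need to know how many devices sit behind it.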

Virtualization is part of an overall trend in enterprise IT that includes autonomic computing, in which the IT environment automates or manages itself based on perceived activity. It's also tied to the on-demand, utility computing trend in which clients pay for computer processing power only as needed. Virtualization centralizes administrative tasks while improving scalability.

Advantages of virtualization

The overall benefit of virtualization is that it helps organizations maximize output. More specific advantages include the following:

- Lower costs. Virtualization reduces the number of hardware servers companies and data centers require. This lowers the overall cost of buying and maintaining large amounts of hardware.

- Easier disaster recovery. DR is simple in a virtualized environment. Regular snapshots provide up-to-date data, letting organizations easily back up and recover VMs, avoiding unnecessary downtime. In an emergency, a virtual machine can migrate to a new location within minutes.

- Easier testing. Testing is less complicated in a virtual environment. Even in the event of a large mistake, the test can continue without stopping and returning to the beginning. The test simply returns to the previous snapshot and proceeds.

- Faster backups. Virtualized environments take automatic snapshots throughout the day to guarantee all data is up to date. VMs can easily migrate between host machines and be efficiently redeployed.

- Improved productivity. Virtualized environments require fewer physical resources, which results in less time spent managing and maintaining servers. Tasks that take days or weeks in physical environments are done in minutes. This lets staff members spend their time on more productive tasks, such as raising revenue and facilitating business initiatives.

- Single-minded servers. Virtualization provides a cost-effective way to separate email, database and web servers, creating a more comprehensive and dependable system.

- Optimized deployment and redeployment. When a physical server crashes, the backup server might not always be ready or up to date. If this is the case, then the redeployment process can be time-consuming and tedious. However, in a virtualized data center, virtual backup tools expedite the process to minutes.

- Reduced heat and improved energy savings. Companies that use a lot of hardware servers risk overheating their physical computing resources. Virtualization decreases the number of servers used for data management, which lowers both heat output and energy use.

- Environmental consciousness. Companies and data centers that use lots of electricity for hardware have a large carbon footprint. Virtualization significantly decreases the amount of cooling and power required and the overall carbon footprint.

- Cloud migration. VMs can be deployed from the data center to build a cloud-based IT infrastructure. The ability to embrace a cloud-based approach with virtualization eases migration to the cloud.

- Lack of vendor dependency. VMs are agnostic in terms of hardware configuration. As a result, virtualizing hardware and software means that a company no longer requires a single vendor for these physical resources.
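Several of the advantages above, including easier testing and disaster recovery, depend on snapshots. The sketch below shows the basic snapshot-and-rollback idea using an in-memory dictionary as a stand-in for VM state. The class `SnapshottingVM` and the sample state are hypothetical, and real snapshot mechanisms typically record only changed data rather than copying everything.

```python
import copy


class SnapshottingVM:
    """Keeps point-in-time copies of a VM's state for later rollback."""

    def __init__(self, state: dict):
        self.state = state
        self._snapshots: list[dict] = []

    def snapshot(self) -> int:
        """Save a deep copy of the current state and return its ID."""
        self._snapshots.append(copy.deepcopy(self.state))
        return len(self._snapshots) - 1

    def restore(self, snapshot_id: int) -> None:
        """Replace the current state with a copy of a saved snapshot."""
        self.state = copy.deepcopy(self._snapshots[snapshot_id])


vm = SnapshottingVM({"packages": ["nginx"], "config": {"port": 80}})
before_test = vm.snapshot()

# A test run makes changes that turn out to be a mistake.
vm.state["packages"].append("experimental-module")
vm.state["config"]["port"] = 8080

# Roll back to the snapshot instead of rebuilding from scratch.
vm.restore(before_test)
print(vm.state)  # {'packages': ['nginx'], 'config': {'port': 80}}
```

Deep copies are used on both save and restore so that later changes to the running state cannot alter a stored snapshot.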

Limitations of virtualization

Before converting to a virtualized environment, organizations should consider the various limitations:

- Costs. The investment required for virtualization software and hardware can be expensive. If the existing infrastructure is more than five years old, organizations should consider an initial renewal budget. Many businesses work with a managed service provider to offset costs with monthly leasing and other purchase options.

- Software licensing considerations. Vendors view software use within a virtualized environment in different ways. It's important to understand how a specific vendor does this.

- Time and effort. Converting to virtualization takes time and has a learning curve that requires IT staff to be trained in virtualization. Furthermore, some applications don't adapt well to a virtual environment. IT staff must be prepared to face these challenges and address them prior to converting.

- Security. Virtualization comes with unique security risks. Data is a common target for attacks, and the chance of a data breach increases with virtualization.

- Complexity. In a virtual environment, users can lose control of what they can do because several parts of the environment must collaborate to perform the same task. If any part doesn't work, the entire operation can fail.

Virtualization vs. containerization

Virtualization and app containerization both run multiple instances of software apps or OSes on the same physical hardware. However, the two approaches work differently. Virtualization relies on hypervisors as intermediaries between the underlying, centralized physical hardware and the instances of software and OSes. Running different types of apps with different operating system requirements on the same hypervisor is an ideal use of virtualization.

Containerization doesn't require hypervisors. Instead, it relies on a single host kernel as the intermediary between the physical hardware and the software instances, which are referred to as containers. Containers share the same OS, making them an efficient alternative to virtualization.
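A back-of-the-envelope model can show why sharing one OS tends to be more efficient. The sketch assumes each VM carries its own guest OS while all containers share a single host OS. Every number here is invented for illustration and is not a measurement, and the model ignores hypervisor and container runtime overhead.

```python
def vm_memory_mb(app_mb: list[int], guest_os_mb: int) -> int:
    """Each app runs in its own VM, so each one pays for a full guest OS."""
    return sum(app_mb) + guest_os_mb * len(app_mb)


def container_memory_mb(app_mb: list[int], shared_os_mb: int) -> int:
    """All apps run as containers on one host OS, which is paid for once."""
    return sum(app_mb) + shared_os_mb


# Hypothetical figures: three apps and a 512 MB operating system.
apps = [200, 300, 150]
print(vm_memory_mb(apps, guest_os_mb=512))          # 2186
print(container_memory_mb(apps, shared_os_mb=512))  # 1162
```

The gap grows with the number of instances, but the model also shows the tradeoff: the container version only works when every app can run on the same OS.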

Containers are ideal for software developers building a modular application. They can develop each component as a container or microservice and later combine them into a complete app.

Virtualization vs. cloud computing

Virtualization is often an integral part of a cloud computing infrastructure. However, cloud computing can exist without virtualization. Both virtualization and cloud computing run software on many servers. However, there are important distinctions:

- Scope. Cloud computing uses virtualization strategies to provide customers with different applications -- each having distinct OS requirements -- using the same physical hardware. However, cloud computing is broader and includes more infrastructure, such as data security, that doesn't require virtualization.

- Control. Cloud providers maintain control of the underlying physical hardware, whereas virtualization without cloud computing requires an organization to manage and handle its own proprietary hardware.

- Accessibility. Cloud computing services are accessible from remote environments, while virtualization is mainly on premises. To operate in the cloud, a virtualized environment requires cloud computing.

- Scalability and elasticity. Both cloud computing and virtualization are scalable approaches that allow for as many instances of software to run on physical hardware as needed. However, virtualization requires more resource-intensive upgrades to scale than cloud computing.

History of virtualization

The roots of virtualization go back to the 1960s, when hypervisors were developed and used to run multiple computers on premises and handle business processes, such as batch processing.

In the 1990s, large enterprises that could afford to build and run centralized IT software stacks ran their applications on premises. Virtualization became a popular method for running legacy applications without being bound to a single OS. Organizations could implement less expensive server technology with this approach.

Virtualization remained obscure until around the 2000s when it began to gain traction. Throughout the decades, companies such as IBM, Microsoft, Red Hat and VMware released product offerings that provided new virtualization options. For example, VMware released Storage vMotion in 2007, which was used for storage virtualization. Microsoft released its Hyper-V for VM in 2008 before releasing Windows Azure in 2010, offering more enhanced virtualization capabilities.

Virtualization refers to full-scale virtualization; paravirtualization is a different approach involving partial virtualization.