Virtual servers vs. physical servers: What are the differences?

Virtualization has cemented itself in the enterprise data center, but that doesn't mean physical servers are obsolete. They each have their benefits and uses.

To better understand the differences between virtual servers and physical servers, administrators should first look at each one individually. Although virtual servers need a physical server to run on, that architecture isn't the same as a physical server dedicated to running a web, database or file server.

What is a physical server?

A physical server is a hardware server with its own motherboard, CPU, memory and I/O controllers. It's considered a bare-metal server because the OS uses its hardware directly, rather than through a virtualization platform.

A physical server runs a single instance of an OS, such as Windows or Linux, and very often it's used to run a single application.

What is a virtual server?

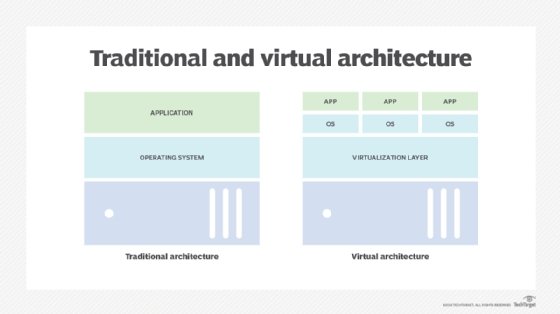

A virtual server or virtual machine (VM), terms used interchangeably here, is a software-based representation of a physical server. The software layer that abstracts CPU, memory, storage and network resources from the underlying hardware and assigns them to VMs is called a hypervisor.

A bare-metal (type 1) hypervisor runs directly on the server's hardware in place of an OS. Each VM is isolated from the others and runs its own independent OS on top of the hypervisor.

A single physical machine can be divided into many VMs, each with its own purpose. This differs from the bare-metal example above, in which the physical server runs only one service.
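
To make the hypervisor's role more concrete, here is a minimal Python sketch, assuming a simplified model in which the hypervisor tracks only CPU cores and memory. The `Host` and `VM` classes and the `create_vm` method are hypothetical illustrations, not the API of any real hypervisor.

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    """A virtual server: a named slice of host resources running its own OS."""
    name: str
    os: str
    vcpus: int
    memory_gb: int


@dataclass
class Host:
    """A physical server whose resources a (hypothetical) hypervisor divides among VMs."""
    cores: int
    memory_gb: int
    vms: list[VM] = field(default_factory=list)

    def free_memory_gb(self) -> int:
        # Memory not yet assigned to any VM.
        return self.memory_gb - sum(vm.memory_gb for vm in self.vms)

    def create_vm(self, vm: VM) -> bool:
        # A single VM cannot have more vCPUs than the host has cores.
        if vm.vcpus > self.cores:
            return False
        # Memory is treated as a hard limit: refuse the VM if it doesn't fit.
        # Total vCPUs across all VMs are NOT capped, because hypervisors
        # commonly schedule more vCPUs than there are physical cores.
        if vm.memory_gb > self.free_memory_gb():
            return False
        self.vms.append(vm)
        return True


host = Host(cores=32, memory_gb=256)
print(host.create_vm(VM("web01", "Linux", vcpus=4, memory_gb=16)))          # True
print(host.create_vm(VM("db01", "Windows Server", vcpus=8, memory_gb=64)))  # True
print(host.create_vm(VM("big01", "Linux", vcpus=16, memory_gb=200)))        # False
print(host.free_memory_gb())                                                # 176
```

One physical host ends up running several independent OS instances, each with its own purpose, which is the contrast with the single-OS physical server described earlier.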

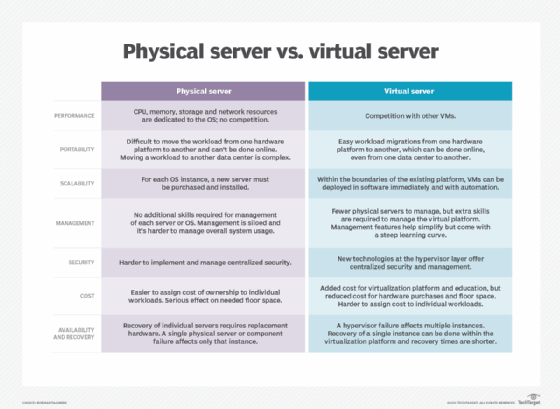

Virtual servers vs. physical servers: Key differences

Before discussing some of the main differences between virtual servers and physical servers, let's look at a visual that compares the two:

A short history of the physical-to-virtual transition

For many years, running physical servers was the only option. Data centers were filled with dedicated servers, each doing its own job, and in that respect management was simple: if there was a problem with a server, it affected only that server, and admins could focus all their attention on it for troubleshooting and maintenance.

Dedicated servers come at a high cost, though, because of the floor space they need in the data center. In addition, most organizations found that their servers ran at only a small fraction of their capacity. Replacing a server in a physical environment requires an actual replacement server, and disaster recovery (DR) requires close to a one-to-one ratio of production to failover servers.

In the early 21st century, VMware introduced server virtualization for the Intel platform.

The benefits of virtualization

One of the big drivers of virtualization adoption was server consolidation: workloads that originally needed 10 or 15 physical servers could run as 10 or 15 VMs on a single physical server. This is still one of virtualization's main advantages, and consolidation ratios have increased over the years.
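
The consolidation arithmetic can be sketched as follows, assuming every old server is the same size as the new host, CPU is the only constraint and hosts should stay at or below a target utilization. The function names, the 75% target and the 5% and 10% average loads are hypothetical figures chosen for illustration.

```python
def hosts_needed(server_cpu_percent: list[int], target_host_percent: int = 75) -> int:
    """Hosts needed to absorb the servers' combined CPU load (integer ceiling division)."""
    total = sum(server_cpu_percent)
    return max(1, -(-total // target_host_percent))


def consolidation_ratio(server_cpu_percent: list[int], target_host_percent: int = 75) -> float:
    """Old physical servers per new virtualization host."""
    return len(server_cpu_percent) / hosts_needed(server_cpu_percent, target_host_percent)


lightly_loaded = [5] * 15   # 15 servers averaging 5% CPU each
busier = [10] * 15          # 15 servers averaging 10% CPU each
print(hosts_needed(lightly_loaded), consolidation_ratio(lightly_loaded))  # 1 15.0
print(hosts_needed(busier), consolidation_ratio(busier))                  # 2 7.5
```

Under these assumptions, 15 servers idling at 5% CPU fit on one host, a 15:1 ratio; at 10% each, they need two hosts. Real sizing would also account for memory, storage I/O and failover headroom.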

Another benefit of virtualization is that relocating a VM to different hardware, restoring it, and performing DR and failover are much simpler. The benefits grew further when VMware introduced vMotion, which live-migrates running VMs to another physical host. Admins can replace hardware without service interruption and balance workloads across all available hardware to eliminate bottlenecks.
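
Balancing workloads across hosts can be illustrated with a simple greedy heuristic: place the largest VMs first, each on whichever host currently carries the lowest load. This is a hypothetical sketch with made-up host names and load units; it is not how vMotion or any VMware scheduler actually decides placement.

```python
def balance(vm_loads: dict[str, int], hosts: list[str]) -> tuple[dict[str, list[str]], dict[str, int]]:
    """Greedy placement: largest VM first, each onto the currently least-loaded host."""
    placement = {h: [] for h in hosts}
    load = {h: 0 for h in hosts}
    # Sort VMs by demand, biggest first; big VMs are the hardest to fit later.
    for vm, demand in sorted(vm_loads.items(), key=lambda kv: kv[1], reverse=True):
        target = min(hosts, key=lambda h: load[h])  # ties go to the first host listed
        placement[target].append(vm)
        load[target] += demand
    return placement, load


placement, load = balance({"db": 40, "app": 30, "web1": 20, "web2": 20}, ["host-a", "host-b"])
print(placement)  # {'host-a': ['db', 'web2'], 'host-b': ['app', 'web1']}
print(load)       # {'host-a': 60, 'host-b': 50}
```

Because VMs aren't tied to specific hardware, a placement like this can be recomputed and applied by migrating VMs, something a fleet of dedicated physical servers can't do.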

Comparing costs

Because startup costs, which include hypervisor licenses and employee training, are higher with virtualization, it's easy to assume they outweigh the hardware savings, especially as hardware prices decline. In the long run, however, the savings become evident when the first regular hardware refresh of the traditional environment would be due.
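
The break-even argument can be checked with a simple cumulative-cost model. All dollar figures, the five-year refresh cycle and the function names below are hypothetical assumptions for illustration, not real pricing.

```python
from typing import Optional


def cumulative_costs(upfront: int, yearly: int, refresh: int, refresh_every: int, years: int) -> list[int]:
    """Running total cost at the end of each year, including periodic hardware refreshes."""
    total = upfront
    totals = []
    for year in range(1, years + 1):
        total += yearly                  # power, cooling, floor space, support
        if year % refresh_every == 0:
            total += refresh             # replacing the hardware
        totals.append(total)
    return totals


def break_even_year(physical: list[int], virtual: list[int]) -> Optional[int]:
    """First year (1-based) in which the virtual environment costs no more, else None."""
    for year, (p, v) in enumerate(zip(physical, virtual), start=1):
        if v <= p:
            return year
    return None


# Hypothetical: 15 physical servers at $8,000 each, versus 2 hosts at $25,000 each
# plus $60,000 in hypervisor licenses, $25,000 in training and $10,000/yr license support.
physical = cumulative_costs(upfront=15 * 8_000, yearly=15 * 1_000,
                            refresh=15 * 8_000, refresh_every=5, years=6)
virtual = cumulative_costs(upfront=2 * 25_000 + 60_000 + 25_000, yearly=2 * 1_500 + 10_000,
                           refresh=2 * 25_000, refresh_every=5, years=6)
print(break_even_year(physical, virtual))  # 5
```

With these made-up numbers, virtualization costs more for the first four years but becomes cheaper in year 5, when the physical environment must replace all 15 servers while the virtual environment replaces only two hosts.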

Added value is also something to consider. Over the past two decades, other vendors and open source projects introduced hypervisors such as Hyper-V, Xen and KVM. Increased competition spurred new features that made virtualization a must-have. Given the flexibility, automation, software-based scalability, migration and VM management features, it's difficult to argue that deploying the same workloads on dedicated physical servers is simpler or faster.

Virtualization also makes it possible to run workloads in a cloud environment, either permanently, for organizations that don't want to run their own servers, or through cloud bursting, to increase capacity temporarily when demand spikes.

When to run physical servers

The most common reason to run a workload on a dedicated server is to let that workload use all of the server's resources. When a high-end database server requires 64 CPU cores, 32 TB of RAM and 100 Gb Ethernet, it can make sense to run it on a dedicated server with those resources.

On the other hand, if that workload runs on top of a hypervisor with sufficient capacity, then the benefits of uniform management, workload migration and portability can outweigh the possible performance penalty of the virtualization overhead.
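
The trade-off between a dedicated server and a sufficiently large hypervisor host can be sketched as a capacity check. This assumes, hypothetically, that the hypervisor reserves a flat 10% of cores and memory for its own overhead; real overhead varies by hypervisor and workload, and the `Resources` type and function names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Resources:
    cores: int
    memory_gb: int


def usable(host: Resources, overhead_pct: int = 10) -> Resources:
    """Capacity left for VMs after a hypothetical flat hypervisor reservation."""
    return Resources(
        cores=host.cores * (100 - overhead_pct) // 100,
        memory_gb=host.memory_gb * (100 - overhead_pct) // 100,
    )


def placement_for(workload: Resources, host: Resources, overhead_pct: int = 10) -> str:
    """Suggest 'virtual' if the workload fits on the host after overhead, else 'dedicated'."""
    cap = usable(host, overhead_pct)
    if workload.cores <= cap.cores and workload.memory_gb <= cap.memory_gb:
        return "virtual"
    return "dedicated"


database = Resources(cores=64, memory_gb=32 * 1024)        # 64 cores, 32 TB of RAM
same_size_host = Resources(cores=64, memory_gb=32 * 1024)
larger_host = Resources(cores=96, memory_gb=48 * 1024)
print(placement_for(database, same_size_host))  # dedicated
print(placement_for(database, larger_host))     # virtual
```

A host no bigger than the workload leaves nothing for the hypervisor, so a dedicated server is the natural fit; a larger host can absorb the overhead and still offer migration and uniform management.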

Another reason to run a physical server is a need for hardware that can't be virtualized. Some components, such as certain PCI adapters and specialized interfaces, can't be presented to a VM. And, of course, some platforms, such as IBM AS/400 or Unix hosts, aren't virtualized at all.