IT systems management

What is systems management?

Systems management is the administration of the information technology (IT) systems in an enterprise network or data center. An effective systems management plan facilitates the delivery of IT as a service and allows an organization's employees to respond quickly to changing business requirements and system activity. In a hybrid IT environment, this involves overseeing the design and day-to-day operations of the data center, as well as the integration of third-party cloud services.

The chief information officer or chief technology officer usually oversees IT systems management. The department responsible for architecting and managing the systems is sometimes known as management information systems, information systems or IT infrastructure and operations. Tasks for these teams include the following:

- gathering system requirements;

- buying equipment and software;

- distributing, configuring and maintaining the equipment;

- providing enhancements and service updates to equipment;

- implementing processes to address problems;

- provisioning services;

- monitoring IT systems performance; and

- determining whether objectives are being met.

The Information Technology Infrastructure Library (ITIL) provides a best practices guide for operations and systems management in the data center and cloud.

Why is systems management important?

Systems management maintains the IT functions that keep a business operational and running efficiently. Most business functions involve some sort of IT system. Each IT system or subsystem must function independently and be integrated with related subsystems to ensure business success.

IT systems must operate at a certain service level for the business to succeed. Systems management ensures that each component is performing as expected so that the business can operate as expected. Good systems management simplifies IT service delivery, allowing employees and workgroups to do their jobs efficiently. It also helps businesses be proactive, spending less time fixing problems and more time planning for the future and making improvements.

Systems management has become even more important as IT systems have grown more complex. As businesses grow and adopt emerging technologies, they must manage IT systems more efficiently. For example, the internet of things (IoT) requires new ways of providing data center infrastructure management (DCIM) as companies rely on distributed sensors to identify issues with heating, cooling and power use.

However, as new technology is added, a company's IT operations requirements and challenges also grow. Customers and businesses alike require high levels of uptime from increasingly complex IT networks. Lapses in IT system performance can lead to serious consequences, such as financial loss or reputation damage among the business's customer base.
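To make "high levels of uptime" concrete, a service-level target can be converted into a downtime budget. The following is a minimal sketch rather than a standard formula from any particular framework; the function name and the 30-day default period are illustrative assumptions.

```python
def allowed_downtime_minutes(availability_pct: float, period_days: float = 30) -> float:
    """Return the downtime budget, in minutes, for an availability target.

    availability_pct is the target as a percentage (for example, 99.9).
    period_days is the length of the measurement window (assumed: 30 days).
    """
    if not 0 <= availability_pct <= 100:
        raise ValueError("availability_pct must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - availability_pct / 100)


if __name__ == "__main__":
    # Compare a few common targets over the same 30-day window.
    for target in (99.0, 99.9, 99.99):
        budget = allowed_downtime_minutes(target)
        print(f"{target}% over 30 days allows {budget:.1f} minutes of downtime")
```

Under these assumptions, a 99.9% target over 30 days leaves roughly 43 minutes of downtime, which shows how quickly the margin for error shrinks as targets rise.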

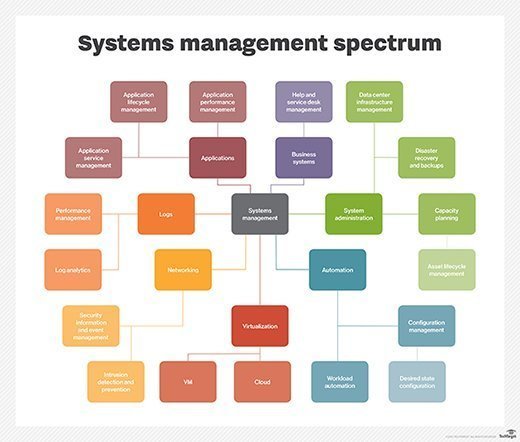

Subsystems of IT systems management

IT infrastructure consists of various subsystems that fulfill specific goals, such as data management, network management or storage. IT subsystems work together as part of the overall IT system.

It is helpful to think in terms of subsystems because IT encompasses a variety of technologies. Specifying the subsystem helps to define the context. Some examples of IT subsystems are the following:

Application lifecycle management (ALM). This is the oversight of all stages in the life of a software application, from planning to retirement. ALM involves documenting and tracking changes to an application, as well as enhancing user experience, application monitoring and troubleshooting.

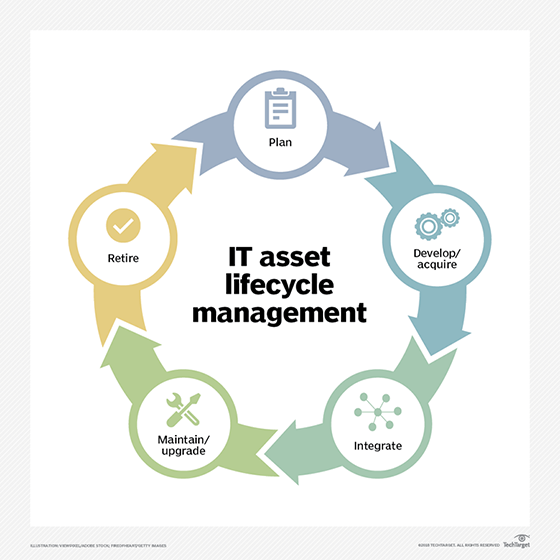

Asset lifecycle management. This involves all stages in hardware and software life, from planning and procurement to decommissioning and retiring. The IT asset lifecycle covers software licensing, from hypervisors to business applications, and analysis of asset cost versus value or revenue generated.

Automation management. This is the use of automatic controls to monitor and carry out IT management functions.

Capacity planning management. This involves estimating the amount of certain resources needed over a future period of time. These resources include data center floor space, cooling, hardware, software, power and connectivity infrastructure and cloud computing.
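As a rough illustration of estimating a resource over a future period, the sketch below fits a straight line to past usage and extrapolates it. It assumes evenly spaced samples and linear growth, which real workloads often do not follow; the function name and the sample figures are hypothetical.

```python
def linear_forecast(history: list[float], periods_ahead: int) -> float:
    """Fit a least-squares line to evenly spaced samples and extrapolate.

    history holds one usage reading per period, oldest first.
    periods_ahead is how many periods past the last reading to project.
    """
    n = len(history)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(history) / n
    # Ordinary least-squares slope and intercept.
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, history)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return intercept + slope * (n - 1 + periods_ahead)


if __name__ == "__main__":
    # Hypothetical monthly storage usage in terabytes.
    usage_tb = [40.0, 44.0, 48.0, 52.0]
    print(f"Projected usage in 6 months: {linear_forecast(usage_tb, 6):.1f} TB")
```

A projection like this is only a starting point. Planners would typically adjust it for known events, such as new projects or hardware retirements.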

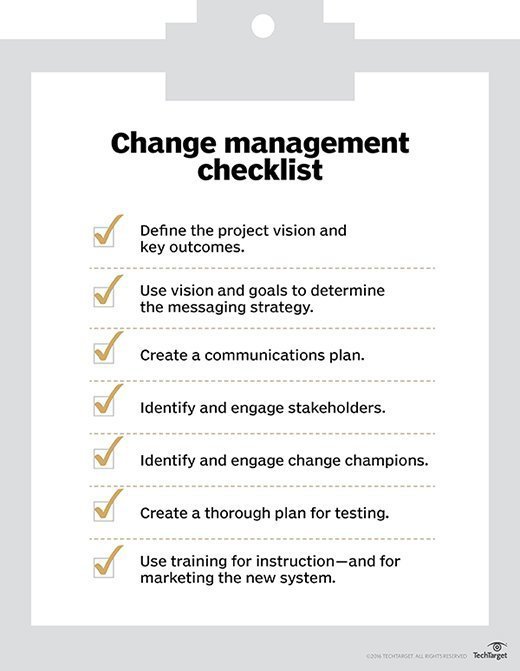

Change management. This is a systematic approach to dealing with change from the perspective of both the organization and the individual. Change management ensures that changes are approved and documented, and it improves a company's ability to adapt quickly.

Cloud lifecycle management. This is the exercise of administrative control over public, private and hybrid clouds.

Compliance management. This ensures that an organization adheres to industry and government regulations as specified in its compliance framework.

Configuration management. This encompasses the processes used to monitor, control and update IT resources and services across an enterprise. Configuration management lets a business know how its tech assets are configured and how they relate to one another.
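One way to picture how assets "relate to one another" is as a dependency map that can be queried before a change. The sketch below is illustrative only: the inventory, the item names and the `impacted_by` function are hypothetical and do not reflect the data model of any specific configuration management product.

```python
from collections import deque

# Hypothetical inventory: each key depends on the items in its list.
DEPENDS_ON = {
    "web-app": ["app-server", "load-balancer"],
    "app-server": ["database", "vm-host-1"],
    "database": ["storage-array", "vm-host-2"],
    "load-balancer": [],
    "vm-host-1": [],
    "vm-host-2": [],
    "storage-array": [],
}


def impacted_by(changed_item: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every item that directly or indirectly depends on changed_item."""
    # Invert the mapping so we can walk from a component to its dependents.
    dependents: dict[str, list[str]] = {}
    for item, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(item)

    # Breadth-first walk up the dependency chain.
    impacted: set[str] = set()
    queue = deque([changed_item])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in impacted:
                impacted.add(parent)
                queue.append(parent)
    return impacted


if __name__ == "__main__":
    print(sorted(impacted_by("storage-array", DEPENDS_ON)))
```

In this hypothetical inventory, a change to the storage array would affect the database, the application server and the web application, which is the kind of relationship configuration management is meant to surface.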

Cost management. This is the planning and controlling of IT expenditures. Cost management enables good budgeting practices and reduces the chance of going over budget.

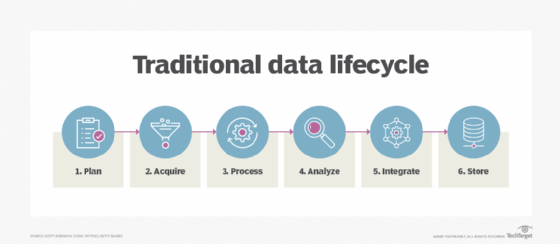

Data management. This determines how data in an organization is created, retrieved, updated and stored. Data management can also include data backup and disaster recovery.

Data center infrastructure management (DCIM). This combines data center systems management with building and energy management. The goal of DCIM is to provide a holistic view of a data center's performance.

Help desk management. This involves reporting and tracking problems that pass through the help desk, as well as managing resolutions to those problems.

IT service management (ITSM). This is the creation and management of a strategic approach to designing, delivering, managing and improving the way IT is used so an organization can meet its business goals. ITSM lets businesses manage IT services throughout their lifecycle.

Network management. This is the administration of both wired and wireless networks. Two popular administrative tools for this subsystem are the FCAPS (fault, configuration, accounting, performance and security) framework for network monitoring and management and ITIL best practices.

Performance management. This is the oversight of an organization's IT infrastructure to ensure that key performance indicators, service levels and budgets comply with the organization's business goals.

Security information and event management (SIEM). This provides a holistic view of an organization's IT security. SIEM combines security information management (SIM) and security event management (SEM) functions into one security management system.

Server management. This is the consolidation and management of servers in a homogeneous or heterogeneous environment. It involves the supervision of patch management, efficiency, power use and performance, as well as predictive maintenance.

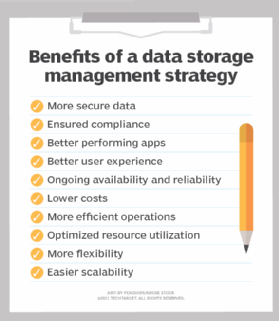

Storage management. This is the establishment and management of procedures, services and standards for managing storage infrastructure and third-party cloud storage services.

Virtualization management. This is the provisioning and management of a virtual infrastructure, including virtual machines, containers and virtual desktop infrastructure. It includes monitoring and correcting performance problems that are unique to virtualization.

Challenges of systems management

IT systems management involves many challenges. Each IT subsystem has its own challenges, but some come with managing any IT system, including the following:

- Cost. Systems management costs money for IT staff, systems management tools and the training it takes to use those tools and learn industry standards.

- Disaster recovery. Systems management is a key part of any disaster recovery plan. When disasters happen, data and systems must be back online as fast as possible.

- Interoperability. One of the biggest challenges is getting all subsystems to work together in a constantly changing IT environment. It can be difficult to integrate systems management software with various hardware and other software. It may also be difficult to integrate newer IT systems with legacy ones.

- Training. IT staff and systems managers need to understand how systems management tools integrate with one another and existing infrastructure. Training staff to do this takes time and resources.

- Software updates. As systems change and become more complex, it is difficult to ensure that software in all subsystems is updated. Software updates can cause compatibility issues, and missed updates can create security vulnerabilities.

- Security. Securing IT systems gets more challenging as infrastructure becomes more complex. Maintaining the security of the systems management software is particularly important. This software touches many IT systems and, if compromised, can undermine network security. One example of systems management software being targeted is the SolarWinds breach of 2020, in which attackers inserted malicious code into updates for the Orion network monitoring platform.
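The software updates challenge in the list above can be illustrated with a simple check that flags hosts running versions below a required minimum. This is a minimal sketch under stated assumptions: versions are purely numeric and dot-separated, and the host names, package names and both functions are hypothetical rather than taken from any real patch management tool.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn a dotted numeric version such as '2.4.10' into a comparable tuple."""
    return tuple(int(part) for part in version.split("."))


def find_outdated(
    installed: dict[str, dict[str, str]], minimum: dict[str, str]
) -> dict[str, list[str]]:
    """Map each host to the packages that are below the required minimum version."""
    outdated: dict[str, list[str]] = {}
    for host, packages in installed.items():
        behind = [
            name
            for name, version in packages.items()
            # Packages without a stated minimum are ignored.
            if name in minimum and parse_version(version) < parse_version(minimum[name])
        ]
        if behind:
            outdated[host] = sorted(behind)
    return outdated


if __name__ == "__main__":
    # Hypothetical inventory and policy.
    installed = {
        "web-01": {"agent": "2.4.2", "webserver": "1.24.0"},
        "db-01": {"agent": "2.4.13", "dbserver": "15.4"},
    }
    minimum = {"agent": "2.4.13", "webserver": "1.24.0"}
    print(find_outdated(installed, minimum))
```

Comparing versions as integer tuples avoids the common mistake of comparing them as strings, where "2.4.2" would incorrectly sort after "2.4.13".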

What to consider when buying systems management software

A variety of systems management software options are available, and no one product will work for every business. Companies should consider several factors when searching for systems management tools and developing an overall systems management strategy. These include the following five elements:

- company size

- available budget

- existing equipment and resources

- quantity of IT devices and resources

- infrastructure complexity

Companies should also decide if they need systems management services and software at all. A small business with fewer than 10 computers and a simple infrastructure may find it makes sense to maintain them manually rather than to buy expensive management software.

Conversely, a large enterprise might opt for a centralized management service to manage its distributed and complex IT infrastructure. Some companies may choose a hybrid environment, which features a mixture of in-house personnel and managed services.

Examples of systems management software

Systems management software often handles several functions. Below are examples of some of these tools:

- Jira Service Management. This ITSM software provides ITIL-certified change and service management features. It also has customizable templates that require little software development.

- Mitratech Compliance Manager. This product provides compliance and risk management features. It also shows a business its compliance obligations.

- Paessler PRTG. This tool monitors network infrastructure, including routers, firewalls and servers, to spot bottlenecks and improve performance.

- Progress WhatsUp Gold. This remote network monitoring and management tool is for Windows, LAMP (Linux, Apache, MySQL, PHP) and Java environments.

- SAP Master Data Governance. This software simplifies enterprise data management and automates functions such as data replication.

- SolarWinds Systems Management Bundle. This product handles many systems monitoring functions, including application and dependency monitoring, server monitoring and log management.

- Syxsense Manage. This cloud-based endpoint management system provides security and patch management for third-party software updates and Windows feature updates.

- Zabbix. This free, open source tool monitors network resources, such as servers and databases.

A large part of the decision to use a systems management service depends on the capabilities of the company's IT team.