LLM build vs. buy: A decision framework for LLM adoption

When deciding whether to build or buy a large language model, businesses must consider costs, customization, governance, risk and readiness to determine the best AI approach.

Large language models (LLMs) are the foundation of many AI systems. They can analyze and write text, create software code, perform reasoning, power chatbots and search engines, and assist in customer support tasks.

LLMs recognize patterns and act as an interface between human knowledge and machine data. They capture context, meaning and nuance in language and can detect sentiment. LLMs can also use data to formulate detailed plans, create high-quality content and synthesize entirely new data sets.

An LLM can be bought, which typically means licensed or accessed for a fee. It can also be built, either by customizing an open source LLM or by developing one entirely from scratch. The LLM build-vs.-buy decision can get complicated for AI developers and business leaders. This tip examines what to consider.

LLMs are just another software component

Software is frequently built from a mix of bought and built components. It's common for developers to license software libraries while building other software modules and subsystems, integrating everything into the completed application. LLMs work the same way. From an architectural perspective, an LLM is one component or subsystem involved in a complete AI system.

Today, there are many LLMs available. The table below is a list of some major LLMs.

Why so many LLMs? They proliferate for several reasons. Competition among major LLM providers is intense, and none of them is willing to leave this revenue potential untapped. Models also vary in performance, capabilities and purpose: some are large, general and comprehensive; some are small and highly efficient or narrow in scope; and others are specialized for verticals such as healthcare and legal. Like other software modules, LLMs integrate with applications through web interfaces, APIs and local or direct integration.

Using commercially available LLMs can be an effective approach for some AI projects. But they don't fit every AI project. Businesses choose to develop AI because of the tangible benefits AI technology can provide -- cost savings, competitive advantages and innovation. This often demands an LLM that meets a strong but specific mix of performance, accuracy, security, compliance and cost attributes. For some business-critical projects, there's no such thing as good enough, and organizations might opt to build their own LLMs.

Industry opinions remain mixed. A 2025 Omdia report, "Navigating Build-Vs.-Buy Dynamics for Enterprise-Ready AI," found that 95% of the 376 technical and business stakeholders surveyed agreed that building AI offers greater customization and control, while 91% acknowledged the speed and benefits of prebuilt AI platforms. Because most respondents see advantages on both sides, the decision comes down to weighing the tradeoffs carefully.

4 areas to consider for the LLM build-vs.-buy decision

When considering whether to build or buy an LLM, assess these four key areas.

1. LLM total cost of ownership

Cost profoundly affects an AI project's ROI. Now that AI is a business necessity rather than an experimental boondoggle, cost, and therefore ROI, has emerged as the most important consideration in an LLM build-vs.-buy decision. Total cost of ownership (TCO) is also the most complex to assess because of the many cost factors involved over the LLM's lifecycle.

LLM builders face a range of upfront and long-term costs, including the following:

- LLM software development. This isn't a mobile data access app. LLMs are complex subsystems that demand ample expertise from the development team, along with vast amounts of data to train and test the subsystem. It can take from six months to two years to field an enterprise-class LLM. Customizing an open source LLM can shorten development time, but the need for expertise remains. Additional work from machine learning operations (MLOps) teams, prompt engineers and governance teams adds ongoing costs to an LLM build.

- LLM infrastructure. LLMs demand significant computational power, including specialized processors such as GPUs. A business that builds and operates its own LLM must provide large, scalable, secure and robust IT infrastructure for deployment, which involves major capital expenditures and energy costs. Most organizations deploy LLMs on public cloud infrastructure instead, but this incurs ongoing operational costs that can range from $1,000 to more than $50,000 per month throughout the LLM's lifecycle, depending on the LLM's sophistication and use. FinOps teams can help estimate LLM cloud deployment costs.

- LLM monitoring and maintenance. LLM builds don't stop with deployment. Continuous monitoring is needed to check outcomes and to spot bias, misuse, data drift and performance degradation. This involves the cost of monitoring tools and MLOps staff time to evaluate and remediate issues. Even well-performing models must be fine-tuned and retrained periodically, which adds recurring costs.

- Data preparation and management. Vast amounts of quality data are needed to train an LLM. This data must be procured, stored and prepared for use. Experienced data science teams work with infrastructure teams to store and secure the data. Data obtained from outside sources can be costly, especially if it's industry-specific, niche or limited.
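Monitoring for data drift and performance degradation can start with comparing the distribution of a logged metric against a baseline captured at launch. The sketch below uses the population stability index (PSI), a general-purpose drift statistic swapped in here for illustration rather than an LLM-specific method. It assumes the team already logs some numeric signal per request, such as response length or an evaluation score; the metric, the 10-bin layout and the 0.2 alert threshold are all assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Population stability index (PSI) between a baseline and a current sample.

    Bin edges are derived from the baseline's range; current values outside
    that range are clipped into the first or last bin.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to unit width if the baseline is constant

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p_base, p_curr = proportions(baseline), proportions(current)
    # Each term is non-negative, so PSI is 0 for identical distributions
    return sum((c - b) * math.log(c / b) for b, c in zip(p_base, p_curr))


def drift_alert(psi: float, threshold: float = 0.2) -> bool:
    """Flag drift when PSI exceeds an assumed threshold (a rule of thumb, not a standard)."""
    return psi > threshold


# Hypothetical example: normalized response lengths at launch vs. this week
baseline = [i / 100 for i in range(100)]
this_week = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline, this_week)
print(f"PSI={psi:.2f}, drift={drift_alert(psi)}")
```

An alert like this only says that something changed; MLOps staff still have to evaluate whether the change reflects bias, misuse or genuine degradation and decide whether retraining is warranted.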

One overlooked advantage of building and operating an LLM is the potential for monetization. Third parties can pay for access to the LLM, generating a revenue stream that improves the LLM's ROI and helps offset the costs of ownership. By contrast, AI projects with less exacting requirements often use an existing commercial LLM. This can accelerate development and lower upfront costs, though recurring costs can still be significant.
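To see how the build-side cost factors and a monetization offset combine over an LLM's lifecycle, here is a minimal TCO sketch. The cost categories mirror the list above, but the `BuildCosts` fields and every dollar figure in the example are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class BuildCosts:
    """Hypothetical cost inputs for building and operating an LLM (all in dollars)."""
    development: float              # one-time: team time and tooling to field the model
    data_acquisition: float         # one-time: procuring and preparing training data
    infra_per_month: float          # recurring: cloud GPUs, storage, energy
    ops_per_month: float            # recurring: MLOps, monitoring, governance staff
    retraining_per_year: float      # recurring: periodic fine-tuning and retraining
    revenue_per_month: float = 0.0  # optional: fees paid by third parties for access


def build_tco(costs: BuildCosts, months: int) -> float:
    """Net cost of owning the LLM over `months` months, after any access revenue."""
    upfront = costs.development + costs.data_acquisition
    monthly_net = costs.infra_per_month + costs.ops_per_month - costs.revenue_per_month
    retraining = costs.retraining_per_year * months / 12
    return upfront + monthly_net * months + retraining


costs = BuildCosts(
    development=1_500_000,
    data_acquisition=300_000,
    infra_per_month=50_000,
    ops_per_month=40_000,
    retraining_per_year=200_000,
)
print(build_tco(costs, months=36))  # 5640000.0
```

With these made-up inputs, three years of ownership comes to $5.64 million; adding $30,000 per month in third-party access fees would bring the net figure down to $4.56 million.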

LLM buyers face their own long-term costs, including the following:

- LLM inference costs. Inference is the use of an LLM. Each time a business accesses a commercial LLM to generate text, answer a question or perform other tasks, the business incurs a use-based expense; this is an inference or pay-per-use cost. Inference costs are typically quoted per million tokens, where tokens are units of text or other elements that an LLM processes. Pricing reflects the provider's overhead and margin, which can include infrastructure costs, power demands and other factors. Inference costs range from about $0.10 per million tokens for small LLMs to $15 for top-tier LLMs. Major AI projects with heavy use can accumulate high inference costs over time.

- LLM provider disruptions. A third-party LLM provider is a business partner that supplies a critical piece of the organization's AI platform. An LLM outage becomes an AI outage, interrupting business revenue and vital AI tasks, such as customer service, which can harm the business. Monitor LLM uptime and consider the costs of potential disruptions as well as potential governance and compliance consequences.

- LLM provider lock-in. The AI market is evolving rapidly, and commercial LLM availability is likely to expand and remain competitive in the coming years. But this isn't guaranteed. Building an AI platform around a specific LLM creates vendor lock-in, which in turn creates a business vulnerability. If an LLM vendor is slow to tune its model, experiences gaps in availability or delivers poor outcomes, a customer's AI system could be negatively affected. This could force the business into a costly, time-consuming shift to a potentially less desirable LLM.
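The per-million-token pricing and disruption costs described above reduce to simple arithmetic. This sketch assumes a single blended price per million tokens (some providers price input and output tokens separately) and, for disruptions, assumes the provider delivers exactly its stated uptime. The workload, uptime figure and cost per minute of outage are hypothetical.

```python
def monthly_inference_cost(
    requests_per_day: int,
    tokens_per_request: int,
    price_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Pay-per-use cost for one month, using a single blended per-token price."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens


def expected_downtime_cost(uptime_pct: float, cost_per_minute: float, days: int = 30) -> float:
    """Business cost if the provider delivers exactly its stated uptime, no better."""
    minutes = days * 24 * 60
    downtime_minutes = (1 - uptime_pct / 100) * minutes
    return downtime_minutes * cost_per_minute


# Hypothetical workload: 10,000 requests a day at roughly 1,500 tokens each
for label, price in [("small model", 0.10), ("top-tier model", 15.00)]:
    print(f"{label}: ${monthly_inference_cost(10_000, 1_500, price):,.2f} per month")

# Hypothetical: 99.9% uptime and $500 of lost business per minute of outage
print(f"disruption: ${expected_downtime_cost(99.9, 500):,.0f} per month")
```

The same 450 million tokens a month cost about $45 on a small model and $6,750 on a top-tier one, which is why model choice and usage volume dominate a buyer's recurring costs.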

2. LLM control and customization

The question of building or buying an LLM frequently leads to discussions of control, customization and intellectual property (IP). Businesses often consider building an LLM because it could provide a competitive advantage and differentiator.

When an enterprise leases or buys a commercial LLM, it's using the same LLM that many other AI systems use. Is that commercial LLM enough of a differentiator if it's available to anyone? Building an LLM, or customizing and training an open source LLM, lets the enterprise AI project deliver more specialization and achieve better competitive differentiation. Here are some LLM build-vs.-buy aspects to consider:

- Data quality and adequacy. A commercial LLM is trained by its provider, so every business or user that accesses it gets the same training and the same accuracy. This is fine for noncritical AI platforms, but mission-critical AI systems expected to drive business results might benefit from a built or customized LLM trained and tuned for competitive advantage.

- Data residency and sovereignty. The world is increasingly fragmented as nations recognize the strategic value of data and the power of AI. A bought LLM might require sending data across geopolitical borders in ways that violate data sovereignty rules. A built LLM, however, can be deployed locally within those borders and adapted as data sovereignty regulations evolve.

- Intellectual property. There are numerous IP concerns around LLMs, including training data integrity and provenance; ownership of production data, such as user queries or data from IoT devices; and the model itself, including its algorithms and parameters. IP issues with commercial LLMs must be reviewed carefully with a legal team. Can the business own or monetize its AI if that AI relies on a third-party LLM whose IP rights are unclear or incomplete? A custom-built LLM gives the business more control over the IP in the model's design and data.

- Contractual agreements. Built LLMs require no vendor contract because the enterprise creates, trains, deploys and operates them. A commercially bought LLM, however, requires attention to various contractual issues, such as the model's accuracy, uptime guarantees and liability for bias, hallucinations and IP misuse. The contract should also limit how the provider can use the organization's data. Without that limit, the provider might use client data to train and refine its LLM, effectively improving its product at the users' expense.

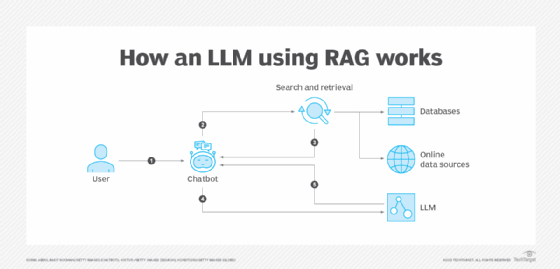

- Tuning and refinement. Even a well-refined LLM might need tuning to meet an AI system's specific needs. A commercial LLM can be paired with retrieval-augmented generation (RAG), which fetches relevant external data in real time; it's a sound way to reduce hallucinations and access up-to-date data. A custom-built LLM, by contrast, can use detailed fine-tuning techniques that adjust model weights so the model learns specific tasks, formats and other behaviors.
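To make the RAG side of this comparison concrete, the sketch below retrieves the passages most relevant to a query and prepends them to the prompt sent to the model. It's deliberately simplified: a word-count cosine similarity stands in for the embedding model and vector database a production RAG pipeline would typically use, and the prompt wording and sample documents are hypothetical. The model call itself is omitted because it depends on the provider.

```python
import math
import re
from collections import Counter


def _bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = _bag_of_words(query)
    return sorted(documents, key=lambda d: _cosine(q, _bag_of_words(d)), reverse=True)[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Prepend retrieved context so the model answers from current, in-house data."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below. If the context doesn't contain the answer, say so.\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base; in practice this would be a document store
docs = [
    "Refunds are processed within 14 days of the return being received.",
    "The support office is closed on public holidays.",
    "Premium customers can reach support by phone around the clock.",
]
print(build_rag_prompt("How long do refunds take?", docs, k=1))
# The resulting prompt would then be sent to whichever LLM the system uses.
```

Because RAG only changes what goes into the prompt, it works with a bought model whose weights the business can't touch; fine-tuning, in contrast, changes the model itself.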

3. LLM governance and risk management

AI is rapidly gaining traction as a valuable business technology, and as it gains ground, governance and risk need attention. LLMs are the nexus of many interactive AI systems, so it isn't surprising that they face increasing regulation, governance demands and risk.

LLM build-vs.-buy governance and risk considerations include the following:

- Auditability. AI systems require independent and transparent inspection and validation of their design, algorithms, data and decision-making throughout their lifecycle. This includes thorough documentation of model development, training data sources and performance. It also requires full traceability, from the initial request through data access, processing and decision-making, to reveal how an AI reached its decision. Traceability supports independent review, letting third-party reviewers gauge the AI system against prevailing technical, ethical, regulatory and legal requirements. Adoption of traceability is growing and is highest in regulated industries, which are most likely to face regulatory scrutiny. An LLM can be built for auditability, but a commercial LLM might not provide the level of auditability needed to meet regulatory requirements.

- Explainability. Explainability often complements auditability by detailing why the AI arrived at its decision. Where auditability focuses on data and reproducibility for compliance and legal defense, explainability focuses on model logic and reasoning to establish trust and transparency. As with auditability, a built LLM can be designed for explainability, but a bought one might not offer the same level. Explainability requirements call for detailed discussions with the LLM provider and clear language in the LLM contract.

- Liability. Incorrect or harmful AI outputs carry risks of legal liability. The EU's proposed AI Liability Directive was designed to make it easier for victims to claim damages, although the European Commission moved to withdraw the proposal in 2025. The EU's revised Product Liability Directive treats software, including AI, as a product, making manufacturers liable for defects even after the system is in operation. Liability waivers and user agreement terms have limited usefulness. An organization that builds its own LLM carries the full liability burden, but using a commercial LLM operated by an outside provider doesn't relieve the enterprise of liability either. Business leaders must discuss liability and mitigation strategies with their legal team because the burden and risk can vary with location and local laws.

- Compliance. AI regulatory governance is expanding quickly, and an AI system must meet the regulatory requirements in every jurisdiction where it's accessed. Regulations can take the form of legislation, such as the EU's AI Act, or industry-specific rules and guidelines. Whether built or bought, LLMs are subject to the same compliance requirements, so discuss their practical implications with the organization's regulatory experts. An enterprise might have to build its own LLM if outside LLM providers can't guarantee adherence to prevailing compliance requirements.
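Traceability from request to decision ultimately means recording what went into each answer. The sketch below shows one possible design, not a regulatory requirement: each record captures a request ID, timestamp, model version, the data sources accessed and the output, and stores a SHA-256 hash of the previous record so that later alterations are detectable. The field names and model identifiers are hypothetical, and a real system would write to durable, access-controlled storage rather than an in-memory list.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON form of the record (excluding its own hash)."""
    body = {k: v for k, v in record.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only, hash-chained trace of LLM requests, kept in memory for illustration."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def record(self, query: str, model_id: str, sources: list[str], output: str) -> dict:
        entry = {
            "request_id": str(uuid.uuid4()),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_id": model_id,  # exact model/version that produced the output
            "sources": sources,    # data accessed, e.g., retrieved document IDs
            "query": query,
            "output": output,
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS_HASH,
        }
        entry["hash"] = _digest(entry)
        self.records.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; returns False if any record was altered or the links don't line up."""
        prev = GENESIS_HASH
        for rec in self.records:
            if rec["prev_hash"] != prev or _digest(rec) != rec["hash"]:
                return False
            prev = rec["hash"]
        return True


trail = AuditTrail()
trail.record("What is the refund window?", "support-model-v3", ["policy-017"], "14 days.")
print(trail.verify())  # True
```

A trace like this is easier to produce for a built LLM, where the team controls every step; with a bought LLM, fields such as the exact model version depend on what the provider exposes.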

4. LLM organizational readiness

Intent doesn't ensure success. An organization's own development capabilities, previous AI expertise and available talent pool can play decisive roles in an LLM build-vs.-buy decision. Even when a build mandate is clear, an enterprise lacking the proven skills, experience, resources and bandwidth needed to undertake a major LLM project can face costly delays and project failure.

Some considerations of LLM organizational readiness include the following:

- Human talent. An LLM isn't just another web portal or enterprise app. Building, deploying and maintaining an LLM demands a skilled and proven multidisciplinary team with deep technical expertise in AI, machine learning, data management, data curation, LLM software engineering and development, and AI governance. Team members often need substantial industry and domain expertise as well. Weak, incomplete or overtaxed teams can spell disaster for an LLM build.

- Workflows and processes. Effort without process is simply chaos. Even when human talent is available, the organization must have mature tools and established processes in place for data collection, preparation and quality management; software development, testing and deployment; and LLM training, validation, monitoring, evaluation, updating, refinement and oversight.

- Dependencies and vulnerabilities. There are always dependencies in a complex software project. Tools may require licensing. Data must be acquired, examined and curated. Careful attention must be given to LLM and AI governance, risk, compliance and liability. Even the IT infrastructure -- such as the internet and public cloud -- can be points of failure for a critical LLM build. Ensuring that each dependency and vulnerability is understood and addressed takes collaboration and experience.

- Organizational culture. The organization itself needs leadership and execution maturity to recognize the risks inherent in large, complex projects such as an LLM build, and that maturity also gives it the confidence to accept those risks. A culture unprepared to shoulder the risks or tolerate some experimentation along the way might find better outcomes with a commercially bought LLM.

Future-proofing the LLM decision

The decision to build or buy an LLM might seem straightforward: Buy when time-to-market is vital and the AI is noncritical; build when the AI is central to the business or offers strategic differentiation. But any chief AI officer can tell you that the choice is still fraught with questions and anxiety.
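That rule of thumb can be written down as a first-pass screen. The function below is a hypothetical encoding of the two rules above, with made-up names; anything that falls outside them, including conflicting signals such as strategic AI needed under heavy time pressure, is flagged for the fuller four-area review instead of being decided automatically.

```python
from enum import Enum


class Approach(Enum):
    BUY = "buy"
    BUILD = "build"
    REVIEW = "review"  # signals conflict or fall outside the rule of thumb


def initial_lean(time_to_market_vital: bool, ai_is_core: bool, needs_differentiation: bool) -> Approach:
    """First-pass screen based only on the buy/build rule of thumb."""
    strategic = ai_is_core or needs_differentiation
    if time_to_market_vital and not strategic:
        return Approach.BUY     # speed matters and the AI is noncritical
    if strategic and not time_to_market_vital:
        return Approach.BUILD   # AI is central or a differentiator
    return Approach.REVIEW      # e.g., strategic AI needed fast: assess all four areas


print(initial_lean(time_to_market_vital=True, ai_is_core=True, needs_differentiation=False))
```

Even a BUILD lean is only a starting point: cost, governance and organizational readiness can still overturn it, which is why the screen deliberately stops short of a final answer.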

Time is an important factor. The 2025 Omdia survey found that 64% of respondents realized value from their AI initiatives within six months, while 21% reported waiting a year or more. Longer waits can breed skepticism about AI and limit future investment. However, several steps can help future-proof the LLM decision:

- Prevent LLM lock-in. Design the AI architecture so the LLM is decoupled from the rest of the application and can be swapped for another LLM as technology changes, without rewriting the overall application. This approach, called model agnosticism, works whether building or buying.

- Mitigate LLM tool lock-in. LLM development and application integration rely on one or more critical tools or frameworks. Tools tailored to specific LLMs can cause problems if the LLM must be swapped, so it's best to use tools and frameworks that support model swapping, such as Haystack, LangChain and LlamaIndex.

- Consider open source LLMs. Open source LLMs are a middle ground for some AI projects, saving time and investment by adapting an existing LLM without relying on an outside provider. A decoupled LLM architecture makes it easier to replace the LLM later if needed.

- Assess hybrid and multimodel approaches. Some organizations might take a more nuanced approach, buying some elements of the AI stack and building others. For example, a project might benefit from an open-weight model with in-house fine-tuning or a third-party LLM with an in-house RAG pipeline. Still other projects might benefit from multiple LLMs, routing each query to the most cost-effective or best-performing model depending on the nature of the query.

- Start smaller by buying the LLM. An organization with limited expertise can start by buying an LLM to speed time to market and gain experience, then work toward a customized LLM as internal capabilities grow. With this approach, it's important to use a decoupled LLM architecture so the LLM can be replaced quickly later.

- Ensure LLM data availability. A bought LLM might need no training data, but switching to an open source or custom-built LLM demands a lot of it. Ensure that any plan to use a built LLM includes access to sufficient data, with adequate IP rights, to train, tune and deploy the LLM when the time arrives. No data access means no LLM.

- Watch the winds of compliance. Regulatory standards and legal frameworks are changing fast, in ways that affect the LLM build-vs.-buy calculus. The choice must meet current requirements and support a changing regulatory and legal landscape. Have a fallback plan if the regulatory environment changes.
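Several of these steps (decoupling the LLM, swapping models and routing queries across multiple LLMs) rest on the same architectural idea: the application talks to a small interface rather than to a specific model. The Python sketch below illustrates that idea under stated assumptions. `TextModel`, `EchoModel` and `Router` are hypothetical names, `EchoModel` stands in for a real commercial or open source backend, and prompt word count is a crude placeholder for judging query complexity.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only interface the application depends on."""

    name: str
    cost_per_million_tokens: float

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for a commercial API client or a self-hosted open source model."""

    def __init__(self, name: str, cost_per_million_tokens: float) -> None:
        self.name = name
        self.cost_per_million_tokens = cost_per_million_tokens

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Router:
    """Routes simple queries to the cheapest model and complex ones to a designated strong model."""

    def __init__(self, models: list[TextModel], strong: str, max_simple_words: int = 30) -> None:
        self.models = {m.name: m for m in models}
        if strong not in self.models:
            raise ValueError(f"unknown model: {strong}")
        self.strong = strong
        self.max_simple_words = max_simple_words  # crude, assumed proxy for query complexity

    def pick(self, prompt: str) -> TextModel:
        if len(prompt.split()) <= self.max_simple_words:
            return min(self.models.values(), key=lambda m: m.cost_per_million_tokens)
        return self.models[self.strong]

    def complete(self, prompt: str) -> str:
        return self.pick(prompt).complete(prompt)


router = Router(
    [EchoModel("small-model", 0.10), EchoModel("large-model", 15.00)],
    strong="large-model",
)
print(router.complete("What are your support hours?"))  # [small-model] What are your support hours?
```

Because application code only calls `Router.complete`, replacing a bought model with a fine-tuned open source one means adding another class that implements `complete` and updating the model list, not rewriting the application.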

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 30 years of technical writing experience in the PC and technology industry.