What is machine learning operations (MLOps)?

Machine learning operations (MLOps) is the development and use of machine learning (ML) models by development and operations (DevOps) teams. MLOps adds discipline to the development and deployment of ML models, making the development process more reliable and productive.

MLOps encompasses a set of processes, rather than a single framework, that machine learning developers use to build, deploy and continuously monitor and train their models. It's at the heart of machine learning engineering, blending artificial intelligence (AI) and machine learning techniques with DevOps and data engineering practices.

There are many steps needed before an ML model is ready for production, and several players are involved. The MLOps development philosophy is relevant to IT pros who develop ML models, deploy the models and manage the infrastructure that supports them. Producing iterations of ML models requires collaboration and skill sets from multiple IT groups, such as data science teams, software engineers and ML engineers.

Development of deep learning and other ML models is considered experimental, and failures are part of the process in real-world use cases. The discipline is evolving, and it's understood that, sometimes, even a successful ML model might not function the same way from one day to the next.

How MLOps works

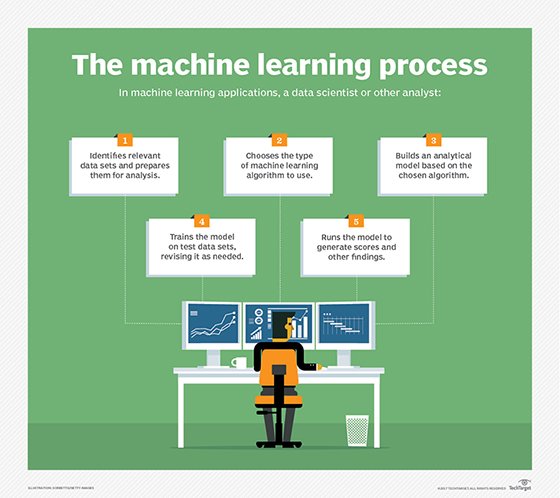

MLOps implements the machine learning lifecycle, which is the set of stages an ML model must go through to become production-ready. The ML lifecycle is made up of the following four cycles:

- Data cycle. The data cycle entails gathering and preparing data for training. First, raw data is culled from appropriate sources, and then techniques such as feature engineering are used to transform, manipulate and organize raw data into labeled data that's ready for model training.

- Model cycle. In this cycle, the model is trained on the prepared data. Once a model is trained, it's important to track its later versions as they move through the rest of the lifecycle. Tools such as the open source platform MLflow can simplify this tracking.

- Development cycle. Here, the model is further developed, tested and validated so that it can be deployed to a production environment. Deployment can be automated using continuous integration/continuous delivery (CI/CD) pipelines and configurations that reduce the number of manual tasks.

- Operations cycle. The operations cycle is an end-to-end monitoring process that ensures the production model continues working and is retrained to improve performance over time. MLOps can automatically retrain an ML model either on a set schedule or when triggered by an event, such as a model performance metric falling below a certain threshold.
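The operations cycle's two retraining triggers, a fixed schedule and a metric falling below a threshold, can be sketched as a small policy check. This is a minimal Python sketch, not the API of any MLOps product: `RetrainPolicy` and `retrain_reason` are hypothetical names, and the sketch assumes the live metric (for example, accuracy on recently labeled production data) is computed elsewhere.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class RetrainPolicy:
    max_age: timedelta   # scheduled trigger: retrain at least this often
    min_metric: float    # event trigger: retrain if the live metric falls below this

def retrain_reason(policy: RetrainPolicy, last_trained: datetime,
                   now: datetime, live_metric: float) -> Optional[str]:
    """Return why the model should be retrained, or None if no trigger fired."""
    # Event-based trigger is checked first because it signals degraded predictions.
    if live_metric < policy.min_metric:
        return "metric_below_threshold"
    # Schedule-based trigger: the current model is older than the allowed age.
    if now - last_trained >= policy.max_age:
        return "schedule"
    return None

# Usage with hypothetical values: weekly retraining, minimum accuracy of 0.80.
policy = RetrainPolicy(max_age=timedelta(days=7), min_metric=0.80)
print(retrain_reason(policy, datetime(2024, 1, 1), datetime(2024, 1, 3), 0.75))
```

A scheduler or monitoring job would call a check like this periodically and, when it returns a reason, start the training pipeline.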

Main components of MLOps

Various components make up the MLOps model building process. They're usually implemented sequentially, which helps make the process reproducible. The four cycles of the ML lifecycle provide an overview of the process, but they can be broken down into the following more detailed components:

- Data collection and analysis. Valuable data must be identified and collected.

- Data preparation. Developers clean and prepare the data to ensure consistent formatting and readability before it's introduced to the model.

- Model development and training. The prepared data is used to train the ML model, which is tested to ensure it produces the insights, predictions and other outputs needed.

- Model deployment. The model is put into production, making it accessible to users after it's developed and tested.

- Model monitoring. The model's performance is monitored to ensure it runs smoothly. Any debugging that's needed happens at this stage.

- Model retraining. Models require new data to continue producing accurate and up-to-date insights and predictions. Retraining is an ongoing process.

- CI/CD. This component applies throughout the process, from development and testing to deployment and retraining. It automates and streamlines these processes.
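To show how the first few components run in sequence, here is a toy pipeline in Python. It's a sketch under stated assumptions: the stage functions, the record format and the "cutoff" model are hypothetical stand-ins, and evaluation reuses the training rows for brevity, whereas a real pipeline would score a held-out test set. Hashing the prepared data is one simple way to tie each run to its exact inputs, which supports reproducibility.

```python
import hashlib
import json
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Stage = Callable[[Context], Context]

def collect(ctx: Context) -> Context:
    # Data collection stand-in: hypothetical raw records with messy formatting.
    ctx["raw"] = [
        {"x": " 1.0", "label": "0"},
        {"x": "2.5", "label": "1"},
        {"x": None, "label": "1"},  # incomplete record
        {"x": "3.0 ", "label": "1"},
    ]
    return ctx

def prepare(ctx: Context) -> Context:
    # Data preparation: drop incomplete rows, strip whitespace, convert types.
    ctx["data"] = [
        (float(r["x"].strip()), int(r["label"]))
        for r in ctx["raw"]
        if r["x"] is not None
    ]
    # Fingerprint the prepared data so each run can be traced to its exact inputs.
    ctx["data_sha256"] = hashlib.sha256(json.dumps(ctx["data"]).encode()).hexdigest()
    return ctx

def train(ctx: Context) -> Context:
    # Toy model: a cutoff halfway between the mean x of each class.
    neg = [x for x, y in ctx["data"] if y == 0]
    pos = [x for x, y in ctx["data"] if y == 1]
    ctx["cutoff"] = (sum(neg) / len(neg) + sum(pos) / len(pos)) / 2
    return ctx

def evaluate(ctx: Context) -> Context:
    # Testing: fraction of rows the cutoff rule classifies correctly.
    # (Reuses the training rows for brevity; use a held-out set in practice.)
    data = ctx["data"]
    correct = sum(int(x >= ctx["cutoff"]) == y for x, y in data)
    ctx["accuracy"] = correct / len(data)
    return ctx

def run_pipeline(stages: List[Stage]) -> Context:
    # Run the stages in a fixed order, recording which ones executed.
    ctx: Context = {"log": []}
    for stage in stages:
        ctx = stage(ctx)
        ctx["log"].append(stage.__name__)
    return ctx

result = run_pipeline([collect, prepare, train, evaluate])
print(result["cutoff"], result["accuracy"])  # 1.875 1.0
```

Deployment, monitoring and retraining stages would be appended to the same list, and a CI/CD system could then run the whole list on every change.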

Why is MLOps necessary?

Machine learning models aren't built once and forgotten; they require continuous training so that they improve over time. That's where MLOps comes in. It provides the ongoing training and constant monitoring needed to ensure ML models operate successfully.

MLOps documents reliable processes and governance strategies to prevent problems, reduce development time and create better models. MLOps uses repeatable processes in the same way businesses use workflows for organization and consistency. In addition, MLOps automation ensures time isn't wasted on tasks that are repeated each time new models are built.

What are the benefits of MLOps?

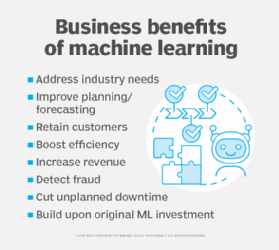

MLOps provides a range of benefits, such as the following:

- Speed and efficiency. MLOps automates many of the repetitive tasks in ML development and within the ML pipeline. For example, automating initial data preparation procedures reduces development time and cuts down on human error.

- Scalability. ML models often must be scaled to handle increased workloads, larger data sets and new features. To provide scalability, MLOps uses technology such as containerized software and data pipelines that can handle large amounts of data efficiently.

- Reliability. MLOps model testing and validation catch problems during the development phase, increasing reliability early on. Operational processes also ensure models comply with the policies an organization has in place, and continuous monitoring reduces risks such as data drift, in which a model's accuracy deteriorates over time because the data it encounters in production no longer resembles the data it was trained on.
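Data drift is usually detected by comparing the distribution of a feature in production against its distribution in the training data. One widely used measure is the population stability index, `PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i)`, where `e_i` and `a_i` are the fractions of training and production values that fall in bin `i`. The Python sketch below assumes equal-width bins over the combined range of both samples; many implementations instead bin by quantiles of the training data. The 0.2 alert threshold is a commonly quoted rule of thumb, not a standard, and the function names are hypothetical.

```python
import math
from typing import List, Sequence

def _bin_proportions(values: Sequence[float], lo: float, width: float, bins: int) -> List[float]:
    counts = [0] * bins
    for v in values:
        # Clamp so the maximum value lands in the last bin instead of overflowing.
        idx = min(int((v - lo) / width), bins - 1)
        counts[idx] += 1
    # A small floor keeps empty bins from producing log(0) or division by zero.
    return [max(c / len(values), 1e-6) for c in counts]

def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population stability index between a training sample and a production sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against every value being identical
    e = _bin_proportions(expected, lo, width, bins)
    a = _bin_proportions(actual, lo, width, bins)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def has_drifted(expected: Sequence[float], actual: Sequence[float],
                threshold: float = 0.2) -> bool:
    # 0.2 is a rule-of-thumb cutoff; tune it per feature and use case.
    return psi(expected, actual) > threshold
```

A monitoring job might run `has_drifted` per feature on a schedule and raise an alert, or fire a retraining trigger, when it returns `True`.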

MLOps challenges

MLOps might be more efficient than traditional approaches, but it's not without its challenges. They include the following:

- Staffing. The same data scientists responsible for developing ML algorithms might not be the most effective at deploying them. They also might not be best equipped to explain to software developers how to use the algorithms. Some of the best MLOps teams embrace the idea of cognitive diversity -- the inclusion of people who have different approaches to problem-solving and offer unique perspectives because they think differently.

- High costs. MLOps can be costly, given the need to build an infrastructure that uses many new tools. The resources required for data analysis, as well as model and employee training, are also expensive. This is especially true of large-scale ML projects with lots of dependencies and feedback loops. It's important for an organization interested in these projects to assess whether MLOps is the best approach.

- Imperfect processes. While MLOps processes are designed to reduce errors, some mistakes still occur and require human intervention.

- Cyberattacks. Malicious actors are a threat given the large amount of data that MLOps infrastructures store and process. Cybersecurity is required to minimize the risk of data breaches and leaks.

Key use cases for MLOps

On the surface, MLOps appears to be exclusive to the tech industry; however, other industries find value in using MLOps practices to enhance their operations:

- Finance. ML is valuable for analyzing millions of data points quickly. Financial services companies, for example, use ML to analyze large numbers of transactions and quickly detect fraud.

- Retail and e-commerce. Retail relies on MLOps to produce models that analyze customer purchase data and make predictions on future sales.

- Healthcare. MLOps-enabled software is used to analyze data sets of patient diseases to help institutions make better-informed diagnoses.

- Travel. The travel industry analyzes customers' travel data to better target them with advertisements for their next trips.

- Logistics. MLOps-enabled software is used to analyze performance data on different modes of transportation to predict failures and risks. This practice is known as predictive maintenance.

- Manufacturing. MLOps tools are used to monitor manufacturing equipment and provide predictive maintenance capabilities.

- Oil and gas. In the oil and gas industry, MLOps monitors equipment and analyzes geological data to identify suitable areas for drilling and extraction of oil and natural gas.

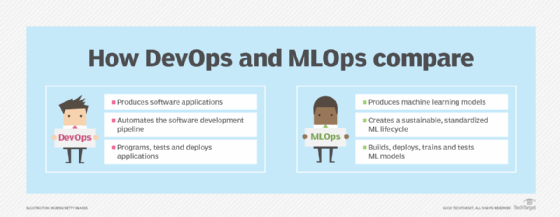

MLOps vs. DevOps

The most obvious similarity between DevOps and MLOps is the emphasis on streamlining design and production processes. However, the clearest difference between the two is that DevOps produces the most up-to-date versions of software applications for customers as fast as possible, a key goal of software vendors. MLOps is instead focused on surmounting the challenges that are unique to machine learning to produce, optimize and sustain a model.

DevOps typically involves development teams that program, test and deploy software apps into production. MLOps aims to do the same with ML systems and models but adds a handful of phases. These include extracting raw data for analysis, preparing data, training models, evaluating model performance, and continuously monitoring and retraining models.

MLOps vs. ML engineering

The term ML engineering is sometimes used interchangeably with MLOps; however, there are key differences. MLOps encompasses all processes in the lifecycle of an ML model, including predevelopment data aggregation, data preparation, and post-deployment upkeep and retraining. Meanwhile, ML engineering is focused on the stages of developing and testing a model for production, similar to what software engineers do.

For example, an MLOps team designates ML engineers to handle the training, deployment and testing stages of the MLOps lifecycle. These professionals have many of the same skills as software developers. Others on the operations team might have data analytics skills and perform predevelopment tasks related to data. Once the ML engineering tasks are completed, the team at large performs continual maintenance and adapts to changing end-user needs, which might call for retraining the model with new data.

Best practices for MLOps

There are many useful strategies that MLOps teams adhere to. The following set of practices can help guide a successful machine learning project to completion and reduce its likelihood of failure:

- An application programming interface (API) from an existing AI service can simplify or expedite MLOps in various ways. For example, APIs can be used to retrieve data from external data sources and to automate testing of ML models.

- MLOps professionals often run parallel model development processes so that, if one model fails, they still have others in progress.

- Pretrained models are used to show proof of concept.

- Generalized algorithms showing some successes are further trained for a specific task. For example, a logistic regression algorithm can be trained to predict the likelihood of future events.

- Publicly available data sources are used to bridge gaps in model training data, provide new data and help counter model drift.
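The logistic regression example in the list can be made concrete. The Python sketch below fits a one-feature logistic regression with plain batch gradient descent on log loss, using only the standard library. The data set is hypothetical (x might be the number of support tickets a customer filed in a month, y whether that customer canceled the following month), and the feature is standardized so that gradient descent converges quickly. In practice, a library such as scikit-learn would normally be used instead of hand-written training code.

```python
import math
from typing import List, Tuple

Model = Tuple[float, float, float, float]  # (weight, bias, feature mean, feature std)

def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def train_logistic(data: List[Tuple[float, int]], lr: float = 0.5,
                   epochs: int = 2000) -> Model:
    """Fit p(y=1 | x) = sigmoid(w * z + b), where z is x standardized."""
    xs = [x for x, _ in data]
    mean = sum(xs) / len(xs)
    std = math.sqrt(sum((x - mean) ** 2 for x in xs) / len(xs)) or 1.0
    w = b = 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            z = (x - mean) / std
            err = sigmoid(w * z + b) - y  # derivative of log loss w.r.t. the logit
            grad_w += err * z
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b, mean, std

def predict_proba(model: Model, x: float) -> float:
    # Estimated likelihood that the event (y = 1) occurs for input x.
    w, b, mean, std = model
    return sigmoid(w * (x - mean) / std + b)

# Hypothetical training data: (tickets filed, canceled next month?)
data = [(1, 0), (2, 0), (3, 0), (4, 1), (5, 0), (6, 1), (7, 1), (8, 1)]
model = train_logistic(data)
print(round(predict_proba(model, 8), 2))
```

The output of `predict_proba` is a probability rather than a hard label, which is what makes logistic regression suited to estimating the likelihood of future events.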

How an organization can implement MLOps

There is no single right way to acquire the skilled employees, tools and infrastructure needed to run MLOps. That said, there are three levels of MLOps implementation that correspond to an organization's needs:

- Level 0. This level describes smaller companies or startups that don't require large-scale MLOps processes. It involves little to no automation, and small development teams handle processes manually. There's also no CI/CD, so deployed models are rarely updated once they're in a production environment.

- Level 1. Organizations in need of advanced methods can implement continuous training and automation tools so processes don't need to be performed manually. The biggest difference between levels 0 and 1 is that level 1 enables models to be upgraded to accommodate changing end-user needs and new data.

- Level 2. Level 2 is the highest level of automation for MLOps processes. It lets organizations experiment with creating more models. This involves using automation tools, such as CI/CD systems, to set up pipelines of automated processes that are easily replicated and scaled.
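Automated pipelines at levels 1 and 2 typically include a validation gate that decides whether a newly trained model may replace the one in production. This is a minimal Python sketch: `Evaluation`, `should_promote`, the choice of accuracy as the metric and both thresholds are assumptions for illustration, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Evaluation:
    model_version: str
    accuracy: float  # measured on the same held-out test set for every model

def should_promote(candidate: Evaluation, production: Optional[Evaluation],
                   min_accuracy: float = 0.85, max_regression: float = 0.0) -> bool:
    """Decide whether an automated pipeline may deploy the candidate model."""
    # Absolute floor: never ship a model below the minimum accepted quality.
    if candidate.accuracy < min_accuracy:
        return False
    # First deployment: there is no production model to compare against.
    if production is None:
        return True
    # Relative check: the candidate may not trail production by more than max_regression.
    return candidate.accuracy >= production.accuracy - max_regression

print(should_promote(Evaluation("v2", 0.91), Evaluation("v1", 0.89)))  # True
```

In a CI/CD pipeline, a `False` result would stop the deployment step and leave the current production model in place.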

There are four types of ML training approaches. Supervised learning is the most common, but there's also unsupervised learning, semisupervised learning and reinforcement learning.