What are oversampling and undersampling?

Oversampling and undersampling are techniques used in data analytics and statistics to modify unequal data classes to create balanced data sets. Oversampling and undersampling are also known as resampling.

These data analysis techniques are often used to be more representative of real-world data. For example, data adjustments can be made in order to provide balanced training materials for AI and machine learning algorithms.

Where to use oversampling and undersampling

Oversampling and undersampling are used to intentionally introduce a bias into a data set with the intention to make the resulting model more sensitive to a particular group than it may have been otherwise.

One area where oversampling and undersampling techniques are used is for survey research. A survey sample population may be unbalanced in terms of types of participants. By using oversampling or undersampling, the ratios of surveyed characteristics, such as gender, age group and ethnicity, can used to make the weight of the data better representative of the group's ratios within the greater populations.

An area where oversampling and undersampling is needed is training a model to detect fraudulent or malicious activity. One example is a credit card company that had millions of records of valid activity but only thousands of cases of fraudulent activity. Another example is a network monitoring tool that has billions of data points but needs to find a small sample of malicious activity. To train such models, it may be necessary to produce more data of the fraudulent (oversampling) and remove some of the good data (undersampling).

Oversampling and undersampling are also useful when it is more important to identify the minority case accurately. For example, for fraudulent or malicious activity, it is better to inaccurately flag good activity as bad than to miss bad activity and let it pass. So, the small amount of bias that might be introduced by these techniques is acceptable if it makes the resulting model more sensitive.

Oversampling vs. undersampling

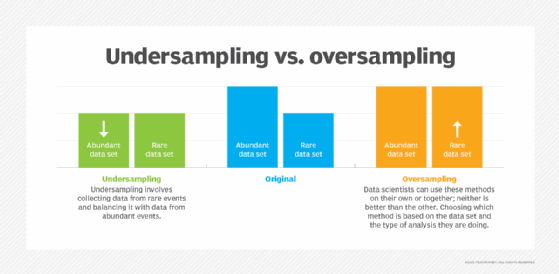

When one class of data is the underrepresented minority class in the data sample, oversampling techniques may be used to duplicate these results for a more balanced number of positive results in training. Oversampling is used when the amount of data collected is insufficient.

Conversely, if a class of data is the overrepresented majority class, undersampling may be used to balance it with the minority class. Undersampling is used when the amount of collected data is sufficient. Undersampling may also be used when there is too much data to be easily processed, but this is becoming uncommon as processing and storage become cheaper.

In both oversampling and undersampling, simple data duplication is rarely suggested. Generally, oversampling is preferable as undersampling can result in the loss of important data. Undersampling is suggested when the amount of data collected is larger than ideal and can help data mining tools stay within the limits of what they can effectively process.

Oversampling techniques

Random oversampling is the simplest method of oversampling. It simply duplicates some of the entries in the underrepresented data set. It is not recommended though as it can cause the resulting model to be overspecialized to the repeated data. Some methods may introduce random noise into the generated samples.

Synthetic Minority Oversampling Technique (SMOTE) generates unique new data based on the existing data. It identifies the characteristics of the data and creates new entries that are reasonable. For example, if the data set of weights had an entry at 150 pounds and another at 160 pounds, it might create an entry at 155 pounds. The resulting data is more diverse and more likely to be representative of a real population.

Adaptive synthetic sampling (ADASYN) is an extension of SMOTE. While typically SMOTE focuses average data in the middle of a set, ADASYN might focus on data at the edges of a data set, which are harder to gather and train for.

Undersampling techniques

Random undersampling removes entries randomly. It is simple to implement but can lose important details in the data set.

Cluster, or centroid, undersampling takes several entries that are similar or close together and replaces them with a single entry.

Condensed nearest neighbor (CNN) takes data entries that are clearly in one class or the other and removes them. This keeps the maximum data points that might help in unclear situations but minimizes the data needed for simple cases.

Tomek Links find pairs from different classes that are near to each other and remove the majority entry. This helps to keep clear boundaries between classes in a data set.

One-Sided Selection combines CNN and Tomek Links to remove excess data from the majority data set, while maintaining the minority data set.

Analytics can be biased, which can hurt profits or lead to social backlash due to discrimination. It's important to fix these biases before problems occur. Explore different types of bias in data analysis and how to avoid them.