What is DevOps? Meaning, methodology and guide

DevOps is a software development approach that brings development and IT operations teams together to deliver applications faster and more reliably.

The philosophy promotes better communication and collaboration between the two teams -- and others -- in an organization. It includes adopting iterative software development, automation, and programmable infrastructure deployment and maintenance. DevOps also includes cultural changes, such as building trust and cohesion between developers and systems administrators and aligning technological projects to business requirements. DevOps can transform software delivery processes, job roles, tools and best practices.

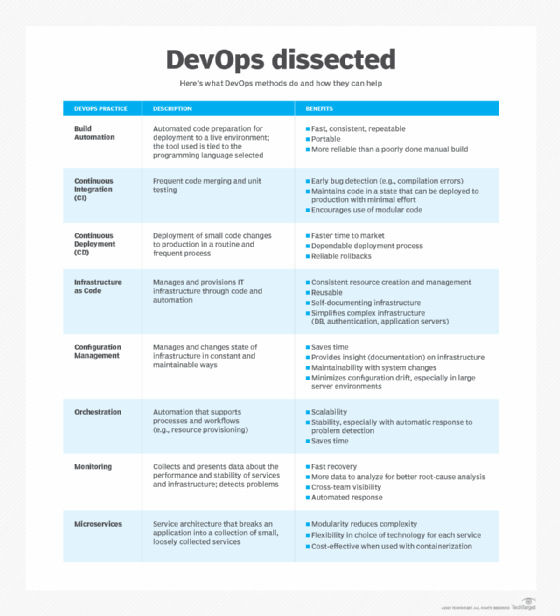

DevOps isn't a specific technology, but DevOps environments do use common methodologies:

- Continuous integration and continuous delivery (CI/CD) or continuous deployment tools, with an emphasis on task automation.

- Systems and tools that support DevOps adoption, including software development, real-time monitoring, incident management, resource provisioning, configuration management and collaboration platforms.

- Cloud computing, microservices and containers implemented concurrently with DevOps methodologies.

A DevOps approach is one of many techniques IT staff use to execute IT projects that meet business needs. DevOps doesn't have an official framework, but the CALMS framework (culture, automation, lean, measurement and sharing) is a popular implementation model.

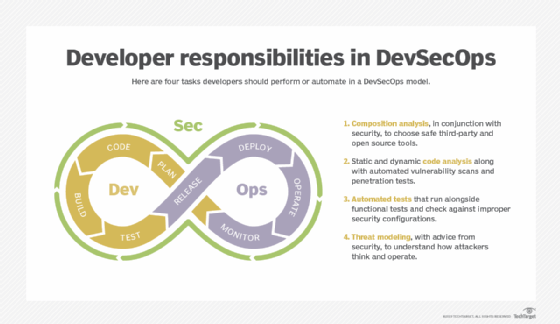

Some professionals believe that the simple combination of development and IT ops isn't enough, and that DevOps should include business (BizDevOps) and other areas. For example, DevSecOps, which is the integration of security into the DevOps lifecycle, is increasingly important in today's cybersecurity landscape.

Why is DevOps important?

DevOps has been shown to improve software quality and development project outcomes for the enterprise. Such improvements take several forms:

- Development outcomes. DevOps follows a cyclical, iterative development process. DevOps projects typically start small with minimal features, then systematically refine and add functionality throughout the project's lifecycle. This enables the business to be more responsive to changing markets, user demands and competitive pressures.

- Product quality. The cyclical, iterative nature of DevOps means that products are tested continuously: existing defects are remediated and new issues are identified as work proceeds. Because much of this happens before each release, DevOps teams can release frequently and still deliver software with fewer bugs and better availability than software created with traditional paradigms.

- Deployment management. DevOps integrates software development and IT ops tasks, often enabling developers to provision, deploy and manage each software release with little, if any, intervention from IT. This frees IT staff to focus on more strategic tasks. Deployment can take place on local infrastructure or in the public cloud, depending on the project's specific goals.

A well-executed DevOps environment enables a business to deliver more competitive, higher-quality software products to market faster, with lower support and maintenance demands than traditional development approaches.

What are the benefits of DevOps?

DevOps benefits include the following:

- Faster time to market for software, enhancing revenue and competitive opportunities for the business.

- Rapid improvement based on user and stakeholder feedback.

- Better software quality, better deployment practices and less downtime from more frequent testing.

- Improvement to the entire software delivery pipeline through builds, repository use, validations and deployment.

- Less menial work across the DevOps pipeline, thanks to automation.

- Streamlined development processes through increased responsibility and code ownership in development.

- Broader roles and skills.

How does DevOps work?

DevOps is a methodology meant to improve work throughout the software development lifecycle (SDLC). You can visualize a DevOps process as an infinite loop, comprising these DevOps pipeline stages: plan, code, build, test, release, deploy, operate, monitor and -- through feedback -- plan, which resets the loop.
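The loop can be pictured as a short sketch. The stage names below come from the list above; the `walk_loop` helper is a hypothetical name used only for illustration, and it assumes each stage hands off to the next in a fixed order.

```python
from itertools import cycle, islice

# Stage names as listed in the text; feedback from "monitor" leads back to "plan".
STAGES = ["plan", "code", "build", "test", "release", "deploy", "operate", "monitor"]


def walk_loop(steps: int, start: str = "plan") -> list[str]:
    """Return the stages visited over `steps` steps, wrapping around the loop."""
    offset = STAGES.index(start)
    return list(islice(cycle(STAGES), offset, offset + steps))


if __name__ == "__main__":
    # Ten steps cover one full iteration and the start of the next.
    print(walk_loop(10))
```

The wrap from monitor back to plan is the point of the sketch: what teams observe in production becomes input to the next round of planning.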

Ideally, because each iteration of the loop delivers only a small increment, it takes much of the pressure off any single release.

DevOps gives organizations far more flexibility in developing and releasing software that matures systematically over time, allowing teams to learn, adjust and experiment in ways that traditional development methods don't support.

To align software with expectations, developers and stakeholders communicate about the project, and developers work on small updates that go live independently of one another.

To avoid wait times, IT teams use CI/CD pipelines and other automation to move code from one step of development and deployment to another. Teams review changes immediately and can rely on automated tools to enforce policies and ensure releases meet standards.

To deploy good code to production, DevOps adherents use containers or other methods to ensure the software behaves consistently from development through testing and into production. They deploy changes individually so that problems are traceable. Teams rely on automation and configuration management for consistent deployment and hosting environments. Problems they discover in live operations lead to code improvements, often through a blameless post-mortem investigation and continuous feedback channels.

Developers might support the live software, which puts the onus on them to address runtime considerations. IT ops administrators might be involved in the software design meetings, offering guidance on how to use resources efficiently and securely. Anyone can contribute to blameless post-mortems.

Building a DevOps culture

The core pillars of DevOps culture are collaboration, shared ownership and accountability, automation, and learning and continuous improvement. Each of these pillars plays a vital role in realizing the full benefits of DevOps and quickly delivering reliable software at scale.

Although there's no one way to establish a DevOps culture, here are some best practices to consider:

- Change the organizational structure.

- Integrate DevOps culture into hiring.

- Adopt blameless principles.

- Provide learning opportunities.

- Communicate clearly about automation.

- Seek feedback.

- Measure the effectiveness of DevOps culture.

Leaders should model core values, set clear expectations without micromanaging and offer incentives to teams that meet DevOps metrics.

DevOps methodologies, principles and strategies

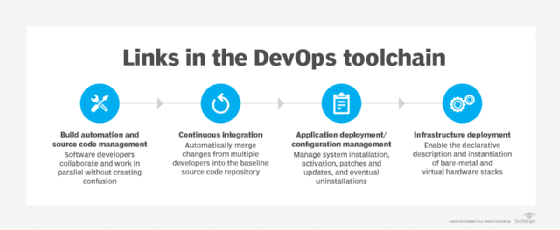

Code repositories. Version-controlled source code repositories enable multiple developers to work on code. Developers check code out and in and can revert to a previous version if needed. These tools keep a record of modifications made to the source code -- including who made the changes and when. Without tracking, developers might struggle to follow which changes are recent and which versions of the code are available to end users.

In a CI/CD pipeline, a code change committed in the version-control repository automatically triggers the next steps, such as a static code analysis or build and unit tests.
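As a rough illustration of that trigger, the sketch below runs a list of stages whenever a commit arrives and stops at the first failure. The `Commit` and `Pipeline` classes and the three check functions are hypothetical stand-ins, not the API of any real CI/CD engine.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Commit:
    sha: str
    author: str
    files: list[str]


# A stage receives the commit and returns True on success.
Stage = Callable[[Commit], bool]


@dataclass
class Pipeline:
    stages: list[tuple[str, Stage]] = field(default_factory=list)

    def on_commit(self, commit: Commit) -> list[tuple[str, str]]:
        """Run the stages in order and stop at the first failure."""
        results = []
        for name, stage in self.stages:
            ok = stage(commit)
            results.append((name, "passed" if ok else "failed"))
            if not ok:
                break  # later stages never run against a broken change
        return results


# Hypothetical checks standing in for real analysis, build and test tools.
def static_analysis(commit: Commit) -> bool:
    return all(not name.endswith(".tmp") for name in commit.files)


def build(commit: Commit) -> bool:
    return True


def unit_tests(commit: Commit) -> bool:
    return "broken_test.py" not in commit.files


pipeline = Pipeline(
    [("static-analysis", static_analysis), ("build", build), ("unit-tests", unit_tests)]
)
```

Calling `pipeline.on_commit(...)` with a commit that includes a `.tmp` file reports only a failed static analysis stage, because the build and unit tests are never reached.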

CI/CD pipeline engines. CI/CD enables DevOps teams to frequently validate and deliver applications to end users through automation during the development lifecycle. The continuous integration tool automates processes so developers can create, test and validate code in a shared repository as often as needed without manual effort. Continuous delivery extends these automated steps into production-level tests and release management configurations. Continuous deployment goes a step further and releases validated changes to production automatically, invoking tests, configuration and provisioning, and providing monitoring and potential rollback capabilities.
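The rollback capability mentioned here can be sketched in a few lines. This is a minimal illustration under stated assumptions: `activate` and `is_healthy` are hypothetical callbacks standing in for whatever a real platform uses to switch versions and check health.

```python
from typing import Callable


def deploy_with_rollback(
    current_version: str,
    new_version: str,
    activate: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> str:
    """Activate new_version and revert to current_version if it is unhealthy.

    Returns the version that is left running.
    """
    activate(new_version)
    if is_healthy(new_version):
        return new_version
    activate(current_version)  # rollback: restore the last known-good version
    return current_version


if __name__ == "__main__":
    history: list[str] = []
    # Simulate a release whose health check fails.
    running = deploy_with_rollback("1.4.0", "1.5.0", history.append, lambda v: False)
    print(running, history)
```

Real pipelines usually watch health over a time window rather than checking once, but the decision has the same shape: promote the new version or restore the old one.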

Artifact repositories. Source code is compiled into an artifact for testing. Artifact repositories store these build outputs as version-controlled objects. Artifact management is a good practice for the same reasons as version-controlled source code management.
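A toy in-memory version shows the idea. The `ArtifactRepository` class is hypothetical, and two behaviors in it are assumptions based on common convention rather than on the text: published versions cannot be overwritten, and downloads are verified against a checksum.

```python
import hashlib


class ArtifactRepository:
    """In-memory stand-in for an artifact repository (illustrative only)."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], bytes] = {}

    def publish(self, name: str, version: str, content: bytes) -> str:
        """Store a build output under (name, version) and return its SHA-256 digest."""
        key = (name, version)
        if key in self._store:
            # Assumption: a published version is immutable, so the artifact
            # that was tested is the same one that later ships.
            raise ValueError(f"{name} {version} is already published")
        self._store[key] = content
        return hashlib.sha256(content).hexdigest()

    def fetch(self, name: str, version: str, expected_sha256: str) -> bytes:
        """Return the stored artifact after verifying its checksum."""
        content = self._store[(name, version)]
        if hashlib.sha256(content).hexdigest() != expected_sha256:
            raise ValueError("checksum mismatch")
        return content
```

Pinning a deployment to a name, version and digest gives binaries the same traceability that commit history gives source code.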

Configuration management and IaC. Configuration management systems enable IT to provision and configure software, middleware and infrastructure using scripts or templates. The DevOps team can set up deployment environments for software code releases and enforce policies on servers, containers and VMs through a configuration management tool. Changes to the deployment environment can be version-controlled and tested, enabling DevOps teams to manage infrastructure as code (IaC).
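Many configuration management and IaC tools work by comparing a declared, desired state with what is actually running and then applying only the difference. The sketch below illustrates that idea in simplified form; the function names and resource fields are hypothetical and do not reflect any specific tool.

```python
def plan_changes(desired: dict[str, dict], actual: dict[str, dict]) -> list[tuple[str, str]]:
    """List the actions needed to make `actual` match `desired`."""
    actions = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            actions.append(("create", name))
        elif name not in desired:
            actions.append(("delete", name))
        elif desired[name] != actual[name]:
            actions.append(("update", name))
    return actions


def apply_changes(
    desired: dict[str, dict], actual: dict[str, dict], actions: list[tuple[str, str]]
) -> dict[str, dict]:
    """Return the new actual state after carrying out the planned actions."""
    result = dict(actual)
    for action, name in actions:
        if action == "delete":
            del result[name]
        else:  # create and update both take the declared definition
            result[name] = desired[name]
    return result


if __name__ == "__main__":
    # Hypothetical environment definition, as it might be kept in version control.
    desired = {"web": {"size": "small", "count": 2}, "db": {"size": "large", "count": 1}}
    actual = {"web": {"size": "small", "count": 1}, "cache": {"size": "small", "count": 1}}
    print(plan_changes(desired, actual))
```

Because the plan is derived from the difference, running it a second time against an environment that has already converged produces no actions.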

Containers. Containers are isolated runtimes for software on a shared OS. Containers provide abstraction that enables code to work the same way across different underlying infrastructures from development to testing and staging, and then to production.

GitOps. GitOps espouses declarative source control over application and infrastructure code. Everything about the software, from feature requirements to the deployment environment, comes from a single source of truth. GitOps is associated with change management and deployment techniques, such as immutable infrastructure, which dictates replacing or reinstancing -- rather than upgrading or changing -- deployed code each time a change is made.

Cloud environments. DevOps organizations often concurrently adopt cloud infrastructure because they can automate its deployment, scaling and other management tasks. Many cloud vendors also offer CI/CD services.

Cloud-based DevOps pipelines. Public cloud providers offer native DevOps tool sets for use with workloads on their platforms. Cloud adopters can use pre-integrated services or run third-party tools.

As-a-service models. DevOps as a service is a delivery model for a set of tools that facilitates collaboration between an organization's software development and IT ops teams. In this delivery model, the provider assembles a suite of tools and handles the integrations to seamlessly cover the overall process of code creation, delivery and maintenance.

In other cases, service providers act as consultants, assisting businesses with DevOps projects or supplementing expertise that might be lacking across the DevOps process. Service providers should be evaluated based on factors including time in business, proven experience -- particularly in related market verticals -- and compatibility with existing DevOps tools and practices.

Monitoring and observability. Monitoring and observability tools enable DevOps professionals to oversee the performance and security of code releases on systems, networks and infrastructure. They can combine monitoring with analytics tools that provide operational intelligence. DevOps teams use these tools together to analyze how code changes affect the overall environment. Observability specifically uses metrics, logs and traces to understand why something is happening.
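To make the three signal types concrete, the sketch below emits a metric, a structured log entry and two trace spans for one simulated request, using only the Python standard library. The names `handle_request` and `span` and the in-memory collections are hypothetical; a real system would export these signals to a monitoring back end.

```python
import json
import time
import uuid
from collections import Counter
from contextlib import contextmanager

metrics = Counter()        # metrics: how often something happens
logs: list[str] = []       # logs: what happened, as structured records
spans: list[dict] = []     # traces: where time was spent within one request


@contextmanager
def span(trace_id: str, name: str):
    """Record how long the enclosed block took as part of a trace."""
    start = time.perf_counter()
    try:
        yield
    finally:
        spans.append(
            {"trace_id": trace_id, "name": name, "duration_s": time.perf_counter() - start}
        )


def handle_request(path: str) -> str:
    """Simulate serving a request and emit all three signal types."""
    trace_id = uuid.uuid4().hex
    metrics[("requests_total", path)] += 1
    with span(trace_id, "handle_request"):
        with span(trace_id, "load_data"):
            time.sleep(0.01)  # stand-in for real work
        logs.append(json.dumps({"trace_id": trace_id, "level": "info", "path": path}))
    return trace_id
```

The shared `trace_id` ties the signals together: a spike in a metric can be followed to the log entries and spans of the individual requests behind it.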

DevOps vs. Agile vs. Waterfall. In terms of strategy, DevOps is associated with Agile software development because Agile practitioners promoted DevOps as a way to extend the methodology into production. This mindset has even been labeled a counterculture to the IT service management practices championed in ITIL. In traditional Waterfall development, teams used an all-or-nothing approach, gathering requirements upfront and then writing, testing and releasing code as separate, sequential events. They addressed any performance or reliability issues as an afterthought.

To refine their practices, organizations should understand the related contexts of DevOps, Agile and Waterfall development, site reliability engineering (SRE) and SysOps, and even the variations within DevOps. These approaches influence how organizations structure teams and define responsibilities.

DevOps skills and job roles

Depending on the type of organization, there are several DevOps roles that IT leaders should consider for the team, as they each bring valuable skills to the table. The job descriptions vary, and not every role is needed because responsibilities overlap, but here's a basic overview of the different types of roles:

- Software developers. These team members write, update and fix application code. Ideal candidates have experience with several programming languages and app development methodologies. They should also be familiar with production environments.

- IT operations engineers. Engineers in this role provision and deploy host infrastructure and software releases. They also monitor software in production. Beyond having IT ops experience, people in this role should understand the SDLC and be able to write application code and sysadmin scripts.

- DevOps engineers. Tasked with building and managing CI/CD pipelines and automations, DevOps engineers should know infrastructure design principles and be familiar with multiple platforms. They should have expertise in automation coding.

- Systems architects. This role evaluates services for hosting DevOps tools, oversees DevOps tool consolidation and monitors CI/CD pipelines. Ideal candidates should specialize in DevOps tools and high-level infrastructure design. Some organizations combine the DevOps engineer and systems architect roles because of their similarities.

- QA engineers. These engineers run manual tests, write automated tests for software releases and address any bugs. Necessary skills include writing quick, comprehensive tests and knowledge of test automation frameworks.

- UX engineers. This role involves working with developers and users to ensure the software and UI meet their needs. Effective communication and collaboration are key.

- Security engineers. Security engineers test application security and coordinate with developers and IT engineers to develop secure applications and infrastructure. Familiarity with and experience in DevSecOps is increasingly important.

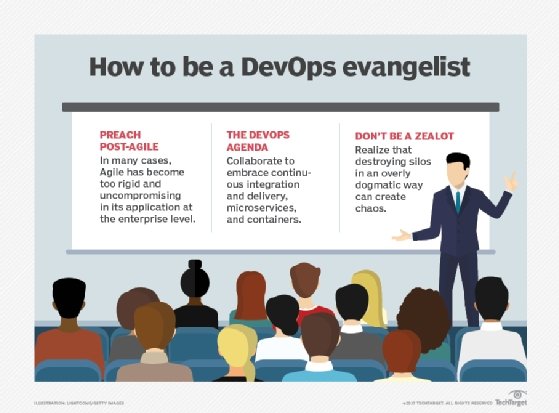

- DevOps evangelists. Team members in this role help build a strong DevOps culture in the organization. This can be a dedicated role or a responsibility taken on by someone already on the team. They should be able to convey the importance of DevOps to decision-makers.

- SREs. These engineers work with developers and IT engineers on application and infrastructure design, monitor production environments and mitigate incidents. Responsibilities might overlap with IT and QA engineers, but SREs typically do a lot of code-based automation.

- Cloud engineers. This role involves deploying and managing applications in the cloud and overseeing any multi-cloud strategies. Expertise in cloud environments and tools can set them apart from general IT engineers.

- Automation engineers. Tasked with implementing and troubleshooting automated workflows, automation engineers sometimes have a broad role that encompasses DevOps processes and other business workflows. They should have experience with emerging technologies.

- AI DevOps engineers. These engineers oversee the use of AI in DevOps and CI/CD pipelines. Other roles could cover this, but as AI adoption increases, it might be worth creating a dedicated role for the compliance and security implications.

IT leaders can establish DevOps roles in one of three ways:

- Replace separate development and IT ops departments with a combined DevOps department.

- Run DevOps as a separate team alongside existing development and IT ops teams.

- Embed DevOps engineers into each department.

In addition to business acumen, DevOps team members should have a variety of skills in software development, infrastructure management and project management.

More specifically, DevOps engineers should be familiar with various platforms, programming and scripting languages, configuration management, version management, IaC, provisioning, deployment, security, tracking and assessing release performance, network optimization, troubleshooting, integration, communication and team management.

There are many DevOps certifications and training courses available, including, but not limited to, the following:

- DevOps Foundation Exam Study Guide.

- DevOps Culture and Mindset.

- Introduction to DevOps and Site Reliability Engineering.

- Kubernetes: Getting Started.

- Docker Essentials.

- Getting Started with DevOps on AWS.

- Preparing for Google Cloud Certification: Cloud DevOps Engineer Professional Certificate.

- Microsoft Certified: DevOps Engineer Expert.

- Red Hat's Developing Cloud-Native Apps with Microservices Architectures.

- IBM's Introduction to DevOps.

DevOps adoption best practices

The adoption of DevOps or other CD paradigms can be disruptive to software and management teams. There are inevitable changes to workflows, processes, tool sets and even staffing that will drive the need for more training. DevOps adoption can easily get off track and eventually fall by the wayside. Below are some tips to help ease DevOps adoption and increase the chances of DevOps success.

Start small

DevOps transformations don't happen overnight. Many companies start with a pilot project -- a simple application where they can get a feel for new practices and tools. DevOps teams look for quick, easy wins to refine workflows, learn the tools and prove the value of DevOps principles. For large-scale DevOps adoption, try moving in stages.

Consider the workflows

Initially, DevOps can mean a commitment from development and IT ops teams to understand the concerns and technical constraints at each stage of the software project. Agree upon KPIs to improve, such as shorter cycle times or fewer bugs in production. Understand how DevOps practices map to current development and deployment practices and plan suitable workflow changes to enhance software quality, security and compliance. Lay the groundwork for continuous processes by communicating across job roles.

Select the proper tools

Evaluate the existing tools for software development and IT operations. More or different tools might be needed. Identify process shortcomings, such as a step that's always handled manually -- for example, moving from a code commit to testing -- or a tool without APIs to connect with other tools.

Consider standardizing on a single DevOps pipeline across the whole company. With one pipeline, team members can move from one project to another without reskilling, security specialists can focus on hardening a single toolchain, and license management becomes easier. The tradeoff is that DevOps teams give up the freedom to use what works best for them.

Employ meaningful metrics

Simply adopting DevOps isn't enough to ensure success. Understand the need for DevOps in the first place -- what problems it is intended to solve, or what benefits it is intended to deliver. Select metrics and KPIs that will show those outcomes and then plan to measure and report on those metrics as an objective gauge of DevOps success.

Specific metrics will vary across organizations, but leaders should incorporate a mix of delivery and deployment, reliability and stability, operational efficiency, and business and customer impact KPIs.

Start with the five DORA metrics, an industry-standard benchmark for delivery and reliability established by Google Cloud's DevOps Research and Assessment team:

- Deployment frequency.

- Mean lead time for changes.

- Mean time to recover.

- Change failure rate.

- Reliability.
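Four of these metrics can be derived from deployment records, as the sketch below shows. The `Deployment` fields and the `dora_summary` function are hypothetical, exact definitions vary between organizations, and the function assumes at least one deployment in the period. Reliability is left out because it is usually judged against availability or performance targets instead of being computed from deployment history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set once a failed change is recovered


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_summary(deployments: list[Deployment], period_days: int) -> dict[str, float]:
    """Summarize delivery metrics for a non-empty list of deployments."""
    failures = [d for d in deployments if d.failed]
    recovered = [d for d in failures if d.restored_at is not None]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "mean_lead_time_hours": mean(
            [hours(d.deployed_at - d.committed_at) for d in deployments]
        ),
        "change_failure_rate": len(failures) / len(deployments),
        # Reported as 0.0 when no failed deployment has been recovered yet.
        "mean_time_to_recover_hours": (
            mean([hours(d.restored_at - d.deployed_at) for d in recovered])
            if recovered
            else 0.0
        ),
    }
```

Tracked over successive periods, the trend in these figures tends to be more informative than any single value.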

Use the DevOps maturity model

The DevOps maturity model illustrates five principal phases of adoption, ranging from novice to well-established. Organizations can use the DevOps maturity model as a guide to adoption by identifying their place in the model:

- Initial. Teams are siloed; work is reactive and done with ad hoc tool and process choices.

- Defined. A pilot project is a proof of concept that defines a DevOps approach, basic processes and tools.

- Managed. The organization scales up DevOps adoption with lessons learned from the pilot. The pilot's results are repeatable with different staff members and project types.

- Measured. With processes and tools in place, the teams share knowledge and refine practices. Automation and tool connectivity increase, and standards are enforced through policies.

- Optimized. Continuous improvement occurs. DevOps might evolve into different tool sets or processes to fit use cases. For example, customer-facing apps have a higher release frequency, and financial management apps follow DevSecOps best practices:

- Embedding security early with a "shift left" approach.

- Mapping DevSecOps to compliance frameworks.

- Integrating IaC security.

- Automating security across the pipeline.

- Fostering cross-functional ownership.

- Standardizing and securing toolchains.

- Securing the software supply chain.

- Implementing secrets management and identity controls.

- Implementing continuous monitoring and feedback.

- Adopting policy-as-code governance.

- Establishing security champion programs.
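Several of the DevSecOps practices listed above, such as policy-as-code governance and automating security across the pipeline, come down to expressing rules as code that a pipeline can evaluate on every change. The sketch below is one minimal way to picture that. The rule names, configuration fields and example values are all hypothetical, and dedicated policy engines offer far richer rule languages.

```python
from typing import Callable

# Each policy pairs a readable rule name with a check on a deployment config.
Policy = tuple[str, Callable[[dict], bool]]

# Hypothetical rules and field names, for illustration only.
POLICIES: list[Policy] = [
    (
        "image tag must be pinned",
        lambda cfg: not str(cfg.get("image", "")).endswith(":latest"),
    ),
    (
        "container must not run as root",
        lambda cfg: cfg.get("run_as_root") is False,
    ),
    (
        "secrets must not be passed as plain environment variables",
        lambda cfg: "password" not in cfg.get("env", {}),
    ),
]


def violations(config: dict) -> list[str]:
    """Return the names of every policy the config breaks."""
    return [name for name, check in POLICIES if not check(config)]


if __name__ == "__main__":
    risky = {"image": "shop/api:latest", "run_as_root": True, "env": {"password": "example"}}
    for rule in violations(risky):
        print("blocked:", rule)
```

A pipeline stage can fail the release whenever `violations` returns a non-empty list, which moves the check earlier in the process in the spirit of the shift-left approach.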

What are the challenges of DevOps?

The DevOps paradigm introduces complexities and changes that can be difficult to implement and manage within a busy organization. Common DevOps challenges include the following:

- Organizational and IT departmental changes, including new skills and job roles, which can be disruptive to development teams and the business.

- Expensive tools and platforms, including training and support to use them effectively.

- Development and IT tool proliferation.

- Automation that is unnecessary, fragile, unsafe or poorly implemented and maintained.

- Logistics and workload difficulties scaling DevOps across multiple projects and teams.

- Riskier deployments due to a fail-fast mentality and a shift from specialist to generalist roles, which can put access to production systems in the hands of less IT-savvy personnel.

- Regulatory compliance, especially when role separation is required.

- New bottlenecks, such as automated testing or repository utilization.

In short, DevOps doesn't solve every business problem or benefit every software development project in the same way.

DevOps tools

DevOps is a mindset, not a tool set. But it's hard to do anything in an IT team without suitable and well-integrated tools. In general, DevOps practitioners rely on CI/CD pipelines, containers and cloud hosting. Tools can be open source, proprietary or supported distributions of open source technology.

Here are some examples of the different types of tools available:

- Artifact repositories. AWS CodeArtifact, Azure Artifacts, Cloudsmith, JFrog Artifactory, Packagecloud, ProGet and Sonatype Nexus Repository.

- CI/CD pipeline engines. Azure Pipelines, CircleCI, Jenkins, GitHub Actions and GitLab CI/CD.

- Cloud-based DevOps pipelines. AWS CloudFormation and CodePipeline, and Azure DevOps and Pipelines.

- Cloud environments. AWS, Google Cloud and Microsoft Azure.

- Code repositories. Bitbucket, Git, GitHub and GitLab.

- Configuration management and IaC. Ansible, Chef, Puppet and SaltStack. IaC-specific tools include AWS CloudFormation and HashiCorp Terraform.

- Containers. Amazon Elastic Kubernetes Service, Docker, Kubernetes, Microsoft (Windows-specific container options) and Red Hat.

- GitOps. Argo CD and Flux CD.

- Monitoring and observability. Amazon CloudWatch, Datadog, Dynatrace, Grafana, New Relic, OpenTelemetry, Prometheus and Splunk.

The evolution of DevOps

As DevOps became popular, organizations formalized DevOps approaches. Retailer Target originated the DevOps Dojo training method, for example. Vendors touted DevOps-enabling capabilities in tools, from collaboration chatbots to CI/CD suites built into cloud services.

Technologies such as microservices, virtual containers and public cloud services offered a natural fit for the fast, flexible nature of DevOps.

Modern trends in DevOps

DevOps continues to evolve, as AI surfaces to aid in everything from code creation to incident management. AI for DevOps means smarter automation, even shorter wait times and seamless translation of business needs into technology offerings.

The role of AI in DevOps practices affects teams in other ways as well. Established processes and DevOps roles and responsibilities are evolving as automation use in the SDLC increases. The tools that DevOps teams use are also getting smarter, ultimately leading to tool consolidation.

Other key DevOps trends in 2026 include platform teams joining DevOps teams and the use of intent-driven infrastructure, which enables teams to think less about the environment in which their software will be deployed, as AI determines the best fit based on workload analysis.

In addition, organizations are encouraging DevOps teams to be even more cost-conscious amid higher overall cloud spending. Organizations are also asking that regulatory concerns and recent geopolitical issues be at the forefront of DevOps teams' minds when developing and deploying software.

Currently, DevOps teams are building and customizing software to incorporate AI and automation, while the future of DevOps sees teams focusing more on high-level business issues and less on the tasks that AI and automation can handle.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 20 years of technical writing experience in the PC and technology industry.

Ryann Burnett, executive managing editor, has 10 years of experience at TechTarget Editorial, covering virtualization, containers, monitoring, observability, data centers, server hardware, IoT and other technologies.

Meredith Courtemanche and Alexander S. Gillis also contributed to this article.