What is software-defined networking (SDN)?

Software-defined networking (SDN) is a networking approach in which software is used to configure and centrally manage IT networks. SDN abstracts a network's distinct layers to make it more agile, flexible and easy to manage. Its main goal is to improve network control so that enterprises can respond quickly to changing business requirements and adopt new technologies sooner.

How does SDN differ from traditional networking?

In a software-defined network, a network engineer or administrator can shape traffic from a centralized control console without having to touch individual switches in the network. A centralized SDN controller directs the switches to deliver network services wherever they're needed, regardless of the specific connections between a server and devices. Administrators can also use APIs to facilitate communication between the network hardware and the SDN software and to control data flows to and from various devices.

This approach is a move away from traditional network architecture, in which individual, dedicated network devices like routers and switches control network traffic and make traffic decisions based on their configured routing tables. In traditional networks, hardware devices need to be configured manually and individually, and device troubleshooting happens on a case-by-case basis. Because visibility into the entire network is poor, there is no way to centrally control devices and their configurations.

Offering greater visibility into network health, centralized control and stronger security, SDN is increasingly the paradigm of choice for network managers, admins and IT teams. In fact, SDN has played an important role in networking for well over a decade now. It has also influenced many innovations in networking and helped to create more flexible, agile, secure and future-proof IT networks.

How does SDN work?

Originally, SDN technology focused solely on the separation of the network control plane from the data plane. While the control plane makes decisions about how packets (traffic) should flow through the network, the data plane moves packets from place to place. The decoupling of network hardware and software means that software oversees the control plane while the hardware is free to take care of the data plane. This provides a single-pane-of-glass view that enables network admins to control the entire network centrally.
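The split between the two planes can be illustrated with a minimal Python sketch. The `Controller` and `Switch` classes and their methods are hypothetical names invented for this illustration, not part of any real SDN product. The controller decides where traffic goes, and the switch only looks up and applies that decision.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Switch:
    """Data plane: forwards packets using rules it was given."""
    name: str
    forwarding_table: dict[str, int] = field(default_factory=dict)

    def forward(self, dst_ip: str) -> Optional[int]:
        # Pure lookup: the switch makes no routing decision of its own.
        return self.forwarding_table.get(dst_ip)


@dataclass
class Controller:
    """Control plane: decides how packets should flow."""
    switches: dict[str, Switch] = field(default_factory=dict)

    def register(self, switch: Switch) -> None:
        self.switches[switch.name] = switch

    def set_route(self, switch_name: str, dst_ip: str, out_port: int) -> None:
        # The decision is made centrally and pushed down to the device.
        self.switches[switch_name].forwarding_table[dst_ip] = out_port


# Hypothetical example data: one switch, one route.
controller = Controller()
edge = Switch("edge-1")
controller.register(edge)
controller.set_route("edge-1", "10.0.0.5", out_port=3)
```

After `set_route` runs, `edge.forward("10.0.0.5")` returns the port the controller chose, while an unknown destination returns `None` because no rule was pushed for it.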

Today, SDN encompasses several types of technologies in addition to functional separation, including network virtualization and automation through programmability.

In a classic SDN scenario, a packet arrives at a network switch. Rules built into the switch's proprietary firmware tell the switch where to forward the packet. These packet-handling rules are sent to the switch from the centralized controller.

The switch -- also known as a data plane device -- queries the controller for guidance as needed and provides the controller with information about the traffic it handles. The switch sends every packet going to the same destination along the same path and treats all the packets the same way.

Software-defined networking uses an operation mode that is sometimes called adaptive or dynamic, in which a switch issues a route request to a controller for a packet that does not have a specific route. This process is separate from adaptive routing, which issues route requests through routers and algorithms based on the network topology, not through a controller.
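The route-request behavior described above can be sketched as follows. This is a simplified model under stated assumptions, not the OpenFlow protocol itself: `ReactiveController`, `FlowSwitch`, `request_route` and the static `routes` mapping are hypothetical names chosen for the illustration.

```python
from typing import Optional


class ReactiveController:
    """Answers route requests from switches (hypothetical model)."""

    def __init__(self, routes: dict[str, int]):
        self.routes = routes      # destination -> output port
        self.requests_seen = 0    # how many times a switch had to ask

    def request_route(self, dst: str) -> Optional[int]:
        self.requests_seen += 1
        return self.routes.get(dst)


class FlowSwitch:
    """Data plane device that caches the rules the controller returns."""

    def __init__(self, controller: ReactiveController):
        self.controller = controller
        self.flow_table: dict[str, int] = {}

    def handle_packet(self, dst: str) -> Optional[int]:
        # Table hit: forward using the rule already installed.
        if dst in self.flow_table:
            return self.flow_table[dst]
        # Table miss: issue a route request, then cache the answer so
        # later packets to the same destination follow the same path.
        port = self.controller.request_route(dst)
        if port is not None:
            self.flow_table[dst] = port
        return port  # None means the controller had no route
```

In this model the controller is consulted only for the first packet to a destination. Every later packet to that destination is handled from the switch's own table.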

The virtualization aspect of SDN comes into play through a virtual overlay, which is a logically separate network on top of the physical network. Users can implement end-to-end overlays to abstract the underlying network and segment network traffic. This microsegmentation is especially useful for service providers and operators with multi-tenant cloud environments and cloud services, as they can provision a separate virtual network with specific policies for each tenant.
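A rough sketch of per-tenant segmentation on a shared physical network might look like this. The `OverlayNetwork` class, its methods and the integer segment IDs are assumptions made for illustration. Real overlays carry such an identifier in an encapsulation header instead of in a Python dictionary.

```python
class OverlayNetwork:
    """Maps hosts to logically separate virtual networks, one per tenant."""

    def __init__(self) -> None:
        self._next_id = 1
        self.tenant_ids: dict[str, int] = {}
        self.host_segment: dict[str, int] = {}

    def add_tenant(self, tenant: str) -> int:
        # Each tenant gets its own virtual network ID exactly once.
        if tenant not in self.tenant_ids:
            self.tenant_ids[tenant] = self._next_id
            self._next_id += 1
        return self.tenant_ids[tenant]

    def attach_host(self, tenant: str, host: str) -> None:
        self.host_segment[host] = self.tenant_ids[tenant]

    def can_communicate(self, host_a: str, host_b: str) -> bool:
        # Traffic is allowed only inside the same virtual network,
        # even though all hosts share the same physical underlay.
        seg_a = self.host_segment.get(host_a)
        seg_b = self.host_segment.get(host_b)
        return seg_a is not None and seg_a == seg_b
```

Two virtual machines attached to the same tenant can reach each other in this model, while machines belonging to different tenants cannot, which is the isolation property the overlay is meant to provide.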

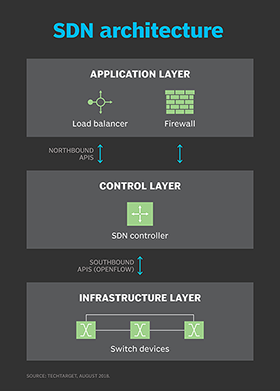

SDN architecture

A typical representation of software-defined networking architecture comprises three layers: the application layer, the control layer and the infrastructure layer. These layers communicate using northbound and southbound application programming interfaces (APIs).

Application layer

The application layer contains the typical network applications or functions organizations use. These can include intrusion detection systems, load balancers or firewalls. Where a traditional network would use a specialized appliance, such as a firewall or load balancer, a software-defined network replaces the appliance with an application that uses a controller to manage data plane behavior.

Control layer

The control layer represents the centralized SDN controller software that acts as the brain of the software-defined network. This controller resides on a server and manages policies and traffic flows throughout the network. It also communicates with the application layer through the northbound interface to understand application resource needs.

Infrastructure layer

The infrastructure layer is made up of the physical switches and other devices in the network. These devices forward the network traffic to their destinations. The SDN infrastructure can be easily customized by configuring new services and allocating virtual resources as needed by applications. These infrastructure changes can be centrally managed in real time.

APIs

These three layers communicate using respective northbound and southbound APIs. Applications talk to the controller through its northbound interface. The controller and switches communicate using southbound interfaces, such as OpenFlow, although other protocols exist.
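The relationship between the three layers and the two interfaces can be sketched in a few lines of Python. All class and method names here (`FirewallApp`, `SdnController.apply_policy`, `InfraSwitch.install_rule`) are hypothetical. The in-process method calls stand in for a real northbound API and for a southbound protocol such as OpenFlow.

```python
class InfraSwitch:
    """Infrastructure layer: a device that stores and applies rules."""

    def __init__(self, name: str):
        self.name = name
        self.rules: list[tuple[str, str]] = []

    def install_rule(self, match: str, action: str) -> None:
        # Southbound side: the controller pushes a rule to the device.
        self.rules.append((match, action))


class SdnController:
    """Control layer: translates application intent into device rules."""

    def __init__(self, switches: list[InfraSwitch]):
        self.switches = switches

    def apply_policy(self, match: str, action: str) -> int:
        # Northbound side: applications call this instead of touching
        # individual switches. Returns the number of switches updated.
        for switch in self.switches:
            switch.install_rule(match, action)
        return len(self.switches)


class FirewallApp:
    """Application layer: a network function implemented in software."""

    def __init__(self, controller: SdnController):
        self.controller = controller

    def block_port(self, port: int) -> int:
        return self.controller.apply_policy(f"tcp_dst={port}", "drop")
```

The application never talks to a switch directly. It states what it wants through the controller, and the controller fans that request out to every device it manages.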

There is currently no formal standard for the controller's northbound API that matches OpenFlow's role as a general southbound interface. The OpenDaylight controller's northbound API may emerge as a de facto standard over time, given its broad vendor support.

SDN models

Organizations can implement software-defined networking in many ways. Here are the four main models:

- Open SDN. It involves using open protocols (e.g., OpenFlow) to control the behavior of virtual and physical devices in the data plane.

- API SDN. Here, APIs rather than protocols control the flow of data through the network.

- SDN Overlay. A virtual network runs on top of existing hardware, creating dynamic tunnels to data centers and allocating bandwidth to each channel without affecting the physical network.

- Hybrid SDN. This model combines traditional networking and SDN and is particularly useful for incrementally introducing SDN into legacy environments.

Benefits of software-defined networking

SDN delivers a variety of benefits to organizations. These include the following:

Simplified network management and control

SDN helps to simplify network management for IT teams. A network administrator needs to deal with only one centralized controller to configure and manage all connected devices. This approach is a radical departure from traditional networking, where configuring multiple devices individually is the norm.

End-to-end visibility into networks

Centralized control gives IT teams end-to-end visibility into the network, which makes device configuration, resource provisioning and management easier. It also enables IT teams to monitor network health and act quickly to increase network capacity as business requirements change.

Stronger network security

A centralized, software-defined network also provides a security advantage for organizations. The SDN controller can monitor traffic and deploy security policies. If the controller deems traffic suspicious, for example, it can reroute or drop the packets. Also, admins can easily implement security policies across the entire network to increase its ability to withstand threats.

Simplified policy changes

With SDN, an administrator can change any network switch's rules when necessary -- prioritizing, deprioritizing or even blocking specific types of packets with a granular level of control and security.

This capability is especially helpful in a cloud computing multi-tenant architecture, as it enables the administrator to manage traffic loads in a flexible and efficient manner. Essentially, this enables administrators to use less expensive commodity switches and have more control over network traffic flows.
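As a minimal sketch of this kind of per-traffic-type policy, the following Python model lets an administrator prioritize, deprioritize or block traffic classes and then shows the effect on a queue of packets. The `PolicyTable` class, the `Action` values and the traffic type labels are assumptions made for illustration and do not reflect any vendor's policy language.

```python
from enum import Enum


class Action(Enum):
    PRIORITIZE = "prioritize"
    NORMAL = "normal"
    DEPRIORITIZE = "deprioritize"
    BLOCK = "block"


# Lower number means the packet is sent earlier.
_ORDER = {Action.PRIORITIZE: 0, Action.NORMAL: 1, Action.DEPRIORITIZE: 2}


class PolicyTable:
    """Central policy: traffic type -> action (hypothetical model)."""

    def __init__(self) -> None:
        self._rules: dict[str, Action] = {}

    def set_rule(self, traffic_type: str, action: Action) -> None:
        self._rules[traffic_type] = action

    def decide(self, traffic_type: str) -> Action:
        # Traffic without an explicit rule is treated normally.
        return self._rules.get(traffic_type, Action.NORMAL)

    def schedule(self, packets: list[tuple[str, str]]) -> list[tuple[str, str]]:
        # Each packet is (traffic_type, payload). Blocked types are
        # dropped; the rest are ordered by priority. sorted() is stable,
        # so packets with equal priority keep their arrival order.
        allowed = [p for p in packets if self.decide(p[0]) is not Action.BLOCK]
        return sorted(allowed, key=lambda p: _ORDER[self.decide(p[0])])
```

Changing a single entry in the table changes how every matching packet is treated, which is the granular, centrally applied control the section describes.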

Reduced hardware footprint and Opex

SDN virtualizes functions and services that were previously carried out by dedicated hardware. Also, administrators can use open source controllers instead of costly vendor-specific devices. This reduces the organization's hardware footprint and lowers operational costs.

Networking innovations

SDN also contributed to the emergence of software-defined wide area network (SD-WAN) technology. SD-WAN employs the virtual overlay aspect of SDN technology. SD-WAN abstracts an organization's connectivity links throughout its WAN, creating a virtual network that can use whichever connection the controller deems fit to send traffic to. By adopting this technology, organizations can programmatically configure their network topology in a WAN. Also, SD-WAN can better handle large amounts of traffic and multiple connectivity types compared to traditional WANs.
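One way to picture how a controller might choose among several WAN connections is the sketch below. The `WanLink` fields, the loss threshold and the lowest-latency selection rule are all assumptions for illustration. Real SD-WAN products apply their own metrics and policies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WanLink:
    name: str
    latency_ms: float
    loss_pct: float
    up: bool = True


def pick_link(links: list[WanLink], max_loss_pct: float = 2.0) -> Optional[WanLink]:
    """Return the lowest-latency link that is up and within the loss budget."""
    usable = [link for link in links if link.up and link.loss_pct <= max_loss_pct]
    # default=None covers the case where no link qualifies.
    return min(usable, key=lambda link: link.latency_ms, default=None)
```

Because the choice is recomputed from current link measurements, traffic can move to another connection when the preferred one goes down or degrades, without any change to the physical topology.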

What are the challenges of SDN?

The main adopters of SDN include service providers, network operators, telecoms, carriers and large companies, such as Meta and Google. However, SDN still presents some challenges.

Security

Security is both a benefit and a concern with SDN technology. The centralized SDN controller presents a single point of failure and, if targeted by an attacker, can prove detrimental to the network.

Controller redundancy costs

Implementing controller redundancy is one way to minimize the risk of a single point of failure. However, this can entail an additional cost.
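The idea of controller redundancy can be sketched as a simple priority-ordered failover. The `ControllerNode` and `ControllerCluster` names and the `healthy` flag are hypothetical. Production controllers typically rely on clustering with state replication and leader election, which this sketch does not attempt to model.

```python
from typing import Optional


class ControllerNode:
    def __init__(self, name: str):
        self.name = name
        self.healthy = True  # would come from health checks in practice


class ControllerCluster:
    """Ordered list of controllers; the first healthy one is active."""

    def __init__(self, nodes: list[ControllerNode]):
        self.nodes = nodes

    def active(self) -> Optional[ControllerNode]:
        for node in self.nodes:
            if node.healthy:
                return node
        return None  # every controller is down: the control plane is lost
```

With two nodes, the loss of the primary no longer takes down the control plane, but each extra node is additional software and hardware to run, which is the cost this section refers to.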

Unclear definition

Another challenge with SDN is that the industry has no established definition of software-defined networking. Different vendors offer various approaches to SDN, ranging from hardware-centric models and virtualization platforms to hyperconverged networking designs and controllerless methods.

Market confusion

Several networking initiatives are often mistaken for SDN, including white box networking, network disaggregation, network automation and programmable networking. While SDN can benefit and work with these technologies and processes, it remains a separate technology.

Slow adoption and costs

SDN technology emerged with a lot of hype around 2011, when it was introduced alongside the OpenFlow protocol. Since then, adoption has been relatively slow, especially among enterprises with smaller networks and fewer resources. Many enterprises cite the cost of SDN deployment as a deterrent.

SDN use cases

Some use cases for software-defined networking include the following:

- DevOps. SDN can facilitate DevOps by automating application updates and deployments. This strategy can include automating IT infrastructure components as the DevOps apps and platforms are deployed.

- Campus networks. Campus networks can be difficult to manage, especially with the ongoing need to unify Wi-Fi and Ethernet networks. SDN controllers can benefit campus networks by offering centralized management and automation, improved security and application-level quality of service across the network.

- Service provider networks. SDN helps service providers simplify and automate the provisioning of their networks for end-to-end network and service management and control.

- Data center security. SDN supports more targeted protection and simplifies firewall administration. Generally, enterprises depend on traditional perimeter firewalls to secure their data centers. However, companies can create a distributed firewall system by adding virtual firewalls to protect the virtual machines. This extra layer of firewall security helps prevent a breach in one virtual machine from jumping to another. SDN's centralized control and automation also enable admins to view, modify and control network activity to reduce the risk of a breach.
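The distributed firewall idea from the data center security use case can be sketched as a default-deny allow-list kept per virtual machine. The `DistributedFirewall` class and the VM names and ports in the usage note are hypothetical and only illustrate the concept.

```python
class DistributedFirewall:
    """Per-VM allow-lists with default deny (hypothetical model)."""

    def __init__(self) -> None:
        # destination VM -> set of (source VM, destination port) allowed
        self._allowed: dict[str, set[tuple[str, int]]] = {}

    def allow(self, dst_vm: str, src_vm: str, port: int) -> None:
        self._allowed.setdefault(dst_vm, set()).add((src_vm, port))

    def permits(self, src_vm: str, dst_vm: str, port: int) -> bool:
        # Anything not explicitly allowed is dropped, so a compromised
        # VM cannot reach neighbors it has no rule for.
        return (src_vm, port) in self._allowed.get(dst_vm, set())
```

For example, after `allow("db", "app", 5432)` only the `app` machine can reach `db` on port 5432. A breached `web` machine is refused even though it sits inside the same perimeter.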

Many data centers and WANs also use SDN to capture its efficiency, cost and agility benefits.

The impact of SDN

Software-defined networking has had a major effect on IT infrastructure management and network design. As SDN technology matures, it changes not only network infrastructure design but also how IT views its role.

SDN architectures can make network control programmable, often using open protocols, such as OpenFlow. Because of this, enterprises can apply software control at the edges of their networks. This gives them programmatic access to network switches and routers instead of relying on the closed, proprietary firmware generally used to configure, manage, secure and optimize network resources.

While SDN deployments are found in every industry, the technology's effect is strongest in technology-related fields and financial services.

SDN is influencing the way telecommunications companies operate. For example, Verizon uses SDN to combine all its existing service edge routers for Ethernet and IP-based services into one platform. The goal is to simplify the edge architecture, enabling Verizon to enhance operational efficiency and flexibility to support new functions and services.

SDN's success in the financial services sector hinges on its ability to connect large numbers of trading participants and to provide the low latency and highly secure network infrastructure needed to power financial markets worldwide.

Nearly all the participants in the financial market depend on legacy networks that can be unpredictable, hard to manage, slow to deliver and vulnerable to attacks. With SDN technology, organizations in the financial services sector can build predictable networks to enable more efficient and effective platforms for financial trading apps.

SDN and SD-WAN

SD-WAN is a technology that distributes network traffic across WANs using SDN concepts to automatically determine the most effective way to route traffic to and from branch offices and data center sites.

SDN and SD-WAN share similarities. For example, they both separate the control plane and data plane, and they both support the implementation of additional virtual network functions.

However, while SDN primarily focuses on the internal operations within a local area network, SD-WAN focuses on connecting an organization's different geographical locations by routing application traffic across the WAN.

Other differences between SDN and SD-WAN include the following:

- Customers can program SDN, while the vendor programs SD-WAN.

- SDN is enabled by network functions virtualization (NFV) within a closed system. SD-WAN, on the other hand, offers application routing that runs virtually or on an SD-WAN appliance.

- SD-WAN uses an app-based routing system on consumer-grade broadband internet. This enables better quality performance and a lower cost per megabyte than Multiprotocol Label Switching (MPLS), which is critical to SDN.

SDN and SD-WAN are two different technologies aimed at accomplishing different business goals. Typically, small and midsize businesses use SDN in their centralized locations, while larger companies that want to establish interconnection between their headquarters and off-premises sites use SD-WAN.
