Funtap - stock.adobe.com

A guide to deploying AI in edge computing environments

Deploying AI at the edge is increasingly popular due to processing speed and other benefits. Consider hosting requirements, latency budget and platform options to get started.

Combining AI with edge computing can be complex. The edge is a place where resource costs must be controlled and other IT optimization initiatives, like cloud computing, are difficult to apply.

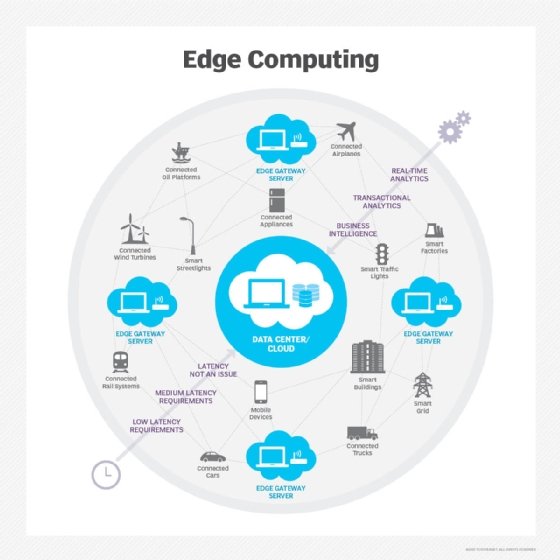

Edge computing is an application deployment model where part or all of an application is hosted near the real-world system it's designed to support. These applications are often described as real-time and IoT because they interact directly with real-world elements such as sensors and effectors, requiring high reliability and low latency.

The edge is usually on premises, near users and processes, often on a small server with limited system software and performance. This local edge is often linked to another application component running in the cloud.

As AI increases in power and complexity, it creates more opportunities for edge deployment scenarios. When deployed in edge computing environments, AI can offer a range of benefits across industries. But proper implementation requires certain capabilities and platform considerations.

Benefits of AI in edge computing

AI deployed in edge computing environments, sometimes referred to as edge AI, offers many benefits.

For edge applications that process events and return commands to effector devices or messages to users, AI at the edge enables better and more flexible decision-making than simple edge software alone could provide. This might include applications that correlate events from single or multiple sources before generating a response, or those requiring complex analysis of event content.

Other benefits of AI in edge computing environments include the following:

- Improved speed.

- Stronger privacy.

- Better application performance.

- Reduced latency and costs.

Considerations for AI in edge computing

When deploying AI in real-time edge computing, organizations must address two important technical constraints: hosting requirements vs. edge system capabilities, and latency budget.

Hosting requirements

Most machine learning tools can run on server configurations suitable for edge deployment, as they don't require banks of GPUs. Increasingly, researchers are also developing less resource-intensive versions of more complex AI tools -- including the large language models popularized by generative AI services -- that can run on local edge servers, provided that the system software is compatible.

If the needed AI features are not available in a form suitable for local edge server deployment, it might be possible to pass the event to the cloud or data center for handling, as long as the application's latency budget can be met.

Latency budget

Latency budget is the maximum time an application can tolerate between receiving an event that triggers processing and responding to the real-world system that generated that event. This budget must cover transmission times and all processing time.

The latency budget can be a soft constraint that delays an activity if not met -- for instance, an application that reads a vehicle RFID tag or manifest barcode and routes the vehicle for unloading. It can also be a hard constraint, where failure to meet the budget could result in catastrophic failure. Examples of the latter include a dry materials dump into a railcar on a moving train or a high-speed traffic merge.

When to deploy AI at the edge

Deciding when to host AI at the edge involves balancing the available compute power at a given point, the round-trip latency between that point and the trigger event source, and the destination of the responses. The greater the latency budget, the more flexibility in placing AI processes, and the more power they can bring to the application.

While some IoT systems process events individually, complex event correlation is useful in other applications. For example, in traffic control, the optimal command depends on events from multiple sources, such as traffic sensors.

Analysis of event contents is also highly valuable in healthcare. For example, AI can analyze blood pressure, pulse and respiration to trigger an alarm if current readings, trends in readings or relationships among different co-occurring health metrics indicate that a patient is in trouble.

Latency budget permitting, it is also possible to access a database stored locally, in the cloud or in a data center. For example, a delivery truck might use RFID to obtain a copy of the loading manifest, the contents of which could then be used to direct the truck to a bay, dispatch workers to unload the truck and generate instructions for handling the cargo.

Even when AI is not hosted in the cloud or data center, edge applications often generate traditional transactions from events, along with local processing and turnaround. Organizations need to consider the relationship between the edge host, AI and transaction-oriented processing when planning for edge AI.

Choosing an edge AI platform

The primary consideration when selecting an edge AI platform is how it is integrated and managed. Where edge AI is only loosely linked with the cloud or data center, special-purpose platforms like Nvidia EGX are optimized for both low latency and AI. For edge AI tightly coupled with other application components in the cloud or data center, a real-time Linux variant is easier to integrate and manage.

In some cases, where a public cloud provider offers an edge component -- such as AWS IoT Greengrass or Microsoft's Azure IoT Edge -- it's possible to divide AI features among the edge, cloud and data center. This approach can streamline AI tool selection for edge hosting, enabling organizations to simply select the AI tool included in their edge package when available.

Most edge AI hosting is likely to use simpler machine learning models, which are less resource-intensive and can be trained to handle most event processing. AI in the form of deep learning requires more hosting power, but might still be practical for edge server hosting, depending on model complexity. LLMs and other generative AI models are becoming more distributable to the edge, but currently, full implementations are likely to require cloud or data center hosting.

Finally, consider the management of edge resources used with AI. While AI itself does not necessitate different management practices compared with other forms of edge computing, selecting a specialized platform for the edge and AI might require different management practices and tools.

Tom Nolle is founder and principal analyst at Andover Intel, a consulting and analysis firm that looks at evolving technologies and applications first from the perspective of the buyer and the buyer's needs. By background, Nolle is a programmer, software architect, and manager of software and network products, and he has provided consulting services and technology analysis for decades.

[61] copy.jpeg)