
Managing drift in AI models and data

The training data and algorithms used to build AI models have a shelf life. Detecting and correcting model drift ensures that these systems stay accurate, relevant and useful.

AI models never remain static; they inevitably drift over time. This makes continuous output monitoring and model drift mitigation vital to any ongoing AI strategy.

AI systems are built from complex combinations of algorithms that learn from a comprehensive set of training data and then apply what they learned to new operational data. That relationship between training and production data is crucial: training data and proper training techniques enable AI models to learn patterns and take informed actions amid an ever-rising flood of real-time business information.

Unfortunately, real-world data and operating conditions can change unexpectedly or gradually over years, months or even days. Training data, the foundation of AI decision-making, doesn't autonomously adapt to keep pace with these changes. Over time, growing discrepancies between production conditions and training data can impair a model's accuracy and overall performance. This phenomenon is known as model drift.

What is AI model drift?

AI model drift occurs when the real-world data a model encounters deviates from the data its engineers trained it to recognize or handle. As a result of this discrepancy, the model gradually loses its ability to accurately spot trends, identify issues or make decisions, as it continues to apply outdated patterns it learned during initial training.

Consider an email filtering model trained to identify spam by flagging certain words or phrases commonly found in such emails. Over time, language evolves, and spammers adopt new tactics to attract readers' attention. This could include new buzzwords, phrases, references and tactics like spear phishing that the model wasn't trained to recognize.

Eventually, new elements might replace the ones the model was trained to recognize. Because the model was never trained to handle these new data elements, its performance degrades. The result is model drift that reduces, or even erases, the model's value to the business. To combat this, AI and machine learning teams can update the training data and possibly integrate adaptive learning mechanisms that adjust to new spamming behaviors.

Drift is correctable and does no permanent damage to the model itself. Its severity depends on how far production data deviates from training data. When drift occurs, it compromises a model's ability to deliver accurate, predictable outputs, thereby reducing its value. If production data and variables return to expected parameters, the model's behavior and output are restored. Similarly, retraining the model on new or updated data, or adjusting the model to reflect changing relationships between variables, can often remediate drift.

Causes of AI model drift

There are two principal causes of model drift:

- Data drift occurs when there is a change in the distribution, scope or nature of the incoming production data over time. For example, a model that predicts trends for a retail business might have been trained on typical shipping activity data from before 2020. During the COVID-19 pandemic, shipping activity escalated significantly, leading to an influx of production data that differed substantially from the training data. Data drift is also called feature drift, where a feature is an individual input variable the model uses.

- Functional drift occurs when the underlying behaviors or relationships among variables change, making the model's learned parameters less suited to the operational environment. For example, a financial services provider's AI model might experience functional drift if shifts in the economy alter how loan defaults relate to credit scores. Functional drift is sometimes called concept drift because what changes is the concept the model learned: how its inputs relate to the outcome it predicts.

AI data drift vs. AI model drift

You might have heard the terms data drift and model drift used interchangeably. While the two are almost inseparable in discussions of drift, there is a vital difference between them.

- Data drift occurs when input data differs from training data, leading to deviations from established input assumptions. For example, imagine a lending model trained in 2022 making decisions on loan applications in 2026, when income and debt patterns are significantly different. Data drift is best detected by comparing training and real-time data and can usually be fixed by retraining the model.

- Model drift occurs when the logical relationship between inputs and outputs shifts, making the model's decision logic outdated or obsolete. For example, a fraud detection platform becomes less effective because malicious actors start using new tactics. Model drift is typically detected by monitoring performance metrics, such as accuracy, over time and is often remediated through retraining or model algorithm updates.
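To make the performance-monitoring approach concrete, here is a minimal Python sketch that tracks accuracy over a sliding window of recent labeled predictions and flags possible model drift when accuracy falls well below the level measured at deployment. The class name `RollingAccuracyMonitor`, the window size and the 5-point tolerance are illustrative assumptions, not features of any particular monitoring product.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Hypothetical monitor: tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, baseline_accuracy, window=100, tolerance=0.05):
        self.baseline_accuracy = baseline_accuracy  # accuracy measured on validation data at deployment
        self.tolerance = tolerance                  # allowed drop before drift is suspected (assumed value)
        self.outcomes = deque(maxlen=window)        # 1 = correct prediction, 0 = incorrect

    def record(self, predicted, actual):
        """Store an outcome once the true label (for example, confirmed fraud) becomes known."""
        self.outcomes.append(1 if predicted == actual else 0)

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else float("nan")

    def drift_suspected(self):
        # Wait for a full window so a handful of early errors cannot trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.baseline_accuracy - self.tolerance


monitor = RollingAccuracyMonitor(baseline_accuracy=0.90, window=50)
for i in range(50):  # 45 of 50 correct: in line with the baseline
    monitor.record(1, 0 if i % 10 == 0 else 1)
print(monitor.accuracy(), monitor.drift_suspected())  # prints 0.9 False
for i in range(50):  # 35 of 50 correct: new tactics the model never saw
    monitor.record(1, 0 if i % 10 < 3 else 1)
print(monitor.accuracy(), monitor.drift_suspected())  # prints 0.7 True
```

A fixed tolerance is the simplest possible trigger. Because accuracy over a small window is noisy, teams might prefer larger windows or a statistical test on the drop before raising an alert.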

Other factors can also result in forms of model drift, undermining reliability and accuracy:

- Poor data quality. Incorrect measurements, missing values, lack of normalization and other data errors reduce model effectiveness. For example, feeding a sales prediction model incorrect transaction amounts will not produce reliable results. Organizations face enormous pressure to make model data "AI-ready," which demands careful preprocessing, often in real time. Data science specialists can help determine which preprocessing steps and feature enhancements will best optimize and maintain data quality.

- Upstream data changes. Training and production data must pass through a preprocessing pipeline before reaching machine learning models and AI systems. Obvious changes to that pipeline can clearly affect data quality, but subtler ones, such as a change in how numbers are rounded, can also alter how a model calculates or processes a data feature, resulting in drift.

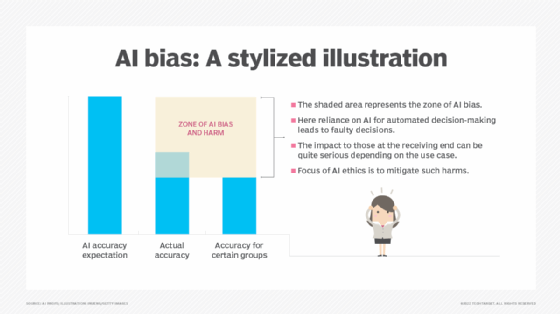

- Training data bias. Data bias occurs when the distribution of data in a set is skewed or fails to represent the true distribution. If engineers train a model using biased data, it will perform poorly or deliver undesirable outcomes in production when it encounters data in real-world environments that differs from its training set.

- External events. Models used for tasks such as user experience or sentiment analysis can be inundated with unexpected data from external sources, such as political events, economic changes and natural disasters. For example, a regional war might affect user sentiment analysis for a product or service, causing previously positive indicators to suddenly decline. This form of data drift sometimes resolves quickly, but it can also persist for an extended period, causing a prolonged effect on the model.
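The first two factors can be illustrated with a short Python sketch, assuming a batch of transaction amounts arrives as a plain list. `validate_transactions` applies two hypothetical quality rules (no missing values, no negative amounts), and the two rounding functions show how an upstream switch from Python's built-in round-half-to-even behavior to half-up rounding changes a feature's values and mean even though the raw data never changed.

```python
from decimal import Decimal, ROUND_HALF_UP


def validate_transactions(amounts):
    """Return data quality problems found in a batch of transaction amounts.

    The rules here (no missing values, no negative amounts) are illustrative assumptions.
    """
    problems = []
    for row, amount in enumerate(amounts):
        if amount is None:
            problems.append(f"row {row}: missing amount")
        elif amount < 0:
            problems.append(f"row {row}: negative amount {amount}")
    return problems


def round_half_even(values):
    # Python's built-in round() rounds ties to the nearest even integer.
    return [round(v) for v in values]


def round_half_up(values):
    # A revised pipeline step that rounds ties away from zero instead.
    return [int(Decimal(str(v)).quantize(Decimal("1"), rounding=ROUND_HALF_UP)) for v in values]


print(validate_transactions([19.99, None, -5.0, 42.5]))
# prints ['row 1: missing amount', 'row 2: negative amount -5.0']

feature = [0.5, 1.5, 2.5, 3.5]
old, new = round_half_even(feature), round_half_up(feature)
print(old, sum(old) / len(old))  # prints [0, 2, 2, 4] 2.0
print(new, sum(new) / len(new))  # prints [1, 2, 3, 4] 2.5
```

Neither rounding rule is wrong on its own; the drift comes from the model having learned one behavior and then receiving the other in production.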

How to monitor and detect AI model drift

Detecting model drift can be tricky, partly because businesses that have already invested time in building and training a model might be reluctant to spend more time checking its results. However, business environments and data change over time, putting any system at risk for model drift. This, in turn, can inhibit accurate decision-making within the organization and reduce the ROI of AI initiatives.

Detecting model drift requires a comprehensive suite of methods:

- Direct comparison. The most straightforward method for detecting model drift is to compare predicted and actual values. For example, if a model forecasts revenue for the upcoming quarter, regularly comparing the predicted revenue to the actual revenue for that quarter will make drift evident if the two results diverge over time.

- Model performance monitoring. Numerous metrics and tools can help measure model performance, including confusion matrices, F1 score, and gain and lift charts. Statistical tests can also reveal changes in data or outputs: the two-sample Kolmogorov-Smirnov test measures the largest gap between two cumulative distributions, such as the training-time and production values of a feature; a Z-score can flag a significant shift in a variable's mean; and the chi-squared test can detect changes in the distribution of a categorical variable. Model engineers should select the metrics that are most appropriate for the model, its intended purpose and the characteristic under review.

- Data and feature assessments. Data and the features used in models change over time. Model engineers should periodically assess the data delivered to the model, review the training data used to prepare it, and reevaluate the algorithms and assumptions used to construct it. This can help teams determine whether data quality has changed and whether the existing features still have predictive power.

- Comparative models. When two or more similar models are available, it might be worth comparing their outputs to understand their variability and sensitivity to different data sets. For example, teams could develop parallel models that use slightly different training or production data, then compare their outputs to help identify drift in one or more models.
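The statistical checks named above can be sketched with Python's standard library alone. This is a minimal illustration, not a replacement for a statistics package: `ks_statistic` computes the two-sample Kolmogorov-Smirnov statistic, `ks_critical_value` uses the common large-sample approximation for its rejection threshold, `mean_shift_z` computes a Z-score for a shift in a feature's mean, and `chi_squared_statistic` compares category counts against training proportions. The function names and the sample data are hypothetical.

```python
import math
import statistics
from bisect import bisect_right


def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    gap = 0.0
    for x in ref + cur:  # the empirical CDFs only change at observed values
        f_ref = bisect_right(ref, x) / len(ref)
        f_cur = bisect_right(cur, x) / len(cur)
        gap = max(gap, abs(f_ref - f_cur))
    return gap


def ks_critical_value(n, m, alpha=0.05):
    """Large-sample approximation of the KS rejection threshold at significance level alpha."""
    return math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))


def mean_shift_z(reference, current):
    """Z-score of the current sample mean, using the reference mean and standard deviation."""
    mu, sigma = statistics.fmean(reference), statistics.stdev(reference)
    return (statistics.fmean(current) - mu) / (sigma / math.sqrt(len(current)))


def chi_squared_statistic(reference_counts, current_counts):
    """Pearson chi-squared statistic for a categorical feature; returns (statistic, degrees of freedom).

    Assumes every category has a nonzero count in the reference (training) data.
    """
    unseen = set(current_counts) - set(reference_counts)
    if unseen:
        raise ValueError(f"categories not seen in training data: {sorted(unseen)}")
    ref_total, cur_total = sum(reference_counts.values()), sum(current_counts.values())
    stat = 0.0
    for category, ref_count in reference_counts.items():
        expected = cur_total * ref_count / ref_total  # count expected if proportions were unchanged
        observed = current_counts.get(category, 0)
        stat += (observed - expected) ** 2 / expected
    return stat, len(reference_counts) - 1


train_values = list(range(100))     # a numeric feature as seen in training
prod_values = list(range(30, 130))  # the same feature in production, shifted upward
d = ks_statistic(train_values, prod_values)
print(round(d, 3), d > ks_critical_value(100, 100))  # prints 0.3 True
print(round(mean_shift_z(train_values, prod_values), 1))  # prints 10.3

stat, dof = chi_squared_statistic({"card": 600, "bank": 300, "wallet": 100},
                                  {"card": 400, "bank": 300, "wallet": 300})
print(round(stat, 1), dof)  # prints 466.7 2; the 5% critical value for 2 degrees of freedom is about 5.99
```

Each check compares a production sample against a training-time reference. Which one fits depends on whether the feature is numeric or categorical and on what kind of change matters for the model.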

Many tools are available to help detect model and data drift. For example, Arize AI, Fiddler AI, Evidently AI and Google's Vertex AI Model Monitoring can track changes to data and detect drift over time. Regardless of the method or tools used, drift detection should be treated as a regular, or even continuous, process to keep outputs accurate over time.

How to correct AI model drift

To correct model drift, businesses can employ machine learning workflows that include a recurring process of data QA, drift monitoring and mitigation. This includes establishing strong data governance practices, proactively designing models that can adapt over time and regularly auditing them for accuracy and reliability.
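One way to picture that recurring workflow is as a decision made on every monitoring cycle. The Python sketch below assumes that a single numeric drift score (for example, a Kolmogorov-Smirnov statistic) and a list of data QA failures are already available; the `RetrainingPolicy` name, the 90-day interval and the 0.2 threshold are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RetrainingPolicy:
    max_model_age: timedelta  # scheduled retraining interval (hypothetical)
    drift_threshold: float    # drift score above which retraining is triggered early (hypothetical)


def decide_action(policy, last_trained, today, drift_score, data_issues):
    """Return the next step for one monitoring cycle: data QA first, then drift, then schedule."""
    if data_issues:
        # Retraining on data that failed QA would bake the errors into the model.
        return "fix data pipeline"
    if drift_score > policy.drift_threshold:
        return "retrain: drift detected"
    if today - last_trained >= policy.max_model_age:
        return "retrain: scheduled"
    return "no action"


policy = RetrainingPolicy(max_model_age=timedelta(days=90), drift_threshold=0.2)
trained = date(2025, 1, 1)
print(decide_action(policy, trained, date(2025, 2, 1), 0.05, []))   # prints no action
print(decide_action(policy, trained, date(2025, 2, 1), 0.30, []))   # prints retrain: drift detected
print(decide_action(policy, trained, date(2025, 4, 15), 0.05, []))  # prints retrain: scheduled
print(decide_action(policy, trained, date(2025, 2, 1), 0.30, ["row 7: missing amount"]))  # prints fix data pipeline
```

Checking data quality before drift keeps the workflow from retraining a model on data that failed QA, while the scheduled branch covers periodic retraining even when no drift is detected.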

Specific drift mitigation strategies include the following:

- Continuous monitoring. Monitoring and observability tools are key for tracking input data and calculating its distributions. Comparing those distributions with the ones in the training data can alert business leaders to potential drift and trigger remediation strategies.

- Data pipelines and feature engineering. Consider any changes across the entire data pipeline, which often involve data preprocessing and feature engineering. Remediating drift can involve adjusting the data pipeline and adding data features or even synthetic data to refine the model's performance.

- Model retraining. Periodic retraining is one of the most straightforward means of mitigating model drift, and it can be performed as needed, whether at regular intervals or when triggered by detected drift. Retraining on fresh, accurate, complete and valid data enables the model to evolve in response to new data and features.

- Adaptive techniques. Traditional machine learning models rely on initial training plus retraining as needed. By contrast, generative and other advanced model designs can implement feedback loops that enable the model to learn from and adapt to incoming data. One such technique uses user scoring or other human feedback, enabling the model to dynamically tailor its decision-making to produce more desirable outputs. Engineers can also integrate incremental or continuous learning techniques into the model for regular training updates.

- Multiple models. Another way to guard against model drift is to employ several related models simultaneously, each capturing different aspects of the problem or data. Evaluating the same issue from different perspectives establishes a more holistic approach to analytics and decision-making. Using multiple models can also serve as a safeguard against the failure of the entire system if one model starts to drift.
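The incremental learning idea in the adaptive techniques item can be sketched with a one-feature linear model that takes a stochastic gradient descent step on every new observation. This is a deliberately simple stand-in, not how generative models or human-feedback loops are actually trained; the class name, learning rate and synthetic data stream are assumptions. When the relationship between input and output shifts partway through the stream, the model's coefficients follow it without a separate retraining job.

```python
class OnlineLinearModel:
    """One-feature linear model updated one observation at a time with stochastic gradient descent."""

    def __init__(self, learning_rate=0.05):
        self.w = 0.0
        self.b = 0.0
        self.learning_rate = learning_rate  # assumed value; controls how fast the model adapts

    def predict(self, x):
        return self.w * x + self.b

    def update(self, x, y):
        # Gradient step on the squared error 0.5 * (prediction - y) ** 2.
        error = self.predict(x) - y
        self.w -= self.learning_rate * error * x
        self.b -= self.learning_rate * error


def stream(slope, intercept, steps):
    """Yield noise-free (x, y) pairs from y = slope * x + intercept, cycling x over [0, 1]."""
    xs = [0.0, 0.25, 0.5, 0.75, 1.0]
    for i in range(steps):
        x = xs[i % len(xs)]
        yield x, slope * x + intercept


model = OnlineLinearModel()
for x, y in stream(slope=2.0, intercept=1.0, steps=5000):  # original relationship
    model.update(x, y)
print(round(model.w, 2), round(model.b, 2))  # prints 2.0 1.0
for x, y in stream(slope=3.0, intercept=1.0, steps=5000):  # relationship shifts (functional drift)
    model.update(x, y)
print(round(model.w, 2), round(model.b, 2))  # prints 3.0 1.0
```

The learning rate sets the trade-off: larger values adapt faster to functional drift but react more strongly to noise in individual observations.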

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 30 years of technical writing experience in the PC and technology industry.