disambiguation

What is disambiguation?

Disambiguation is the process of determining a word's meaning -- or sense -- within its specific context. A word, whether spoken or written, often has multiple senses, and it is only through its context that the meaning becomes clear.

For example, if a local news website displays the headline "Bank collapses," readers might try to withdraw money from their savings accounts, when the article could be referring to a riverbank. If instead the headline reads "Bank collapses on the Stony River," readers will immediately know that bank refers to the water's edge. They automatically disambiguate the word because of its proximity to "river," which provides the context needed to understand how the word bank is being used.

What is word sense disambiguation?

Most of the time, disambiguation is an unconscious process that people do naturally -- if they have the social and cultural foundation necessary to understand a word's variations. In computing, however, disambiguation is a more complex process because that foundation can no longer be assumed.

Disambiguation, when applied to computing, is typically referred to as word sense disambiguation (WSD). Programs that carry out WSD determine each word's possible senses and choose the sense that best fits the surrounding context, using various techniques to make a decision.

For programs that perform natural language processing (NLP), such as medical transcription applications that convert spoken words to written language or assistive technologies that translate typed text into artificial speech, words that have multiple meanings can be challenging, especially if the context in which they're used does not easily clarify their meaning.

Consider the word "tick." When a WSD system encounters this word in a sentence, it must choose from a list of possible senses. WordNet identifies the following senses for the term tick in its noun and verb forms:

- (n) tick, ticking (a metallic tapping sound) "he counted the ticks of the clock."

- (n) tick (any of two families of small parasitic arachnids with barbed proboscis; feed on blood of warm-blooded animals).

- (n) check mark, check, tick (a mark indicating that something has been noted or completed etc.) "as he called the role he put a check mark by each student's name."

- (n) tick (a light mattress).

- (v) click, tick (make a clicking or ticking sound) "The clock ticked away."

- (v) tick, ticktock, ticktack, beat (make a sound like a clock or a timer) "the clocks were ticking"; "the grandfather clock beat midnight."

- (v) tick, retick (sew) "tick a mattress."

- (v) check, check off, mark, mark off, tick off, tick (put a check mark on or near or next to) "Please check each name on the list"; "tick off the items"; "mark off the units."

Most WSD systems include a separate module for determining a word's part of speech, such as noun or verb, which helps to narrow down the possible senses. However, even if the system knows that tick is being used as a noun, it must still contend with multiple meanings, so it must also take the context into account.

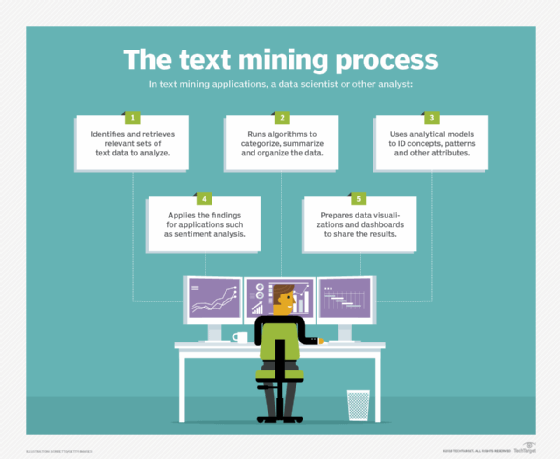

For example, a text mining application that includes a WSD module might encounter the passage: "A tick can be quite annoying, but it is more than just an irritation. It can transmit Lyme disease to humans." If the application looks only at the word tick, it does not know whether the word refers to a type of mattress, clock sound, check mark or parasitic arachnid. The first sentence does not provide enough context to clarify its meaning. However, the mention of "Lyme disease" in the second sentence makes it very likely that tick refers to the parasite.

Although WSD is not a simple operation, it is essential for language processing. Various types of programs -- such as language translators, information retrieval systems, speech recognition software, text-to-speech converters and other language processing systems -- commonly incorporate some type of WSD component. Disambiguation is also crucial in the analysis of unstructured data, such as emails, documents, instant messages or social networking content.

Approaches to disambiguation

Researchers have spent decades refining and improving WSD to achieve the greatest accuracy. Two popular approaches are the shallow method and the deep method.

The shallow method uses nearby words to determine a term's intended meaning, rather than trying to understand the text as a whole. For example, if the word "manufacturing" appears in close proximity to the word "plant," then plant probably refers to some type of facility, rather than to a primrose, an actor sitting in the audience, or a defendant claiming false evidence. The shallow method is commonly used for disambiguation because it is fairly accurate and is easier to implement than the deep method. However, it is not always reliable, especially if a document contains multiple instances of a word that have different meanings.

The deep method goes further into the meanings of the words, pulling them from large lexical data sources, such as dictionaries or thesauruses, to determine all their possible senses. Although this is a more precise method than the shallow method, it is very difficult to implement outside specific domains. Creating a database comprehensive enough to achieve the high degree of accuracy needed to support cross-domain WSD is a significant challenge. Yet, when a smaller, less comprehensive database is used, the results are usually less accurate.

Approaches to disambiguation can also be classified by their source of knowledge and level of supervision. The following four groups are the most common categories:

- Knowledge-based. Also called dictionary-based, this method relies solely on dictionaries, thesauruses or knowledge-based resources such as WordNet or the Unified Medical Language System. This approach does not use any type of corpus. One of the most common approaches to knowledge-based WSD is the Lesk algorithm, which is built on the premise that words near each other are somehow related and their meaning can be extracted through a knowledge base.

- Supervised. The supervised approach assumes that a word's context provides enough information to determine its meaning. It relies on sense-annotated corpora to distinguish word senses and generate labeled training data. The better the training data, the more effective the algorithms that use the data. This means that the algorithms are only as good as the training data itself. However, creating the corpora used to generate the training data can be time-consuming and expensive.

- Unsupervised. This approach does not use labeled training data. It instead uses non-annotated raw data and comparable measures to induce senses from the text. This method assumes that similar senses occur in similar context, based on the formation of word clusters. The unsupervised approach is typically less accurate than the supervised approach, but it avoids the knowledge acquisition bottleneck and large investment in training data, making it less expensive and time-consuming.

- Semi-supervised. Because of the challenges that come with the supervised and unsupervised approaches, many WSD algorithms take a semi-supervised approach, which uses both labeled and unlabeled data for training. This approach requires less corpora and training data, providing a balance between the other two options.

Today's WSD programs rely heavily on sophisticated AI algorithms to search a word's surrounding text or an entire document. The algorithms find contextual terms that help determine the intended meaning of a particular word. Some algorithms rely on the idea that most words tend to have one meaning in a given document, which is often a reasonable assumption in sense identity.

Learn about different types of AI algorithms and how they work and see how natural language processing augments analytics and data use.