GDPR, CCPA, cloud drive security management tool makeovers

As data protection and privacy laws like GDPR and CCPA take hold, data managers refine governance practices, while vendors enhance traditional big data security tools.

In the wake of numerous data breaches, government regulations and mountains of data pouring in from multiple sources and channels, the integrity, protection and privacy of that data weigh on the minds of data managers and scientists mining, prepping and using data for analysis and operations.

As a result, companies are taking big data security management measures to protect their data, ensure that the data is uncorrupted and trustworthy, and comply with laws like GDPR and the California Consumer Privacy Act (CCPA). Yet many organizations have struggled to get these efforts off the ground, sometimes acting only when it's too late and incurring heavy penalties and costs from data breaches and noncompliance.

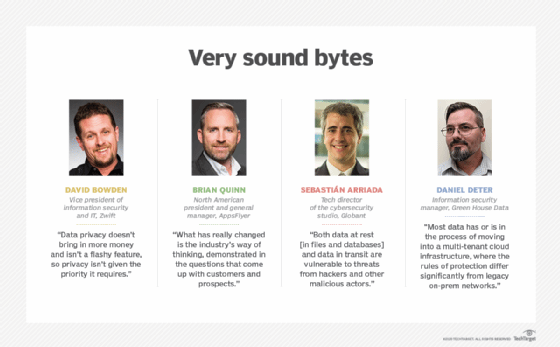

"Data privacy doesn't bring in more money and isn't a flashy feature, so privacy isn't given the priority it requires," said David Bowden, vice president of information security, data privacy, compliance and IT at Zwift, an online cycling and running physical training program. Bowden, an advisory group member of ISACA, an international professional association focused on IT governance, first discovered the importance of data security while working for a game company and is applying that experience to protect the privacy of Zwift fitness app users.

Data protection laws date as far back as 1970 but, more recently, have been undergoing revisions and updates to embrace the internet and other data sharing systems. "Privacy and data protection laws, policy and process have evolved tremendously in the last few years," Bowden acknowledged, "primarily due to the education of the public regarding the abuse and misuse of this data and the passing and enforcement of more comprehensive laws to protect individuals."

By necessity, companies are taking a more proactive stance to identify the security risks associated with new applications and services. "What has really changed is the industry's way of thinking, demonstrated in the questions that come up with customers and prospects," said Brian Quinn, North American president and general manager of San Francisco-based marketing analytics platform maker AppsFlyer. "Previously, it was mainly the enterprise-grade organizations that were taking interest in our approach to data privacy, but now we're having these conversations with small and midmarket organizations as well."

Security and privacy are moving targets

A wide range of technologies are available to help data managers protect and properly manage data. Traditional information security tools include endpoint protection, data exfiltration identification, network analysis, monitoring and logging. Other tools are very specific to data privacy, such as data identification, classification and governance.

Initially, there was a big push for these types of tools because of GDPR, but many users discovered that a more thoughtful approach was required as applications scaled in size and number. While many of these tools worked in principle, Bowden explained, they didn't address the scale needed for systems with 150-million-plus data subjects and petabytes of data. In addition, they were based on interpretations of the GDPR and CCPA laws, since there were no set precedents to indicate what privacy professionals needed.

Now that companies have experience with GDPR, CCPA and related data privacy laws, Bowden said he believes that vendors are getting a better perspective on what product features and capabilities are required to comply with data protection and privacy regulations.

Anonymize to privatize

Michael Tegtmeier


Anonymization is an emerging strategy for protecting data privacy in the event of a security breach, said Michael Tegtmeier, CEO and founder of Berlin wind turbine analytics company Turbit Systems. Using this technique, data is scrambled in such a way that it can still provide useful analytics or AI models but can't be easily reverse engineered to identify individuals.
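As a rough illustration of the idea, the sketch below replaces a direct identifier with a keyed hash and coarsens an exact age into a range, while leaving the analytic value untouched. It is not any vendor's method: the field names, the `PSEUDONYM_KEY` value and the helper functions are all assumptions made for the example. Strictly speaking, this is pseudonymization plus generalization rather than full anonymization, because anyone holding the key can recompute the tokens.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would live in a key manager, not in code.
PSEUDONYM_KEY = b"example-key-not-for-production"


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace an identifier with a keyed token that cannot be reversed without the key."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def generalize_age(age: int, bucket: int = 10) -> str:
    """Coarsen an exact age into a range such as '30-39'."""
    low = (age // bucket) * bucket
    return f"{low}-{low + bucket - 1}"


def scrub(record: dict) -> dict:
    """Return a new record that keeps the metric but drops direct identifiers."""
    return {
        "user": pseudonymize(record["email"]),
        "age_range": generalize_age(record["age"]),
        "distance_km": record["distance_km"],
    }


# Hypothetical input record, used only to show the transformation.
raw = {"email": "rider@example.com", "age": 34, "distance_km": 42.5}
clean = scrub(raw)
```

The same input always maps to the same token, so records can still be joined and counted per user without the email address ever appearing in the analytics data set.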

Some companies with very sensitive data are even exploring different techniques for encrypted computing and confidential computing that develop analytics and models from encrypted data. Another technique ensures that the resulting models are stripped of sensitive data.

Tegtmeier said his company uses data collected from turbine customers to train models to learn the average behavior of each turbine type, while ensuring that identifying data is stripped away from the models. As a result, the models provide customer insights without disclosing sensitive data, such as a specific turbine site's earnings.
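One simple way to picture this is to aggregate readings by turbine type and discard the site identifier before anything is shared. The sketch below is an assumption-laden illustration, not a description of Turbit's system: the record fields, the sample values and the `min_sites` threshold are hypothetical. The threshold is there because an average built from a single site would effectively reveal that site's figures.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical readings; field names and values are illustrative only.
readings = [
    {"site_id": "site-a", "turbine_type": "T100", "power_kw": 1500.0},
    {"site_id": "site-b", "turbine_type": "T100", "power_kw": 1700.0},
    {"site_id": "site-c", "turbine_type": "T200", "power_kw": 2400.0},
]


def average_by_type(rows, min_sites=2):
    """Average power per turbine type, omitting types seen at too few sites."""
    power = defaultdict(list)
    sites = defaultdict(set)
    for row in rows:
        power[row["turbine_type"]].append(row["power_kw"])
        sites[row["turbine_type"]].add(row["site_id"])
    # The result is keyed by turbine type only; no site identifier is carried over.
    return {
        turbine_type: mean(values)
        for turbine_type, values in power.items()
        if len(sites[turbine_type]) >= min_sites
    }


baseline = average_by_type(readings)
```

Here the `T200` type is left out of `baseline` because only one site contributed to it, while `T100` is reported as an average across two sites.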

Protecting data at rest and in transit

"Both data at rest [in files and databases] and data in transit are vulnerable to threats from hackers and other malicious actors," said Sebastián Arriada, tech director of the cybersecurity studio at Globant, an IT services and software development company. In devising a big data security approach, he described four main categories of tools for protecting data at rest and in transit that data managers should consider:

- Data discovery tools. For mapping data, they're usually run periodically to find situations where data has been stored in or transmitted to an unexpected location.

- Data masking tools. They hide the original data with modified content before it's stored. Different scenarios may require data masking, such as protecting data from third-party vendors, preventing operator errors and avoiding sensitive data in processes where it's not required.

- Data encryption tools. They encrypt data at any level of the TCP/IP stack. Encryption in transit is almost a default option for the web, where more than 90% of traffic is encrypted. Cloud providers offer encryption of data at rest by default to increase the level of security in those environments.

- Data leakage detection tools. They help to detect and alert teams to potential exfiltration activity. Standard network devices can be used to detect data leakage, firewalls can be used to identify connections to blacklisted IPs and proxies can detect suspicious outbound connections.
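Three of the categories above (discovery, masking and leakage detection) can be pictured with a few lines of standard-library Python. This is a minimal sketch under stated assumptions, not how any commercial tool works: the email pattern is deliberately simple, the masking rule is arbitrary, and the blocklist is a hypothetical one built from an address range reserved for documentation.

```python
import ipaddress
import re

# Simplified email pattern for illustration; real discovery tools use many detectors.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical blocklist; 203.0.113.0/24 is a range reserved for documentation.
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def discover_emails(text: str) -> list[str]:
    """Discovery: report email addresses found in free text."""
    return EMAIL_RE.findall(text)


def mask_email(match: re.Match) -> str:
    """Masking rule: keep the first character and the domain, hide the rest."""
    local, domain = match.group(0).split("@", 1)
    return f"{local[0]}***@{domain}"


def mask_text(text: str) -> str:
    """Masking: rewrite every email address in the text before it is stored."""
    return EMAIL_RE.sub(mask_email, text)


def is_blocked(destination_ip: str) -> bool:
    """Leakage detection: flag outbound connections to blocklisted networks."""
    address = ipaddress.ip_address(destination_ip)
    return any(address in network for network in BLOCKED_NETWORKS)


log_line = "Export requested by jane.doe@example.com for account 1182"
found = discover_emails(log_line)
masked = mask_text(log_line)
suspicious = is_blocked("203.0.113.7")
```

In a real deployment, checks like these would run on a schedule or inline in a pipeline, and a hit would raise an alert rather than just set a variable.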

Many companies are starting to adopt DevSecOps practices that weave security into software development. Arriada recommended taking data protection a step further by making compliance with data regulations and requirements an ongoing part of the software development lifecycle. His recommendations include identifying information affected by data regulation laws, aligning architecture designs to data requirements, reducing the attack surface with data minimization and anonymization techniques, and implementing external services to validate GDPR and CCPA alignment with cloud providers.
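Two of these recommendations, identifying regulated information and minimizing what is stored, lend themselves to small checks that could run alongside ordinary tests. The sketch below is only an illustration: the `REGULATED_FIELDS` set, the schema and the event record are hypothetical, and deciding which fields actually fall under GDPR or CCPA is a legal judgment that code cannot make.

```python
# Hypothetical set of field names a team has tagged as regulated personal data.
REGULATED_FIELDS = {"email", "full_name", "ip_address", "date_of_birth"}


def regulated_fields(schema):
    """Identify which fields in a schema the team has tagged as regulated."""
    return sorted(set(schema) & REGULATED_FIELDS)


def minimize(record, allowed):
    """Data minimization: keep only the fields a process actually needs."""
    return {key: value for key, value in record.items() if key in allowed}


# Hypothetical schema and event, used only to exercise the two helpers.
event_schema = ["event_id", "email", "ip_address", "page", "timestamp"]
flagged = regulated_fields(event_schema)

event = {
    "event_id": 17,
    "email": "jane.doe@example.com",
    "ip_address": "198.51.100.7",
    "page": "/pricing",
    "timestamp": "2020-06-01T12:00:00Z",
}
stored = minimize(event, allowed={"event_id", "page", "timestamp"})
```

A build step could fail when `flagged` contains fields that have no documented purpose, which keeps the question in front of developers on every change.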

Data protection and the COVID-19 effect

Bill Miller


The COVID-19 pandemic has dramatically changed the work environment and how data is managed, creating new data protection challenges for businesses. "As large groups of employees and contractors transition from a secure office to a remote work-from-home environment, data protection is key," said Bill Miller, senior vice president and CIO at NetApp, a hybrid cloud data services and data management provider based in Sunnyvale, Calif.

When individual employees move company data to retail or consumer-grade cloud locations to make sharing information easier, data can be compromised or exfiltrated. In addition to having the right data security tools in place, Miller noted, "[i]t's essential to tell them why it's so important that they don't [move data] on their own and undermine the built-in security of approved tools."

Employees working remotely but still inside the company's hermetically sealed environment can access enterprise apps via a secured desktop running in the cloud without downloading data locally.

Balancing cloud migration with on-premises security

Data managers have had to shift their thinking from protecting data within their environment to protecting data across a wide range of cloud services that shape a company's digital ecosystem, said Daniel Deter, information security manager at Green House Data, a managed services provider based in Cheyenne, Wyo. As a result, the focus has shifted from managing data egress at external boundaries to tools and platforms used during the data lifecycle.

Security capabilities are increasingly built into productivity tools that are offered as SaaS, such as Office 365 and Google Docs. "Most data has or is in the process of moving into a multi-tenant cloud infrastructure, where the rules of protection differ significantly from legacy on-prem networks," Deter noted. Tool vendors are expanding the availability of built-in mechanisms to protect data, including policy-based enforcement of encryption, data loss prevention and identity management controls.

Yet cutting-edge technology doesn't necessarily coincide with data protection capabilities. Therefore, companies migrating to the cloud need to balance cloud adoption with their private on-premises infrastructures.

Green House Data, Deter explained, took a measured data security approach when migrating its internal applications to the cloud. Much of the data was migrated to SaaS models that met business and security requirements, while other sensitive data remained securely locked behind defense-in-depth controls layered over on-premises physical infrastructure.

To strike a balance between cloud migration and on-premises data protection, Deter recommended taking the time to inventory data across all applications, segregating possible locations of data based on classification and sensitivity, enforcing role-based access and identity management controls to smoothly grant and revoke access to data, and intermingling policy-based controls within SaaS products for data resilience, encryption and loss protection.
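The classification and role-based access steps can be sketched as follows. This is a simplified illustration rather than Green House Data's implementation: the sensitivity levels, role names, placement rule and in-memory `grants` table are all assumptions, and a production system would rely on an identity provider instead.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2


# Hypothetical roles and the highest classification each one may read.
ROLE_CLEARANCE = {
    "analyst": Sensitivity.INTERNAL,
    "security_admin": Sensitivity.RESTRICTED,
}

# In-memory stand-in for an identity management system: user -> role.
grants: dict[str, str] = {}


def placement(level: Sensitivity) -> str:
    """Segregate data locations by classification (assumed rule)."""
    return "on_premises" if level >= Sensitivity.RESTRICTED else "saas"


def grant(user: str, role: str) -> None:
    """Assign a known role to a user."""
    if role not in ROLE_CLEARANCE:
        raise ValueError(f"unknown role: {role}")
    grants[user] = role


def revoke(user: str) -> None:
    """Remove a user's role; a no-op if the user has none."""
    grants.pop(user, None)


def can_read(user: str, level: Sensitivity) -> bool:
    """Allow access only if the user's role is cleared for this classification."""
    role = grants.get(user)
    return role is not None and ROLE_CLEARANCE[role] >= level


grant("dana", "analyst")
allowed_internal = can_read("dana", Sensitivity.INTERNAL)
allowed_restricted = can_read("dana", Sensitivity.RESTRICTED)
revoke("dana")
allowed_after_revoke = can_read("dana", Sensitivity.INTERNAL)
```

Because access depends on a role rather than on per-file permissions, revoking a single grant removes a user's access everywhere the check is applied.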
