20 real-world GenAI applications across leading industries

Generative AI is predicted to add up to $16 trillion to the global economy by 2030. Learn how 20 industries, from finance and legal to healthcare and manufacturing, are using it.

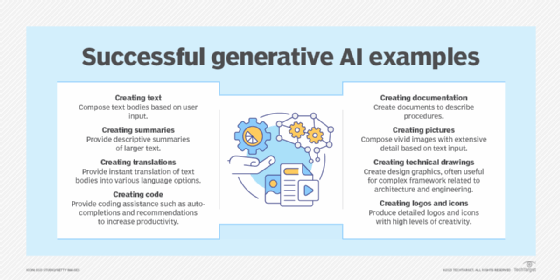

Over the past three years, generative AI has transformed industries by creating new content in text, image, music and video formats. Traditional AI operates on rule-based models, which limits its capabilities and reasoning. GenAI, in contrast, is trained on vast amounts of public and proprietary data, enabling it to provide users with humanlike, mostly accurate responses to their queries. Applications of GenAI include chatbots, high-quality content generation, automated summarization, intelligent recommendation engines, virtual tutors and AI-powered creativity tools.

The PwC report "Global Artificial Intelligence Study: Exploiting the AI Revolution" estimated that GenAI could contribute up to $16 trillion to the global economy by 2030 -- more than the current economic output of India and China combined -- through benefits that include increased productivity, cost savings, improved decision-making, personalization and enhanced safety. The following list captures how 20 different industries are currently using GenAI to achieve these benefits and meet business goals.

1. Manufacturing, industrial and electronics

Manufacturing teams have to meet production goals across throughput, rate, quality, yield and safety. To achieve these goals, operators must ensure uninterrupted operation and prevent unexpected downtime, keeping their machines in perfect condition. However, navigating siloed data -- such as maintenance records, equipment manuals and operating procedure documentation -- is complicated, time-consuming and expensive.

GenAI accelerates time to insight for operators, technicians, process engineers and plant managers. For example, at Koch Industries, facility operators use C3 Generative AI to query the system in natural language for comprehensive reports on internal and external operations. Process engineers assess performance and risk across assets, generating detailed insights on critical issues and full traceability to the source. According to Steve Lombardo, former communications and marketing officer at Koch, generative AI has helped the multi-industry company solve previously unsolvable problems at scale.

2. Retail and consumer goods

From inventory management to customer service, sales, store operations, loss prevention and beyond, GenAI has made retail operations considerably easier and more effective.

For example, Walmart has integrated GenAI across its operations, deploying conversational AI tools that let customers order groceries using just their voice or text. In-store associates have their own AI helper, "Ask Sam," that uses voice commands to assist with product locations, price checks and schedule queries. Walmart's modernized chatbots use intelligent AI to respond to customer inquiries in a more natural and intuitive way, enhancing the customer experience. The company has even developed its own AI system for supplier negotiations, achieving significant cost savings and fostering better relationships with suppliers.

3. Finance and banking

According to McKinsey, generative AI could add $200 billion to $340 billion in annual value to banking, largely through increased productivity. While traditional AI helps banks analyze data and forecast trends, GenAI goes beyond by providing coherent, contextually relevant outputs based on immeasurably larger inputs. It does this by extracting patterns and structures from vast amounts of customer and market data, giving banks deep insights into underlying factors such as potential risks or fraud and collecting customer information for loan origination. GenAI also enables banks to offer personalized banking and marketing experiences tailored to customer interests and needs.

For example, in wealth management, GenAI helps banks like Wells Fargo suggest optimal investment strategies and create customized portfolios based on individual risk appetites. Other GenAI applications let banks simulate different economic scenarios to assess and mitigate operational and market risks, evaluate the effectiveness of new trading strategies, automate reports and perform real-time monitoring and reporting for regulatory compliance, among other outputs.

4. Accounting

Manually extracting daily transaction data from financial documents, such as bank statements or investment reports, can take anywhere from a few minutes to 10 hours, depending on the number of transactions. Annual reports from a single financial institution could contain over 1,000 transactions. GenAI expedites this process by handling repetitive tasks, such as data entry, invoice processing and reconciliation; identifying and correcting human errors in financial records and other reports; and summarizing and analyzing vast amounts of financial data in minutes. GenAI-powered accounting tools, such as DocuAI, also improve financial reporting by producing detailed forecasts, simulating various financial scenarios and generating insightful reports.

5. Hospitality and travel

Executives in the hospitality sector use generative AI to improve five key management areas: travel planning, travel inspiration, operations, reputation and customer support. Examples include chatbots for handling customer inquiries and scheduling, as well as GenAI-powered newsletter content, content ideation, summarization and image generation.

In late December 2022, Southwest Airlines had to cancel more than 15,000 flights due to a major winter storm, leaving millions of customers stranded and angry as the airline's outdated software scheduling systems struggled to reroute flights and long customer service wait times made it hard to get help. "None of this would have happened with GenAI, which can streamline everything -- from airline and hotel scheduling to customer service, back-of-house communication, search, sentiment analysis of guests and so forth," contended Rafat Ali, founder of travel industry news site and research firm Skift.

6. Healthcare and life sciences

Across the healthcare sector -- from administrators to practitioners, clinicians, researchers, medical imaging specialists, patients and beyond -- generative AI is revolutionizing the field, making stakeholders' jobs easier, faster and more efficient.

For example, GenAI condenses research papers into plainer language and summaries, making them easier for clinicians to understand. GenAI can also power chatbots that provide patients with clear information, diagnoses, procedures and medical instructions. For medical imaging specialists, large language models (LLMs) are fine-tuned with medical images and reference materials to pinpoint and describe abnormalities in patient images.

In medical research, a process that typically takes months or even years, GenAI condenses vast amounts of medical publications into summaries, analyses and insights. Healthcare administrators use GenAI-powered models to identify patterns and pinpoint inefficiencies; executives, for instance, might use GenAI to understand reasons for unusually long patient wait times. Market research platform Statista found that, as of 2023, almost half of U.S. healthcare organizations were already using GenAI across domains.

7. Customer service

Research firm Gartner predicted that by 2026, intelligent generative AI will reduce labor costs by $80 billion by taking over almost all customer service activities. Traditional AI-powered chatbots, no matter how sophisticated, struggle to understand and answer complex inquiries, leading to misinterpretations and customer frustration. In contrast, a GenAI-powered chatbot -- drawing from the company's entire wealth of knowledge -- converses with customers in a humanlike, natural way. This typically makes interactions faster, more efficient, more responsive and more personalized. At the same time, the chatbot learns from user feedback, improving its responses and reducing its hallucinations and mistakes.

One company that profits from its continuous learning GenAI bot is U.K.-based energy supplier Octopus Energy. Its CEO, Greg Jackson, reported that the bot accomplishes the work of 250 people and achieves higher satisfaction rates than human agents.

8. Advertising and sales

GenAI affects sales in plenty of small ways, including personalizing client emails, drafting and evaluating requests for proposal, preparing reports and testing different pitches. On the whole, though, the most successful salespeople are those who thoroughly understand their products, services and market -- and this is where GenAI has the greatest benefit. As Conor Grennan, chief AI architect at NYU Stern School of Business, explained, "If you give GPT some user cases of what you do, ask it to refer you to the best markets, to identify problems, it can familiarize you with your product -- all the details and risks that you may be unaware of."

Stakeholders can also query ChatGPT or other generative AI tools, such as Claude, Bing or Gemini, for explanations of images. "We have these complex graphs -- for example, the linear regression model. ChatGPT tells me what it is and how it applies to my market," Grennan said. Marketing-focused GenAI tools, such as Jasper, can translate content into more than 30 languages, helping sales teams broaden their reach.

9. Marketing

Marketing is one of the industries most affected by GenAI. Firms such as fintech marketplace InvestHub use generative AI to personalize at scale. "If I want to add 5,000 products next quarter, who is going to write that? Who is going to make sure it follows brand guidelines?" asked Bob Hutchins, founder of the AI marketing consultancy Human Voice Media, referring to the potential of GenAI to handle labor-intensive tasks in minutes.

GenAI-powered tools such as ChatGPT, Jasper and Einstein Copilot generate images for logos and other scenarios, produce professional-quality videos at a fraction of the usual cost and time, optimize ads, improve image quality and design avatars and virtual influencers for social media and the metaverse. They can also build customer profiles, fine-tune competitive messaging through AI analysis, predict emerging trends, design websites, research and strategize content, repurpose material, and simulate and test different strategies, providing strategic advice for long-term growth.

10. Education

Teachers often complain that students use generative AI for their homework. On the other hand, GenAI also benefits teachers and administrators through task automation, including creating and grading assignments and exams, generating gamified learning programs such as complex quizzes, and producing engaging content. GenAI can tailor the student learning experience, turning lessons into visual dramas for some and crafting narratives and games for others based on students' preferences, needs and capabilities. The technology is also used to enhance virtual teaching with real-time instructor feedback and support. For administrators, the tool helps generate personalized ideas and content to attract high-level students and teachers, create and manage budgets, analyze and drive ideas, improve teacher and student performance, and establish and adjust policies.

The technology is so convincing that schools in Arizona and London plan to replace their human teachers with AI-driven instruction.

11. Fashion applications

Fashion designers utilize GenAI's huge troves of historical and current fashion data to generate unique and avant-garde designs. In the same way, they can use smart prompts to help them optimize production processes, reduce waste, make smarter sourcing and sustainability decisions and anticipate trends. For dressmakers or tailors, clothes can be personalized by analyzing individual preferences, body measurements and style choices, while customers can use AI to virtually visualize how garments will look on them before deciding whether to purchase.

For example, Google has developed a new GenAI technique that lets shoppers virtually try on clothes to see how garments suit their skin tone and size. Other Google Shopping tools use GenAI to intelligently display the most relevant products, summarize key reviews, track the best prices, recommend complementary items and seamlessly complete the order.

12. Entertainment and gaming

Through tools such as ChatGPT and Midjourney, GenAI enables users to create spectacular images, new content and professional-quality videos for free. It has also revolutionized art; for instance, beatboxer Harry Yeff (aka Reeps One) synchronized his voice with AI to generate a new form of percussive sound. Working with the Leipzig Ballet, Yeff used GenAI to generate innovative dance movements against an AI-generated background.

For core players like visual effects artists, illustrators, actors, scriptwriters, composers, studio engineers, photographers, game designers, audio and video technicians and animators, GenAI might threaten aspects of their roles. Its abilities include automating tasks such as character and environment design, voice generation and cloning, sound design, tools programming, scriptwriting, animation and rigging. It also handles 3D modeling, music generation and recording, lyrics composition, mastering, mixing and more.

13. Media

From copywriting and content generation to idea creation and more, GenAI has influenced media in both subtle and more audacious ways. For example, newspaper Die Presse uses it to generate interview questions, story ideas and social media headlines. Media groups, including Schibsted, use it to transcribe interviews and for copy editing. Hearst Newspapers applies the technology for headlines, SEO keywords and summaries. KSAT-TV uses AI to transcribe videos into text, while News Corp Australia employs generative AI to produce 3,000 local news stories a week.

On a bolder scale, a radio station in Poland replaced all its journalists with AI presenters but quickly abandoned the so-called experiment weeks later in the face of listener backlash. The Washington Post uses its GenAI-powered Heliograf tool to automate simple news stories on sports or election results. India Today employs AI news anchors, and Reuters built its own AI-assisted LLM to support clients with legal research.

14. Human resource management

For organizations to stay relevant, they need to upskill, reskill and continually improve employee performance. GenAI assists talent managers in creating a unified talent lifecycle, enabling organizations to engage with and assess candidates and employees and helping recruits realize their potential and ultimately thrive within the organization.

For example, Beamery's TalentGPT AI assistant uses GenAI to help employees explore potential career paths and to assist companies in hiring the best-fit talent by answering questions such as, "Which skills do I need to get this work done?" and "Are there people within our business that are a good fit for the job?" Administrators use the tool to identify gaps in their objectives and test results, prioritize key tasks for meetings and draft coherent reports. For talent coaches, the engine customizes employee career paths based on stored data, tracks their optimal career trajectory and matches staff to appropriate learning programs. GenAI tools make reports more comprehensive for all stakeholders, and users can query the bots for clarification when needed.

15. Legal services

Unlike traditional search engines that rely on keyword searches, GenAI enables researchers to analyze large data sets at scale, quickly identify relevant precedents and summarize key points. In fact, GenAI saves researchers and lawyers time by generating abstracts and analyzing decisions and cases from the vast pool of legal texts it's trained on. Legal professionals across corporations, law courts and governments use AI-powered tools, such as Spellbook and Juro, to process large data sets, summarize legal briefs and documents, prepare tax returns, draft contracts and personalize correspondence. Tax attorneys told Thomson Reuters they use GenAI for accounting, bookkeeping and tax research.

Even more remarkable are GenAI-powered engines like Harvey, a legal AI platform backed by OpenAI, whose arguments are as sophisticated as those of veteran lawyers. Harvey is fine-tuned on vast amounts of legal data and designed specifically to analyze complex scenarios; some lawyers report that they value it for its accuracy and depth. According to the 2023 "International Legal Generative AI Survey" by LexisNexis, nearly half of all lawyers surveyed said they believe generative AI will transform their business, with a staggering 92% anticipating at least some impact.

16. Software development

Generative AI has revolutionized software development with tools like ChatGPT, GitHub Copilot and Amazon CodeWhisperer, which can instantly generate code for basic functions. This enables developers to shift their focus to more strategic design and complex problem-solving roles. The technology also automates routine tasks, such as coding, debugging and testing, completing them in a fraction of the time, usually more accurately than human software engineers. Other GenAI tools, such as CodeComplete, further explain code in readable language, enhancing learning and coding functions.

According to Deloitte research, 92% of U.S. developers are already using these AI coding tools, with 70% of developers citing benefits such as better overall quality, faster production time and quicker resolution.

17. Automotive and logistics

Vehicles outfitted with generative AI can identify road signs and roadblocks more accurately and efficiently than traditional AI, making journeys safer and more enjoyable. For example, Nvidia Drive provides real-time assistance and recommendations in multiple languages to warn drivers if they move into the path of an oncoming vehicle, indicate when the car's battery is running low or direct them to the nearest gas station. It uses advanced AI to help drivers anticipate and react quickly to critical situations, such as crowded intersections, sudden braking or dangerous swerving. Additionally, it creates customized route itineraries to find the best routes and automatically adjusts speed to suit the topography. The system also answers incoming calls and syncs calendar meetings, among other functions.

For automakers, generative AI aids in research and development, vehicle design, quality control, testing, validation and predictive maintenance. As panelists at Germany's renowned IAA Mobility International Motor Show pointed out, generative AI can simulate various scenarios for safer, innovative designs and more energy-efficient systems.

18. Public sector and non-profits

As participants on a 2023 Deloitte panel observed, actors in government and public service sectors are increasingly using generative AI to build connections among people, systems and different government agencies. Use cases include content generation, proposal writing, planning, detection and data visualization. For example, the GenAI-powered tool BlueDot alerts public bodies to outbreaks or potential threats from new or known pathogens, such as influenza and dengue. GenAI extracts location-specific data on disease events, connects various data sets on the back end and translates epidemiological data into natural language for users.

In other scenarios, the government of Iceland applied GenAI in an initiative to preserve its native language with correct grammar, spelling and cultural context. Singapore's government implemented GenAI systems to free up time spent on routine tasks by using the AI to redact documents and draft emails, speeches, briefs and more.

19. Agriculture

Generative AI improves farming and food production through its ability to customize crop breeds. AI analyzes and simulates vast data sets of genetic combinations, propelling the creation of new plant varieties that are resistant to diseases and pests and tailored to specific climates and environments. Additionally, AI can predict pest outbreaks, climate shifts and disease spread, empowering farmers to make informed decisions, reduce crop losses and improve yields.

The technology optimizes food supply chains by plotting and analyzing variables such as transportation costs, spoilage rates and market demand, ensuring fresh produce reaches consumers faster and at reduced costs. When it comes to sustainable farming practices, GenAI uses its massive database to simulate historic and current farming practices, predicting long-term environmental impacts. For example, Boston-based food tech firm Motif FoodWorks uses generative AI to design and test its plant-based foods, considering factors such as regional taste preferences, dietary requirements and even seasonal availability of ingredients.

20. Telecommunications

Aside from using generative AI to customize sales and reduce customer wait times, telecommunications companies harness this innovation to identify security risks, unblock network bottlenecks and communicate with customers and stakeholders across areas. Telco Systems is one such example: Through the LearnAI app, Telco employees interact with a personalized bot to help plan maintenance tasks, minimize downtime, anticipate equipment failures and network disruptions, and preempt system hacking and other breaches. Operators also use the platform to pinpoint and correct billing inefficiencies, optimizing their billing processes while generating on-demand explanations and analyses.

"Tools like Amazon Bedrock tell us a lot about the bandwidth of a user," said Andrew J. Young, software development engineer at AWS. "It tells us what their device capabilities are, and that allows us to know what content should be tailored to their device, whether IoT or mobile."

Developers use Amazon Bedrock to interpret customer requests and suggest intelligent solutions. They also query it for insights on issues such as governance, cost control, logging, security and A/B testing.

Leah Zitter, Ph.D., is a seasoned writer and researcher on generative AI, drawing on over a decade of experience in emerging technologies to deliver insights on innovation, applications and industry trends.