9 top generative AI tool categories for 2026

Agentic systems and AI data centers are driving forces shaping the development of GenAI tools and frameworks to build LLMs, orchestrate processes and integrate physical AI.

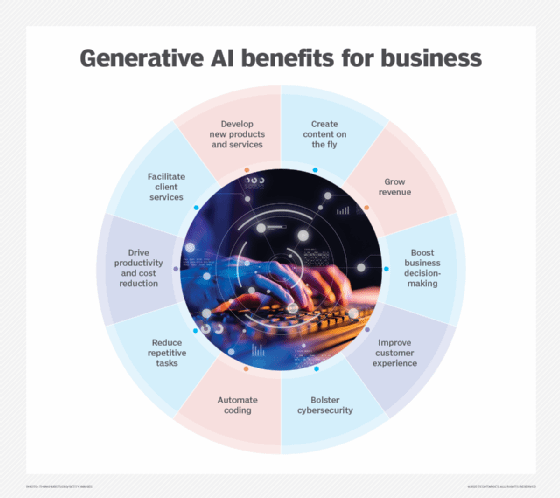

The popularity of agentic and generative AI services such as ChatGPT has galvanized interest in applying these new tools to practical enterprise use cases. Nowadays, agentic and GenAI capabilities are being added to virtually every category of business app.

Most AI, data science and machine learning tools support GenAI use cases. These tools can manage the AI development lifecycle, govern data for AI development and mitigate security and privacy risks. Many emerging forms of GenAI don't necessarily rely on large language models (LLMs) for tasks such as generating images, video, audio and synthetic data, or translating across languages. These include models built using generative adversarial networks, diffusion, variational autoencoders and multimodal techniques.

Agentic AI has made headlines recently with new protocols and standards for connecting data, agents, tools and governance. Many of these components use GenAI tools under the hood, often in concert with other approaches for context engineering and governance. Agentic systems shift the focus from generating output, as with GenAI, toward achieving outcomes, and they are becoming increasingly capable of reasoning, planning and autonomously executing complex tasks.

The proliferation of AI data centers and their growing power requirements will play an important role in how GenAI tools evolve over the next year. Tool providers are increasingly pursuing more efficient training and inference methods that reduce the infrastructure needed to build domain-specific small language models (SLMs) and lower the cost of running these models in production. Along those lines, FinOps providers are expanding cost management capabilities beyond the cloud and into AI infrastructure. In addition, recent developments in physical AI centered on Nvidia Omniverse use GenAI capabilities to enhance data center design. Many leading platform providers are also expanding their core offerings to support multiple tool categories through new product development, enhancements and acquisitions.

This article is part of: What is GenAI? Generative AI explained

When planning and executing a GenAI strategy, businesses need to consider these nine top categories of GenAI development tools, their capabilities and the applications they support, along with the vendor and open source options in each category.

1. Foundation models and services

New GenAI tools have primarily focused on simplifying the development of LLMs built using the transformer architecture pioneered by Google researchers in 2017. New foundation models built on LLMs are often used out of the box to enhance existing applications. In other cases, vendors are developing domain-specific models for various industries and use cases. These models are evolving from simple question answering toward reasoning models, which can improve results but incur higher runtime costs.

Notable foundation models and services include Anthropic's Claude, Cohere's Command, Google's Gemini, Meta's Llama series, Mistral, OpenAI's GPT series and Technology Innovation Institute's Falcon LLM. Domain-specific models and services include Anthropic's Claude Code; C3 AI's Agentic AI Platform; Nvidia's NeMo, BioNeMo, PhysicsNeMo and Picasso; and OpenAI's Codex. A number of Chinese firms are also introducing competitive, open alternatives, such as Alibaba's Qwen, Baidu Research's Ernie and DeepSeek. The open source community is raising concerns about how open these AI models really are, the restrictions associated with new licensing schemes and a lack of insight into training data and processes.

2. Agentic AI orchestration

Agentic AI platforms have progressed from experimental open source tools like AgentGPT, AutoGPT and BabyAGI to more mature tools for businesses. This category of tools holds promise as a new application layer, in much the same way that web APIs provided a dynamic alternative to service-oriented architectures and enterprise service buses in business systems.

Many vendors are rushing to support the Model Context Protocol (MCP), an open standard for connecting AI applications to external tools and data, to simplify access to their existing tools. Platform providers are also rolling out new services to integrate workflows across multiple LLMs and services. Several new providers have recently emerged with open source agentic orchestration frameworks, including CrewAI, DailyAI, LangChain, LlamaIndex and Weights & Biases. Large enterprise vendors are also entering the field with growing initiatives such as Microsoft's AutoGen.
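MCP messages are built on JSON-RPC 2.0: a client asks a server which tools it offers with a `tools/list` request, then invokes one with `tools/call`, passing the tool's `name` and `arguments`. The sketch below only builds and serializes those two request messages; it leaves out the initialization handshake and the transport (such as stdio or HTTP) that a real MCP client also handles, and the tool name `get_invoice_status` and its `invoice_id` argument are hypothetical.

```python
import json
from typing import Any, Optional


def make_request(request_id: int, method: str,
                 params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_request = make_request(1, "tools/list")

# Invoke one of them. "get_invoice_status" and its argument are hypothetical;
# a real server describes its tools and their input schemas in its
# response to "tools/list".
call_request = make_request(
    2,
    "tools/call",
    {"name": "get_invoice_status", "arguments": {"invoice_id": "INV-1001"}},
)
print(list_request)
print(call_request)
```

Because every MCP-enabled tool is invoked through the same message shape, an agent framework can call a vendor's existing tools without a custom integration for each one.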

3. Agentic BPM platforms

Several platform providers that have traditionally catered to the business process management (BPM) market are approaching agentic orchestration from a rules- and process-oriented perspective. This approach is especially beneficial to businesses in regulated industries. Traditional robotic process automation, process mining and capture, and low-code tool vendors are applying their expertise in process governance to new agentic workflows.

These platforms include BPM-centric agent orchestration frameworks and tools from UiPath, Automation Anywhere, SS&C Blue Prism, Camunda, Celonis, ABBYY and Appian. Traditional enterprise vendors, including Oracle, Salesforce, SAP and ServiceNow, are also introducing agentic coordination capabilities into core platforms that take advantage of their ecosystems of connected data management and contextualization tools.

4. Physical AI integration

Newer physical AI approaches are already showing promise in building more efficient infrastructure for data centers, energy grids, chip production and smart factories. Nvidia has played a seminal role in building a thriving ecosystem of tools and partnerships on its Omniverse platform and in stewarding the OpenUSD digital twin specification. The chip giant has also open sourced the PhysicsNeMo engine, adding support for multiphysics workflows. Most major product lifecycle management, computer-aided engineering and electrical infrastructure vendors are adding native Omniverse and PhysicsNeMo support that uses GenAI surrogate models to speed up design and simulation workflows.

5. Cloud GenAI platforms

The major cloud providers are rolling out suites of GenAI capabilities for businesses to develop, deploy and manage GenAI models. Cloud GenAI platforms include AWS Quick Suite, Google Vertex AI, IBM watsonx and Microsoft Azure AI Foundry. Popular third-party cloud-based GenAI development platforms include offerings from Hugging Face and Nvidia.

6. Retrieval-augmented generation tools

General-purpose foundation models can generate authoritative-sounding and well-articulated text, but they have a propensity to hallucinate and produce inaccurate information. Businesses use GenAI-specific development tools to build proprietary LLMs tailored to their needs and expertise. Retrieval-augmented generation (RAG) improves accuracy by supplying a foundation model with relevant documents retrieved at query time, while fine-tuning tools calibrate a model by further training it on domain-specific data. RAG and fine-tuning tools are sometimes used together to balance the benefits of each approach.

RAG tools include the OpenAI Retrieval API, Microsoft Azure AI Search, Microsoft GraphRAG and open source tools such as Haystack, LightRAG and TxtAI. Most commercial foundation model services include built-in fine-tuning capabilities. Additional third-party fine-tuning tools include Entry Point AI and Hugging Face products that work with open source models.
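The core RAG pattern is simple to sketch: find the passages most relevant to a question, then place them in the prompt ahead of the question so the model answers from supplied context rather than from memory alone. The minimal sketch below assumes plain word overlap as the relevance score, a deliberately simplified stand-in for the embedding search that RAG tools typically use; the passages and the `retrieve` and `build_prompt` helpers are hypothetical and not any listed product's API.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages that share the most words with the query.

    Word overlap is a simple stand-in for embedding-based search.
    """
    query_tokens = tokenize(query)
    ranked = sorted(passages,
                    key=lambda p: len(query_tokens & tokenize(p)),
                    reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Place the retrieved passages ahead of the question in the prompt."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


# Hypothetical knowledge-base passages.
passages = [
    "Refunds are issued within 14 days of a returned item reaching the warehouse.",
    "The company moved its headquarters in 2021.",
    "Store credit never expires and can be used online or in stores.",
]
prompt = build_prompt("How many days until refunds are issued?", passages, k=1)
print(prompt)  # this string would then be sent to whichever LLM the app uses
```

The instruction to answer only from the supplied context is what lets RAG curb hallucinations; fine-tuning, by contrast, changes the model itself and does not need a retrieval step at query time.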

7. Quality assurance and hallucination mitigation tools

New hallucination detection and mitigation tools can identify hallucinations and reduce their prevalence in various use cases. These tools include Alhena Agent Assist, Galileo's Agent Reliability Platform, Progress Software's Agentic RAG platform and TruLens. New research techniques, such as the Woodpecker algorithm, could help businesses develop their own hallucination mitigation workflows. Several vendors have also released open source options, including Galileo's Luna suite of evaluation foundation models, TruLens (now owned by Snowflake) and Vectara's Hughes Hallucination Evaluation Model.

8. Data aggregation tools

Early foundation models supported only limited context windows, which define how much data an LLM can process in a single query. Although newer models handle much larger context windows, tools designed to work with larger data sets remain useful. Data chaining tools like Dust and LangChain automate the process of feeding multiple documents into LLMs. Vector databases store data as embeddings, numerical vectors that place semantically similar content close together, so relevant content can be retrieved and passed to LLMs. Vector databases and libraries include ChromaDB, Faiss, Pinecone, Qdrant and Weaviate.
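At its core, a vector database answers one question: which stored embeddings point in nearly the same direction as the query's embedding? The sketch below shows that lookup as a brute-force cosine similarity search. The three-dimensional vectors and document IDs are hypothetical; real embedding models produce hundreds or thousands of dimensions, and production vector databases typically use approximate nearest-neighbor indexes rather than comparing the query against every stored vector.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as unrelated to everything
    return dot / (norm_a * norm_b)


def nearest(query: list[float], index: dict[str, list[float]], k: int = 1) -> list[str]:
    """Brute-force k-nearest-neighbor search by cosine similarity."""
    scored = sorted(
        ((cosine_similarity(query, vec), doc_id) for doc_id, vec in index.items()),
        reverse=True,
    )
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical 3-dimensional embeddings keyed by document ID.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.2, 0.9, 0.1],
    "office-locations": [0.0, 0.1, 0.95],
}
query_embedding = [0.85, 0.2, 0.05]  # stands in for an embedded user question
print(nearest(query_embedding, index, k=2))  # ['refund-policy', 'shipping-times']
```

Only the top-ranked documents are passed to the LLM, which is how vector search keeps large data sets within a model's context window.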

9. GenAI cost optimization platforms

AI cost-optimization tools can balance performance, accuracy and cost. Traditional FinOps providers of cloud cost optimization tools, such as IBM Apptio, have started adding AI-specific capabilities. Observability platform providers are adding cost optimization to existing telemetry tools, such as Datadog LLM Observability. Harness, a developer of software development lifecycle automation tools, has added cost-optimization capabilities for AI. More cloud cost-optimization, observability and AI software development providers will likely develop related offerings over time.
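The tradeoff these tools manage can be illustrated with token-based pricing, which many commercial model APIs use: cost scales with input and output tokens, so a smaller model that still clears an accuracy bar can cut spend sharply. The sketch below is illustrative only; the model names, per-million-token prices and eval accuracy scores are hypothetical, and real cost platforms draw on actual billing and telemetry data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelOption:
    name: str
    input_price_per_million: float   # USD per 1M input tokens (hypothetical)
    output_price_per_million: float  # USD per 1M output tokens (hypothetical)
    eval_accuracy: float             # score on an internal eval set (hypothetical)


def request_cost(model: ModelOption, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, given its input and output token counts."""
    return (
        input_tokens * model.input_price_per_million
        + output_tokens * model.output_price_per_million
    ) / 1_000_000


def monthly_cost(model: ModelOption, input_tokens: int, output_tokens: int,
                 requests_per_month: int) -> float:
    """Projected monthly spend for a steady workload."""
    return request_cost(model, input_tokens, output_tokens) * requests_per_month


def cheapest_meeting(models: list[ModelOption], min_accuracy: float,
                     input_tokens: int, output_tokens: int) -> Optional[ModelOption]:
    """Pick the lowest-cost model that clears the accuracy bar, or None."""
    eligible = [m for m in models if m.eval_accuracy >= min_accuracy]
    return min(eligible,
               key=lambda m: request_cost(m, input_tokens, output_tokens),
               default=None)


large = ModelOption("large-reasoning-model", 10.00, 40.00, 0.92)
small = ModelOption("small-domain-model", 0.50, 1.50, 0.88)

for model in (large, small):
    cost = monthly_cost(model, 2_000, 500, 100_000)
    print(f"{model.name}: ${cost:,.2f} per month")
# large-reasoning-model: $4,000.00 per month
# small-domain-model: $175.00 per month

choice = cheapest_meeting([large, small], 0.85, 2_000, 500)
print(choice.name if choice else "no model meets the bar")  # small-domain-model
```

Raising `min_accuracy` to 0.90 in this example would rule out the smaller model, which is the kind of performance, accuracy and cost balance the category is designed to surface.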

George Lawton is a journalist based in London. Over the last 30 years he has written more than 3,000 stories about computers, communications, knowledge management, business, health and other areas that interest him.