
Generative AI in the enterprise raises questions for CIOs

Technology and business leaders are exploring pivotal issues surrounding adoption strategy, architecture and the IT organization's management responsibilities.

Generative AI is reshaping the enterprise technology estate, sparking discussions around adoption strategy, raising architectural issues and pushing IT leadership in new directions.

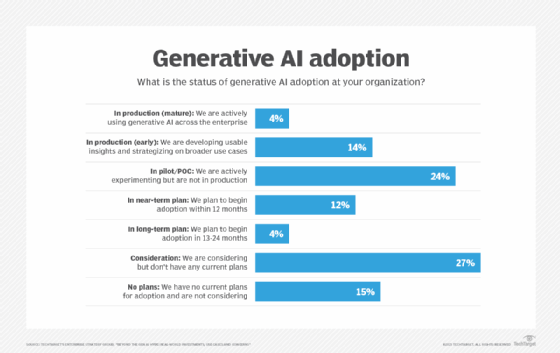

Those shifts are happening at shocking speed. While cloud computing adoption unfolded at a brisk pace, the uptake of generative AI is happening faster still. Most organizations are either evaluating or actively deploying the technology, which became readily accessible starting in late 2022.

An August 2023 report from TechTarget's Enterprise Strategy Group found 54% of the 600-plus organizations it polled will have adopted generative AI in the next 12 months. Likewise, 59% of business executives PwC surveyed cited "embedding new technologies in their business model" as the No. 1 strategic priority over the next three to five years; 46% of the respondents specifically identified generative AI.

"With generative AI, this is something that's brand new," said Neil Dhar, U.S. vice chair and consulting co-leader at PwC, speaking at an online presentation. "Every company in every sector is doing an inward analysis of how generative AI impacts their companies. It's clear that no one has run away from the pack here. And we're all figuring this out together, including ourselves."

GenAI and adoption strategies

Make vs. buy

Walmart, which tops the Fortune 500 list with more than $600 billion in revenue, is among the enterprises navigating generative AI. The retailer launched a GenAI Playground in June and released a generative AI tool, My Assistant, to some 50,000 campus associates in August.

David Glick, senior vice president of enterprise business services at Walmart, views generative AI as a "transformative technology." Its sudden arrival prompted a make-versus-buy decision at the retailer. Walmart has traditionally been a buy shop but shifted to making its own technology with the arrival of CTO Suresh Kumar in 2019. The company's GoLocal service delivery platform and relaunch of Walmart.com's digital storefront were both in-house efforts, for example.

Generative AI, however, calls for a best-of-both-worlds approach, according to Glick. The plan: Use the best of the available large language models (LLMs) and add its own customization layer.

"It's hard to train the LLM from the ground up," he said, noting that OpenAI and other companies in the AI sector have already done the foundational work.

"If they can do all the heavy lifting and the plumbing and the scaffolding, and we can build things that delight our customers -- either associate customers or [external] customers -- I think that's the sweet spot for us," Glick said.

Walmart aims to add its own data to fine-tune the tech providers' LLMs. Glick pointed to the company's internal benefits help desk and its 300-page benefits guide, which agents may struggle to memorize. Incorporating that document into an LLM could augment a help desk agent's employee interactions, boosting efficiency and increasing accuracy.

This buy-and-customize method tracks with how the company has tailored ERP and HR products to suit its needs, Glick noted.

Industry executives anticipate most enterprises will follow the route of tuning publicly available LLMs rather than building their own.

"I think the level of effort and scale that it takes to build those becomes cost prohibitive, and there's already so much innovation going on," said Merim Becirovic, global CTO of Accenture's IT organization.

Vikrant Karnik, cloud and technology services leader at Genpact, a professional services firm based in New York, said the meteoric rise of generative AI has captivated corporate boards and sparked discussions on whether it's better to build AI infrastructure or tap what's available. The latter choice is winning out, even among organizations pushing generative AI's cutting edge -- about 5% of Genpact's customer base.

"Even in those cases, they are depending on models that already exist," Karnik said.

The case for speed

While emerging technologies often inspire caution and gradual adoption, industry executives believe speed is important when it comes to generative AI.

"I think now is the time and we all know that we have to experiment, we have to pilot," said Chris Bedi, chief digital information officer at ServiceNow. "The sooner we get started on it, the sooner we're going to realize material business outcomes from it. Waiting on the sidelines for this one, which is what a lot of people did -- and maybe rightfully, with things like the metaverse and blockchain -- doesn't feel like the right answer."

The difference between those other hot-then-not technologies and generative AI revolves around the latter's obvious and plentiful practical applications.

"I think blockchain and metaverse often became a solution in search of a problem versus generative AI, where use cases just jump off the page," Bedi said.

ServiceNow, which is creating its own generative AI models, has deployed the technology in a dozen in-house use cases.

Key use cases include those that simplify the task of finding information. ServiceNow keeps a large volume of content spread across several different locations.

"We have knowledge bases," Bedi said. "Many companies around the world have invested in knowledge bases and content stores. It's still hard to find stuff."

Generative AI, however, makes it possible to focus on content "in a very human-readily way and not get a 10-page document but the one paragraph you are interested in," he added.

Generative AI in the IT shop

Generative AI can support a wide range of enterprise functions, including IT.

Bedi cited developer productivity as one immediate opportunity for generative AI within the technology function. "How much more can you increase developer productivity -- which, by the way, is the labor pool that's the hardest to find," he said.

ServiceNow found its internal use of text-to-code technology has resulted in about a 20% productivity improvement among its software engineers. Bedi said this use of generative AI raises budgeting questions: For example, if the IT department had planned to write 1 million story points of code in 2024, will it now aim, with generative AI, to write 1.2 million story points of code with the same number of developers or generate that amount of code with 20% fewer developers?
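The budgeting trade-off can be worked through as simple arithmetic. The sketch below is illustrative only: the function name and the team size of 1,000 developers are hypothetical, and it assumes the productivity gain applies uniformly to every developer. Under that assumption, a 20% gain per developer means the original plan needs roughly one-sixth fewer developers, a little less than the round 20% figure.

```python
def capacity_options(baseline_points: float, developers: int, gain: float):
    """Return the two ways to spend a per-developer productivity gain.

    All inputs are illustrative; `gain` is a fraction (0.20 means 20%).
    """
    # Option A: keep headcount the same and deliver more story points.
    points_same_team = baseline_points * (1 + gain)
    # Option B: keep the original plan and work out how many developers it needs.
    developers_needed = developers / (1 + gain)
    # Fraction of the original headcount that option B frees up.
    headcount_reduction = 1 - 1 / (1 + gain)
    return points_same_team, developers_needed, headcount_reduction


# Hypothetical team of 1,000 developers planning 1 million story points.
points, devs, cut = capacity_options(1_000_000, 1_000, 0.20)
print(f"{points:,.0f} story points with the same team")
print(f"{devs:.0f} developers for the original plan ({cut:.1%} fewer)")
```

Either option spends the same gain; which one a budget reflects is a planning choice rather than a technical one.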

Becirovic also supports rapid adoption of generative AI.

"I think speed is paramount," he said. "I'm a big fan of hands-on-keyboard, as fast as possible, because it gives people perspective and understanding. They're not spending years analyzing."

Becirovic also advocated for speed in cloud adoption, helping quickly grow the technology's penetration at Accenture. He believes widespread cloud deployment will now facilitate fast-paced generative AI uptake. Generative AI services live in the cloud. Cloud qualities such as elasticity and rapid innovation play into the new capabilities arising from generative AI, he noted.

"Cloud is a high-performance engine, and generative AI is your gas pedal," Becirovic said.

A September 2023 report from HCLTech, a technology company offering digital business services, provides another data point. Of the 500 business and technology leaders it surveyed, 85% said they recognized "the cloud's role in enabling generative AI and agree it can only be deployed with the right cloud strategy."

GenAI and IT architecture

New patterns ahead

Enterprises opting for existing models in their accelerated pursuit of generative AI must determine how best to access such offerings.

Becirovic framed the question as, "How can they make sure they've got the right architecture patterns internally to connect with and consume all the different components and services?"

Organizations must decide how to consume a service and how to offer it companywide through a centralized catalog, he noted. Other service considerations include privacy, security, cost and internal use. He said the overarching task of understanding a service may be viewed as a certification process or governance certification.

"Every organization should be thinking about [certification] in this new world," Becirovic said. "Because today's the slowest day it's ever going to be. There's only going to be more services, more capability."

Decisions on architecture patterns stem from the certification process, he added. At this point, business and technology leaders decide where generative AI will fit in their organizations -- employee enablement, infrastructure automation or help desk operations, for example.
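One way to picture the catalog-plus-certification idea is as a small data structure. The following is a minimal sketch, not a description of Accenture's approach: the class names, the four review labels (taken loosely from the considerations listed above) and the service names are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical review areas, based on the considerations named in the article.
REQUIRED_REVIEWS = ("privacy", "security", "cost", "internal_use")


@dataclass
class AIService:
    """A generative AI service being evaluated for companywide use."""
    name: str
    reviews: dict = field(default_factory=dict)  # review area -> passed?

    def is_certified(self) -> bool:
        # Certified only when every required review has been passed.
        return all(self.reviews.get(area, False) for area in REQUIRED_REVIEWS)


class ServiceCatalog:
    """Central catalog that exposes only certified services."""

    def __init__(self) -> None:
        self._services = {}

    def register(self, service: AIService) -> None:
        self._services[service.name] = service

    def available(self) -> list:
        # Services still under review stay hidden from the rest of the company.
        return sorted(n for n, s in self._services.items() if s.is_certified())


catalog = ServiceCatalog()
catalog.register(AIService("summarizer", dict.fromkeys(REQUIRED_REVIEWS, True)))
catalog.register(AIService("code-helper", {"privacy": True, "security": False}))
print(catalog.available())
```

In this sketch, only the fully reviewed service appears in the catalog; the partially reviewed one stays registered but hidden until its remaining reviews pass.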

Structuring for scale

At ServiceNow, Bedi said his last quarterly business review covered the AI architecture topic.

"We actually spent a chunk of time on what is our AI architecture, including generative," he said. "What does that look like? What's the same, and what's different?"

Bedi said the meeting also brought up the need for a concept of "LLMOps" along the lines of machine learning operations. MLOps provides practices and tools for managing machine learning in organizations.

Interaction among LLMs is another aspect of creating an architecture.

"You're going to think about having large language models talk to other large language models directly," Bedi said. "What does that architecture look like? What does that security look like? What does that data protection look like?"

As for AI governance, technology adopters need to consider issues such as model drift and bias, he noted. In Bedi's opinion, organizations don't have to dig into architecture and governance as they experiment with generative AI. But those elements will become necessary when it's time to expand beyond pilots.

"If you're preparing to go from experiment to scale pretty quickly, you're looking at the architecture. There are a few building blocks that have to go in place; LLMOps [is] one of them," he said.

Cloud providers vie to build AI infrastructure

Cloud providers are bulking up their AI infrastructures to deliver advanced compute for customers.

Forrester Research's "The Future of Cloud" report, published in July 2023, cited that trend as the "next horizon of innovation" for cloud platforms moving beyond commodity infrastructure. Hyperscalers are competing to scale their pricier AI infrastructures, Forrester Research reported, with high-performance computing (HPC) workloads becoming a priority.

"HPC will be part of a broader push around specialized workloads," said Lee Sustar, an analyst at Forrester Research.

Cloud providers are putting considerable effort into acquiring GPUs to run those workloads, he noted. In addition, hyperscalers are working to ensure they can deliver specialized services in the regions customers request while also meeting country-specific data sovereignty requirements. He said the task is more challenging for cloud providers than delivering more generalized x86 or Arm-based workloads.

GenAI and IT leadership

Enabling business users

While IT leaders puzzle over how to harness generative AI, they have also begun to think about how their roles might change because of the technology.

Keyur Ajmera, CIO at ICIMS, a talent acquisition platform company, envisions a "shift up" approach when it comes to managing enterprise IT. Where the concept of shifting left refers to moving technology closer to external customers, shifting up brings technology closer to internal business users, he said.

Emerging technologies such as generative AI will provide a catalyst.

"Generative AI is a game changer, as more vendors come out with their own versions of [Microsoft] Copilot or embedded generative AI capabilities," Ajmera said. "These will be enabling technologies that business users and anyone in the organization can leverage to drive a lot of the technical innovation for the company. That frees up tech and IT teams to focus on governance, security, frameworks and establishing a really good cadence around how you use technology."

Essentially, shifting up changes the IT department's role from doing things for users to enabling them to take on more technical tasks. At ICIMS, Ajmera began exploring this approach in data and analytics, which are among the IT fields where tech talent is hard to find.

"I can't hire 30 business intelligence analysts," Ajmera said. "But what I can do is train up various operations folks and folks within the business who have technology savvy to effectively do some of this work."

Shifting up lets the business side take control of activities such as creating data visualizations -- without depending on IT, he noted.

Karnik said the trend toward pushing more technology power to business users has been going on for a while, citing visualization and low-code/no-code platforms as examples.

"That process was already happening," he said. "Generative AI is just one more step in that process."

Karnik doesn't anticipate the IT department evolving into a strictly governance function that hands out tools to business users. Security and data protection will remain a critical function, he said, citing the potential for data leakage. Users must enter data to obtain insight from a generative AI tool. But if they provide sensitive data, they could be giving away an organization's proprietary knowledge.
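A common guardrail against this kind of leakage is to scrub prompts before they leave the organization. The sketch below is an assumption-laden illustration rather than anything Genpact described: the two patterns (email addresses and U.S. Social Security-style numbers) and the function name are hypothetical, and a production deployment would more likely rely on a vetted data loss prevention tool than on hand-written regular expressions.

```python
import re

# Hypothetical patterns for illustration; real policies would cover far more.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> str:
    """Replace each match of a sensitive pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


print(redact("Contact jane.doe@example.com about SSN 123-45-6789."))
# prints: Contact [EMAIL] about SSN [SSN].
```

Pattern matching of this kind catches only well-structured identifiers. Proprietary knowledge in free text, the risk Karnik describes, still depends on user training and policy.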

It's up to tech leaders to inform business users on how to properly use generative AI, according to Karnik. "The responsibility of the IT department becomes heightened and sharpened."

Widening the scope of responsibility

That responsibility also becomes highly distributed, as the effects of generative AI ripple across enterprises.

"We've created a generative AI roadmap for each department in the company," Bedi noted.

He's worked with ServiceNow's HR leadership to consider how generative AI could reimagine the recruiting functions. "It's everything from improving job descriptions to generating tailored interview guides," he noted.

Marketing is exploring how generative AI could provide intelligence that might have taken hours for people calling on customers to collate on their own. In cybersecurity, generative AI could scan through event logs to "find the needles in the haystack," Bedi said.

Generative AI could play a dual role in the CFO's office. On the one hand, the task is determining what generative AI can do for the finance function, "but it's also, 'How do we think about bending the cost curve in certain departments?'" he added.

Ajmera also pointed to the CIO's broad responsibility for generative AI diffusion. He believes gaps could emerge within organizations, as some pockets of employees engage with generative AI and others don't.

"It's up to every CIO to bring the organization along on this journey," he said.