Build an ethical AI framework: 12 top resources

The IEEE Global Initiative, World Economic Forum and Stanford Institute for Human-Centered Artificial Intelligence are among the best resources available to businesses building a viable foundation for ethical, responsible AI.

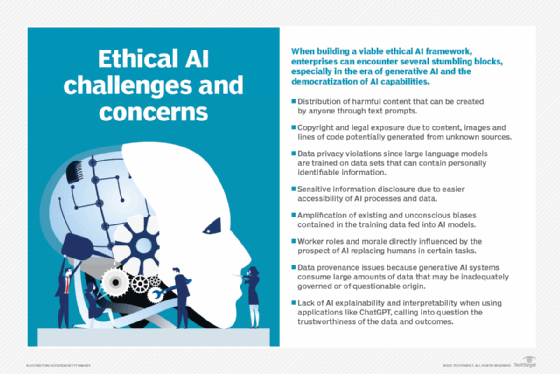

As generative AI and agentic AI gain a stronger foothold in the enterprise, business executives face serious issues surrounding the responsible application of AI initiatives, including ethics, moral principles and methods that help shape the development and use of AI technologies.

An ethical approach to AI is important, not only for making the world a better place but also for protecting a business's bottom line and reputation. That involves understanding the financial and reputational effect of biased data, hallucinations, gaps in transparency and explainability, model limitations and other factors that erode public trust in AI.

"The most impactful frameworks or approaches to addressing ethical AI issues … take all aspects of the technology -- its usage, risks and potential outcomes -- into consideration," said Tad Roselund, senior advisor and executive coach at Boston Consulting Group.

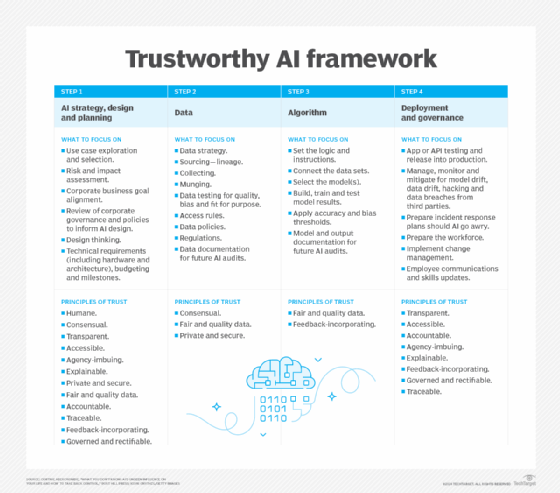

Many firms approach the development of ethical AI frameworks from a purely values-based position, Roselund said. But it's important to take a holistic, ethical AI approach that integrates strategy with process and technical controls, cultural norms and governance. These three elements of an ethical AI framework can help institute responsible AI policies and initiatives. And it all starts by establishing a set of principles around AI usage.

"Oftentimes, businesses and leaders are narrowly focused on one of these elements when they need to focus on all of them," Roselund said. Addressing any one element might be a good starting point, but by considering all three elements -- controls, cultural norms and governance -- businesses can devise an all-encompassing ethical AI framework. This approach is especially important when it comes to GenAI and its ability to democratize the use of AI.

Enterprises must also instill AI ethics in the people who develop and use AI tools and technologies. Open communication, educational resources and enforced guidelines and processes to ensure proper AI use can further bolster an internal AI ethics framework that also addresses GenAI, Roselund added.

Top resources that shape an ethical AI framework

The following is an alphabetical list of standards, tools, organizations and other resources to help shape a company's internal ethical AI framework.

1. AI Now Institute

This institute focuses on the social implications of AI and policy research in responsible AI. Research areas include algorithmic accountability, antitrust concerns, biometrics, worker data rights, large-scale AI models and privacy. The institute's "Artificial Power: 2025 Landscape Report" explores the many ways AI innovations are imposed on humans and suggests concrete strategies for enterprises, policymakers and the public to develop more responsible AI policies.

2. Apart Research

This organization stewards collaboration, research and development across various aspects of ethical AI through hackathons, frameworks and practical solutions. One such project, called a medical agent controller, provides a multi-agent governance framework for medical chatbots. Other projects support innovations in physics and safety, model evaluation and testing, and the management of multi-agent risks.

3. Cooperative AI Foundation

This foundation conducts cross-industry research on the ethical and beneficial use of agentic AI. One of its white papers, "Multi-Agent Risks from Advanced AI," analyzes novel and unexplored risks of multi-agent systems across three failure modes: miscoordination, conflict and collusion. This information can help ethics teams identify the root causes of AI risk, such as information asymmetries, network effects, selection pressures, destabilization dynamics, commitment problems, emergent agency and multi-agent security.

4. IEEE Global Initiative 2.0 on Ethics of Autonomous and Intelligent Systems

This initiative focuses on ethical issues associated with incorporating GenAI into future autonomous and agentic systems. In particular, the working group explores how to extend traditional safety concepts to prioritize scientific integrity and public safety. Notably, it examines how the concept of safety has sometimes been misappropriated and misunderstood with regard to GenAI deployment risks. The group is developing new standards, toolkits, AI safety champions and awareness campaigns to improve the ethical use of GenAI tools and infrastructure.

5. Institute for Technology, Ethics and Culture Handbook

The ITEC Handbook was a collaborative effort between Santa Clara University's Markkula Center for Applied Ethics and the Vatican to develop a practical, incremental roadmap for technology ethics. The handbook includes a five-stage maturity model with specific, measurable steps that enterprises can take at each level of maturity. It also promotes an operational approach for implementing ethics as an ongoing practice, akin to DevSecOps for ethics. The core idea is to bring legal, technical and business teams together in the early stages of an AI initiative to root out ethical problems when they're much cheaper to fix than after the system is implemented.

6. ISO/IEC 23894:2023 Information technology -- AI -- guidance on risk management

This standard describes how an organization can manage risks specifically related to AI. It can help standardize the technical language that characterizes the underlying principles and how these principles apply to developing, provisioning or offering AI systems. It also covers policies, procedures and practices to assess, treat, monitor, review and record risk. It's highly technical and oriented to engineers rather than business experts.

7. NIST AI Risk Management Framework

AI RMF 1.0 guides government agencies and the private sector in managing new AI risks and promoting responsible AI. According to NIST, the framework is "intended to be voluntary, rights-preserving, non-sector-specific and use-case agnostic."

8. Partnership on AI

This nonprofit partnership of academic, civil society, industry and media organizations explores fundamental assumptions about how to build AI systems that benefit all stakeholders. Founding members include executives from Amazon, Facebook, Google, Google DeepMind, Microsoft and IBM. The partnership created an AI incident database to assess, manage and communicate newly discovered AI risks and harms. It's also stewarding a research framework for AI agent governance.

9. Psychopathia Machinalis

This book by Nell Watson and Ali Hessami provides a starting point for characterizing and communicating the different types of systemic AI maladies, akin to the medical taxonomies for mental disorders. It's characterized on its website as "a conceptual framework for a preliminary synthetic nosology within machine psychology, intended to categorize and interpret these maladaptive AI behaviors." The book discusses a broad taxonomy of 32 categories across seven axes of dysfunction that affect the reliability, safety and alignment of AI with human goals. It also provides tools to understand the root causes and develop countermeasures and mitigation strategies.

10. Safer Agentic AI

This book by Nell Watson and Ali Hessami provides a multidisciplinary foundation for governing agentic AI systems. The framework develops comprehensive terminology and guidance for developers, risk management teams and oversight bodies to enable safer agentic systems. Focus areas include goal alignment, safe operations, transparency, security, value alignment, accurate information management, kill switches and contextual understanding.

11. Stanford Institute for Human-Centered Artificial Intelligence

This institute provides ongoing research and guidance on best practices regarding human-centered AI technologies and applications. One of its early initiatives, in collaboration with Stanford Medicine, is Responsible AI for Safe and Equitable Health, which addresses ethical and safety issues surrounding AI in health and medicine.

12. World Economic Forum

A WEF white paper, "AI Agents in Action: Foundations for Evaluation and Governance," provides an overview of trends and best practices for evaluating, managing and governing AI agents responsibly. It outlines a technical architecture for safe agentic AI and introduces terminology to clarify roles, describe autonomy levels and contextualize innovations. One of its goals is to support enterprises in moving from one-off AI compliance checks to continuous monitoring and oversight across multi-agent ecosystems.

Editor's note: This article was updated in April 2026 to reflect the latest developments in ethical AI framework resources.

George Lawton is a journalist based in London. Over the last 30 years, he has written more than 3,000 stories about computers, communications, knowledge management, business, health and other areas that interest him.