How businesses use KPIs to measure AI's performance

Business decision-makers under heavy pressure to justify the value of their AI projects look to key performance indicators for salvation. KPIs reveal AI's efficiency and ROI.

Most businesses have AI projects in progress or planned for launch within the next year but no effective strategy to measure the return on that investment. To justify their AI deployments, demonstrate a positive ROI and determine whether "the juice is worth the squeeze," business leaders must first define the business value they expect AI to deliver and then establish key performance indicators to track that value.

KPIs not only measure an AI project's ROI; they can also be used to assess and improve the project's efficiency. Suppose, for example, a credit card company uses a traditional AI deployment to develop pattern recognition tools for its fraud-detection department, with machine learning (ML) creating foundational models trained on large data sets. In assessing the AI deployment's effectiveness, KPIs can determine the tool's speed, accuracy and efficiency as well as its ROI.

A generative AI (GenAI) deployment follows a similar process -- with one exception: Since GenAI tools generate content, KPIs must measure more than speed, accuracy and efficiency. After training a GenAI model, developers must set benchmarks for creativity, relevance and task-specific factors such as the content's reading grade level. As a result, KPIs might require a more subjective approach, thereby making an assessment more challenging.

Know these AI KPIs

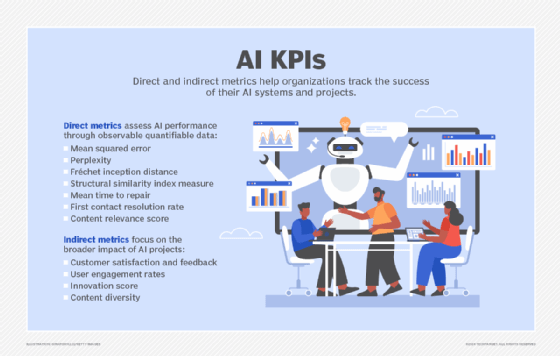

AI KPIs can be divided into two categories: direct and indirect.

Direct metrics

Mean squared error (MSE) is a critical direct metric in ML and GenAI projects. It measures the average squared difference between the output generated and the intended result. MSE helps quantify errors in the training process, and because each error is squared, large misses count for more than small ones. The greater the MSE, the further the tool is from accomplishing its objective.
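To make the calculation concrete, here is a minimal Python sketch of MSE. The function name and the fraud-risk scores are hypothetical, chosen only to echo the credit card example; they are not drawn from a real deployment.

```python
def mean_squared_error(predicted, actual):
    """Average of the squared differences between outputs and intended results."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not predicted:
        raise ValueError("need at least one value")
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(predicted)


# Hypothetical fraud-risk scores from a model versus the labeled outcomes
# (1.0 = confirmed fraud, 0.0 = legitimate transaction).
predicted_scores = [0.9, 0.2, 0.4, 0.8]
labeled_outcomes = [1.0, 0.0, 0.0, 1.0]

mse = mean_squared_error(predicted_scores, labeled_outcomes)
print(f"MSE: {mse:.4f}")
```

With these illustrative numbers the MSE works out to roughly 0.0625. Most of it comes from the third transaction, where the model scored a legitimate purchase at 0.4.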

Perplexity is also a common metric, particularly for GenAI models that produce text output. It measures how well a language model predicts a sample, in effect gauging how many ways the tool might answer the question "What word comes next?" If, at each evaluation point, the model is choosing among a small number of likely "next word" candidates, the result is a low perplexity score, indicating the model is more confident in its predictions and fits the text more closely. Lower perplexity suggests a model is better at producing humanlike text, although it does not by itself guarantee the content is factually accurate.
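A small sketch can show why perplexity is often read as the number of "next word" candidates. It assumes the evaluator already has the probability the model assigned to each actual next word in a sample; the function name and the probabilities are illustrative, not output from a real model.

```python
import math


def perplexity(token_probabilities):
    """Exponential of the average negative log-probability of the observed tokens."""
    if not token_probabilities:
        raise ValueError("need at least one token probability")
    if any(p <= 0.0 or p > 1.0 for p in token_probabilities):
        raise ValueError("probabilities must be in the range (0, 1]")
    average_negative_log = -sum(math.log(p) for p in token_probabilities) / len(
        token_probabilities
    )
    return math.exp(average_negative_log)


# Hypothetical probabilities a model gave to the word that actually came next.
confident_model = [0.5, 0.5, 0.5, 0.5]   # as if choosing between 2 candidates
uncertain_model = [0.1, 0.1, 0.1, 0.1]   # as if choosing among 10 candidates

print(perplexity(confident_model))
print(perplexity(uncertain_model))
```

In this toy case the first model's perplexity comes out at about 2 and the second at about 10, matching the number of equally likely candidates each one was effectively weighing.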

For GenAI applications that produce media and images, the Fréchet inception distance (FID) can be a helpful metric. Introduced in 2017, FID measures the quality of AI-generated images by comparing them with real ones. To get an FID score, evaluators pass a set of reference images through a separate, pretrained image-recognition network (the Inception model that gives the metric its name), which extracts information about real image features such as shapes, coloration and textures. The same network then extracts features from the generated images, and the score compares the statistical distribution of the two sets of features. The higher the FID score, the greater the distance between the expected and actual distribution of image features, so lower scores indicate generated images that more closely resemble real ones.
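The distance at the heart of FID can be sketched in a few lines, with two loud caveats. First, a real FID score uses features extracted by a pretrained Inception network; the feature vectors below are hypothetical stand-ins. Second, the standard formula uses full covariance matrices, whereas this sketch assumes each feature varies independently, which reduces the calculation to a per-feature comparison of means and standard deviations. Treat it as an illustration of the idea, not a replacement for an established FID implementation.

```python
from statistics import mean, stdev


def simplified_frechet_distance(real_features, generated_features):
    """Frechet distance between two feature sets, assuming independent features.

    Under that assumption the distance is the sum, over feature dimensions,
    of the squared gap in means plus the squared gap in standard deviations.
    """
    if len(real_features) < 2 or len(generated_features) < 2:
        raise ValueError("need at least two feature vectors in each set")
    dimensions = len(real_features[0])
    distance = 0.0
    for d in range(dimensions):
        real_column = [vector[d] for vector in real_features]
        generated_column = [vector[d] for vector in generated_features]
        mean_gap = mean(real_column) - mean(generated_column)
        spread_gap = stdev(real_column) - stdev(generated_column)
        distance += mean_gap ** 2 + spread_gap ** 2
    return distance


# Hypothetical 2-dimensional features for three real and three generated images.
real_images = [[0.2, 0.8], [0.4, 0.6], [0.6, 0.4]]
similar_batch = [[0.25, 0.75], [0.4, 0.6], [0.55, 0.45]]
drifted_batch = [[0.9, 0.1], [1.0, 0.0], [1.1, 0.2]]

print(simplified_frechet_distance(real_images, similar_batch))
print(simplified_frechet_distance(real_images, drifted_batch))
```

The batch whose features sit close to the real images should score near zero, while the drifted batch scores noticeably higher, which is the behavior the metric is designed to capture.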

Another common image-based metric is the structural similarity index measure (SSIM), introduced in 2004 and widely used in image and video quality assessment, which gauges the perceived quality of a generated image compared with an original reference image. While the FID assesses the distribution of macro-level image features, such as shapes, the SSIM examines local, pixel-level characteristics, such as luminance, contrast and structure. FID is best used across sets of images to determine if the AI tool generates images that generally conform to real images at a high level, while SSIM compares one specific image to another to determine their similarities.
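For a sense of how SSIM turns luminance and contrast into a single score, the following sketch applies the SSIM formula once across a whole grayscale image. That is a simplification: production implementations compute the score over small sliding windows and average the results. The pixel values are hypothetical, and the constants follow the commonly used defaults of 0.01 and 0.03 times the dynamic range.

```python
def global_ssim(image_x, image_y, dynamic_range=255.0):
    """Single-window SSIM over two equal-length lists of grayscale pixel values."""
    if len(image_x) != len(image_y) or not image_x:
        raise ValueError("images must be non-empty and the same size")
    n = len(image_x)
    c1 = (0.01 * dynamic_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * dynamic_range) ** 2  # stabilizes the contrast/structure term

    mean_x = sum(image_x) / n
    mean_y = sum(image_y) / n
    var_x = sum((x - mean_x) ** 2 for x in image_x) / n
    var_y = sum((y - mean_y) ** 2 for y in image_y) / n
    covariance = sum(
        (x - mean_x) * (y - mean_y) for x, y in zip(image_x, image_y)
    ) / n

    numerator = (2 * mean_x * mean_y + c1) * (2 * covariance + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


# Hypothetical 6-pixel grayscale strips.
original = [10, 50, 90, 130, 170, 210]
slightly_noisy = [12, 48, 95, 128, 160, 215]
reversed_gradient = [210, 170, 130, 90, 50, 10]

print(global_ssim(original, slightly_noisy))
print(global_ssim(original, reversed_gradient))
```

An image compared with itself scores 1. The lightly altered strip should stay close to that, while the reversed gradient, which has the same brightness and contrast but the opposite structure, scores far lower.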

More generic KPIs for businesses and IT also apply to AI projects, including the following:

- First contact resolution rate. For AI, this KPI can gauge what percentage of prompts submitted to the tool get a satisfactory response the first time, without being modified and resubmitted. It complements content relevance scores.

- Content relevance score. For text-based models, this KPI quantifies how closely AI-generated content matches business or creative needs.

- Money saved. It's important to measure both the direct and indirect savings that AI can generate. Direct savings, for example, could be measured by how cost-effectively an AI deployment performs a task along the supply chain compared with a human manager. Indirect savings at a public relations firm, for instance, could be measured by how much AI reduces the time needed to generate a press release compared with a human staff member.

- Money made. AI's effectiveness can also be measured by the role it plays in building revenue streams, such as enabling new product lines, expanding the customer base or increasing average sales volumes.
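As one illustration of how a generic KPI from the list above translates to AI, the first contact resolution rate can be computed from a log of prompt sessions. The log format here, with an `attempts` count and a `resolved` flag per session, is an assumption made for the sketch; a real tool would expose its own fields.

```python
def first_contact_resolution_rate(sessions):
    """Share of sessions resolved on the first prompt, with no resubmission."""
    if not sessions:
        raise ValueError("need at least one session")
    resolved_first_time = sum(
        1 for s in sessions if s["resolved"] and s["attempts"] == 1
    )
    return resolved_first_time / len(sessions)


# Hypothetical session log: how many times the prompt was submitted and
# whether the user ended up with a satisfactory response.
session_log = [
    {"attempts": 1, "resolved": True},
    {"attempts": 3, "resolved": True},
    {"attempts": 1, "resolved": True},
    {"attempts": 2, "resolved": False},
]

fcr = first_contact_resolution_rate(session_log)
print(f"First contact resolution rate: {fcr:.0%}")
```

In this made-up log, two of the four sessions were resolved on the first attempt, so the rate is 50%.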

Indirect metrics

Indirect metrics are just as important as direct ones, especially for GenAI, where subjective measures of creativity and user satisfaction are pivotal. These metrics focus on higher-level business areas, including the following:

- Customer satisfaction. Direct human feedback, such as "rate this answer" scores, coupled with indirect feedback, such as the number of times a user restates a question or expresses frustration with the chatbot's responses, can help evaluate how well AI meets its intended purpose.

- User engagement rates. KPIs such as a user's session length or frequency of return to an application are strong indirect metrics for GenAI tools that generate creative outputs like art or music.

- Innovation scores. This metric measures how frequently GenAI produces novel, useful ideas or creative outputs that meet specific business goals.

- Content diversity. This metric evaluates a GenAI system's ability to produce varied, high-quality outputs across different contexts or domains.
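Indirect metrics are harder to pin down in code, but some can be approximated. One widely used proxy for content diversity is the share of distinct word sequences (n-grams) across a batch of outputs, sometimes called distinct-n. The sketch below is an assumption about how a team might operationalize the metric; it captures variety only, not quality, and the sample outputs are invented.

```python
def distinct_n(texts, n=2):
    """Ratio of unique n-grams to total n-grams across a batch of outputs."""
    unique_ngrams = set()
    total_ngrams = 0
    for text in texts:
        tokens = text.lower().split()
        for i in range(len(tokens) - n + 1):
            unique_ngrams.add(tuple(tokens[i:i + n]))
            total_ngrams += 1
    return len(unique_ngrams) / total_ngrams if total_ngrams else 0.0


# Hypothetical batches of generated product taglines.
repetitive_outputs = [
    "fresh coffee delivered daily",
    "fresh coffee delivered daily",
]
varied_outputs = [
    "fresh coffee delivered daily",
    "wake up to better mornings",
]

print(distinct_n(repetitive_outputs))
print(distinct_n(varied_outputs))
```

The repetitive batch scores 0.5 because every two-word sequence appears twice, while the varied batch scores 1.0 because no sequence repeats.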

How KPIs measure AI's value

AI-related direct and indirect KPIs quantify the effectiveness and efficiency of AI tools, making it easier to calculate ROI. Effective KPIs help track AI's overall business impact. Measuring GenAI ROI should also account for scalability by quantifying how many outputs a tool can generate in a given time period while maintaining quality.

Consider a business using GenAI to enhance its customer experiences. If one KPI shows a 60% reduction in the time required to generate personalized responses to customers in a real-time chat and another KPI shows a parallel increase in customer satisfaction, the two metrics would likely indicate a successful AI initiative to improve CX. And comparing the cost of the AI initiative to the expense of expanding a human contact center to achieve similar results would likely yield a positive ROI for the AI project.
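The ROI comparison in that scenario is simple arithmetic, sketched here with hypothetical figures. It assumes the benefit of the AI initiative is the avoided cost of expanding the human contact center to reach similar results; neither dollar amount comes from a real project.

```python
def return_on_investment(benefit, cost):
    """ROI as net benefit divided by cost (1.0 means a 100% return)."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


# Hypothetical annual figures for the customer experience scenario.
ai_initiative_cost = 400_000            # licenses, integration, oversight
contact_center_expansion_cost = 1_000_000  # avoided by using AI instead

roi = return_on_investment(contact_center_expansion_cost, ai_initiative_cost)
print(f"ROI: {roi:.0%}")
```

Under these assumptions the AI project returns 150%. If the KPIs had shown customer satisfaction falling, the avoided-cost comparison would no longer hold, because the two options would not be delivering similar results.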

Editor's note: This article was updated in April 2026 to reflect the latest developments in AI KPI measurement techniques.

John Burke is CTO and a research analyst at Nemertes Research. Burke joined Nemertes in 2005 with nearly two decades of technology experience. He has worked at all levels of IT, including as an end-user support specialist, programmer, system administrator, database specialist, network administrator, network architect and systems architect.

Jerald Murphy is senior vice president of research and consulting with Nemertes Research. With more than three decades of technology experience, Murphy has worked on a range of technology topics, including neural networking research, integrated circuit design, computer programming and global data center design. He was also the CEO of a managed services company.