Hyper-converged and composable architectures transform IT

Hyper-convergence, up-and-coming HCI 2.0 and composable infrastructure can help you achieve your IT and business goals. Learn how they do so and where their strengths and weaknesses lie.

Demands placed on infrastructure fluctuate due to changing business requirements and ever-evolving technology challenges. Two of these challenges are digital transformation, an overarching term covering a range of issues and technologies, and a more technology-specific scenario known as edge computing.

Digital transformation has IT becoming part of the business, not just supporting it. And while edge computing has shown up more prominently on organizations' radar of late due to the increasing awareness of the benefit of processing data at the network's edge, it's also enabling digital transformation. What underlies both edge computing and digital transformation -- like so many other challenges facing IT shops -- is an attempt to speed up the deployment of IT resources, while also minimizing cost and maximizing efficiency.

But why, exactly? What underlying factors are driving these trends? More often than not, it comes down to data processing and analysis, both of which are essential to success in this age of big data and analytics. For digital transformation, this means making infrastructure more agile to improve the efficiency and effectiveness of how budget and resources are allocated, while simplifying and lowering the cost of managing IT infrastructure in the process. For edge computing, it means delivering scalable IT resources at or near the devices generating the data that needs to be processed and analyzed.

Previously, we noted how hyper-convergence can help you achieve these goals. Here, we will delve into that in more detail. We'll also explain why some think the recently evolved progeny of hyper-converged infrastructure -- disaggregated hyper-converged and composable architecture -- show great promise as well.

How hyper-converged infrastructure helps

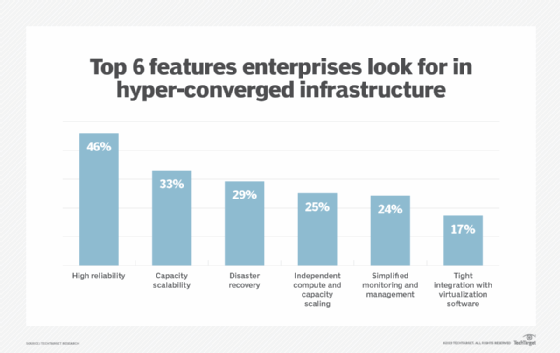

Hyper-converged infrastructure tightly integrates compute, storage and network resources with software-defined and virtualization technologies into nodes or appliances (and occasionally racks, such as Dell EMC VxRack System Flex). Hyper-converged systems and software are available from dominant players, including Dell EMC, Hewlett Packard Enterprise (HPE), Nutanix and VMware, as well as the likes of Ctera Networks, DataCore, Datrium, HiveIO, NetApp, Pivot3, Robin Storage, Scale Computing, StorMagic and others.

The point of hyper-convergence is to enable users to easily deploy and scale server resources as needed, wherever needed. It provides the speed and agility necessary to quickly add resources and process data, both of which are essential in the new digital economy.

Do you need more storage or compute? Simply attach another hyper-converged node. Also, because hyper-converged systems usually come in compact packages that easily fit at or near where those functions must be performed, hyper-converged infrastructure works especially well for edge computing.

Is your central data center or cloud becoming overwhelmed with analyzing petabytes (or more) of data constantly flowing in from IoT devices and smart sensors? Try deploying remotely manageable hyper-converged systems closer to the data source. Perhaps you manage a national or regional grocery chain? Or maybe a large real estate firm or financial advisory and analyst conglomerate with remote offices and branch offices (ROBOs) across the country?

Implementing localized hyper-converged infrastructure systems may be your ticket to easing data traffic and network congestion between geographically dispersed stores or ROBOs and the home office. Another benefit of using hyper-converged infrastructure is faster data analysis, which could improve areas such as customer service and, ultimately, the bottom line.

Hyper-converged infrastructure 2.0: Disaggregation rising

Hyper-converged infrastructure isn't perfect and doesn't work for all use cases, however. Because standard hyper-converged systems feature an aggregated infrastructure, they've had one glaring problem: Users can't linearly scale individual resources. So, if you want to add either storage or compute power, you generally have to add both in lockstep, which wastes money on unneeded resources.

Several vendors are addressing this problem with what some are calling HCI 2.0. Their offerings include hyper-converged hardware, such as Datrium DVX, HPE Nimble Storage dHCI and NetApp HCI; software, such as VMware vSAN working with Lightbits Labs LightOS, demonstrated at VMworld last summer; and cloud services, such as AWS Outposts and Microsoft Azure Stack.

Unlike standard hyper-convergence systems, which aggregate server resources, these HCI 2.0 products disaggregate storage and compute. That means they separate storage from compute to enable customers to buy and add only what they need, when they need it. This approach lets customers scale those IT resources independently of one another. With disaggregated hyper-converged infrastructure products, there is no more buying additional processing power when all you need is more storage, and vice versa.
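To make the lockstep-scaling problem concrete, here is a rough sketch of the arithmetic. The node sizes (32 cores and 20 TB per node) and the workload figures are assumptions chosen purely for illustration, not vendor specifications, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Demand:
    """Resources a workload needs (illustrative figures only)."""
    cores: int
    storage_tb: int


# Hypothetical node sizes, assumed for this example only.
CORES_PER_NODE = 32
TB_PER_NODE = 20


def aggregated_nodes(demand: Demand) -> int:
    """Standard HCI: each node adds compute and storage together,
    so the scarcer resource dictates the node count."""
    return max(
        math.ceil(demand.cores / CORES_PER_NODE),
        math.ceil(demand.storage_tb / TB_PER_NODE),
    )


def disaggregated_nodes(demand: Demand) -> tuple[int, int]:
    """Disaggregated HCI: compute and storage nodes are sized separately."""
    return (
        math.ceil(demand.cores / CORES_PER_NODE),
        math.ceil(demand.storage_tb / TB_PER_NODE),
    )


# A storage-heavy workload: modest compute, lots of capacity.
demand = Demand(cores=64, storage_tb=200)

hci_nodes = aggregated_nodes(demand)
stranded_cores = hci_nodes * CORES_PER_NODE - demand.cores
compute_nodes, storage_nodes = disaggregated_nodes(demand)

print(f"Aggregated: {hci_nodes} nodes, {stranded_cores} cores unused")
print(f"Disaggregated: {compute_nodes} compute + {storage_nodes} storage nodes")
```

Under these assumed numbers, the aggregated design buys 10 full nodes to satisfy the storage requirement and leaves 256 cores idle, while the disaggregated design needs only two compute nodes alongside 10 storage nodes.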

Composable, the most agile infrastructure?

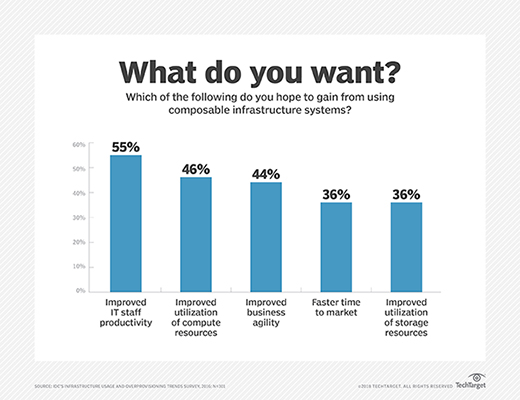

Emerging composable infrastructure, the next stage in the evolution of the converged data center, is another option. Composable infrastructure is particularly good for organizations looking to modernize their infrastructure to facilitate digital transformation.

Composable architecture takes disaggregation even further than HCI 2.0 by combining the rack-scale design of converged infrastructure with disaggregated hyper-converged infrastructure features and the ability -- and here's the kicker -- to programmatically create virtual servers on the fly. Here, a software-defined virtualization layer collects disparate IT resources and pools them into discrete buckets of compute, storage, network fabric, memory and so on. Composable infrastructure then provides those resources as services that administrators can draw on to quickly stand up (and then take down) virtual servers on demand to meet the needs of specific use cases.

No reconfiguring of hardware is required, as all infrastructure reconfigurations are virtual.
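The pool-and-compose idea can be sketched in a few lines of code. This is a toy model under stated assumptions, not any vendor's API: the `ResourcePool` class, its `compose` and `decompose` methods, and the capacity figures are all hypothetical names and values invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Toy model of pooled, disaggregated resources (hypothetical)."""
    cores: int
    memory_gb: int
    storage_tb: int
    # Maps a server name to the (cores, memory_gb, storage_tb) it holds.
    servers: dict = field(default_factory=dict)

    def compose(self, name: str, cores: int, memory_gb: int, storage_tb: int) -> None:
        """Carve a virtual server out of the free pool."""
        if name in self.servers:
            raise ValueError(f"server {name!r} already exists")
        if (cores > self.cores or memory_gb > self.memory_gb
                or storage_tb > self.storage_tb):
            raise RuntimeError("not enough free resources in the pool")
        self.cores -= cores
        self.memory_gb -= memory_gb
        self.storage_tb -= storage_tb
        self.servers[name] = (cores, memory_gb, storage_tb)

    def decompose(self, name: str) -> None:
        """Tear a server down and return its resources to the pool."""
        cores, memory_gb, storage_tb = self.servers.pop(name)
        self.cores += cores
        self.memory_gb += memory_gb
        self.storage_tb += storage_tb


# Assumed rack capacity, for illustration only.
pool = ResourcePool(cores=128, memory_gb=1024, storage_tb=500)

# Stand up a server for an analytics job, then release it when done.
pool.compose("analytics", cores=32, memory_gb=256, storage_tb=100)
free_cores_during_job = pool.cores
pool.decompose("analytics")
free_cores_after_job = pool.cores
```

The point of the sketch is that both operations are bookkeeping changes in software. Nothing is recabled or physically moved, and the resources released by one workload are immediately available to the next.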

You would think composable infrastructure is the perfect answer to the IT infrastructure requirements of digital transformation. But while composability has great promise, and many vendors have products on the market, a dearth of interoperability standards means vendor lock-in is a real concern. The industry is aware of this issue and it appears something is being done about it.

For example, when Western Digital introduced its composable OpenFlex family, the OpenFlex F3100 Series Fabric Device, at the Flash Memory Summit last summer, it simultaneously announced its participation in what's being called the Open Composable Compliance Lab. Western Digital said the lab, developed in partnership with Broadcom and Mellanox with support from other composable vendors like DriveScale and Kaminario, intends to validate products and bring interoperability to the composable infrastructure market through open standards and what it called the Open Composability API, enabling vendor-neutral solutions.

Available composable infrastructure products

Composable architecture products have many similarities and some significant differences. Compare the offerings from three of the leading composable vendors -- Cisco, Dell EMC and HPE -- and you will see what we mean. Because this is a new technology market, there aren't that many products available. Here's a rundown of some composable infrastructure products available today:

-- Cisco Unified Computing System

-- Dell EMC PowerEdge MX platform

-- HPE Synergy

-- HPE SimpliVity with Composable Fabric platforms

-- Western Digital OpenFlex F3100 Series Fabric Device

-- Datrium DVX

-- DriveScale

-- Liqid PCIe fabric switch

-- Kaminario Flex software

-- Lenovo TruScale hardware as a service

-- Intel Rack Scale Design reference architecture

Advances in hyper-converged infrastructure technology and, more specifically, the continuing development of disaggregated HCI 2.0 hyper-convergence and composable infrastructure -- including the start of composable interoperability standards -- promise to make infrastructure flexible enough to meet the increasingly complex challenges facing organizations today. These infrastructure technologies should enable IT shops to better meet the agility and speed requirements of business in this age of big data, IoT and digital transformation. Their goal is to let administrators more quickly allocate and scale server resources to specific workloads and process data whenever and wherever needed -- even at the network's edge.