What is GPT-3? Everything you need to know

GPT-3, or the third-generation Generative Pre-trained Transformer, is a neural network machine learning (ML) model trained using internet data to generate any type of text. Developed by OpenAI, it requires a small amount of input text to generate large volumes of relevant and sophisticated machine-generated text.

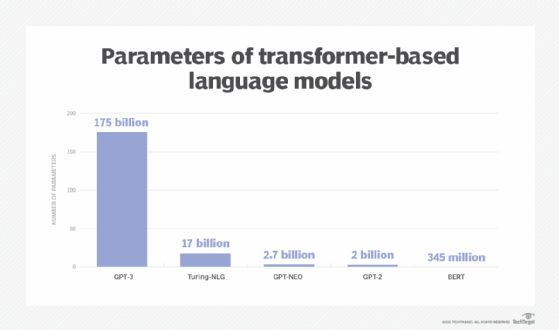

GPT-3's deep learning neural network is a model with more than 175 billion ML parameters. To put things into perspective, the largest trained language model before GPT-3 was Microsoft's Turing Natural Language Generation (NLG) model, which had 17 billion parameters. As of early 2021, GPT-3 was the largest neural network ever produced. As a result, GPT-3 was better than any prior model at producing text that seems like a human could have written it.

GPT-3 and similar language processing models are commonly referred to as large language models (LLMs). Industry experts criticized GPT-3's developer OpenAI and its CEO Sam Altman for switching from an open source to a closed source approach in 2019. Other LLM developers include Google DeepMind, Meta AI, Microsoft, Nvidia and X.

What can GPT-3 do?

GPT-3 processes input text to perform a variety of natural language tasks. It uses both NLG and natural language processing to understand and generate natural human language text. Generating content understandable to humans has historically been a challenge for machines that don't know the complexities and nuances of language. GPT-3 has been employed to create articles, poetry, stories, news reports and dialogue, using a small amount of input text to produce large amounts of copy.

GPT-3 can create anything with a text structure -- not just human language text. A key GPT-3 capability is understanding and generating coherent and contextually relevant responses to a wide range of prompts. It's highly versatile in tasks such as writing essays and stories, answering questions, summarizing text, composing poetry and generating programming code.

GPT-3's large size lets it capture complex patterns in text data and generate fluent and contextually appropriate output. This makes it valuable for automating content creation and enhancing natural language understanding tasks. GPT-3's ability to understand and generate humanlike text opens up applications in customer service, content creation, language translation and education.

GPT-3 examples and use cases

One notable GPT-3 use case is OpenAI's ChatGPT language model. ChatGPT is a variant of the GPT-3 model, optimized for human dialogue, that can ask follow-up questions, admit mistakes it has made and challenge incorrect premises. ChatGPT was made free to the public during its research preview to collect user feedback. It was designed in part to reduce the possibility of harmful or deceitful responses.

Another common example is OpenAI's Dall-E, an AI image-generating neural network built on a 12 billion-parameter version of GPT-3. Dall-E was trained on a data set of text-image pairs and can generate images from user-submitted text prompts.

Using only a few snippets of example code text, GPT-3 can also create workable code that can be run without error, as programming code is a form of text. Using a bit of suggested text, one developer has combined the user interface prototyping tool Figma with GPT-3 to create websites by describing them in a sentence or two. GPT-3 has even been used to clone websites by providing a URL as suggested text. Developers are using GPT-3 in several ways, including generating code snippets, regular expressions, plots and charts from text descriptions, Excel functions and other development applications.

GPT-3 is starting to be used in healthcare. One 2022 study explored GPT-3's ability to aid in the diagnosis of neurodegenerative diseases such as dementia. In that study, the model was used to detect common symptoms, such as language impairment in patient speech, as part of the diagnosis process.

AI tools based on GPT-3 are also being used for the following applications:

- Creating memes, quizzes, recipes, comic strips, blog posts and advertising copy.

- Writing music, jokes and social media posts.

- Automating conversational tasks by responding to any text that a person types into the computer with a new piece of text appropriate to the context.

- Translating text into programmatic commands.

- Translating programmatic commands into text.

- Performing sentiment analysis.

- Extracting information from contracts.

- Generating a hexadecimal color based on a text description.

- Writing boilerplate code.

- Finding bugs in existing code.

- Mocking up websites.

- Generating simplified summarizations of text.

- Translating between programming languages.

- Performing malicious prompt engineering and phishing attacks.

How does GPT-3 work?

GPT-3 is a language prediction model. This means that it has a neural network ML model that can take input text and transform it into what it predicts the most useful result will be. These systems are trained using a vast body of internet text to spot patterns in a process called generative pre-training. GPT-3 was trained on several data sets, each with different weights, including Common Crawl, WebText2 and Wikipedia.
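The idea of predicting text from patterns in training data can be illustrated with a deliberately tiny stand-in. The sketch below is not how GPT-3 is implemented. It assumes a toy corpus and a simple word-pair (bigram) count in place of a neural network, and the function names are hypothetical. It only shows the general principle: count which words follow which, then predict the most common follower.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus: str) -> dict:
    """Count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy training text (an assumption for illustration, not real training data).
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram_model(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" three times, more than any other word
print(predict_next(model, "dog"))  # None, because "dog" never appears in the corpus
```

A model like GPT-3 replaces the count table with billions of learned parameters and looks at far more context than one preceding word, but the goal of predicting a plausible continuation is the same.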

GPT-3 is first trained through a supervised testing phase and then a reinforcement phase. When training ChatGPT, a team of trainers asks the language model a question with a correct output in mind. If the model answers incorrectly, the trainers tweak the model to teach it the right answer. The model can also give several answers that trainers rank from best to worst.
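One common way to make use of a best-to-worst ranking is to break it into pairwise comparisons, each saying that one answer was preferred over another. The sketch below assumes that approach. The function name and the sample answers are hypothetical, and it stops at producing the pairs rather than training anything.

```python
from itertools import combinations


def ranking_to_pairs(ranked_answers):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs.

    combinations() keeps the input order, so the first item of every pair
    is always the one the trainer ranked higher.
    """
    return list(combinations(ranked_answers, 2))


# Hypothetical answers, already ordered from best to worst by a trainer.
ranked = ["answer A", "answer B", "answer C"]

for preferred, rejected in ranking_to_pairs(ranked):
    print(f"{preferred} is preferred over {rejected}")
```

Three ranked answers yield three comparisons, and in general n answers yield n * (n - 1) / 2.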

GPT-3 has more than 175 billion ML parameters and is significantly larger than its predecessors, including previous LLMs such as Bidirectional Encoder Representations from Transformers (BERT). Parameters are the parts of an LLM that define its skill on a problem, such as generating text. LLM performance generally scales as more data and parameters are added to the model.
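To get a feel for what 175 billion parameters means in practice, the rough calculation below estimates how much memory the parameters alone would occupy. The bytes-per-parameter values are assumptions about numeric precision, not figures from OpenAI, and the estimate ignores everything else a running model needs.

```python
def weight_memory_gb(num_parameters: int, bytes_per_parameter: int) -> float:
    """Approximate storage for the parameters alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


GPT3_PARAMETERS = 175_000_000_000

# Assumed storage precisions; the precision actually used is not stated in this article.
for label, num_bytes in [("32-bit floats", 4), ("16-bit floats", 2)]:
    print(f"{label}: {weight_memory_gb(GPT3_PARAMETERS, num_bytes):.0f} GB")
```

Under these assumptions the parameters take roughly 700 GB at 32-bit precision and 350 GB at 16-bit precision.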

When a user provides text input, the system analyzes the language and uses a text predictor based on its training to create the most likely output. The model can be fine-tuned, but even without much additional tuning or training, the model generates high-quality output text that feels similar to what humans would produce.
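The phrase "most likely output" can be made concrete with a small sketch. It assumes the model has already assigned a raw score to each candidate next word. The candidate words and scores below are invented for illustration. The code converts the scores into probabilities with a softmax, then either takes the top candidate or samples one at random in proportion to its probability.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Convert raw scores into probabilities that sum to 1."""
    scaled = [score / temperature for score in scores]
    largest = max(scaled)  # subtracting the max keeps exp() numerically stable
    exponentials = [math.exp(value - largest) for value in scaled]
    total = sum(exponentials)
    return [value / total for value in exponentials]


def most_likely(candidates, scores):
    """Greedy choice: return the candidate with the highest score."""
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return candidates[best_index]


def sample(candidates, scores, temperature=1.0, seed=None):
    """Draw one candidate at random, weighted by its softmax probability."""
    rng = random.Random(seed)
    probabilities = softmax(scores, temperature)
    return rng.choices(candidates, weights=probabilities, k=1)[0]


# Hypothetical candidate next words and scores for the prompt "The sky is".
candidates = ["blue", "clear", "falling"]
scores = [3.0, 2.0, 0.5]

print(most_likely(candidates, scores))
print([round(p, 3) for p in softmax(scores)])
print(sample(candidates, scores, temperature=0.8, seed=0))
```

A lower temperature concentrates probability on the top candidate, while a higher one spreads it out and makes the output more varied.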

What are the benefits of GPT-3?

GPT-3 advantages include the following:

- Limited input and training. When a large amount of text needs to be generated from a machine based on some small amount of text input, GPT-3 provides a good solution. LLMs, like GPT-3, are able to provide decent outputs when given a handful of training examples.

- Large application range. GPT-3 also has a range of AI applications. It's task-agnostic, meaning it can perform many tasks without fine-tuning.

- Fast, automated output. As with any automation, GPT-3 can handle quick, repetitive tasks, enabling humans to handle more complex tasks that require a higher degree of critical thinking. There are many situations where it isn't practical or efficient to enlist a human to generate text output, or there might be a need for automatic text generation that seems human. For example, customer service centers use GPT-3 to answer customer questions and support chatbots. Sales teams use it to connect with potential customers. And marketing teams write copy using GPT-3. This type of content requires fast production and is low risk, meaning that if there's a mistake in the copy, the consequences are relatively minor.

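- Easy access. GPT-3 is also easy to use. Although the model itself is far too large to run on a consumer laptop or smartphone, it's offered as a cloud service, so applications on those devices can use it without running the model locally.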
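The "handful of training examples" in the first benefit above is usually supplied inside the prompt itself rather than through retraining. The sketch below shows one way such a prompt might be assembled. The function name, the "Input:"/"Output:" labels and the sample task are all assumptions for illustration, and the code only builds the text without sending it to any model.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples and a new query into one prompt string."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The prompt ends with an unanswered "Output:" for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical sentiment-labeling task with two worked examples.
prompt = build_few_shot_prompt(
    instruction="Label the sentiment of each review as positive or negative.",
    examples=[
        ("The battery lasts all day.", "positive"),
        ("It broke after a week.", "negative"),
    ],
    query="Setup was quick and painless.",
)
print(prompt)
```

Ending the prompt on an open "Output:" line is a design choice that nudges a text-completion model to continue with the label, following the pattern the examples establish.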

What are the risks and limitations of GPT-3?

While GPT-3 is remarkably large and powerful, it has several limitations and risks associated with its use.

Limitations

- Pre-training. GPT-3 isn't constantly learning. It has been pre-trained, meaning it doesn't have an ongoing, long-term memory that learns from each interaction.

- Limited input size. Transformer architectures such as GPT-3 have a limited input size. A user can't provide a lot of text as input for the output, which can limit certain applications. GPT-3 has a prompt limit of about 2,048 tokens.

- Slow inference time. GPT-3 also suffers from slow inference time, since it takes a long time for the model to generate results.

- Lack of explainability. GPT-3 is prone to the same problems many neural networks face, including lack of ability to explain and interpret why certain inputs result in specific outputs.
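Applications typically work around the input size limit listed above by trimming text before sending it. The sketch below is a simplified illustration. It assumes that whitespace-separated words stand in for tokens (a real tokenizer splits text differently, so real counts will differ), that the prompt and the generated output share the same window, and that the most recent text is the most useful to keep. The function name and the 256-token reserve are hypothetical.

```python
MAX_PROMPT_TOKENS = 2048  # approximate limit cited in the list above


def truncate_prompt(text, max_tokens=MAX_PROMPT_TOKENS, reserved_for_output=256):
    """Keep only the most recent words that fit within the token budget."""
    budget = max_tokens - reserved_for_output
    if budget <= 0:
        raise ValueError("reserved_for_output must be smaller than max_tokens")
    tokens = text.split()  # crude stand-in for a real tokenizer
    if len(tokens) <= budget:
        return text  # already fits, so return it unchanged
    return " ".join(tokens[-budget:])


# A synthetic 3,000-word input, longer than the budget of 2,048 - 256 = 1,792.
long_text = " ".join(str(i) for i in range(3000))
trimmed = truncate_prompt(long_text)
print(len(trimmed.split()))  # 1792
```

Keeping the tail rather than the head suits conversations, where recent turns matter most. A summarization task might instead split the text into chunks and process each one separately.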

Risks

- Mimicry. Language models such as GPT-3 are becoming increasingly accurate, and machine-generated content might become difficult to distinguish from human-generated content. This could pose copyright and plagiarism issues.

- Accuracy. Despite its proficiency in imitating the format of human-generated text, GPT-3 struggles with factual accuracy in many applications.

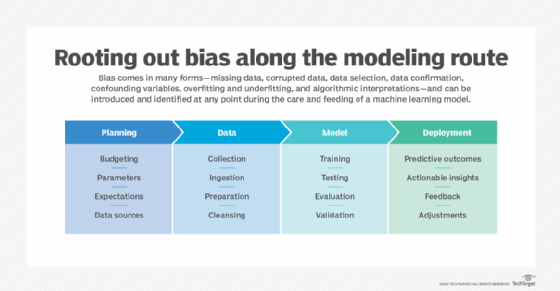

- Bias. Language models are prone to machine learning bias. Since the model was trained on internet text, it has the potential to learn and exhibit many of the biases that humans exhibit online. For example, two researchers at the Middlebury Institute of International Studies at Monterey found that GPT-2 -- GPT-3's predecessor -- is adept at generating radical text, including discourses that imitate conspiracy theorists and white supremacists. This presents the opportunity to amplify and automate hate speech as well as inadvertently generate it. ChatGPT, which is powered by a variant of GPT-3, aims to reduce the likelihood of this happening through more intensive training and user feedback.

GPT-3 models

OpenAI, the original developer of GPT-3, has several GPT-3 models. The algorithms of each GPT-3 AI model were developed using different training data and are designed for specific tasks. The most important include the following:

- Text-ada-001. This is the fastest model in the GPT-3 series and is best for simple tasks requiring quick responses such as keyword extraction, text mining and basic text generation.

- Text-babbage-001. This model offers moderate performance that's ideal for simple tasks such as basic question answering and straightforward data analysis.

- Text-curie-001. An intermediate model that balances speed and quality, it's most suitable for interactive bots and chatbots, language translation and basic content generation.

- Text-davinci-003. This is the most capable model in the series and is used for professional writing, conversational AI and sentiment analysis.

Industries using GPT-3

GPT-3 is used by a range of industries such as the following:

- Healthcare, for the analysis of medical literature and assistance in diagnostics.

- E-commerce and retail, including generating product recommendations and descriptions.

- Finance, for customer support and generation of financial reports.

- Marketing, for search engine optimization assistance and analyzing market trends.

The history of GPT-3

Formed in 2015 as a nonprofit, OpenAI developed GPT-3 as one of its research projects. It aimed to tackle the large goals of promoting and developing "friendly AI" in a way that benefits humanity as a whole.

The first version of GPT was released in 2018 and contained 117 million parameters. The second version of the model, GPT-2, was released in 2019 with around 1.5 billion parameters. GPT-3 jumped over GPT-2 by a huge margin with more than 175 billion parameters -- more than 100 times its predecessor and 10 times more than comparable programs.
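The size comparisons above can be checked with simple division. The sketch below uses the parameter counts quoted in this article and assumes that "comparable programs" refers to Microsoft's 17 billion-parameter Turing NLG model mentioned earlier.

```python
# Parameter counts as quoted in this article.
PARAMETERS = {
    "GPT-1": 117e6,
    "GPT-2": 1.5e9,
    "Turing NLG": 17e9,
    "GPT-3": 175e9,
}


def ratio_to_gpt3(model_name: str) -> float:
    """How many times larger GPT-3 is than the named model."""
    return PARAMETERS["GPT-3"] / PARAMETERS[model_name]


for name in ("GPT-1", "GPT-2", "Turing NLG"):
    print(f"GPT-3 is about {ratio_to_gpt3(name):.1f} times the size of {name}")
```

The results are roughly 117 times GPT-2 and roughly 10 times Turing NLG, which matches the "more than 100 times" and "10 times" figures.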

Earlier pre-trained models, such as BERT, demonstrated the viability of the text generator method and showed the power that neural networks have to generate long strings of text that previously seemed unachievable.

OpenAI released access to GPT-3 incrementally to see how it would be used and to avoid potential problems. The model was released during a beta period that required users to apply to use the model, initially at no cost. However, the beta period ended in October 2020, and the company released a pricing model based on a tiered credit-based system that ranges from a free access level for 100,000 credits or three months of access to hundreds of dollars per month for larger-scale access. Microsoft, which invested $1 billion in OpenAI in 2019, became the exclusive licensee of the GPT-3 model in 2020. This means that Microsoft has sole access to GPT-3's underlying model.

ChatGPT launched in November 2022 and was free for public use during its research phase. This brought GPT-3 more mainstream attention than it previously had, giving many nontechnical users an opportunity to try the technology. GPT-4 was released in March of 2023 and is estimated to have 1.76 trillion parameters. OpenAI hasn't publicly stated the exact number of parameters in GPT-4, however.

The future of GPT-3

There are many open source efforts in play to provide a free and non-licensed model as a counterweight to Microsoft's exclusive licensee status for GPT-3. New language models are published frequently on Hugging Face's platform.

It's unclear exactly how GPT-3 will develop in the future, but it's likely it will continue to find real-world uses and be embedded in various generative AI applications. Many applications already use GPT-3, including Apple's Siri virtual assistant. Where possible, GPT-4 is being integrated where GPT-3 has been in use.