
Will AI replace cybersecurity jobs?

Although AI can enhance cybersecurity practices like threat detection and vulnerability management, the technology's limitations ensure a continued need for human security pros.

As human dependence on technology increases, securing interconnected digital systems has become critical. Despite the growing need for cybersecurity professionals, the field's skills shortage remains a major problem for decision-makers worldwide. Can AI fill the gap?

Today, IT systems process massive volumes of digital data, including personally identifiable information, healthcare records and financial details. Consequently, threat actors have intensified their efforts to exploit these systems and access that sensitive information.

To secure their IT systems, organizations worldwide are increasing their security expenditures. A significant portion of this expense is directed toward hiring experts to manage systems and oversee the implementation of security policies and access controls.

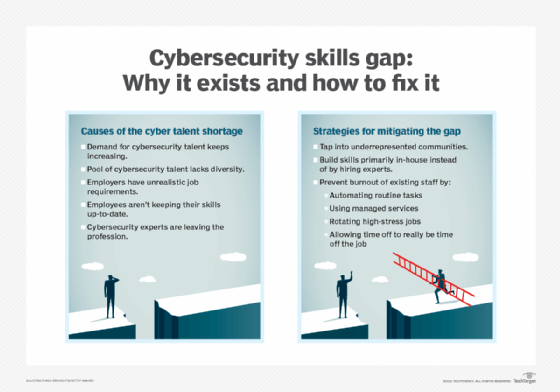

But there are not enough qualified experts to fill existing cybersecurity job openings. While digital transformation is accelerating, the number of cybersecurity professionals is not increasing at the same rate. According to research from Cybersecurity Ventures, there were 3.5 million unfilled cybersecurity positions in 2023, with 750,000 of those in the U.S. -- a gap predicted to persist through at least 2025.

The rise of AI, particularly generative AI tools like OpenAI's ChatGPT, offers new ways for organizations to overcome the cybersecurity skills gap by using AI and machine learning (ML) to reinforce cybersecurity defenses. However, the vital question remains: Can AI replace human experts entirely?

AI's limitations in cybersecurity

While AI and ML can streamline many cybersecurity processes, organizations cannot remove the human element from their cyberdefense strategies. Despite their capabilities, these technologies have limitations that often require human insight and intervention, including a lack of contextual understanding and susceptibility to inaccurate results, adversarial attacks and bias.

Because of these limitations, organizations should view AI as an enhancement, not a replacement, for human cybersecurity expertise. AI can augment human capabilities, particularly when dealing with large volumes of threat data, but it cannot fully replicate the contextual understanding and critical thinking that human experts bring to cybersecurity.

Lack of contextual understanding

AI tools can analyze vast volumes of data but lack the human ability to understand the psychological elements of cyberdefense, such as hackers' motivations and tactics. This understanding is vital in predicting and responding to advanced persistent threats and other sophisticated attacks, such as ransomware. Human intervention is crucial when dealing with complex zero-day threats that require deep contextual understanding.

Inaccurate results

AI tools can produce incorrect results -- both false positives and false negatives. False positives can lead to wasted resources, while false negatives can leave organizations vulnerable to threats. Consequently, human analysts must review AI-generated alerts to avoid costly, unnecessary investigations and must watch for critical threats that the tools fail to flag.
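
To make the trade-off concrete, the following is a minimal sketch of how a team might quantify both error types after analysts have reviewed a batch of alerts. The `AlertOutcomes` class and the weekly counts are hypothetical and chosen only for illustration; they are not measurements from any real product.

```python
from dataclasses import dataclass


@dataclass
class AlertOutcomes:
    """Counts from a hypothetical analyst review of AI-generated alerts."""
    true_positives: int   # alerts that turned out to be real threats
    false_positives: int  # alerts raised on benign activity
    false_negatives: int  # real threats the tool did not alert on


def precision(o: AlertOutcomes) -> float:
    """Share of raised alerts that were real threats."""
    raised = o.true_positives + o.false_positives
    return o.true_positives / raised if raised else 0.0


def recall(o: AlertOutcomes) -> float:
    """Share of real threats that the tool alerted on."""
    actual = o.true_positives + o.false_negatives
    return o.true_positives / actual if actual else 0.0


# Illustrative numbers only.
week = AlertOutcomes(true_positives=40, false_positives=160, false_negatives=10)
print(f"precision={precision(week):.2f} recall={recall(week):.2f}")
# prints "precision=0.20 recall=0.80"
```

In this made-up week, four out of five alerts were false positives (low precision), while one in five real threats went undetected (imperfect recall). Tracking both numbers helps show whether tuning the tool is trading one kind of error for the other.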

Adversarial attacks

As enterprise AI deployments become more widespread, adversarial machine learning -- attacks against AI and ML models -- is expected to increase. For instance, threat actors might poison a model used to power an AI malware scanner, causing it to misclassify malicious files or code as benign. Human intervention is essential to identify and respond to these manipulations and ensure the integrity of AI-powered systems.

AI bias

AI systems can be biased if they are trained on biased or nonrepresentative data, leading to inaccurate results or biased decisions that can significantly affect an organization's cybersecurity posture. Human oversight is necessary to mitigate such biases and ensure cybersecurity defenses function as intended.

How AI can mitigate the cybersecurity skills shortage

As cyberthreats become more sophisticated, security tools need to advance accordingly to stay one step ahead. While human security professionals are essential to any cybersecurity program, AI and ML can aid security modernization efforts in several ways.

Automated threat intelligence detection

AI can automate threat detection and analysis by scanning massive volumes of data in real time. AI-powered threat detection tools can help identify and respond to cyberthreats quickly, including emerging threats and zero-day attacks, often before they breach an organization's network.

AI tools can also combat insider threats, a significant concern for modern organizations. After learning users' normal behavior patterns within the organization's IT environment, AI tools can flag suspicious activity when a particular user deviates from these patterns.
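
The baseline-and-deviation idea can be sketched with a simple statistical check. This is a deliberately simplified stand-in for what commercial behavior analytics tools do, not a description of any specific product; the function name `is_anomalous`, the three-standard-deviation threshold and the download volumes are all assumptions made for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` if it lies more than `threshold` sample standard
    deviations away from the mean of the user's past activity."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return observed != mu  # any change from a perfectly flat baseline
    return abs(observed - mu) / sigma > threshold


# Hypothetical daily download volumes (in MB) for one user.
baseline_mb = [120, 135, 110, 128, 140, 125, 132]
print(is_anomalous(baseline_mb, 130))   # typical day -> False
print(is_anomalous(baseline_mb, 5000))  # sudden bulk download -> True
```

A flag like this is only a starting point for investigation. A human analyst still needs to decide whether the spike reflects data theft or a legitimate task, such as a one-time backup.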

When it comes to spam protection, AI-powered tools offer significant advantages over traditional methods. Thanks to natural language processing capabilities, AI-powered tools can process a broader range of data types, including unstructured data such as social media posts, text and audio files, in addition to emails and business documents. This capability improves the overall effectiveness of threat detection.

Vulnerability management

Vulnerability management is critical to any cybersecurity defense strategy. Most cyberattacks exploit vulnerabilities in software applications, computer networks and operating systems to gain entry into the target IT environment. Robust vulnerability management can mitigate many types of cyberthreats.

A typical vulnerability management process includes the following steps:

- Identification.

- Categorization.

- Remediation.

- Ongoing monitoring.

But traditional vulnerability management tools have numerous drawbacks, such as reliance on manual efforts, low speed and lack of contextual analysis. AI capabilities can mitigate these disadvantages in the following ways:

- Automating repetitive tasks such as scanning, assessment and remediation.

- Prioritizing vulnerabilities based on severity and business impact.

- Providing continuous, real-time monitoring to detect emerging threats and zero-day attacks, significantly reducing the time an organization remains exposed.

- Identifying future vulnerabilities more accurately through continuous learning.

- Automatically recommending patches and mitigations.
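
As an illustration of prioritizing by severity and business impact, the sketch below ranks findings with a fixed scoring heuristic. It is not a machine learning model, and the `Finding` fields, the 1-to-5 criticality scale, the exposure weight and the placeholder identifiers are all assumptions; an AI-assisted tool would typically learn or refine such weights from data rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    identifier: str         # placeholder ID, not a real CVE
    severity: float         # 0.0 to 10.0, e.g., a CVSS base score
    asset_criticality: int  # 1 (low) to 5 (business-critical), an assumed scale
    internet_facing: bool


def risk_score(f: Finding) -> float:
    """Combine technical severity with business impact; weights are assumptions."""
    exposure = 1.5 if f.internet_facing else 1.0
    return f.severity * f.asset_criticality * exposure


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)


findings = [
    Finding("VULN-A", severity=9.8, asset_criticality=1, internet_facing=False),
    Finding("VULN-B", severity=6.5, asset_criticality=5, internet_facing=True),
    Finding("VULN-C", severity=7.2, asset_criticality=3, internet_facing=False),
]
for f in prioritize(findings):
    print(f.identifier, round(risk_score(f), 2))
```

With these made-up inputs, the medium-severity flaw on a business-critical, internet-facing system (`VULN-B`) ranks above the critical-severity flaw on a low-value internal asset (`VULN-A`), which is the kind of context a severity score alone does not capture.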

Digital forensics and incident response

AI-powered tools can enhance various aspects of digital forensics and incident response.

For example, after a cybersecurity incident, machine learning algorithms can analyze vast volumes of unstructured data, such as server and network logs, to identify the source and scope of the attack. These tools excel at responding quickly to contain the incident and prevent further damage to other IT assets.
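
Before any model is applied, log lines usually have to be turned into structured signals. The sketch below shows that preprocessing step with plain pattern matching rather than machine learning: it counts failed logins per source IP address. The log format is an assumption loosely modeled on SSH authentication logs, and the sample lines use reserved documentation IP addresses.

```python
import re
from collections import Counter

# Assumed line format, loosely modeled on SSH authentication logs.
FAILED_LOGIN = re.compile(
    r"Failed password for (?:invalid user )?(\S+) from (\d{1,3}(?:\.\d{1,3}){3})"
)


def failed_logins_by_source(lines):
    """Count failed login attempts per source IP address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


sample_log = [
    "Jan 10 03:12:01 host sshd[811]: Failed password for root from 203.0.113.7 port 50122 ssh2",
    "Jan 10 03:12:03 host sshd[811]: Failed password for invalid user admin from 203.0.113.7 port 50124 ssh2",
    "Jan 10 03:15:40 host sshd[902]: Accepted password for alice from 198.51.100.4 port 40022 ssh2",
    "Jan 10 03:16:12 host sshd[915]: Failed password for bob from 192.0.2.55 port 41877 ssh2",
]
print(failed_logins_by_source(sample_log).most_common())
# prints "[('203.0.113.7', 2), ('192.0.2.55', 1)]"
```

Per-source counts like these could then feed a model or an analyst's triage queue to help narrow down where an attack originated.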

AI tools can also significantly improve the remediation process after cybersecurity incidents. A major part of the incident lifecycle involves restoring systems to normal operations after discovering the breach. IT admins use a plethora of tools and scripts to meet the following needs:

- Analyzing malware artifacts.

- Removing malicious files.

- Disabling compromised user accounts.

- Disconnecting compromised endpoint devices from the network.

- Collecting forensic evidence from affected systems, such as log files and memory dumps.

- Applying patches to fix security vulnerabilities.

Generative AI tools such as ChatGPT and GitHub Copilot can help create scripts in many programming languages to automate these repetitive tasks, streamlining the incident response process.
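
As an example of the kind of script such a tool might help draft, the sketch below copies log files into an evidence folder and records a SHA-256 hash for each copy. The function names, the `*.log` pattern and the folder layout are assumptions for illustration, and any generated script should be reviewed and tested by a human before it touches production systems.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def collect_evidence(source_dir: Path, evidence_dir: Path,
                     pattern: str = "*.log") -> dict[str, str]:
    """Copy matching files into evidence_dir and return {filename: sha256}."""
    evidence_dir.mkdir(parents=True, exist_ok=True)
    manifest = {}
    for src in sorted(source_dir.glob(pattern)):
        if src.is_file():
            dest = evidence_dir / src.name
            shutil.copy2(src, dest)  # copy2 also tries to preserve timestamps
            manifest[src.name] = sha256_of(dest)
    return manifest
```

A responder could call `collect_evidence(Path("/var/log"), Path("/mnt/evidence/host01"))`, where both paths are hypothetical, and store the returned manifest alongside the copies so that later tampering with the files can be detected.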

Security orchestration

AI-powered security tools can automate numerous tasks related to security configuration and management, including the following:

- Generating firewall rules based on an analysis of normal user behavior and interactions across the internal network.

- Updating systems and applications, and reverting changes if any problems appear.

- Analyzing historical network data to identify optimal intrusion detection and prevention system configurations.

- Scanning cloud configurations to identify and fix any misconfigurations that malicious actors could exploit.
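
The last item, scanning for misconfigurations, can be sketched as a set of checks over a resource inventory. The dictionary schema, resource types and rules below are hypothetical and do not correspond to any cloud provider's API; a real tool would read configurations through the provider's own interfaces and apply a far larger rule set.

```python
def find_misconfigurations(resources):
    """Return (resource name, issue) pairs for a few illustrative checks."""
    issues = []
    for res in resources:
        name = res.get("name", "<unnamed>")
        if res.get("type") == "storage_bucket":
            if res.get("public_access", False):
                issues.append((name, "bucket allows public access"))
            if not res.get("encryption_at_rest", False):
                issues.append((name, "encryption at rest is disabled"))
        elif res.get("type") == "firewall_rule":
            # Ports 22 (SSH) and 3389 (RDP) are common remote administration ports.
            if res.get("source") == "0.0.0.0/0" and res.get("port") in (22, 3389):
                issues.append((name, f"admin port {res['port']} open to the internet"))
    return issues


# Hypothetical inventory in an assumed schema.
inventory = [
    {"name": "backups", "type": "storage_bucket",
     "public_access": True, "encryption_at_rest": True},
    {"name": "allow-ssh", "type": "firewall_rule", "source": "0.0.0.0/0", "port": 22},
    {"name": "allow-web", "type": "firewall_rule", "source": "0.0.0.0/0", "port": 443},
]
for name, issue in find_misconfigurations(inventory):
    print(f"{name}: {issue}")
```

Here the scan reports the publicly accessible bucket and the SSH rule open to the internet while leaving the ordinary HTTPS rule alone. Whether to fix a finding automatically or route it to a human for approval remains a policy decision.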

Nihad A. Hassan is an independent cybersecurity consultant, expert in digital forensics and cyber open source intelligence, blogger and book author. Hassan has been actively researching various areas of information security for more than 15 years and has developed numerous cybersecurity education courses and technical guides.