Amazon chatbots to employ neural networks for CX

Amazon tests neural network bots to help site customers and human agents more quickly identify answers to refund and order-cancellation questions.

Amazon is testing two variations of neural-network chatbots for its customer service, specifically to handle refunds and order cancellations. One chatbot helps customers serve themselves; the other assists customer-service agents by suggesting answers to customer questions.

The tests are a part of "phasing in" these next-generation bots for Amazon customer service, wrote Jared Kramer, applied-science manager in Amazon.com's Customer Service Technical Management organization, in a blog post describing the progress.

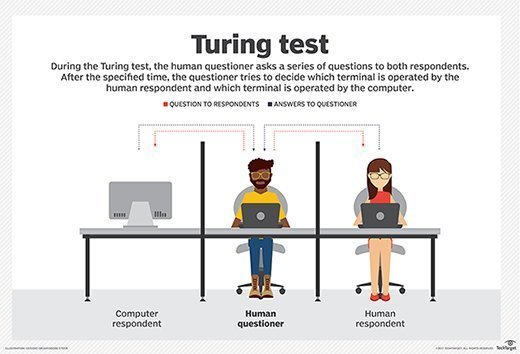

Neural network bots, such as the recently released Google Meena, have the potential to strike up a conversation and provide more accurate answers than current, more rudimentary rules-based bots. Rules-based bots, such as Salesforce Einstein Bot and Amazon Lex, take a user's predefined business processes and language and offer scripted answers to the questions they can recognize, typically basic tasks such as password resets or balance inquiries. For questions they can't recognize, these bots fall back to returning documents from a knowledge base or a web search.
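To make the contrast concrete, here is a minimal sketch of the rules-based pattern described above, assuming hypothetical regex rules and a toy word-overlap search over a hypothetical knowledge base. It is not how Salesforce Einstein Bot or Amazon Lex are actually implemented; it only illustrates the two behaviors the paragraph names: scripted answers for recognized questions and a document fallback for everything else.

```python
import re

# Hypothetical scripted rules: each regex maps a recognized question to a canned answer.
RULES = [
    (re.compile(r"\b(reset|forgot)\b.*\bpassword\b", re.I),
     "To reset your password, open Settings > Security and choose 'Reset password'."),
    (re.compile(r"\bbalance\b", re.I),
     "Your current balance is shown on the Account Summary page."),
]

# Hypothetical knowledge base used only when no rule matches.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are issued to the original payment method after the item is received.",
    "cancel-order": "Orders can be cancelled from Your Orders until they ship.",
}


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def search_knowledge_base(question):
    """Return knowledge-base document ids ranked by word overlap with the question."""
    words = tokenize(question)
    scored = []
    for doc_id, body in KNOWLEDGE_BASE.items():
        overlap = len(words & (tokenize(doc_id) | tokenize(body)))
        if overlap:
            scored.append((overlap, doc_id))
    scored.sort(reverse=True)  # highest overlap first
    return [doc_id for _, doc_id in scored]


def answer(question):
    """Return a scripted reply if a rule matches, else point to documents or an agent."""
    for pattern, reply in RULES:
        if pattern.search(question):
            return reply
    docs = search_knowledge_base(question)
    if docs:
        return "I'm not sure, but these articles may help: " + ", ".join(docs)
    return "Sorry, I didn't understand. Let me connect you with an agent."


if __name__ == "__main__":
    print(answer("I forgot my password"))
    print(answer("How do I cancel my order?"))
```

A question that matches no rule, such as "How do I cancel my order?", is pointed to a document rather than answered directly, which is the limitation a neural model that generates its own responses aims to remove.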

The Amazon chatbot, in theory, can take into account more of the words a customer or agent uses in a query and return more human-like answers as it ranks possible responses. Because it is trained on more data, it can generate its own dialogue instead of offering stock, programmed answers, and it can recognize more complex linguistic patterns than rules-based bots can.

"It is difficult to determine what types of conversational models other customer service systems are running, but we are unaware of any announced deployments of end-to-end, neural-network-based dialogue models like ours," Kramer said. "And we are working continually to expand the breadth and complexity of the conversations our models can engage in, to make customer service queries as efficient as possible for our customers."

Templates key to faster deployment

Chatbot vendors generally ease deployment by providing templates for common uses such as customer service, so users who buy a particular bot aren't training it from scratch, said Forrester Research analyst Vasupradha Srinivasan. Customizing a template to a particular company's vocabulary and processes is simpler when a basic, industry-specific set of terms is already in place.

Adding a neural network to the mix might not look like a huge technological leap to end users, Srinivasan said, but it can make conversations more efficient.

"If you use chatbots today, you realize that what they really do is sort information from some frequently asked questions document and you see the copy pasted back. It gives you a link if you have more questions," Srinivasan said. "That's not conversational at all. People don't talk like that; it's not a response to the question you asked. It's just guiding you to where the response is available. Neural networks allow chatbots to generate words based on the learning it applies as it talks to the user."

On the implementation side, templates paired with neural networks can make it much simpler for users to spin off variants for other customer service tasks; in Amazon's case, that would mean tasks beyond order cancellations and returns.

Spinning off a well-trained chatbot to handle other customer service tasks is difficult for many companies because their initial strategy fails to accommodate complex end-user intents and actions, Srinivasan wrote last December in the report "How To Scale Your Chatbot." Most chatbot deployments suffer from a narrow focus on call deflection, which keeps them from exploring broader use cases.

In his blog post, Kramer did not discuss any plans Amazon may have to eventually offer the chatbots as an AWS service, as it has done with other Amazon-built customer experience tools such as Amazon Personalize, its product recommendation engine. That would make sense, said Gartner analyst Brian Manusama, but he hasn't seen any indication that Amazon plans to do so.