IT's budgetary nightmare: Tech buyers face AI pricing variance

Did HubSpot just fix AI pricing?

Ah, booking summer vacations -- a glorious late-winter rite for New Englanders. Here, bleak weather drags on far longer than human souls should bear. The act feeds our faith in sun-drenched times surely to come when our rental reservations come into full bloom.

What does all that Frostian sentiment have to do with enterprise AI pricing? I'll tell you: If Vrbo and Airbnb can set up their sites to give us all-inclusive pricing, then SaaS vendors can do it with AI, too.

Booking business and personal travel has become much less of a moving cost target since the U.S. Federal Trade Commission (FTC) pushed hotel and vacation rental sites to make pricing more transparent -- a rare bipartisan initiative proposed by the Biden FTC and put into effect by Trump's.

Thanks to this CX regulation, which also bans "junk fees," it's so much easier to compare and contrast different properties with all-in pricing listed on index pages -- and make a confident booking decision in 500 fewer clicks this year, no joke. Prince Edward Island, here we come!

I'm not saying that the FTC should impose similar pricing transparency regulations on SaaS vendors. However, I would bet that, in their heart of hearts, more than a few CIOs wouldn't object if they did.

AI isn't junk

To be clear, I am also not, in any way, comparing AI consumption pricing to junk fees that some bad actors in the hospitality industry attempt to sneak past the FTC's goalies. Large language model (LLM) vendors provide a technology that can potentially drive business efficiency and eliminate mind-numbing busywork for humans.

Furthermore, reserving a vacation rental is a one-off consumer transaction with a credit card; enterprise IT deals are vastly more complicated.

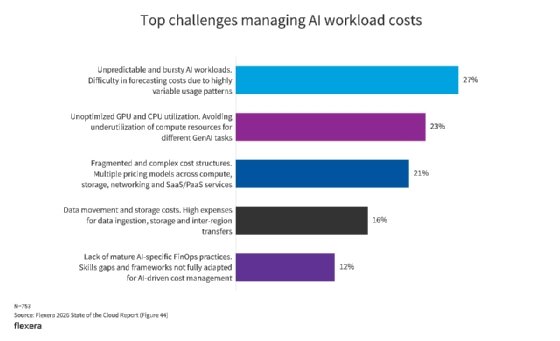

But variable consumption pricing for AI introduces uncertainty into IT budgets, just like the obfuscation of resort fees, service fees, taxes, cleaning fees and all the other fees does for consumers booking a stay. Tech buyers don't want it, said Alan Pelz-Sharpe, founder of Deep Analysis, a technology research and advisory firm.

He made the case for all-in, full-year pricing.

"Let's put it into hard terms: IT budgets grow around 5% to 6% a year -- Microsoft pricing [alone] goes up by more than that," Pelz-Sharpe said. "So IT budgets are pretty set. You introduce massive variability into your quarterly billing, and a lot of IT departments are simply going to say no. It's as simple as that. They're just not going to take it."

Yet the idea of AI consumption pricing prevails. Salesforce and Zendesk are each working on complex outcomes-based pricing plans that, at the moment, appear to be works in progress.

SaaS companies didn't create this issue, but it's definitely their problem to resolve. AI is a pass-through cost from the LLM purveyors and, worse yet, it's eating up seat licenses. Managing external costs and shrinking seat revenue while keeping customer bills level seems an almost impossible equation to solve.

Marketing and customer experience platform HubSpot, which is accountable to a budget-strapped customer base of small- and medium-sized businesses, might be on to something with its latest pricing structure.

HubSpot's new model

Starting April 14, HubSpot changed its AI pricing for two of its agents. The Breeze Customer Agent will cost $0.50 per resolved conversation, and the Breeze Prospecting Agent will cost $1 per lead recommended for outreach. Use of the rest of HubSpot's agents on its platform is included in the subscription cost.

That free-with-subscription model also extends to many agents on the fledgling Breeze agent marketplace because, so far, most have been made by HubSpot itself.

This hybrid consumption and all-in pricing is HubSpot's way of matching the price of the agents to the value they bring, in addition to respecting what customers told the company they wanted. It hits a balance between P&L considerations and the variability of LLM consumption, said Jon Dick, chief customer officer at HubSpot.

HubSpot also gives CIOs and procurement teams control mechanisms, such as consumption caps, to prevent surprise AI bills and make costs more predictable.
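To make the budgeting math concrete, here is a minimal sketch of how a buyer might estimate a month's bill under this hybrid model. The two per-unit prices come from the figures above. Everything else is an assumption for illustration: the usage numbers are invented, and the cap is modeled as a simple spending ceiling, which might not match how HubSpot's actual consumption caps behave.

```python
from dataclasses import dataclass

# Per-unit prices quoted in the article.
PRICE_PER_RESOLVED_CONVERSATION = 0.50  # Breeze Customer Agent
PRICE_PER_RECOMMENDED_LEAD = 1.00       # Breeze Prospecting Agent


@dataclass
class MonthlyUsage:
    """Hypothetical usage counts for one month (illustrative only)."""
    resolved_conversations: int
    recommended_leads: int


def uncapped_cost(usage: MonthlyUsage) -> float:
    """Consumption charges for the two metered agents, with no cap applied."""
    return (
        usage.resolved_conversations * PRICE_PER_RESOLVED_CONVERSATION
        + usage.recommended_leads * PRICE_PER_RECOMMENDED_LEAD
    )


def capped_cost(usage: MonthlyUsage, monthly_cap: float) -> float:
    """Assumed cap behavior: charges stop accruing once the ceiling is hit."""
    return min(uncapped_cost(usage), monthly_cap)


# Invented usage figures: a quiet month and a busy month.
quiet = MonthlyUsage(resolved_conversations=1200, recommended_leads=300)
busy = MonthlyUsage(resolved_conversations=4000, recommended_leads=900)
cap = 1500.00  # hypothetical monthly ceiling set by procurement

for label, usage in (("quiet", quiet), ("busy", busy)):
    print(f"{label}: uncapped ${uncapped_cost(usage):,.2f}, "
          f"capped ${capped_cost(usage, cap):,.2f}")
```

In this made-up scenario, the uncapped bill more than triples between the quiet and busy months, while the capped bill tops out at the ceiling. The trade-off, under the assumption used here, is that metered agent work beyond the cap would not be billed and presumably would not run.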

Agentic AI pricing is iterative, said Dick, who acknowledged that HubSpot's plan is also a work in progress.

"I think it's very difficult to predict anything with AI," Dick said. "Our principles are that we believe that AI agents are an incredibly powerful tool for our segment of the market, and we want to price to value -- and do it in a way that is as clear, understandable and predictable as possible.

"We're learning a lot, and learning fast. If we hear, loud and clear, from our customer base that they would prefer all-in pricing, I think we would listen to [them] and figure out if it was feasible."

Economics of AI in flux

In the end, the OpenAIs and Anthropics of the world must bend their pricing into something their customers -- and, in the case of SaaS vendors, their customers' customers -- can economically justify.

Enterprise technology buyers can be magpies, it's true, and AI is an awfully shiny object. But they can also be cruel if the numbers don't add up. They hold the purse strings. They get to decide whether or not they're playing a long game with AI.

The bigger economic picture might force their hands.

Companies that have embedded AI in their operations might have bought in thinking that AI would soon be commoditized and that pricing would come down, just as it did with the cloud. While that moment might also come for AI, a war economy -- and the spiking energy costs it has wrought -- could pitch us into a global recession that puts costly AI projects in jeopardy unless they're already returning the savings their architects projected. There might not be the luxury of time for tweaking and A/B testing.

And that AI commoditization moment could be years off. Someone's got to be on the hook for all those AI data centers that Meta, Google and Oracle plan to build based on future business projections. Someone has to drive the investment in Anthropic, OpenAI, Cohere and xAI stock IPOs predicted for 2026. Customers will play at least some part in bankrolling this. In a recession, there will be fewer of them to go around. Something's got to give.

Thinking about how these market forces affect SaaS pricing brings to mind an exchange on the 2023 BoxWorks user conference keynote stage, back in the early days of generative AI. Knowing he might not get the answer he wanted but enjoying putting OpenAI CEO Sam Altman on the spot, Box CEO Aaron Levie asked Altman to please work on bringing down the consumption pricing for OpenAI's LLM.

"Everybody can expect lower AI prices over time," Altman said. But not as much as one might think, he added: The better LLMs get, the more compute power they'll require.

"If you can just make [ChatGPT] 100 times cheaper, we won't ask for more than that," Levie said wryly.

Back then, the two CEOs probably couldn't imagine the economic squeeze brought about by the geopolitical tumult of 2026.

But we all do now.

For customers to keep their AI projects going, pricing will need to reflect this evolving economic reality. That likely calls for stable, predictable and transparent costs. Just like Airbnb and Vrbo did, AI companies -- and the SaaS vendors who function as their de facto channels -- need to figure it out.

Don Fluckinger is a senior news writer for Informa TechTarget. He covers customer experience, digital experience management and end-user computing.