iPhone

What is an iPhone?

The iPhone is a smartphone made by Apple that combines a computer, iPod, digital camera and cellular phone into one device with a touchscreen interface. The iPhone runs the iOS operating system, and in 2021 when the iPhone 13 was introduced, it offered up to 1 TB of storage and a 12-megapixel camera.

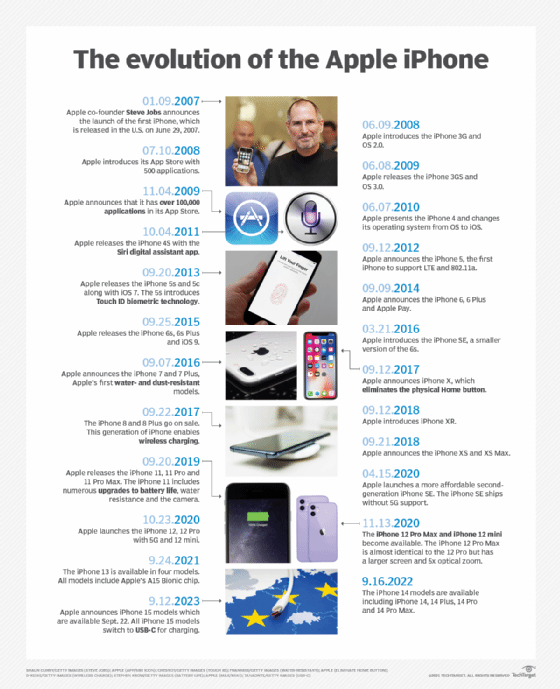

The original iPhone was announced on Jan. 9, 2007, at the Macworld convention by Apple co-founder Steve Jobs. While it was not considered the first smartphone, the iPhone has helped drive the global shift to mobile computing among both consumers and businesses. Its primary rival has been Google Android-based devices from companies such as Samsung, also introduced in 2007.

The first-generation iPhone came preloaded with a suite of Apple software, including iTunes, the Safari web browser and iPhoto. Internet Message Access Protocol and Post Office Protocol 3 email services were integrated with the device.

Apple released the iPhone under an exclusive two-year partnership with AT&T Wireless, but it took less than three months for hackers to unlock the device for use on any Global System for Mobile communication network.

IPhone models

IPhone 3G, 3GS. Apple released the second-generation iPhone and iPhone operating system (OS) 2.0 on June 9, 2008. The new device was called the iPhone 3G, a nod to its new ability to connect to third-generation (3G) cellular networks powered by technologies such as Universal Mobile Telecommunications System and high-speed downlink packet access (HSDPA). The iPhone 3G was available in 8 GB and 16 GB models.

The iPhone OS 2.0 update included several features designed for business, including support for Microsoft Exchange email. Improved mobile security features included secure access to corporate networks over Cisco's IPSec virtual private network, plus remote wipe and other management capabilities.

Apple also released in 2008 a software development kit (SDK) for custom applications, a configuration utility for centralized management and, most importantly, its App Store -- a portal through which iPhone users could purchase and download additional applications to run on their devices.

The iPhone 3G exchanged the flat aluminum housing of the first-generation iPhone for a sleek, convex black or white plastic case. The switch to plastic enabled better transmission for the many radio receivers inside the device.

The iPhone 3G also featured assisted GPS, which combined triangulation using cellular towers with a GPS receiver. It did not support Flash, Java or Multimedia Messaging Service (MMS). Its built-in Bluetooth supported wireless earpieces but not stereo audio, laptop tethering or File Transfer Protocol.

Less than a year after the debut of the iPhone 3G, on June 8, 2009, Apple released the iPhone 3GS and iPhone OS 3.0. Available in 16 GB and 32 GB models, the iPhone 3GS featured several hardware improvements, including a video camera, built-in compass and faster download speeds through 7.2 megabits per second (Mbps) HSDPA support.

That new version of the OS brought support for MMS, copy-and-paste functionality and the Find My iPhone app. In addition, it offered an expanded SDK that enabled developers to build in-app purchasing features, push notifications and navigation capabilities into their third-party apps.

IPhone 4 and 4S. Apple released the iPhone 4 on June 7, 2010. With this model, Apple also changed the name of its OS from iPhone OS to iOS. The name change was made in the aftermath of the April 3, 2010, release of the iPad, which ran the same OS.

Hardware innovations in the iPhone 4 included Apple's Retina Display, which boasted a higher pixel density than previous iPhone screens and a front-facing camera. For the first time, Apple also produced versions with code division multiple access connectivity, which enabled the device to connect to a wider variety of cellular carriers' networks.

IOS 4 introduced FaceTime, an app that enabled video calling between Apple devices over Wi-Fi. It also brought limited multitasking capabilities to the iPhone, letting users make phone calls or listen to music in one app, while having a different app open on the screen.

On Oct. 4, 2011, Apple released the iPhone 4S, which featured the debut of Siri, a voice-powered digital assistant app. The device ran on Apple's new A5 processors and shipped with iOS 5, which featured the debut of Apple's cloud service, iCloud, and its proprietary text and multimedia messaging technology, iMessage.

IPhone 5, 5c and 5s. The iPhone 5, which was released on Sept. 12, 2012, featured a taller screen than its predecessors, measuring 4 inches diagonally with a 16:9 widescreen aspect ratio and 1136 x 640-pixel resolution. It ran on Apple's A6 processor and included a specially designed nano-SIM card, plus a new connector that was not compatible with plugs and accessories for the iPad, iPod or previous iPhones.

The iPhone 5 was also the first iPhone to support Long-Term Evolution (LTE) networks and the 5 gigahertz 802.11ac Wi-Fi band. It was available in 16 GB, 32 GB and 64 GB storage capacity models. The iPhone 5 shipped with iOS 6, whose new features included a native Maps application and Passbook, an app that stores digital credit cards, boarding passes and more.

The iPhone 5s, released on Sept. 20, 2013, shipped with iOS 7 and was powered by a 64-bit dual-core A7 processor. Apple added another new chip called the M7 coprocessor, which handled motion data from the phone's gyroscope, compass and accelerometer.

The iPhone 5 and 5s had the same aluminum frame, chamfered edges, weight and dimensions, weighing just 3.9 ounces and measuring 4.9 x 2.3 x 0.3 inches. The iPhone 5s had an updated camera lens with F2.2 aperture. Other new camera features included slow-motion video and live video zoom capabilities.

Perhaps the biggest change in the iPhone 5s was Touch ID, which turned the phone's Home button into a biometric fingerprint scanner for authenticating access to the device and iTunes.

The iPhone 5c, released on the same day as the 5s, came in five colors: white, pink, yellow, blue and green. It was the same size as the iPhone 5s at 4.9 x 2.3 x 0.3 inches, and it weighed about 4.6 ounces. The iPhone 5c did not run the A7 processor as the iPhone 5s had, however; it ran the A6 processor from the iPhone 5. It also did not share the iPhone 5s' aluminum frame; instead, the iPhone 5c was made of hard-coated polycarbonate with a steel-reinforced interior.

IPhone 6 and 6 Plus; 6s and 6s Plus. Apple released the iPhone 6 and 6 Plus on Sept. 9, 2014. It marked the first time that one iPhone model was available in two different sizes. The iPhone 6 featured a 4.7-inch display with a 1334 x 750 resolution, and the iPhone 6 Plus had a 5.5-inch display with a 1920 x 1080 resolution. Both devices ran Apple's A8 processor and M8 motion coprocessor, and they were the first to come with Apple Pay, Apple's mobile payments service.

The iPhone 6 and 6 Plus shipped with iOS 8, which featured a revamped user interface and the new iCloud Drive, Apple's file synchronization service.

On Sept. 25, 2015, Apple released the iPhone 6s and 6s Plus with iOS 9. The new devices ran on Apple's A9 chip and M9 coprocessor. They also included 3D Touch, which enabled users to perform different functions by tapping the screen with various levels of pressure.

IPhone SE first generation. Apple released on March 21, 2016, the iPhone SE, a smaller version of the iPhone 6s. The first iPhone SE featured a 4-inch display but ran on the same A9 and M9 chips as the iPhone 6s and 6s Plus. It was available in 16 GB and 64 GB models and came in four colors: space gray, silver, gold and rose gold.

IPhone 7 and 7 Plus. The iPhone 7 and 7 Plus, announced on Sept. 7, 2016, boasted Apple's first water- and dust-resistant casing. The devices, which came in 32 GB, 128 GB and 256 GB versions, also featured two 12-megapixel cameras and Apple's new four-core A10 Fusion processor.

In a major design change, Apple eliminated the headphone jack in the iPhone 7 and 7 Plus, forcing users to either rely on wireless headphones or an adapter for the iPhone's Lightning port. The iPhone 7 and 7 Plus shipped with iOS 10, whose new features included Siri integration with third-party apps and expanded 3D Touch capabilities.

IPhone 8 and 8 Plus. Apple gave the iPhone 8 and 8 Plus a new aluminum and glass design, a six-core A11 Bionic processor and wireless charging capabilities. The devices, released on Sept. 22, 2017, also had new cameras built especially for augmented reality use cases. Both models came in two sizes: 64 GB and 256 GB. The iPhone 8 and 8 Plus shipped with iOS 11, which included ARKit, an SDK for augmented reality apps.

IPhone X, XR and XS/XS Max. Apple also announced the iPhone X in September 2017. The iPhone X was the first iPhone to eliminate the physical Home button that was present on every preceding iPhone model, giving the device a touchscreen-only interface. Like the iPhone 8 and 8 Plus, the iPhone X used the A11 Bionic chip.

The iPhone X featured an all-glass design and a 5.8-inch super Retina HD display, making it the largest iPhone in history at the time. The device also marked the debut of Face ID, a new way of authenticating user access through facial recognition technology. The iPhone X came in two storage options -- 64 GB and 256 GB.

In September 2018, Apple announced three more iPhone X models. The iPhone XR was a budget version of the flagship phone that came with a larger 6.1-inch display. But the technology in the display had a lower resolution, Liquid Retina HD display that used LCD as opposed to the light-emitting diode, or OLED, Super Retina HD displays available in iPhone X and XS/XS Max displays. The XR used an upgraded A12 Bionic chip and boasted a battery life that was better than all other X models. The XR could be purchased with either 64 GB or 128 GB storage capacity.

The XS is the successor to the iPhone X model with the upgraded A12 Bionic chip, slightly improved battery life, improved water resistance up to 2 meters for up to 30 minutes and the option to purchase the device with up to 512 GB storage. The XS Max featured the same specs as the XS -- only with a larger 6.5-inch display.

IPhone 11, 11 Pro and 11 Pro Max. Released on Sept. 20, 2019, the iPhone 11, 11 Pro and 11 Pro Max are the successors to the iPhone XR, XS and XS Max, respectively. One major advancement of the iPhone 11 series of smartphones is the inclusion of a Wi-Fi 6 wireless chip for improved speeds using the IEEE 802.11ax Wi-Fi 6 standard. Another advantage is the infusion of an upgraded 12-megapixel TrueDepth front camera.

The iPhone 11 continued the use of the slightly lower quality 6.1-inch LCD display found in the iPhone XR. However, many iPhone 11 enhancements were made over the XR, including an upgraded A13 Bionic chip, a rear camera with 2x optical zoom and ultra-wide lens, improved battery life, improved water resistance and a 256 GB storage capacity option.

The iPhone 11 Pro also makes significant strides over the iPhone XS. Perhaps the most famous is the three-lens rear-facing camera that Apple put into iPhones for the first time. Other advantages over the XS model include an upgraded A13 Bionic chip, Super Retina XDR display, ultra-wide-angle photo capabilities and up to 4x optical zoom, four additional hours of battery life and improved water resistance up to 4 meters for 30 minutes.

Finally, the iPhone 11 Pro Max is simply an iPhone 11 Pro with a 6.5-inch, as opposed to a 5.8-inch, Super Retina XDR display. Because of the larger size, the iPhone 11 Pro Max uses a larger battery that delivers up to two additional hours of video playback -- 20 total hours for the iPhone Pro Max and 18 hours for the iPhone 11 Pro -- compared to the iPhone 11 Pro.

IPhone SE second generation. Throughout the late 2010s and into 2020, Apple dominated the high-end smartphone market but began losing ground to competitors in the mid- to low-end price range. To counter this, Apple launched a second generation of iPhone SE with upgraded internals on April 15, 2020.

The second-generation SE used a 4.7-inch Retina HD display and A13 Bionic chip, and came in 64 GB, 128 GB or 256 GB storage capacity. The second-generation SE brought back the physical home button with touch ID login capability as opposed to the Face ID authentication features originally introduced in the iPhone X and all newer models up to the SE second gen phone. Thus, not only did this updated SE provide plenty of performance in a lower-cost model -- it also appealed to iPhone users who preferred to have the physical Home button as opposed to the all-touch alternative that all other iPhones evolved toward.

One caveat to note about the iPhone SE is that it shipped without 5G chipset support to connect to wireless carriers that are rolling out 5G commercially. Thus, despite this phone launching just a few months before the iPhone 12, which has 5G support, the iPhone SE is stuck with fourth-generation LTE mobile data capabilities only.

IPhone 12, 12 Pro, 12 Pro Max and 12 mini. The iPhone 12 series of smartphones comes in four different versions. All iPhone 12 models feature the A14 Bionic chip, Super Retina XDR displays, Dolby Vision HDR video recording, water resistance up to 6 meters for 30 minutes and 5G mobile data chipsets.

The base iPhone 12 model launched on Oct. 23, 2020, and came with a 6.1-inch display, a 17-hour battery, improved Ceramic Shield casing and 64 GB, 128 GB or 256 GB storage capacity options.

The iPhone 12 Pro is similar to the base iPhone 12 but with enhanced rear-facing camera hardware and features that produce superior images. The iPhone 12 Pro features a three-lens camera for wide, ultra-wide and telephoto capabilities. Apple also introduced its lidar scanner sensor capability in the 12 Pro model. LiDAR scanning technology is used when taking nighttime photos and offers improved autofocus in low light situations. Storage options for the 12 Pro models are 128 GB, 256 GB and 512 GB.

Likely due to larger-size Super Retina XDR display shortages in Apple's manufacturing supply chain, the iPhone 12 Pro Max model launched on Nov. 12, 2020, three weeks after the iPhone 12 and 12 Pro launch. The 12 Pro Max is identical to the 12 Pro with a few notable exceptions. For one, the screen size increased from 6.1 inches on the 12 Pro to 6.7 inches on the 12 Pro Max. Additionally, the 12 Pro Max has a camera lens that can optically zoom up to five times, compared to the 12 Pro's 4x optical zoom. Finally, because of the larger screen size, Apple was able to put a larger battery into the 12 Pro Max, bumping the expected battery life from 17 hours to 20.

The iPhone 12 mini offers the same features as the other iPhone 12 models but in a more compact size -- 5.4 inches vs. the 6.1-inch iPhone 12 Pro or the 6.7-inch iPhone 12 Pro Max.

IPhone 13, 13 Mini, 13 Pro and 13 Pro Max. The iPhone 13 became available Sept. 24, 2021. As was the case with its predecessor, the iPhone 13 is available in four models: the base iPhone 13, iPhone 13 mini, iPhone 13 Pro and iPhone 13 Pro Max.

All iPhone 13 models integrate Apple's proprietary A15 Bionic chip -- a six-core CPU. They also integrate a 5G chipset and support Wi-Fi 6 (802.11ax) as well as Bluetooth 5.0. Water resistance on all iPhone 13 models is rated as IP68, meaning a device can potentially last for up to 30 minutes in up to 6 meters of water before it becomes inoperable. All iPhone 13 models are available with 128 GB, 256 GB and 512 GB storage options. The iPhone 13 Pro Max is also available with the largest storage capacity that Apple provides, topping out at 1 TB.

All iPhone models have updated cameras, with new functionality that varies across the models. However, all models include support for a new cinematic mode, which automatically shifts focus as a subject steps into the field of view.

The base iPhone 13 model has a 6.1-inch display, and battery life that provides up to 19 hours of video playback. The iPhone 13 mini has a smaller 5.4-inch display and a shorter battery life of up to 17 hours.

Both the base and mini have a Super Retina XDR display and the same two-camera setup. They both have dual 12MP lenses with wide and ultra-wide capabilities. There is a 2x optical and 5x digital zoom on the dual camera system.

Stepping up from the base and mini are the iPhone 13 pro models with pro features -- once again being focused on camera hardware boosts. The iPhone 13 Pro has a 6.1-inch display while the iPhone Pro Max is about 10% bigger at 6.7 inches. The display used for both pro models is the Super Retina XDR with ProMotion, providing faster screen refresh rates than the base models.

The iPhone Pro differentiator is the use of a three-camera system -- providing telephoto, wide and ultra-wide capabilities. The pro camera system also has a larger optical zoom range than the base models -- coming in at 6x -- and a digital zoom of 15x. As was the case with the iPhone 12 series, the iPhone 13 Pro cameras also benefit from a LiDAR scanner for better low-light and night photography.

IPhone 14, 14 Plus, 14 Pro and 14 Pro Max. Apple announced its iPhone 14 lineup on Sept. 7, 2022, with the phones first available on Sept. 16. Once again, Apple has four models -- iPhone 14, 14 Plus and 14 Pro Max models. There will be no mini model in this version.

All iPhone 14 models are available with 128 GB, 256 GB, 512 GB and 1 TB storage options. With the iPhone 13, only the 13 Pro Max was available with a 1 TB option.

The iPhone 14 and 14 Plus are powered by Apple's A15 Bionic chip, while the 14 Pro and Max benefit from the faster A16 Bionic chip. Both chips have a six-core CPU, five-core GPU and a 16-core neural engine.

With the 5.4-inch mini model now gone, the iPhone 14 and 14 Max provide a 6.1-inch display. The 14 Plus and 14 Max Pro both scale the size up to 6.7 inches. All of the iPhone 14 models benefit from Apple's Super Retina XDR display. The base models have a maximum brightness of 1,200 nits while the Pro models are brighter at 2,000 nits.

The form factor for the Pro models is slightly different from the base model, with a new front design that uses less space for the camera notch at the top of the phone. With the Pro models, Apple is also providing an always-on display capability, that isn't present in the base models. The always on display is enabled by a new low power mode that is enabled on the iPhone 14 Pro models.

Camera and image processing across all iPhone 14 models have also been improved over prior models. The camera in the 14 and 14 Plus get a boost with a 12MP main camera, and the 14 Pro and 14 Pro Max benefit from a 48MP main camera. All of the iPhone 14 models benefit from Apple's new Photonic Engine, which uses the neural engine processing capabilities in the A15 and A16 chips to provide better low-light images. Apple has also added a new Action Mode for video, providing improved stabilization capabilities that work across all iPhone 14 models.

Also of note, all iPhone 14 models integrate a new accelerometer that Apple claims is better able to detect crashes and accidents. Apple is also integrating better satellite connectivity for emergency services across the iPhone 14 model lineup.

IPhone 15, 15 Plus, 15 Pro and 15 Pro Max. Apple announced its iPhone 15 lineup on Sept. 12, 2023, with the phones first available on Sept. 22. The four models follow the same path as the previous iPhone 14 lineup, with iPhone 15, 15 Plus, 15 Pro and 15 Pro Max models.

At the entry-level, the iPhone 15 has the smallest display of the lineup, coming in at 6.1 inches. The iPhone 15 Plus, iPhone 15 Pro and iPhone 15 Pro Max all have 6.7-inch displays. All the iPhone 15s have a Super Retina XDR display, with the Pro models benefiting from the addition of Apple's ProMotion technology and always-on display capabilities.

Among the most noticeable changes across the iPhone 15 phones is the switch to the USB-C interface for charging, from Apple's lightning port, which had been the default since the iPhone 5. The move to USB-C for the iPhone brings the smartphone in alignment with Apple's iPad, Mac and AirPods devices which are already using USB-C.

Another big change that has come only to the 15 Pro and 15 Pro Max is a new customizable action button, which is in the same location that the mute switch was available on all prior iPhones. By default, the action button is still just a simple ring-silencing switch, and it can be customized by users to execute any number of different actions, such as launching the camera or recording audio.

The iPhone 15 and 15 plus are available with 128 GB to 512 GB in storage capacity, with the 15 Pro and Pro Max versions providing a 1 TB option. The iPhone 15 and 15 Plus are powered by Apple's A16 Bionic chip, which first debuted on the iPhone 14 Pro phones, while the 15 Pro and 15 Pro Max benefit from the faster A17 Bionic chip. Both chips have a six-core CPU and a 16-core neural engine, while the A17 has a six-core GPU and the A16 has a five-core GPU.

Apple has also made incremental improvements to the camera and image processing across the iPhone 15 lineup, which now all have a 48MP main camera. The Pro models provide four optical zoom options (0.5, 1x, 2x and 5x), while the non-Pro models only have three (0.5, 1x and 2x).

All iPhone 15 models will ship initially with iOS 17 as the default mobile operating system.

iPhone 16, 16 Plus, 16 Pro, 16 Pro Max and 16e. Apple announced its iPhone 16 lineup on Sept. 9, 2024, and the phones were first available on Sept. 20, 2024. As was the case for the previous several iPhone updates, the initial rollout included a base model, Plus, Max and Max Pro.

In a departure from past releases, Apple issued another model of the iPhone 16 after the initial release. The iPhone 16e was announced on Feb. 19, 2025, with pre-orders on Feb. 21, 2025, and availability on Feb. 28, 2025. The new 'e' series is intended to be a more affordable version of the iPhone.

The base iPhone 16 and the iPhone 16e models have the smallest screen at 6.1 inches, which is the same as the iPhone 15. The iPhone 16 Plus has a 6.7-inch display -- again the same size as the prior year model. However, screen sizes change a little for the higher-end versions. The iPhone 16 Pro has a 6.3-inch screen, while the iPhone 16 Pro Max has a large 6.9-inch display. Once again, the displays are slightly better on the Pro version, which includes Apple's ProMotion technology. All iPhone 16s have a Super Retina XDR display.

The iPhone 16, 16e and 16 Plus have the A18 chip, which has a six-core CPU, and a 16-core neural engine. In the 16e, the A18 is configured with a 4-core GPU, while the base iPhone 16 and the iPhone 16 Plus models have a 5-core GPU configuration. The Pro models benefit from the slightly more powerful A18 Pro chip, which is the same except that it has a six-core GPU. The A18 chips help power the new Apple Intelligence features, bringing enhanced AI capabilities to the iPhone.

Across all models of the iPhone 16, except the 16e, there is also a new button called Camera Control. This button is designed to quickly activate the camera and enable zoom slider functionality. The iPhone 16, 16 Plus and 16e also get the Action button, which was previously only available on the Pro versions.

Camera and sound hardware are another area of updates for the iPhone 16. All iPhone 16 devices start with Apple's 48-megapixel camera technology. The Pro gets a 48-megapixel ultrawide telephoto, while the 16 and 16 Plus are limited to just 12 megapixels. The Pro phones also get a big boost for video with Dolby Vision support for 4K video at 120 fps.

From a networking perspective, all iPhone 16s, except the iPhone 16e, also support the 802.11be wireless standard, commonly known as Wi-Fi 7. This is an upgrade from the iPhone 15, which supported Wi-Fi 6E. The iPhone 16e only supports Wi-Fi 6.

All iPhone 16 models will ship initially with iOS 18 as the default mobile operating system.

What are the differences between iPhone and Android?

This is an often-asked question for those who are torn between choosing an Apple iPhone and virtually any other smartphone device on the market that uses the Android OS.

The first difference is that the iPhone is a smartphone device that was developed and manufactured by Apple Inc. Apple iPhones run Apple's proprietary iOS operating system. Only Apple manufactured devices are able to run this smartphone OS.

Android, on the other hand, is an Open Source mobile OS created by numerous developer groups and is commercially sponsored by Google Inc. Thus, the two major competing smartphone OSes on the market are Apple's iOS and Google's Android.

Because Apple's iOS is proprietary while Android is open source and available for use by multiple smartphone manufacturers, all competing smartphone manufacturers use some form of Android. This includes smartphone vendors such as Samsung, LG Electronics -- which announced in April that it is leaving the mobile phone market -- Motorola and Huawei.

Besides the difference in OSes, the other major difference between iPhones and Android devices largely revolves around where applications can be purchased and downloaded. Apple maintains its own proprietary App store and thoroughly vets which applications can be purchased within their virtual store. Apple also does not allow iPhone users to download apps from any third-party stores. While this limits where apps can be obtained, Apple claims it does this to better protect its customers from malware-infected apps that can sneak into third-party app stores.

Android enables users to download and purchase apps through Google's popular Play store in addition to other third-party app stores. This creates a bit more price competition among stores. Android also has less strict rules when it comes to developers selling apps through the Google Play store or third-party alternatives. Thus, there are more app options in the Android ecosystem -- about 2.5 million -- compared to Apple's App Store -- roughly 2.2 million.