What is computational linguistics? Definition and career info

Computational linguistics (CL) is the application of computer science to the analysis and comprehension of written and spoken language. As an interdisciplinary field, CL combines linguistics with computer science and artificial intelligence (AI) and is concerned with understanding language from a computational perspective. Computers that are linguistically competent help facilitate human interaction with machines and software. This is useful in many settings as it helps humans complete tasks more efficiently.

Computational linguistics is used in tools such as instant machine translation, speech recognition systems, parsers, text-to-speech synthesizers, interactive voice response systems, search engines, text editors and language instruction materials.

The term computational linguistics is also closely linked to natural language processing (NLP), and these two terms are often used interchangeably.

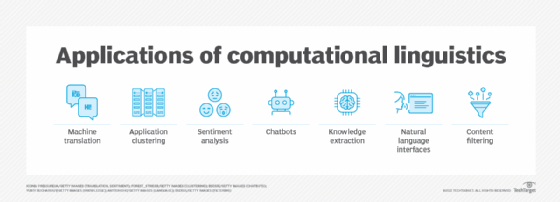

Applications of computational linguistics

Most work in computational linguistics -- which has both theoretical and applied elements -- is aimed at improving the relationship between computers and basic language. It involves building artifacts that can be used to process and produce language. Building such artifacts requires data scientists to analyze massive amounts of written and spoken language in both structured and unstructured formats.

This article is part of

What is enterprise AI? A complete guide for businesses

Applications of CL typically include the following:

- Natural language processing. NLP is a field of AI that enables computers to process and understand language in a similar way that humans do.

- Machine translation. This is the process of using AI to translate one human language to another.

- Application clustering. This is the process of turning multiple computer servers into a cluster.

- Sentiment analysis. Sentiment analysis is an important approach to NLP that identifies the emotional tone behind a body of text.

- Chatbots. These software or computer programs simulate human conversation or chatter through text or voice interactions.

- Information extraction. This is the creation of knowledge from structured and unstructured text.

- Natural language interfaces. These are computer-human interfaces where words, phrases or clauses act as user interface controls.

- Content filtering. This process blocks various language-based web content from reaching users.

- Text mining. Text mining is the process of extracting useful information from massive amounts of unstructured textual data. Tokenization, part-of-speech tagging -- named entity recognition and sentiment analysis -- are used to accomplish this process.

Approaches and methods of computational linguistics

There have been many different approaches and methods of computational linguistics since its beginning in the 1950s. Examples of some CL approaches include the following:

- The corpus-based approach, which focuses on the language as it's practically used.

- The comprehension approach, which enables the NLP engine to interpret naturally written commands in a simple rule-governed environment.

- The developmental approach, which adopts the language acquisition strategy of a child by acquiring language over time. The developmental process has a statistical approach to studying language and doesn't take grammatical structure into account.

- The structural approach, which takes a theoretical approach to the structure of a language. This approach uses large samples of a language run through computational models to gain a better understanding of the underlying language structures.

- The production approach focuses on a CL model to produce text. This has been done in a number of ways, including the construction of algorithms that produce text based on example texts from humans. This can be broken down into the following two methods:

- The text-based interactive approach uses text from a human to generate a response by an algorithm. A computer can recognize different patterns and reply based on user input and specified keywords.

- The speech-based interactive approach works similarly to the text-based approach, but user input is made through speech recognition. The user's speech input is recognized as sound waves and is interpreted as patterns by the CL system.

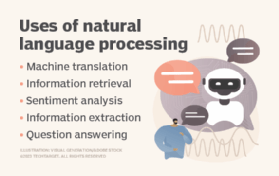

Computational linguistics vs. natural language processing

Computational linguistics and natural language processing are similar concepts, as both fields require formal training in computer science, linguistics and machine learning (ML). Both use the same tools, such as ML and AI, to accomplish their goals and many NLP tasks need an understanding or interpretation of language. Due to their similarities, some use the terms interchangeably. It is important to note, however, that they are two different concepts.

Where NLP deals with the ability of a computer program to understand human language as it's spoken and written to provide sentiment analysis, CL focuses on the computational description of languages as a system. Computational linguistics also leans more toward linguistics and answering linguistic questions with computational tools; NLP, on the other hand, involves the application of processing language.

NLP plays an important role in creating language technologies, including chatbots, speech recognition systems and virtual assistants, such as Siri and Alexa. Meanwhile, CL lends its expertise to topics such as preserving languages, analyzing historical documents and building dialogue systems, such as Google Translate.

History of computational linguistics

Although the concept of computational linguistics is often associated with AI, CL predates AI's development, according to the Association for Computational Linguistics. One of the first instances of CL came from an attempt to translate text from Russian to English in the early to mid-1950s. The thought was that computers could make systematic calculations faster and more accurately than a person, so it wouldn't take long to process a language. However, the complexities found in languages were underestimated, taking much more time and effort to develop a working program.

Two programs were developed in the early 1970s that had more complicated syntax and semantic mapping rules. SHRDLU was a primary language parser developed by computer scientist Terry Winograd at the Massachusetts Institute of Technology. It combined human linguistic models with reasoning methods. This was a major accomplishment for natural language understanding and processing research.

In 1971, NASA developed Lunar and demonstrated it at a space convention. The Lunar Sciences Natural Language Information System answered convention attendees' questions about the composition of the rocks returned from the Apollo moon missions.

Translating languages was a difficult task before this, as the system had to understand grammar and the syntax in which words were used. Since then, strategies to execute CL began moving away from procedural approaches to the ones that were more linguistic, understandable and modular. In the late 1980s, computing processing power increased, which led to a shift to statistical methods when considering CL. This is also around the time when corpus-based statistical approaches were developed.

Modern CL relies on many of the same tools and processes as NLP. These systems use a variety of tools, including AI, ML, deep learning and cognitive computing. As an example, GPT-3, or the third-generation Generative Pre-trained Transformer, is a neural network ML model that produces text based on user input. It was released by OpenAI in 2020 and was trained using internet data to generate any type of text. The program requires a small amount of input text to generate large relevant volumes of text. GPT-3 is a model with more than 175 billion ML parameters. Compared to the largest trained language model before this, Microsoft's Turing-NLG model only had 17 billion parameters. The latest version of GPT, GPT-4, launched in March 2023. Compared to its predecessors, this model is capable of handling more sophisticated tasks, thanks to improvements in its design and capabilities.

Modern examples of computation linguistics

Computational linguistics has plenty of applications today. Some modern examples include the following:

- Machine translations. Just as translations were one of the earliest examples of computational linguistics, modern implementations such as Google Translate are still popular.

- Chatbots. Modern chatbots like ChatGPT use AI and ML algorithms to understand and generate human language.

- Sentiment analysis. This is a type of NLP that is used to identify the emotional tone of text. Examples include Lexalytics or Azure Text Analysis.

- Data processing. Modern tools like Tableau commonly use NLP techniques to process text data. This normally includes methods like tokenization, stemming and lemmatization to preprocess text.

- Feature extraction. Feature extraction methods used today commonly extract features from text using keywords, linguistic patterns or phrases.

- Speech recognition. Speech recognition systems like Apple's Siri are able to convert spoken language into text.

- Grammar checking. Grammar-checking tools like Grammarly are able to use NLP to analyze text for grammatical correctness, clarity and style.

What computational linguists do

Typically, computational linguists are employed in universities, governmental research labs or large enterprises. In the private sector, vertical companies typically use computational linguists to authenticate the accurate translation of technical manuals. Tech software companies, such as Microsoft, typically hire computational linguists to work on NLP, helping programmers create voice user interfaces that let humans communicate with computing devices as if they were another person. Although a computational linguist's job and tasks might vary from company to company, they commonly perform tasks surrounding speech recognition, machine translation, grammar checking, text mining and various big data applications. Some common job titles for computational linguists include natural language processing engineer, speech scientist and text analyst.

Common business goals of computational linguistics include the following:

- Build applications that integrate human language into the application's functions.

- Create grammatical and semantic frameworks for characterizing languages.

- Translate text from one language to another.

- Offer text and information retrieval that relates to a specific topic.

- Analyze text or spoken language for context, sentiment or other affective qualities.

- Answer questions, including those that require inference and descriptive or discursive answers.

- Summarize text.

- Build dialogue agents capable of completing complex tasks such as making a purchase, planning a trip or scheduling maintenance.

- Create chatbots capable of passing the Turing Test.

- Maintain search engines that rely on human inputs.

- Explore and identify the learning characteristics and processing techniques that constitute both the statistical and structural elements of a language.

How to become a computational linguist

In terms of skills, computational linguists must have a strong background in areas pertaining to computer science and programming, as well as expertise in ML, deep learning, AI, cognitive computing, neuroscience and language analysis. These individuals should also be able to handle large data sets, possess advanced analytical and problem-solving capabilities, and be comfortable interacting with both technical and non-technical professionals.

Individuals pursuing a job as a linguist generally need a master's or a bachelor's degree in a computer science related field. Doctoral degrees are less commonly asked for. Work experience developing natural language software is also commonly asked for. Industry certifications might be seen as another plus, which can demonstrate an individual's skills in AI, ML, NLP and data structures. Other skills a computational linguist should have include programming, mathematics, linguistics and problem-solving.

The ultimate goal of computational linguistics is to enhance communication, revolutionize language technology and elevate human-computer interaction.

Learn about 20 different courses for studying AI, including programs at Cornell University, Harvard University and the University of Maryland, which offer content on computational linguistics.