Build and organize an effective machine learning team

Putting together an ML team requires that a business understand why it needs one and which core roles are involved in making all aspects of the machine learning process work.

Almost every business in every industry wants to harness the power of machine learning to make its products, processes or services more innovative, competitive, personalized and efficient.

AI and ML are built into most networked systems and applications. Whatever the strategic business need, having a dedicated ML team can be a competitive advantage, enabling maximum efficiency, flexibility, and control over the development process and any resulting intellectual property or innovation.

Why build an ML team

There are many compelling reasons to build a team focused on machine learning. The following are some of the key ones:

- Innovation. Machine learning is evolving at a pace that's hard to keep up with. Having a dedicated team researching and implementing the latest advancements, such as agentic AI and generative AI, lets a business achieve cutting-edge breakthroughs. These capabilities can also become valuable new intellectual property.

- Data to drive business decisions. Using ML models for business analytics and prediction remains a core use. ML teams can offer an effective path to facilitate ML development and implementation. In addition, ML analytics and forecasting can drive operational quality improvements across manufacturing, logistics and cybersecurity. All of this supports insightful business decision-making.

- Customized solutions. Prebuilt ML models might not always work well with existing systems. In this case, an in-house ML team enables the development of tailored implementations that address specific business needs. For example, an organization might opt to develop numerous small, highly efficient ML models rather than use a single all-encompassing prebuilt model.

- Competitive advantages. ML teams can evaluate existing products, services and processes, and continuously improve them with ML capabilities. This strategy helps a business stay ahead of market dynamics. For example, adding customer support models and AI to a service can enhance the customer experience and a business's brand.

- Automation and efficiency. A dedicated team can develop ML processes to automate repetitive tasks, leading to increased efficiency and reduced operational costs. Agentic AI implementations are a principal example of ML-driven business automation.

Types of ML teams

A machine learning team is often developed in response to a company's strategic AI initiative. It might be formed to solve a specific business problem, working for a funding sponsor, such as a chief digital officer, chief AI officer or other stakeholder who has a strategic need, organizational approval and the funding to support the initiative.

The sponsor can request team resources from a company's center of excellence (CoE) or from the organization's ML group. A newly hired team might later be integrated into a CoE so that it can be redeployed for other projects. In practice, there are three major types of ML teams:

- Centralized. A centralized team is typically an independent group that has a core of ML professionals, including data scientists and engineers. It's responsible for ML projects and initiatives across the organization, and is used for quick development and knowledge sharing.

- Decentralized. A decentralized team is a short-term, cross-functional group of professionals from across a business. It might include ML, software development, marketing and product professionals. It's focused on specific ML tasks or capabilities, often for ML or AI systems already in production.

- Hybrid. A hybrid team combines the centralized core of ML expertise with decentralized staffing to help guide and implement specific applications or functionality.

How does an ML team operate?

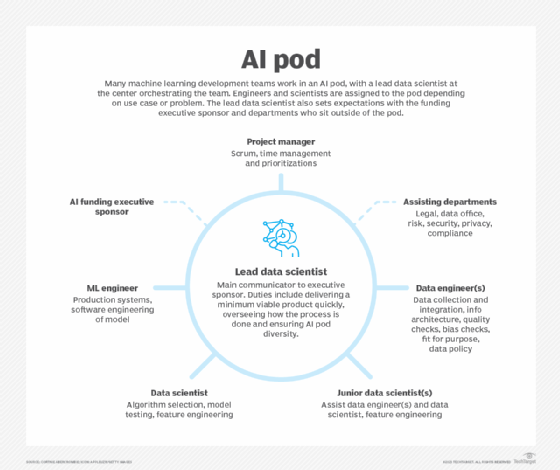

Many ML development teams work in AI pods. Data scientists usually lead an AI pod, organizing the team and setting expectations with the funding executive sponsor and stakeholders outside the pod.

Inside the pod, a project manager manages Scrum, timing and deadline issues. The ML team members work together iteratively to develop a machine learning model, which includes testing, deploying, optimizing and governing it.

The project manager works with data engineers to identify and collect relevant data and develop data policies. Junior data scientists assist experienced data scientists with model creation. ML engineers then work with the data scientists to deploy and govern the final product. ML engineers might include ML operations (MLOps) or other platform engineering professionals with extensive knowledge of infrastructure, model deployment, monitoring and optimization.

AI pods are empowered to make decisions related to the AI initiative as long as they meet the executive sponsor's business needs, especially regarding budget, timing and business outcomes. Executive sponsors are treated as stakeholders rather than collaborative partners, because they generally lack AI literacy. They receive progress updates at key milestones, but the AI pods often retain sovereignty over the team, product and methods.

This sovereignty isn't always a good thing for the executive sponsor or the organization. Ambitious data scientists can exploit this sovereignty and not document their processes, then leave the company for a more interesting or better-paying opportunity. The model and its features could then become a source of technical debt. It's best for executive sponsors to be trained on basic AI development processes so they can insist on certain best practices, such as documentation, throughout the ML development process.

Core roles on an ML team

An AI pod's core roles include project managers, data engineers, data scientists and ML engineers. The following explains the duties fulfilled by each role.

Project manager

The project manager helps the lead data scientist keep the AI initiative on time, on budget and on target. They work with the project stakeholders, gathering their input, understanding business needs, and providing updates and milestone reports. Project manager duties include the following:

- Determining project milestones. They work with the ML team and lead data scientists to understand key workstreams. They also map milestones to deliverable dates and stakeholder meetings.

- Identifying priorities. Project managers hold the ML team to account for completing tasks on time. They use Agile methodologies, such as Scrum, to manage tasks.

- Maintaining trust in the project. They ensure the team doesn't buy, collect or use data that could be biased, inaccurate or compromised in its use of personally identifiable information, trade secrets or other sensitive intellectual property. They also verify that data sets are fit for the AI initiative's purpose and prevent behaviors that could lead to AI bias or compromise safety.

Data engineer

Data engineers fill a crucial ML team role, with duties that can take the longest and be the most tedious. All ML development starts with data. Depending on the project, deciding where to source data or whether to generate synthetic data can be problematic, even before data cleanup and transformation begin. Most of what goes awry with AI is related to the data, rather than the model or production. Data engineering duties include the following:

- Collecting and integrating data. Data engineers gather data from various sources, including databases, APIs, IoT devices, logs and external data sets. They ensure data is collected efficiently, stored securely and available for analysis in an organized manner.

- Building data storage and infrastructure. They design, build and maintain data infrastructure, such as data warehouses, data lakes and feature stores. They also optimize data storage and implement appropriate data security and privacy measures in compliance with regulatory requirements.

- Implementing data orchestration processes. They transform raw data into a suitable format for analysis; automate data processes; create AI data pipelines to move and cleanse data; and use tools, such as AWS Glue and Google Cloud Dataflow, to automate and orchestrate extract, transform and load (ETL) processes.

- Ensuring data quality and governance. This role involves verifying data quality and integrity. Engineers implement data governance policies to maintain accuracy, reliability and adherence to privacy and security standards.

- Optimizing performance. Engineers fine-tune data processing and retrieval to enhance system performance and minimize latency, ensuring efficient data access for data scientists and model training.

- Collaborating with data scientists. They work with data scientists to understand their data requirements, develop data models and support the deployment of ML models in production environments.
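The transform and data quality duties above can be illustrated with a minimal sketch. It assumes a hypothetical order record with `customer_id`, `order_total` and `order_date` fields; the field names, the `QualityReport` type and the rejection rules are illustrative assumptions, not part of any specific tool mentioned in this article. A production pipeline would typically run checks like these inside an orchestration service rather than a standalone script.

```python
from dataclasses import dataclass

# Hypothetical record layout; these field names are illustrative assumptions.
REQUIRED_FIELDS = ("customer_id", "order_total", "order_date")


@dataclass
class QualityReport:
    total: int       # number of rows received
    valid: int       # number of rows that passed every check
    rejected: list   # (row_index, reason) pairs for rows that failed


def clean_record(raw):
    """Transform step: trim string values and cast order_total to float."""
    cleaned = {k: v.strip() if isinstance(v, str) else v for k, v in raw.items()}
    cleaned["order_total"] = float(cleaned["order_total"])
    return cleaned


def run_quality_checks(rows):
    """Return (clean_rows, report), rejecting rows that fail basic checks."""
    clean_rows, rejected = [], []
    for i, raw in enumerate(rows):
        # Completeness check: every required field must be present and non-empty.
        missing = [f for f in REQUIRED_FIELDS if raw.get(f) in (None, "")]
        if missing:
            rejected.append((i, "missing: " + ", ".join(missing)))
            continue
        # Type check: order_total must parse as a number.
        try:
            record = clean_record(raw)
        except ValueError:
            rejected.append((i, "order_total is not numeric"))
            continue
        # Range check: negative totals are treated as invalid in this sketch.
        if record["order_total"] < 0:
            rejected.append((i, "order_total is negative"))
            continue
        clean_rows.append(record)
    return clean_rows, QualityReport(len(rows), len(clean_rows), rejected)
```

Returning the rejected rows with a reason, rather than silently dropping them, gives the team a record it can review when tracing a model problem back to its data.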

Data scientist

There are different levels of data scientists. Larger AI pods include junior data scientists who work with data engineers on key model features, also known as feature engineering. More senior data scientists optimize models for the domain in which the model will be deployed, ensuring the relevance and usefulness of the data and enhancing how data affects the model. Data scientists' duties include the following:

- Analyzing and modeling data. Scientists identify patterns, trends and insights in data, create predictive models and develop algorithms to solve complex business problems.

- Developing ML models. Data scientists design, train and validate ML models using algorithms and techniques. They explore and experiment with different algorithms and models to find the best fit for the problem.

- Optimizing features. Data scientists select, transform and create new variables from raw data. They implement data tagging and labeling to enhance the relevance of important data features.

- Evaluating and optimizing models. They evaluate model performance using defined thresholds and metrics, and fine-tune models to achieve better results.
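As one way to picture evaluation against defined thresholds, the following sketch computes precision and recall for a binary classifier and compares them with minimum acceptable values. The threshold numbers and function names are hypothetical; real projects would choose metrics and limits that match the business problem.

```python
# Hypothetical acceptance thresholds; actual values depend on the use case.
THRESHOLDS = {"precision": 0.70, "recall": 0.60}


def precision_recall(y_true, y_pred):
    """Compute precision and recall for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def meets_thresholds(y_true, y_pred, thresholds=THRESHOLDS):
    """Return (passed, results, failures) for the defined thresholds."""
    precision, recall = precision_recall(y_true, y_pred)
    results = {"precision": precision, "recall": recall}
    # Collect every metric that falls below its minimum acceptable value.
    failures = {
        name: value for name, value in results.items() if value < thresholds[name]
    }
    return not failures, results, failures
```

Keeping the thresholds in one named structure makes them easy to review with stakeholders and to record alongside the model's documentation.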

Machine learning engineer

ML engineers focus on the software engineering aspects of developing, deploying and governing ML models within production systems, using evolving paradigms such as MLOps. Their goal is to create scalable, robust implementations. Some businesses separate ML engineers from MLOps engineers. In this case, the ML engineer works closely with data science and software development team members to build, deploy and scale models, while MLOps engineers focus on production-centric issues, such as infrastructure health, automation and monitoring. ML engineer duties include the following:

- Training and validating models. ML engineers train and validate ML models using separate training and validation data sets and evaluation metrics to ensure models are accurate and generalized.

- Deploying models. They deploy ML models into production environments for real-time use, setting up APIs, provisioning infrastructure resources, managing versioning and handling model updates.

- Optimizing performance. They assess the efficiency and scalability of models, ensuring they can handle large volumes of data and real-time processing.

- Integrating software and ML. ML engineers integrate machine learning into existing software systems and build new applications to use the models effectively.

- Testing, monitoring and maintenance. They also conduct testing and debugging to identify and resolve issues affecting model performance or software integration. They monitor model performance with metrics and KPIs, and maintain models by updating or retraining as necessary, or scaling infrastructure resources. Monitoring and response are increasingly important as agentic AI systems gain autonomy across enterprise workflows, while generative AI must be carefully monitored to prevent malicious, prohibited and illegal output.

- Deploying and managing infrastructure and DevOps. ML engineers work with cloud infrastructure and DevOps teams to set up and manage the resources needed to run ML applications in production environments.
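The monitoring and maintenance duty above can be sketched as a rolling accuracy check that flags a model for retraining. This is a simplified illustration under stated assumptions: the `AccuracyMonitor` class, its window size and its accuracy floor are hypothetical, and it assumes the true outcome for each prediction eventually becomes available. Production monitoring usually tracks several metrics and data drift as well.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions (hypothetical helper)."""

    def __init__(self, window_size=100, min_accuracy=0.9):
        # deque with maxlen drops the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window_size)
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual):
        """Store whether a production prediction matched the true outcome."""
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        """Flag retraining only once the window is full and accuracy is too low."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.min_accuracy
```

Waiting for a full window before raising the flag is a design choice that avoids alerts based on a handful of early predictions.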

Challenges in building an ML team

The following issues can pose challenges when building an in-house machine learning team:

- Retaining talent. One of the first considerations is whether there's enough ongoing work to support a team and keep them engaged. These roles are in high demand, so the lack of an enticing salary and interesting projects might prompt members to leave for the next big resume-building project.

- Cost. Building a team is costly. Businesses might consider augmenting a smaller existing team with outsourced talent.

- Recruiting skilled people. Finding people with ML model development, engineering and other specific skills can be difficult. Good candidates might lack the needed soft skills, such as basic communication capabilities. It's important to interview in person to understand how candidates approach problems and communicate.

- Domain expertise. Hiring for ML skills alone isn't enough. ML team members must possess extensive knowledge of specific industry practices and requirements. Without that knowledge, the team can wind up developing and deploying models that are efficient and reliable but don't solve real business problems.

- Diversity. Ensuring a diverse team is crucial to understanding all the people affected by the product. There are ethnic, cultural, religious and socioeconomic factors to consider, depending on what part of the country the business is in. Flexible schedules and work-from-home options can help expand the applicant pool during hiring. A diverse advisory panel that can weigh in on key decisions is also important.

Best practices to manage an ML team

There's no single approach to managing an ML team, but there are practices that can enhance the team's effectiveness, efficiency and satisfaction.

- Focus on data quality. Bad data is more detrimental to an ML project than bad algorithms or poor programming. Managers must emphasize the importance of data engineering from data origin and acquisition through ETL preprocessing, QA and risk management.

- Avoid rigid timelines. ML is iterative, and the failure rate can be high. Managers who avoid rigid schedules and timelines can use the benefits of iterative failures to lower stress and enhance retention. This ultimately results in better project outcomes.

- Drive collaboration. Team members often have a mix of specializations, such as math-intensive data science versus infrastructure and production operations. Managers who encourage collaboration and interaction among team members can promote cross-training, mentoring, mutual support and better documentation.

- Prioritize consistent workflows. Effective iteration demands consistent workflows and processes. Managers can foster consistency by embracing ML-centric processes, such as MLOps, where the emphasis is on automation, orchestration and monitoring. Such consistency enhances speed and lends itself to transparency and reproducibility, which are vital for AI compliance and governance.

- Build in guardrails. A gap often exists between goals and guardrails. For example, it's one thing to collect useful and valid data, but it's vital for all ML team members to recognize the limits of data use, privacy and security demands, the potential for data bias and other realities of real-world data. Responsibility for data quality might be unevenly distributed across the team, but everyone must understand and adhere to the guardrails.

- Require documentation. All members of the AI pod must document their processes, from data sources and final features to considerations for model selection to API setup and governance. For ML products to be trusted, development must be fully documented to ensure transparency and explainability. Some organizations build documentation requirements into their employment contracts as a condition of hire; others have workflow systems that don't grant next-step approvals until documentation is uploaded. Platforms that include automated documentation and version control can ensure regular, consistent documentation.

- Ensure a variety of skills. Some of the most creative and effective ML teams include a diverse mix of skills and backgrounds. For example, a team that's versed in data science but weak in production might have difficulty with model deployment and monitoring.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 30 years of technical writing experience in the PC and technology industry.

Cortnie Abercrombie is a top advisor to Fortune 500 companies on AI strategy, development, operating models and building trustworthy AI cultures. She is also the founder and CEO of AI Truth, a nonprofit dedicated to the responsible creation and use of AI.