What is a neural network?

A neural network is a machine learning (ML) model designed to process data in a way loosely inspired by the structure and function of the human brain. Neural networks are intricate networks of interconnected nodes, or artificial neurons, that work together to tackle complicated problems.

Also referred to as artificial neural networks (ANNs) or neural nets, neural networks are the foundation of deep learning, which is classified under the broader fields of machine learning and artificial intelligence (AI). Networks with many layers are called deep neural networks.

Neural networks are widely used in a variety of applications, including image recognition, predictive modeling, decision-making and natural language processing (NLP). Examples of significant commercial applications over the past 25 years include handwriting recognition for check processing, speech-to-text transcription, oil exploration data analysis, weather prediction and facial recognition.

How do neural networks work?

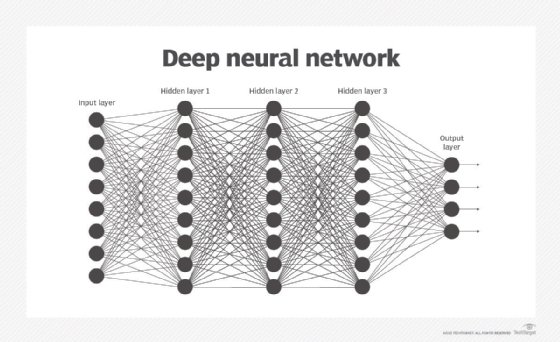

An ANN consists of many simple processing units, or nodes, that can operate in parallel and are arranged in tiers, or layers. A neural network typically has three kinds of layers: an input layer, one or more hidden layers and an output layer. The first tier, analogous to the optic nerves in human visual processing, receives the raw input information. Each successive tier receives the output from the tier preceding it rather than the raw input, the same way biological neurons further from the optic nerve receive signals from those closer to it. The last tier produces the system's output.

Each processing node has its own small sphere of knowledge, including what it has seen and any rules it was originally programmed with or developed for itself. In most modern networks, this knowledge is stored as the weights on the node's incoming connections plus a bias value. The tiers are highly interconnected, which means each node in Tier N is connected to many nodes in Tier N-1, which provide its inputs, and to many nodes in Tier N+1, for which it provides input data. There could be one or more nodes in the output layer, from which the network's answer can be read.
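The tier-by-tier flow described above can be sketched in plain Python. This is a minimal illustration that assumes a fully connected network with a sigmoid activation; the layer sizes, the hand-picked weights and the function names `layer_forward` and `network_forward` are hypothetical choices for the example, not part of any particular library.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic activation: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Compute one tier: each node takes a weighted sum of all outputs
    from the previous tier, adds its bias and applies the activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Pass the raw input through each tier in turn. Only the first tier
    sees the raw input; every later tier sees the previous tier's output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical 3-2-1 network with hand-picked weights (illustration only).
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # hidden tier: 2 nodes
    ([[1.0, -1.0]], [0.2]),                               # output tier: 1 node
]
output = network_forward([1.0, 0.5, -1.0], layers)
print(output)
```

Each tier's output list becomes the next tier's input list, mirroring how Tier N reads only from Tier N-1.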

ANNs are noted for being adaptive, which means they modify themselves as they learn from initial training, and subsequent runs give them more information about the world. The most basic learning model is centered on weighting the input streams, which is how each node measures the importance of the input data from each of its predecessors. Inputs that contribute to getting the right answers are weighted higher.
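To make the idea of weighting input streams concrete, here is a sketch of one classic rule, the delta (least-mean-squares) rule, applied to a single linear node. The data set, learning rate and epoch count are invented for illustration; modern multilayer networks generalize this idea with backpropagation rather than using this exact single-node rule.

```python
def train_delta_rule(examples, n_inputs, learning_rate=0.1, epochs=50):
    """Adjust a linear node's weights with the delta rule:
    w_i += learning_rate * (target - prediction) * x_i."""
    weights = [0.0] * n_inputs
    for _ in range(epochs):
        for inputs, target in examples:
            prediction = sum(w * x for w, x in zip(weights, inputs))
            error = target - prediction
            for i, x in enumerate(inputs):
                # Inputs that would have moved the answer toward the target gain weight.
                weights[i] += learning_rate * error * x
    return weights


# Hypothetical data: the target equals the first input; the second input
# is unrelated to the right answer.
examples = [([1.0, 1.0], 1.0), ([1.0, -1.0], 1.0), ([0.0, 1.0], 0.0), ([0.0, -1.0], 0.0)]
weights = train_delta_rule(examples, n_inputs=2)
print(weights)
```

After training, the weight on the informative first input approaches 1, while the weight on the irrelevant second input stays near 0.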

Applications of neural networks

Image recognition was one of the first areas in which neural networks were successfully applied. But the uses of neural networks have since expanded to many additional areas, including the following:

- Chatbots.

- Computer vision.

- NLP, translation and language generation.

- Speech recognition.

- Recommendation engines.

- Stock market forecasting.

- Delivery driver route planning and optimization.

- Drug discovery and development.

- Social media.

- Personal assistants.

- Pattern recognition.

- Regression analysis.

- Process and quality control.

- Targeted marketing through social network filtering and behavioral data insights.

- Generative AI.

- Quantum chemistry.

- Data visualization.

Prime uses involve any process that operates according to strict rules or patterns and has large amounts of data. If the data involved is too large for a human to make sense of in a reasonable amount of time, the process is likely a prime candidate for automation through artificial neural networks.

How are neural networks trained?

Typically, an ANN is first trained by being fed large amounts of data. Training consists of providing input and telling the network what the output should be. For example, to build a network that identifies the faces of actors, the initial training might use a series of pictures, including actors, non-actors, masks, statues and animal faces. Each input is accompanied by a matching label, such as the actor's name or "not actor" or "not human." Providing the answers enables the model to adjust its internal weightings to do its job better.

For example, if nodes David, Dianne and Dakota tell node Ernie that the current input image is a picture of Brad Pitt, but node Durango says it's George Clooney, and the training program confirms it's Pitt, Ernie decreases the weight it assigns to Durango's input and increases the weight it gives to David, Dianne and Dakota.
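The Ernie scenario can be simulated with a toy rule. The update below, which multiplies the weights of agreeing predecessors by 1.2 and of disagreeing ones by 0.8, is a hypothetical rule chosen for readability (it resembles the weighted majority algorithm); real networks typically adjust weights with gradient-based methods instead. The function names are likewise illustrative.

```python
def weighted_vote(votes, weights):
    """Ernie's answer: the label with the most total weight behind it."""
    totals = {}
    for node, label in votes.items():
        totals[label] = totals.get(label, 0.0) + weights[node]
    return max(totals, key=totals.get)


def update_weights(votes, weights, correct_label, rate=0.2):
    """Hypothetical rule: boost predecessors that agreed with the confirmed
    answer and shrink those that disagreed."""
    return {
        node: w * (1 + rate) if votes[node] == correct_label else w * (1 - rate)
        for node, w in weights.items()
    }


weights = {"David": 1.0, "Dianne": 1.0, "Dakota": 1.0, "Durango": 1.0}
votes = {"David": "Pitt", "Dianne": "Pitt", "Dakota": "Pitt", "Durango": "Clooney"}

print(weighted_vote(votes, weights))  # Pitt
weights = update_weights(votes, weights, correct_label="Pitt")
print(weights)  # Durango's weight falls; the other three rise
```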

In defining the rules and making determinations -- the decisions of each node on what to send to the next layer based on inputs from the previous tier -- neural networks use several principles. These include gradient-based training, fuzzy logic, genetic algorithms and Bayesian methods. They might be given some basic rules about object relationships in the data being modeled.

For example, a facial recognition system might be instructed, "Eyebrows are found above eyes," or "Mustaches are below a nose. Mustaches are above and/or beside a mouth." Preloading rules can speed up training and help the model become capable sooner. But it also builds in assumptions about the nature of the problem, which could prove to be either irrelevant and unhelpful or incorrect and counterproductive, making the decision about what rules, if any, to build in an important one.
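Of the principles listed above, genetic algorithms are probably the least familiar. The sketch below shows one simple way to apply them to a network's weights (an approach sometimes called neuroevolution). It assumes a single linear node, mean squared error as the fitness measure and arbitrary settings for population size, mutation scale and generation count; the names `loss` and `evolve` are hypothetical.

```python
import random


def loss(weights, examples):
    """Mean squared error of a single linear node (lower is fitter)."""
    return sum(
        (sum(w * x for w, x in zip(weights, xs)) - y) ** 2 for xs, y in examples
    ) / len(examples)


def evolve(examples, n_weights, pop_size=20, generations=100, sigma=0.1, seed=0):
    """Evolve weight vectors by selection, crossover and mutation.
    Returns the best weights and the best loss seen at each generation."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1, 1) for _ in range(n_weights)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=lambda w: loss(w, examples))
        history.append(loss(population[0], examples))
        parents = population[: pop_size // 2]  # selection: keep the fitter half
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # Crossover picks each weight from one parent; mutation adds noise.
            children.append([rng.choice(pair) + rng.gauss(0, sigma) for pair in zip(a, b)])
        population = parents + children
    best = min(population, key=lambda w: loss(w, examples))
    return best, history


# Hypothetical data generated by y = 0.5*x0 - 0.3*x1.
data = [([1.0, 0.0], 0.5), ([0.0, 1.0], -0.3), ([1.0, 1.0], 0.2), ([2.0, 1.0], 0.7)]
best, history = evolve(data, n_weights=2)
print(best, history[-1])
```

Because the fitter half of each generation survives unchanged, the best loss can never get worse from one generation to the next, which the returned history makes easy to check.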

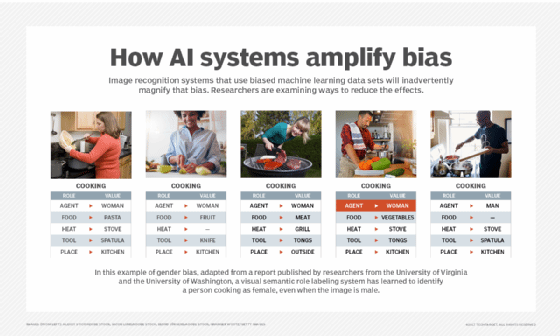

Further, the assumptions people make when training algorithms can cause neural networks to amplify cultural biases. Biased data sets are an ongoing challenge in training systems that find answers on their own through pattern recognition in data. If the data feeding the algorithm isn't neutral -- and almost no data is -- the machine propagates bias.

Types of neural networks

Neural networks are sometimes described in terms of their depth, meaning how many layers they have between input and output, the model's so-called hidden layers. This is why the term neural network is used almost synonymously with deep learning. Neural networks can also be described by the number of hidden nodes the model has, or by how many inputs and outputs each node has. Variations on the classic neural network design enable various forms of forward and backward propagation of information among tiers.
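For a fully connected network, the descriptions above can be computed directly from a list of layer sizes. This is a small sketch assuming every node in one tier connects to every node in the next and each non-input node has one bias; the 784-128-64-10 shape is a hypothetical example.

```python
def count_parameters(layer_sizes):
    """Weights plus biases in a fully connected network.
    layer_sizes lists the node count of each tier, input tier first."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out  # one weight per connection, one bias per node
    return total


def describe(layer_sizes):
    """Summarize a network by depth, hidden nodes and trainable parameters."""
    hidden = layer_sizes[1:-1]
    return {
        "hidden_layers": len(hidden),
        "hidden_nodes": sum(hidden),
        "parameters": count_parameters(layer_sizes),
    }


# Hypothetical network: 784 inputs (a 28x28 image), two hidden tiers, 10 outputs.
print(describe([784, 128, 64, 10]))
# {'hidden_layers': 2, 'hidden_nodes': 192, 'parameters': 109386}
```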

Specific types of ANNs include the following:

Feed-forward neural networks

One of the simplest variants of neural networks, feed-forward networks pass information in one direction only, from the input nodes through any hidden layers to the output nodes, with no loops. The network might or might not have hidden layers; those without them are easier to interpret. Feed-forward networks can also tolerate a large amount of noise in their input. This type of ANN computational model is used in technologies such as facial recognition and computer vision.

Recurrent neural networks

More complex in nature, recurrent neural networks (RNNs) save the output of processing nodes and feed the result back into the model as an input for the next step in a sequence. This feedback loop lets the model use what it has seen earlier in the sequence when predicting what comes next. Each node in the RNN model acts as a memory cell, carrying its state forward as the computation continues.

This neural network starts with the same forward propagation as a feed-forward network, but then retains information from previously processed inputs in its hidden state so it can reuse that information later. If the network's prediction is incorrect, the error is propagated back through the earlier steps of the sequence during backpropagation, and the weights are adjusted toward the correct prediction. This type of ANN is frequently used in text-to-speech conversions.
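The feedback loop can be sketched with a simple Elman-style recurrent cell, one common form of RNN; gated variants such as LSTMs and the backpropagation step are not shown. The weights and the names `rnn_step` and `rnn_forward` are hypothetical.

```python
import math


def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One recurrent step: the new hidden state mixes the current input
    with the previous hidden state, then applies tanh."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(W_xh[j], x))
            + sum(u * hi for u, hi in zip(W_hh[j], h_prev))
            + b_h[j]
        )
        for j in range(len(b_h))
    ]


def rnn_forward(sequence, W_xh, W_hh, b_h):
    """Run the cell over a sequence, carrying the hidden state forward."""
    h = [0.0] * len(b_h)
    states = []
    for x in sequence:
        h = rnn_step(x, h, W_xh, W_hh, b_h)
        states.append(h)
    return states


W_xh = [[0.5], [-0.3]]           # 2 hidden units, 1 input feature
W_hh = [[0.1, 0.2], [0.0, 0.4]]  # recurrent (hidden-to-hidden) weights
b_h = [0.0, 0.0]
states = rnn_forward([[1.0], [0.0], [0.0]], W_xh, W_hh, b_h)
print(states)
```

Only the first input is nonzero, yet later hidden states are still nonzero because the recurrent weights carry that earlier signal forward. With `W_hh` set to all zeros, the same sequence yields zero states after the first step, as a feed-forward layer would.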

Convolutional neural networks

Convolutional neural networks (CNNs) are one of the most popular models used today. This computational model uses a variation of multilayer perceptrons and contains one or more convolutional layers, typically combined with pooling layers and, near the output, fully connected layers. The convolutional layers slide small filters across the image to create feature maps, each recording where a particular feature appears in each region of the image; the results are then passed through a nonlinear activation function.

The CNN model is particularly popular in the realm of image recognition. It has been used in many of the most advanced applications of AI, including facial recognition, text digitization and NLP. Other use cases include paraphrase detection, signal processing and image classification.
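The core CNN operations can be sketched without any libraries: a convolution that builds a feature map, a ReLU nonlinearity and max pooling. This is a minimal illustration; the 5x5 image and the 2x2 edge-detecting filter are hypothetical, and real CNNs learn their filter values during training.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution as used in CNNs (technically cross-correlation:
    the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Nonlinear activation: negative responses become 0."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling: keep the strongest response per block."""
    return [
        [
            max(feature_map[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


# Hypothetical 5x5 image with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
vertical_edge = [[-1, 1], [-1, 1]]  # responds where intensity rises left to right
fmap = relu(conv2d(image, vertical_edge))
pooled = max_pool(fmap)
print(pooled)  # [[2, 0], [2, 0]]
```

The feature map lights up only in the column where the edge sits, and pooling shrinks it while keeping that strong response.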

Deconvolutional neural networks

Deconvolutional neural networks use a reversed CNN learning process. They try to find lost features or signals that might have originally been considered unimportant to the CNN system's task. This network model can be used in image synthesis and analysis.
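In many deep learning frameworks, layers labeled "deconvolution" are implemented as transposed convolutions, which map a small feature map back to a larger one. The 1D sketch below shows that single building block under this assumption; the kernel values, stride and the name `conv_transpose_1d` are illustrative, not a full deconvolutional network.

```python
def conv_transpose_1d(signal, kernel, stride=2):
    """1D transposed convolution: each input value stamps a scaled copy of
    the kernel into the output, `stride` positions apart; overlaps add up."""
    out = [0.0] * ((len(signal) - 1) * stride + len(kernel))
    for i, value in enumerate(signal):
        for k, weight in enumerate(kernel):
            out[i * stride + k] += value * weight
    return out


upsampled = conv_transpose_1d([1.0, 2.0, 3.0], [1.0, 0.5])
print(upsampled)  # [1.0, 0.5, 2.0, 1.0, 3.0, 1.5]
```

A three-value input becomes a six-value output, the reverse of how a strided convolution shrinks its input.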

Modular neural networks

These contain multiple neural networks working separately from one another. The networks don't communicate or interfere with each other's activities during the computation process. Consequently, complex or big computational processes can be performed more efficiently.

Perceptron neural networks

These represent the most basic form of neural networks and were introduced in 1958 by Frank Rosenblatt, an American psychologist who's sometimes called the father of deep learning. The perceptron is specifically designed for binary classification tasks, enabling it to differentiate between two classes based on input data.
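Rosenblatt's learning rule fits in a few lines. The sketch below trains a single perceptron with a step activation on the logical AND function; the learning rate, epoch count and function names are assumptions for the example.

```python
def predict(weights, bias, x):
    """Step activation: output 1 if the weighted sum exceeds 0, else 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(examples, epochs=20, lr=1.0):
    """Perceptron rule: nudge weights by (target - prediction) * input."""
    weights, bias = [0.0] * len(examples[0][0]), 0.0
    for _ in range(epochs):
        for x, target in examples:
            error = target - predict(weights, bias, x)  # -1, 0 or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


AND = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # [0, 0, 0, 1]

XOR = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]
w_x, b_x = train_perceptron(XOR)
print([predict(w_x, b_x, x) for x, _ in XOR])  # never matches [0, 1, 1, 0]
```

AND is linearly separable, so the rule converges. XOR is not, so no single perceptron can classify all four cases correctly, however long it trains.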

Multilayer perceptron networks

Multilayer perceptron (MLP) networks consist of multiple layers of neurons, including an input layer, one or more hidden layers, and an output layer. Each layer is fully connected to the next, meaning that every neuron in one layer is connected to every neuron in the subsequent layer. This architecture enables MLPs to learn complex patterns and relationships in data, making them suitable for various classification and regression tasks.
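Adding a single hidden layer is enough to solve XOR, a problem a lone perceptron can't. The weights below are set by hand rather than learned, and a step activation is used for readability; practical MLPs use differentiable activations so backpropagation can learn such weights.

```python
def step(z):
    """Threshold activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def xor_mlp(x1, x2):
    """Hidden layer: an OR neuron and a NAND neuron.
    Output layer: an AND neuron combining the two hidden outputs."""
    h_or = step(x1 + x2 - 0.5)
    h_nand = step(-x1 - x2 + 1.5)
    return step(h_or + h_nand - 1.5)


print([xor_mlp(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
```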

Radial basis function networks

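Radial basis function networks use radial basis functions, such as the Gaussian, as the activation functions of their hidden layer, so each hidden node responds according to how far the input lies from that node's center. They're typically used for function approximation, time series prediction and control systems.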
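A minimal forward pass for an RBF network, assuming Gaussian hidden units that share one width and a linear output layer. The centers, width and output weights are hypothetical; in practice, centers are often chosen by clustering and output weights fitted to data.

```python
import math


def gaussian_rbf(x, center, width):
    """Response is 1 at the center and falls off with squared distance."""
    dist_sq = sum((xi - ci) ** 2 for xi, ci in zip(x, center))
    return math.exp(-dist_sq / (2 * width ** 2))


def rbf_network(x, centers, width, output_weights, bias=0.0):
    """Hidden layer of Gaussian units, then a linear output layer."""
    hidden = [gaussian_rbf(x, c, width) for c in centers]
    return sum(w * h for w, h in zip(output_weights, hidden)) + bias


centers = [[0.0], [1.0], [2.0]]
y = rbf_network([1.0], centers, width=0.5, output_weights=[1.0, 2.0, 1.0])
print(y)
```

The input sits exactly on the middle center, so that unit responds fully, while its two neighbors contribute only a small amount each.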

Transformer neural networks

Transformer neural networks are reshaping NLP and other fields through a range of advancements. Introduced by Google in a 2017 paper, transformers are specifically designed to process sequential data, such as text, by effectively capturing relationships and dependencies between elements in the sequence, regardless of their distance from one another.

Transformer neural networks have gained popularity as an alternative to CNNs and RNNs because their "attention mechanism" enables them to capture and process all the elements in a sequence simultaneously rather than one at a time, as an RNN does, which is a distinct advantage over other neural network architectures.
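The attention mechanism from the 2017 transformer paper computes softmax(QK^T / sqrt(d_k))V. The sketch below implements that formula in plain Python; it omits the learned query, key and value projections and the multiple attention heads that real transformers use, and the three two-dimensional token vectors are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention. Each query is scored against every key,
    so distant and nearby positions are treated alike."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:  # iterations are independent, so they can run in parallel
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Self-attention: the same vectors serve as queries, keys and values here.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = attention(tokens, tokens, tokens)
print(out)
```

Because the loop over queries has no dependency between iterations, real implementations compute every position at once as matrix multiplications. That is the parallelism the text contrasts with RNNs.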

Generative adversarial networks

Generative adversarial networks consist of two neural networks -- a generator and a discriminator -- that compete against each other. The generator creates fake data, while the discriminator evaluates its authenticity. These types of neural networks are widely used for generating realistic images and data augmentation processes.
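The competition between the generator G and the discriminator D is usually written as a two-player minimax game. The objective below is the one from the original GAN formulation (Goodfellow et al., 2014). Here, D(x) is the discriminator's estimated probability that x is real data, G(z) is the generator's output for random noise z, and p_data and p_z are the data and noise distributions. The discriminator tries to maximize the value; the generator tries to minimize it. Many practical GAN variants modify this loss.

```latex
\min_{G} \max_{D} V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}(z)}\left[\log\left(1 - D(G(z))\right)\right]
```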

Advantages of artificial neural networks

Artificial neural networks offer the following benefits:

- Parallel processing. ANNs' parallel processing abilities mean the network can perform more than one job at a time.

- Feature extraction. Neural networks can automatically learn and extract relevant features from raw data, which simplifies the modeling process. By contrast, traditional ML methods often require manual feature engineering.

- Information storage. ANNs store information across the entire network rather than in a single database. As a result, even if a small amount of data disappears from one location, the entire network continues to operate.

- Nonlinearity. The ability to learn and model nonlinear, complex relationships helps model the real-world relationships between input and output.

- Fault tolerance. ANNs are fault-tolerant, which means the corruption or failure of one or more neurons in the network doesn't necessarily stop it from generating output.

- Gradual corruption. When parts of the network fail, its performance degrades slowly over time instead of failing all at once.

- Unrestricted input variables. No restrictions are placed on the input variables, such as how they should be distributed.

- Observation-based decisions. Because they are ML models, ANNs can learn from events and make decisions based on those observations.

- Unorganized data processing. ANNs are exceptionally good at organizing large amounts of data by processing, sorting and categorizing it.

- Ability to learn hidden relationships. ANNs can learn the hidden relationships in data without being given any fixed relationship in advance. This means ANNs can better model highly volatile data and nonconstant variance.

- Ability to generalize. ANNs can infer relationships that hold beyond their training examples, which means they can predict the output for data they haven't seen.

Disadvantages of artificial neural networks

Along with their numerous benefits, neural networks also have some drawbacks, including the following:

- Lack of rules. The lack of rules for determining the proper network structure means the appropriate ANN architecture can only be found through trial, error and experience.

- Computationally expensive. Neural networks use many computational resources. Training them can be time-consuming and can require significant processing power and memory. This can be a barrier for organizations with limited resources or those needing real-time processing.

- Hardware dependency. Because they need processors with parallel processing abilities, such as GPUs, neural networks depend on suitable hardware.

- Numerical translation. The network works with numerical information, meaning all problems must be translated into numerical values before they can be presented to the ANN.

- Lack of trust. The inability to explain how a network reached a solution is one of the biggest disadvantages of ANNs. Without an explanation of the why or how behind a solution, users may not trust the network.

- Inaccurate results. If not trained properly, ANNs can often produce incomplete or inaccurate results.

- Black box nature. Because neural networks operate as black box AI models, it can be challenging to understand how they make their predictions or categorize data.

- Overfitting. Neural networks are susceptible to overfitting, particularly when trained on small data sets. They can end up learning the noise in the training data instead of the underlying patterns, which can result in poor performance on new and unseen data.

History and timeline of neural networks

The history of neural networks spans several decades and has seen considerable advancements. The following examines the important milestones and developments in the history of neural networks:

- 1940s. In 1943, neurophysiologist Warren McCulloch and logician Walter Pitts proposed a mathematical model of neurons as simple threshold logic units that could be combined into circuits, intended to approximate the functioning of the human brain.

- 1950s. In 1958, Rosenblatt created the perceptron, a form of artificial neural network capable of learning and making judgments by modifying its weights. The perceptron featured a single layer of computing units and could handle only problems that were linearly separable.

- 1970s. Paul Werbos, an American scientist, developed the backpropagation method, which facilitated the training of multilayer neural networks. It made deep learning possible by enabling weights to be adjusted across the network based on the error calculated at the output layer.

- 1980s. Cognitive psychologist and computer scientist Geoffrey Hinton, computer scientist Yann LeCun and a group of fellow researchers began investigating the concept of connectionism, which emphasizes the idea that cognitive processes emerge through interconnected networks of simple processing units. This period paved the way for modern neural networks and deep learning models.

- 1990s. Jürgen Schmidhuber and Sepp Hochreiter, both computer scientists from Germany, proposed the long short-term memory recurrent neural network framework in 1997.

- 2000s. Hinton and his colleagues at the University of Toronto developed practical training methods for restricted Boltzmann machines (RBMs), a type of generative artificial neural network that enables unsupervised learning. Stacked RBMs opened the path for deep belief networks and deep learning algorithms.

- 2010s. Research in neural networks picked up great speed around 2010. The big data trend, where companies amass vast troves of data, and parallel computing gave data scientists the training data and computing resources needed to run complex ANNs. In 2012, a neural network named AlexNet won the ImageNet Large Scale Visual Recognition Challenge, an image classification competition.

- 2020s and beyond. Neural networks continue to undergo rapid development, with advancements in architecture, training methods and applications. Researchers are exploring new network structures such as transformers and graph neural networks, which excel in NLP and understanding complex relationships. Additionally, techniques such as transfer learning and self-supervised learning are enabling models to learn from smaller data sets and generalize better. These developments are driving progress in fields such as healthcare, autonomous vehicles and climate modeling.