What is black box AI?

Black box AI is any artificial intelligence system whose internal workings and operations aren't visible to the user or another interested party. A black box, in a general sense, is an impenetrable system.

Black box AI models arrive at conclusions or decisions without providing any explanations as to how they were reached.

As AI technology has evolved, two main types of AI systems have emerged: black box AI and explainable (or white box) AI. The term black box refers to systems that are not transparent to users. Simply put, AI systems whose internal workings, decision-making workflows, and contributing factors are not visible or remain unknown to human users are known as black box AI systems.

The lack of transparency makes it hard for humans to understand or explain how the system's underlying model arrives at its conclusions. Black box AI models might also create problems related to flexibility (updating the model as needs change), bias (incorrect results that may offend or damage some groups of humans), accuracy validation (hard to validate or trust the results), and security (unknown flaws make the model susceptible to cyberattacks).

How do black box machine learning models work?

When a machine learning model is being developed, the learning algorithm takes millions of data points as inputs and correlates specific data features to produce outputs.

The process typically includes these steps:

- Sophisticated AI algorithms examine extensive data sets to find patterns. To achieve this, the algorithm ingests a large number of data examples, enabling it to experiment and learn on its own through trial and error. As the model receives more training data, it adjusts its internal parameters until it can accurately predict the output for new inputs.

- As a result of this training, the model is finally ready to make predictions using real-world data. Fraud detection using a risk score is an example use case for this mechanism.

- The model scales its method, approaches, and body of knowledge and then produces progressively better output as additional data is gathered and fed to it over time.
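The steps above can be sketched in a few lines. This is only a minimal illustration, assuming a logistic regression trained by gradient descent on a handful of invented transactions; the feature names (`amount_scaled`, `foreign_flag`), the data and the function names are hypothetical, and real fraud models are far larger.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into the range 0..1 so it reads as a risk score."""
    return 1.0 / (1.0 + math.exp(-z))

def risk_score(weights, bias, features):
    """Score one transaction with the current internal parameters."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)

def train_risk_model(examples, epochs=500, learning_rate=0.5):
    """Adjust weights and bias by gradient descent on the log loss."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = risk_score(weights, bias, features) - label
            # Nudge each parameter in the direction that shrinks the error.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

# Invented training data: (amount_scaled, foreign_flag) -> 1 = fraud, 0 = legitimate.
training_data = [
    ((0.10, 0.0), 0), ((0.20, 0.0), 0), ((0.30, 1.0), 0),
    ((0.80, 1.0), 1), ((0.90, 0.0), 1), ((0.95, 1.0), 1),
]

weights, bias = train_risk_model(training_data)
print("risk for large foreign payment:", risk_score(weights, bias, (0.85, 1.0)))
print("risk for small domestic payment:", risk_score(weights, bias, (0.15, 0.0)))
```

Even in this tiny model, the learned weights come from the data rather than from rules a person wrote. With millions of parameters instead of three, reading them directly stops being practical.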

In many cases, the inner workings of black box machine learning models are not readily available and are largely self-directed. This is why it's challenging for data scientists, programmers and users to understand how the model generates its predictions or to trust the accuracy and veracity of its results.

How do black box deep learning models work?

Many black box AI models are based on deep learning, a branch of AI -- in particular, a branch of machine learning -- in which multilayered or deep neural networks are used to mimic the human brain and simulate its decision-making ability. The neural networks are composed of multiple layers of interconnected nodes known as artificial neurons.

In black box models, these deep networks of artificial neurons disperse data and decision-making across tens of thousands of neurons or more. The neurons work together to process the data and identify patterns within it, allowing the AI model to make predictions and arrive at certain decisions or answers.
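To make the layered structure concrete, here is a rough sketch of a forward pass through a very small network. The layer sizes, the random weights and the helper names (`build_network`, `forward`, `count_parameters`) are assumptions chosen for illustration, not taken from any particular framework.

```python
import math
import random

def dense_layer(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then tanh."""
    return [
        math.tanh(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def build_network(layer_sizes, seed=0):
    """Create random weights and biases for each pair of adjacent layers."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [rng.uniform(-1, 1) for _ in range(n_out)]
        layers.append((weights, biases))
    return layers

def forward(layers, inputs):
    """Pass the inputs through every layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

def count_parameters(layers):
    """Total number of weights and biases in the network."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)

# 4 inputs, two hidden layers of 16 neurons, 1 output.
network = build_network([4, 16, 16, 1])
output = forward(network, [0.2, -0.5, 0.9, 0.1])
print("output:", output)
print("parameters:", count_parameters(network))
```

This toy network already has several hundred parameters, and no single one of them "contains" the decision. Production networks scale the same structure up by many orders of magnitude.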

This dispersal results in a complexity that can be just as difficult to understand as that of the human brain. As with machine learning models, it's hard for humans to identify a deep learning model's "how," or the specific steps it took to make those predictions or arrive at those decisions. For all these reasons, such deep learning systems are known as black box AI systems.

Issues with black box AI

While black box AI models are appropriate and highly valuable in some circumstances, they can pose several issues.

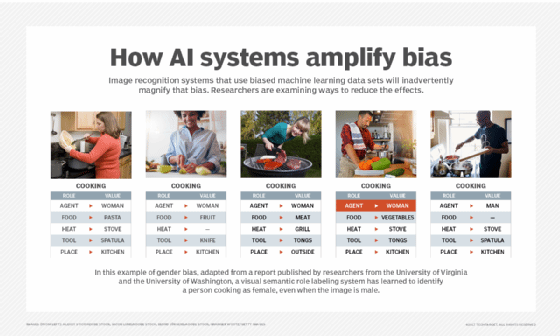

1. AI bias

AI bias can be introduced into machine learning algorithms or deep learning neural networks as a reflection of conscious or unconscious prejudices on the part of the developers. Bias can also creep in through undetected errors or through training data whose characteristics have not been properly examined. Usually, the results of a biased AI system will be skewed or outright incorrect, potentially in a way that's offensive, unfair or downright dangerous to some people or groups.

Example

An AI system used for IT recruitment might rely on historical data to help HR teams select candidates for interviews. However, because history shows that most IT staff in the past were male, the AI algorithm might use this information to recommend only male candidates, even if the pool of potential candidates includes qualified women. Simply put, it displays a bias toward male applicants and discriminates against female applicants. Similar issues could occur with other groups, such as candidates from certain ethnic groups, religious minorities or immigrant populations.

With black box AI, it's hard to identify where the bias is coming from or if the system's models are unbiased. If the inherent bias results in consistently skewed results, it might damage the reputation of the organization using the system. It might also result in legal actions for discrimination. Bias in black box AI systems can also have a social cost, leading to the marginalization of, harassment of, wrongful imprisonment of, and even injury to or death of certain groups of people.
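Even when a model's internals are hidden, its outputs can still be audited. The sketch below compares selection rates across groups in a log of recommendations. The log is invented, the function names are hypothetical, and the 0.8 threshold is an assumption borrowed from the commonly cited "four-fifths" rule of thumb, not a legal test.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs -> rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Invented audit log of the recruitment model's interview recommendations.
audit_log = (
    [("male", True)] * 6 + [("male", False)] * 4
    + [("female", True)] * 2 + [("female", False)] * 8
)

rates = selection_rates(audit_log)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # assumed threshold, for illustration only
    print("Selection rates differ enough to warrant a closer review.")
```

An audit like this shows that outcomes are skewed, but not why. Tracing the skew back to a specific feature or data source is the part a black box makes difficult.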

To prevent such damaging consequences, AI developers must build transparency into their algorithms. It's also important that they comply with AI regulations, hold themselves accountable for mistakes, and commit to promoting the responsible development and use of AI.

In some cases, techniques such as sensitivity analysis and feature visualization can be used to provide a glimpse into how the internal processes of the AI model are working. Even so, in most cases, these processes remain opaque.
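As a rough illustration of sensitivity analysis, the sketch below nudges one input at a time and records how much the output moves. The `opaque_model` function is a hypothetical stand-in for a black box; its formula and its feature names (income, debt, age) are invented, and the analysis itself only ever calls the model, never reads its internals.

```python
import math

def opaque_model(features):
    """Stand-in for a black box: callers see only the inputs and the output."""
    income, debt, age = features
    return 1.0 / (1.0 + math.exp(-(3.0 * income - 4.0 * debt + 0.1 * age)))

def sensitivity(model, baseline, delta=0.01):
    """Estimate how strongly each input moves the output near the baseline."""
    base_output = model(baseline)
    effects = []
    for i in range(len(baseline)):
        nudged = list(baseline)
        nudged[i] += delta  # change one feature, hold the others fixed
        effects.append((model(nudged) - base_output) / delta)
    return effects

effects = sensitivity(opaque_model, [0.5, 0.3, 0.4])
for name, effect in zip(["income", "debt", "age"], effects):
    print(f"{name}: {effect:+.3f}")
```

The result describes the model's behavior around one input only. A different baseline can give a different ranking, which is one reason such techniques offer a glimpse and not a full explanation.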

2. Lack of transparency and accountability

The complexity of black box AI models can prevent developers from properly understanding and auditing them, even if they produce accurate results. Some AI experts, even those who were part of some of the most groundbreaking achievements in the field of AI, don't fully understand how these models work. Such a lack of understanding reduces transparency and weakens accountability.

These issues can be extremely problematic in high-stakes fields like healthcare, banking, military and criminal justice. Since the choices and decisions made by these models cannot be verified, the eventual effects on people's lives can be far-reaching, and not always in a good way. It can also be difficult to hold individuals responsible for the algorithm's judgments if it relies on opaque models.

3. Lack of flexibility

Another big problem with black box AI is its lack of flexibility. If the model needs to be changed for a different use case -- say, to describe a different but physically comparable object -- determining the new rules or bulk parameters for the update might require a lot of work.

4. Difficult to validate results

The results black box AI generates are often difficult to validate and replicate. How did the model arrive at this particular result? Why did it arrive only at this result and no other? How do we know that this is the best/most correct answer? It's almost impossible to find the answers to these questions and to rely on the generated results to support human actions or decisions. This is one reason why it's not advisable to process sensitive data using a black box AI model.

5. Security flaws

Black box AI models often contain flaws that threat actors can exploit to manipulate the input data. For instance, they could change the data to influence the model's judgment so it makes incorrect or even dangerous decisions. Since there's no way to reverse engineer the model's decision-making process, it's almost impossible to stop it from making bad decisions.
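The sketch below shows how a small change to the input can flip a decision. It is a simplified illustration, assuming a linear fraud scorer with invented weights and an attacker who already knows which direction each feature pushes the score. Against a true black box, an attacker would have to discover that by probing.

```python
def fraud_flag(weights, bias, features):
    """Flag the transaction when the weighted score is positive."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return score > 0

def perturb_against(weights, features, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    def direction(w):
        return 1 if w > 0 else -1 if w < 0 else 0
    return [x - epsilon * direction(w) for w, x in zip(weights, features)]

# Invented parameters and input, for illustration only.
weights = [2.0, -1.0, 0.5]
bias = -1.0
original = [0.7, 0.2, 0.1]

tampered = perturb_against(weights, original, epsilon=0.1)
print("original flagged:", fraud_flag(weights, bias, original))
print("tampered flagged:", fraud_flag(weights, bias, tampered))
```

Each feature moves by no more than 0.1, yet the decision changes. When the model's reasoning cannot be inspected, spotting that an input sits this close to a decision boundary is much harder.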

It's also difficult to identify other security blind spots affecting the AI model. One common blind spot arises from third parties that have access to the model's training data. If these parties fail to follow good security practices to protect the data, it's hard to keep it out of the hands of cybercriminals, who might gain unauthorized access to manipulate the model and distort its results.

When should black box AI be used?

Although black box AI models pose many challenges, they also offer several advantages:

- Higher accuracy. Complex black box systems might provide higher prediction accuracy than more interpretable systems, especially in computer vision and natural language processing, because they can identify intricate patterns in the data that are not readily apparent to humans. Even so, the complexity that makes these algorithms accurate also makes them less transparent.

- Rapid conclusions. Black box models are often based on a fixed set of rules and equations, making them quick to run and easy to optimize. For example, calculating the area under a curve using a least-squares fit algorithm might generate the right answer even if the model doesn't have a thorough understanding of the problem.

- Minimal computing power. Some black box models are fairly simple, so they don't need a lot of computational resources.

- Automation. Black box AI models can automate complex decision-making processes, reducing the need for human intervention. This saves time and resources while improving the efficiency of previously manual-only processes.

In general, the black box AI approach is typically used in deep neural networks, where the model is trained on large amounts of data and the internal weights and parameters of the algorithms are adjusted accordingly. Such models are effective in certain applications, including image and speech recognition, where the goal is to accurately and quickly classify or identify data.

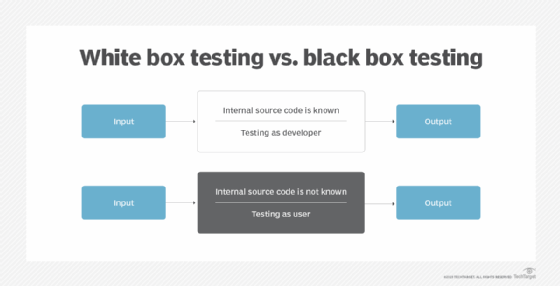

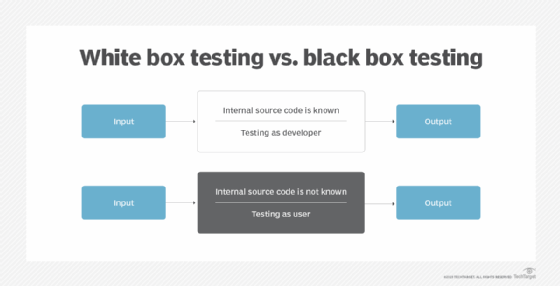

Black box AI vs. white box AI

Black box AI and white box AI are different approaches to developing AI systems. The selection of a certain approach depends on the end system's specific applications and goals. White box AI is also known as explainable AI or XAI.

XAI is created in a way that a typical person can understand its logic and decision-making process. Apart from understanding how the AI model works and arrives at particular answers, human users can also trust the results of the AI system. For all these reasons, XAI is the antithesis of black box AI.

While the inputs and outputs of a black box AI system are known, its internal workings are not transparent or are difficult to comprehend. White box AI is transparent about how it comes to its conclusions. Its results are also interpretable and explainable, so data scientists can examine a white box algorithm and then determine how it behaves and what variables affect its judgment.

Since the internal workings of a white box system are available and easily understood by users, this approach is often used in decision-making applications, such as medical diagnosis or financial analysis, where it's important to know how the AI arrived at its conclusions.
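For contrast, here is a minimal sketch of what a white box decision can look like: a small hand-written decision tree that returns its answer together with every rule it applied. The loan scenario, thresholds and field names (`debt_ratio`, `years_employed`) are hypothetical and chosen only to show the idea.

```python
def assess_loan(applicant):
    """Return (decision, trace), where trace lists every rule that fired."""
    trace = []
    if applicant["debt_ratio"] > 0.4:
        trace.append("debt_ratio > 0.4")
        return "decline", trace
    trace.append("debt_ratio <= 0.4")
    if applicant["years_employed"] >= 2:
        trace.append("years_employed >= 2")
        return "approve", trace
    trace.append("years_employed < 2")
    return "refer to human reviewer", trace

decision, trace = assess_loan({"debt_ratio": 0.3, "years_employed": 5})
print(decision)
print(" -> ".join(trace))
```

Because the path to each outcome is explicit, an auditor or an affected applicant can see exactly which variable drove the result and challenge it if a rule looks wrong.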

Explainable or white box AI is the more desirable AI type for many reasons.

One, it enables the model's developers, engineers and data scientists to audit the model and confirm that the AI system is working as expected. If not, they can determine what changes are needed to improve the system's output.

Two, an AI system that's explainable allows those who are affected by its output or decisions to challenge the outcome, especially if there is the possibility that the outcome is the result of inbuilt bias in the AI model.

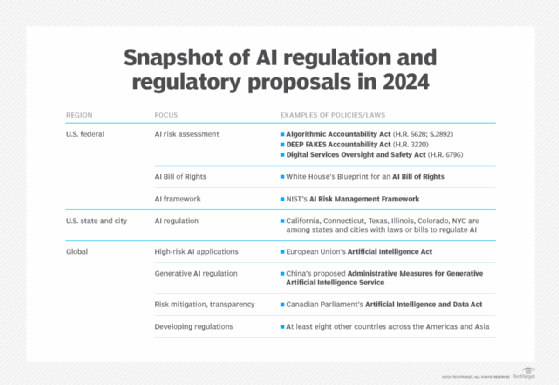

Three, explainability makes it easier to ensure that the system conforms to regulatory standards, many of which have emerged in recent years -- the EU's AI Act is one well-known example -- to minimize the negative repercussions of AI. These include risks to data privacy, AI hallucinations resulting in incorrect output, data breaches affecting governments or businesses, and audio or video deepfakes that spread misinformation.

Finally, explainability is vital to the implementation of responsible AI. Responsible AI refers to AI systems that are safe; transparent; accountable; and used in an ethical way to produce trustworthy, reliable results. The goal of responsible AI is to generate beneficial outcomes and minimize harm.

Here is a summary of the differences between black box AI and white box AI:

- Black box AI is often more accurate and efficient than white box AI.

- White box AI is easier to understand than black box AI.

- Black box models include boosting and random forest models that are highly non-linear in nature and harder to explain.

- White box AI is easier to debug and troubleshoot due to its transparent, interpretable nature.

- Linear models, decision trees and regression trees are all white box AI models.

More on responsible AI

AI that's developed and used in a morally upstanding and socially responsible way is known as responsible AI (RAI). RAI is about making the AI algorithm responsible before it generates results. RAI guiding principles and best practices are aimed at reducing the negative financial, reputational and ethical risks that black box AI can create. In doing so, RAI can assist both AI producers and AI consumers.

AI practices are deemed responsible if they adhere to these principles:

- Fairness. The AI system treats all people and demographic groups fairly and doesn't reinforce or exacerbate preexisting biases or discrimination.

- Transparency. The system is easy to comprehend and explain to both its users and those it will affect. Additionally, AI developers must disclose how the data used to train an AI system is collected, stored, and used.

- Accountability. The organizations and people creating and using AI should be held responsible for the AI system's judgments and decisions.

- Ongoing development. Continual monitoring is necessary to ensure that outputs remain consistent with ethical AI principles and societal norms.

- Human supervision. Every AI system should be designed to enable human monitoring and intervention when appropriate.

When AI is both explainable and responsible, it is more likely to create a beneficial impact for humanity.

White box AI models are becoming more popular because they offer a high level of accountability and transparency.