
Qualtrics expands synthetic research marketing, testing tech

Qualtrics attempts to meld agentic AI and digital twins for A/B testing in marketing and product development, and to improve CX.

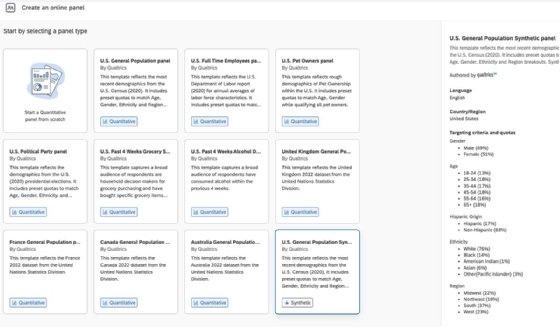

Digital personas and lookalike audiences were the first stabs martech companies took at infusing AI into marketing workflows. Agentic AI takes this a step further to transform how marketers and product development teams work, and Qualtrics has introduced yet another iteration with prebuilt synthetic research panels fueled by a custom large language model (LLM).

Previewed last year and released today at its X4 user conference, Qualtrics synthetic research includes prebuilt synthetic panels for U.S. consumers that answer marketer questions based on demographic audience segments. The panels are powered by a specialized LLM Qualtrics built on its data stores. Models for U.K., Canadian and Australian audiences are planned for release later this year, with more in the works.

"We actually take out some human bias, where people will answer a question one way, but their behavior is different," said Brad Anderson, global head of product, engineering and UX at Qualtrics. "We do tens of thousands of research projects every year on behalf of our customers. We've taken that data and used it to build our own [LLM]."

Qualtrics said that companies such as Navy Federal Credit Union, Google and Dollar Shave Club, among others, have been testing its synthetic research tools.

Marketing and product development tools released today at X4 also include Qualtrics Research Hub features that turn a user's existing research data into a conversational answer engine. AI Summary & Recommendations plumbs content and insights across an organization to provide answers to researchers' questions. Finally, guided research acceleration ports complex research processes into an agent for frontline marketers, potentially reducing the time spent consulting experts to design research studies.

The company also released a host of other agents and experience analysis tools: Experience Agents that address customer service issues in the moment; connectors for contact-center-as-a-service platforms such as Genesys, Nice and Salesforce; and Automated Text Analytics, built on Clarabridge technology, which detects and organizes customer feedback across multiple channels and surfaces emerging topics, trends and issues.

AI research can work -- with humans in the loop

Traditional market research employs human panels to provide feedback for a marketing campaign, marketing messaging, or a product in development. Over the years, large tech companies such as Adobe and Qualtrics -- and many startups, including Simile, Voicepanel and Outset.ai -- have deployed AI to simulate human responses to market research questions.

Querying Qualtrics synthetic research and its AI competitors is much less expensive than designing traditional studies and conducting research with human panels, said Liz Miller, analyst at Constellation Research. It's also faster, reducing what is often a months-long process to seconds. And it can provide answers to personal questions that humans might be reluctant to address.

"I worked in skincare, I've asked consumers about uncomfortable things," Miller said. "These are questions that people don't want to answer. They just don't. And when it gets really sensitive, even having a synthetic answer may be better than flying blind."

Like all AI technologies, though, these digital representations have their limitations. Not only can they introduce bias, but they can also amplify it and turn hallucinations into wrong insights -- or paint unrealistic patterns or preferences. They can echo what the researcher is looking for rather than reflect true human behavior and emotional nuance in context.

The way to correct these issues, Miller and Anderson said, is to start with real-world, human data and continue feeding the model with it as you go along. Otherwise, marketers and product designers risk creating false needs for false products or affirming marketing messaging that won't necessarily work when a campaign rolls out.

"Synthetic research is only as good as the primary research that an AI has been trained on," Miller said. "Organizations like Qualtrics, which have been doing this for decades, give a layer of respectability to the idea of synthetic research. But it is only as good as the questions you ask -- I would say the same thing of traditional research -- if I construct a survey of nothing but double-barreled questions that lead the consumer to what I want them to answer, my data is going to suck."

Don Fluckinger is a senior news writer for Informa TechTarget. He covers customer experience, digital experience management and end-user computing.