What is deep learning and how does it work?

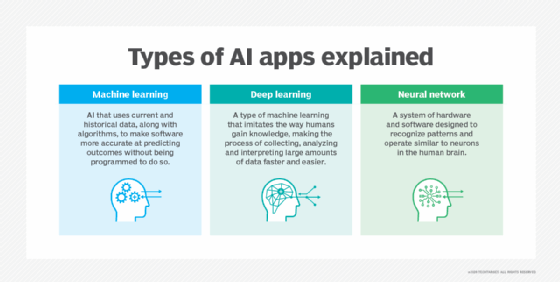

Deep learning is a type of machine learning (ML), itself a branch of artificial intelligence (AI), that trains computers to learn from large data sets in a way loosely modeled on how the human brain processes information.

Deep learning models can be taught to perform classification tasks and recognize patterns in photos, text, audio and other types of data. Deep learning is also used to automate tasks that normally need human intelligence, such as describing images or transcribing audio files.

Where human brains have billions of interconnected neurons that work together to learn information, deep learning uses neural networks constructed from multiple layers of software nodes that work together. Deep learning models are typically trained using large sets of labeled data and neural network architectures.

Deep learning enables a computer to learn by example. To understand deep learning, imagine a toddler whose first word is dog. The toddler learns what a dog is -- and is not -- by pointing to objects and saying the word dog. The parent says, "Yes, that is a dog," or "No, that isn't a dog." As the toddler continues to point to objects, they become more aware of the features that all dogs possess. What the toddler is doing, without knowing it, is clarifying a complex abstraction: the concept of a dog. They're doing this by building a hierarchy in which each level of abstraction is created with the knowledge that was gained from the preceding layer of the hierarchy.

Why is deep learning important?

Deep learning has various business use cases, including data analysis and generating predictions. It's also an important element of data science, which includes statistics and predictive modeling. It benefits data scientists who are tasked with collecting, analyzing and interpreting large amounts of data because it makes that process faster and easier.

Deep learning requires both large amounts of labeled data and substantial computing power. If an organization can accommodate both needs, deep learning can be used in areas such as digital assistants, fraud detection and facial recognition. Deep learning can also achieve high recognition accuracy, which is crucial for applications where safety is a major factor, such as autonomous cars or medical devices.

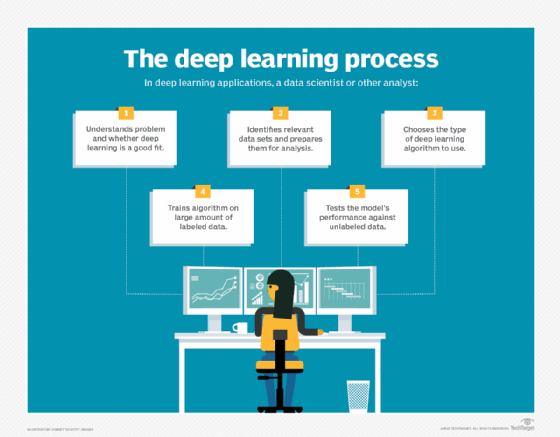

How deep learning works

Computer programs that use deep learning go through much the same process as a toddler learning to identify a dog. Deep learning programs have multiple layers of interconnected nodes, with each layer building upon the last to refine and optimize predictions and classifications. Each layer applies nonlinear transformations to its input, and the program uses what it learns to create a statistical model as output. Iterations continue until the output has reached an acceptable level of accuracy. The number of processing layers through which data must pass is what inspired the label deep.
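
The layered structure described above can be sketched in a few lines of Python. This is a minimal illustration, not a production model: the layer sizes, the weight values and the choice of ReLU and sigmoid as the nonlinear functions are all assumptions made for the example.

```python
import math

def relu(x):
    return max(0.0, x)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases, activation):
    """One layer: a weighted sum per node, followed by a nonlinear activation."""
    return [
        activation(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

# Hypothetical, hand-picked weights: 3 inputs -> 2 hidden nodes -> 1 output node.
hidden_w = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]
hidden_b = [0.0, 0.1]
output_w = [[1.2, -0.7]]
output_b = [0.05]

def forward(features):
    """Pass the input through each layer in turn; each layer builds on the last."""
    hidden = dense(features, hidden_w, hidden_b, relu)
    return dense(hidden, output_w, output_b, sigmoid)[0]

score = forward([1.0, 0.5, -1.0])  # a value between 0 and 1, e.g. "probability of dog"
print(round(score, 4))
```

In a real system, the weights would not be written by hand. They would be learned from training data over many iterations.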

Backpropagation is the crucial algorithm used to train neural networks. It calculates the gradients of the loss function with respect to the network's weights -- the parameters that influence the network's output and performance. Those gradients are then used to adjust the weights to minimize errors and improve accuracy.
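
To make the idea concrete, here is a small sketch of backpropagation on a deliberately tiny network with one input, one hidden node and one output node. The network shape, the squared-error loss, the starting weights and the learning rate are assumptions chosen for illustration. Real networks apply the same chain-rule steps across many nodes and layers.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Tiny network: 1 input -> 1 hidden sigmoid node -> 1 sigmoid output."""
    w1, b1, w2, b2 = params
    h = sigmoid(w1 * x + b1)
    y = sigmoid(w2 * h + b2)
    return h, y

def loss(params, x, target):
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2

def backprop(params, x, target):
    """Apply the chain rule from the output back toward the input."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    d_z2 = (y - target) * y * (1.0 - y)   # gradient at the output node's weighted sum
    d_w2, d_b2 = d_z2 * h, d_z2
    d_z1 = d_z2 * w2 * h * (1.0 - h)      # error passed back to the hidden node
    d_w1, d_b1 = d_z1 * x, d_z1
    return [d_w1, d_b1, d_w2, d_b2]

params = [0.4, -0.1, 0.7, 0.2]            # hypothetical starting weights
x, target = 1.5, 1.0
learning_rate = 0.5

initial_loss = loss(params, x, target)
for _ in range(200):
    grads = backprop(params, x, target)
    # Move each weight a small step against its gradient.
    params = [p - learning_rate * g for p, g in zip(params, grads)]
final_loss = loss(params, x, target)

print(initial_loss, final_loss)
```

Each pass computes how much every weight contributed to the error and nudges it in the opposite direction, so the loss shrinks over the iterations.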

In traditional ML, the programmer must be extremely specific when telling the computer what types of things it should look for to decide whether an image contains a dog. This laborious process is called feature extraction, and the computer's success rate depends heavily on the programmer's ability to accurately define a feature set for dog. The advantage of deep learning is that the program builds the feature set by itself, without a programmer having to define the features by hand.

Initially, the computer program might be provided with training data -- a set of images for which a human has labeled each image dog or not dog with metatags. The program uses the information it receives from the training data to create a feature set for dog and build a predictive model. In this case, the model the computer first creates might predict that anything in an image that has four legs and a tail should be labeled dog. Of course, the program isn't aware of the labels four legs or tail. It simply looks for patterns of pixels in the digital data. With each iteration, the predictive model becomes more complex and more accurate.

Unlike the toddler, who takes weeks or even months to understand the concept of dog, a computer program that uses deep learning algorithms can be shown a training set and sort through millions of images, accurately identifying which images have dogs in them, within a few minutes.

To achieve an acceptable level of accuracy, deep learning programs require access to immense amounts of training data and processing power, neither of which was easily available to programmers until the era of big data and cloud computing. Because deep learning programming can create complex statistical models directly from its own iterative output, it can create accurate predictive models from large quantities of unlabeled, unstructured data.

Deep learning methods

Various methods can be used to create strong deep learning models. These techniques include learning rate decay, transfer learning, training from scratch and dropout.

Learning rate decay

The learning rate is a hyperparameter -- a factor that defines the system or sets conditions for its operation prior to the learning process -- that controls how much change the model experiences in response to the estimated error every time the model weights are altered. Learning rates that are too high can result in unstable training processes or the learning of a suboptimal set of weights. Learning rates that are too small can produce a lengthy training process that has the potential to get stuck.
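
The effect of the learning rate can be seen on a toy problem. The sketch below is illustrative only: it uses plain gradient descent on the hypothetical loss `w**2`, and the function name, starting value and three rates are assumptions chosen for the demonstration.

```python
def gradient_descent(learning_rate, steps=50, start=5.0):
    """Minimize loss(w) = w**2, whose gradient is 2*w, and return the final w."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2.0 * w
    return w

too_high = gradient_descent(1.1)      # overshoots the minimum; |w| grows every step
reasonable = gradient_descent(0.1)    # shrinks steadily toward the minimum at 0
too_low = gradient_descent(0.0001)    # barely moves away from 5.0 in 50 steps

print(too_high, reasonable, too_low)
```

With the highest rate, each update jumps past the minimum and lands farther away than before. With the lowest rate, the weight has hardly changed after 50 updates.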

The learning rate decay method -- also called learning rate annealing or adaptive learning rate -- is the process of adapting the learning rate to increase performance and reduce training time. The easiest and most common adaptations of the learning rate during training include techniques to reduce the learning rate over time.

Common techniques in the learning rate decay method include the following:

- Step decay. Reduces the learning rate by a factor at specific intervals.

- Exponential decay. Continuously decreases the learning rate at an exponential rate.

- 1/t decay. Reduces the learning rate in inverse proportion to the iteration number.
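
The three schedules listed above can each be written as a short function. The formulas below are common textbook forms rather than the only possible definitions, and the parameter names and default values (`drop`, `epochs_per_drop`, `k`) are assumptions for this sketch.

```python
import math

def step_decay(initial_lr, epoch, drop=0.5, epochs_per_drop=10):
    """Multiply the rate by `drop` once every `epochs_per_drop` epochs."""
    return initial_lr * drop ** (epoch // epochs_per_drop)

def exponential_decay(initial_lr, epoch, k=0.1):
    """Shrink the rate smoothly: lr = lr0 * exp(-k * epoch)."""
    return initial_lr * math.exp(-k * epoch)

def inverse_time_decay(initial_lr, epoch, k=0.1):
    """1/t decay: lr = lr0 / (1 + k * epoch)."""
    return initial_lr / (1.0 + k * epoch)

for epoch in (0, 10, 20):
    print(
        epoch,
        step_decay(0.1, epoch),
        exponential_decay(0.1, epoch),
        inverse_time_decay(0.1, epoch),
    )
```

All three start at the same rate and reduce it over time. They differ in whether the reduction happens in sudden steps or gradually.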

Transfer learning

This process involves fine-tuning a previously trained model on a new but related problem. It requires access to the internals of a preexisting network. First, users feed the existing network new data containing previously unknown classifications. Once adjustments are made to the network, new tasks can be performed with more specific categorizing abilities.

This method has the advantage of requiring much less data than others, thus reducing computation time to minutes or hours.
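
One common way to apply transfer learning is to keep the pretrained layers fixed and train only a new output layer. The sketch below assumes that approach. The "pretrained" weights, the four labeled examples and all function names are made up for illustration and stand in for a real pretrained network and data set.

```python
import math

# Stand-in for the body of a pretrained network. These weights are frozen (never updated).
FROZEN_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]   # hypothetical values

def pretrained_features(x):
    """Frozen layers: reuse what the original model already learned."""
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in FROZEN_WEIGHTS]

def predict(head, x):
    feats = pretrained_features(x)
    z = sum(w * f for w, f in zip(head["w"], feats)) + head["b"]
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune(head, data, learning_rate=0.5, epochs=200):
    """Train only the new output layer on the small task-specific data set."""
    for _ in range(epochs):
        for x, label in data:
            feats = pretrained_features(x)
            error = predict(head, x) - label   # gradient of the log loss at the output
            head["w"] = [w - learning_rate * error * f for w, f in zip(head["w"], feats)]
            head["b"] -= learning_rate * error
    return head

# A handful of labeled examples for the new task (made up for illustration).
new_task = [([2.0, 0.0], 1), ([1.5, 0.2], 1), ([0.0, 2.0], 0), ([0.3, 1.6], 0)]
head = fine_tune({"w": [0.0, 0.0], "b": 0.0}, new_task)

print([round(predict(head, x), 3) for x, _ in new_task])
```

Because only the small output layer is trained, a few examples and a short training run are enough here, which mirrors the data and time savings described above.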

Training from scratch

This method requires a developer to collect a large, labeled data set and configure a network architecture that can learn the features and model. This technique is especially useful for new applications, as well as applications with many output categories. However, it's a less common approach, as it requires inordinate amounts of data and computational resources, causing training to take days or weeks.

Dropout

This method attempts to solve the problem of overfitting in networks with large numbers of parameters by randomly dropping units and their connections from the neural network during training.

The dropout method has been shown to improve the performance of neural networks on supervised learning tasks in areas such as speech recognition, document classification and computational biology.
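
A minimal sketch of dropout follows. It uses the widely used "inverted dropout" variant, which rescales the surviving units during training so nothing needs to change at inference time. That variant, the function signature and the sample values are assumptions for this example, and `drop_prob` is assumed to be less than 1.

```python
import random

def dropout(activations, drop_prob, training, rng=random):
    """Inverted dropout: zero units at random during training and rescale the rest."""
    if not training or drop_prob == 0.0:
        return list(activations)        # inference uses every unit unchanged
    keep_prob = 1.0 - drop_prob
    return [
        a / keep_prob if rng.random() < keep_prob else 0.0
        for a in activations
    ]

rng = random.Random(0)                  # fixed seed so the sketch is repeatable
hidden = [0.2, 1.5, 0.7, 0.9, 1.1, 0.4]
train_out = dropout(hidden, drop_prob=0.5, training=True, rng=rng)
eval_out = dropout(hidden, drop_prob=0.5, training=False)

print(train_out)
print(eval_out)
```

Because a different random subset of units is silenced on every training pass, the network can't rely too heavily on any single unit.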

Deep learning neural networks

A type of advanced ML algorithm, known as an artificial neural network, underpins most deep learning models. As a result, deep learning is sometimes referred to as deep neural learning or deep neural networking.

Deep neural networks (DNNs) consist of input, hidden and output layers. The nodes of the input layer receive the input data. The number of nodes required in the output layer depends on the output. For example, yes or no outputs only need two nodes, while outputs with more categories require more nodes. The hidden layers sit between the input and output layers and process and pass data to other layers in the neural network.
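
The relationship between output nodes and categories can be sketched as follows. The example assumes a softmax function is used to turn the raw output-node scores into probabilities, which is a common choice but not one the text above specifies. The scores and label names are made up.

```python
import math

def softmax(scores):
    """Turn raw output-node scores into probabilities that sum to 1."""
    peak = max(scores)                           # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify(scores, labels):
    """Pick the label whose output node has the highest probability."""
    probs = softmax(scores)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], probs[best]

# Two output nodes for a yes/no decision, four for a four-category decision.
yes_no = classify([2.0, 0.5], ["yes", "no"])
animal = classify([0.1, 2.2, -1.0, 0.3], ["dog", "cat", "bird", "fish"])

print(yes_no, animal)
```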

Neural networks come in several different forms, including the following:

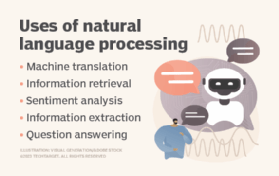

- Recurrent neural networks. RNNs are often used in speech recognition and natural language processing (NLP).

- Convolutional neural networks. CNNs are often used for analyzing visual data.

- Generative adversarial networks. GANs are often used for anomaly detection, data augmentation and image-to-image translation.

- Multilayer perceptrons. MLPs are often used for image recognition, NLP and time-series predictions.

- Feedforward neural networks. Information in a feedforward neural network flows in one direction -- from the input layers to the output layers.
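
As one concrete example from the list above, the core operation inside a CNN is sliding a small filter across an image. The sketch below is a simplified illustration with no padding and a stride of 1. The image and the filter values are made up, and as in most deep learning libraries, the operation is technically a cross-correlation.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A 4x4 image with a dark left half and a bright right half.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-written vertical-edge filter; a trained CNN would learn such values itself.
kernel = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is nonzero only in the middle column, where the image changes from dark to bright. This is how a filter picks out a visual pattern such as an edge.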

Each type of neural network has benefits for specific use cases. However, they all function in somewhat similar ways -- by feeding data in and letting the model figure out for itself whether it has made the right interpretation or decision about a given data element.

Neural networks involve a trial-and-error process, so they need massive amounts of data on which to train. It's no coincidence neural networks became popular only after most enterprises embraced big data analytics and accumulated large stores of data. Because the model's first few iterations involve somewhat educated guesses on the contents of an image or parts of speech, the data used during the training stage must be labeled so the model can see if its guess was accurate. This means unlabeled data is less helpful for this kind of training.

Unlabeled data can be analyzed by such a model once it has been trained and reaches an acceptable level of accuracy, but the model can't be trained this way on unlabeled data alone.

Deep learning benefits

The benefits of deep learning include the following:

- Automatic feature learning. Deep learning systems can perform feature extraction automatically, meaning they don't need a person to define new features by hand.

- Pattern discovery. Deep learning systems can analyze large amounts of data and uncover complex patterns in images, text and audio and can derive insights that the system might not have been trained on.

- Process volatile data sets. Deep learning systems can categorize and sort data sets that have large variations in them, such as in transaction and fraud systems.

- Process multiple data types. Deep learning systems can process both structured and unstructured data.

- Accuracy. Additional node layers aid in optimizing deep learning models for accuracy.

- Can do more than other ML methods. Compared with typical ML processes, deep learning needs less human intervention and can analyze data that other ML methods handle less well.

- Scalability with data. Deep learning models perform increasingly well as the volume of data grows. Unlike traditional ML algorithms, which can reach a performance plateau after a certain threshold, deep learning models keep improving with more data, making them especially suitable for applications involving large data sets.

- Cost effectiveness. While training deep learning models can be costly, they can help businesses save on expenses by reducing errors and defects. The cost of inaccurate predictions can exceed the training expense, and on many tasks deep learning models make fewer errors than traditional ML models.

Key uses for deep learning

Because deep learning models process information in ways similar to the human brain, they can be applied to many tasks people do. Deep learning is currently used in most common image recognition tools, NLP and speech recognition software.

Use cases today for deep learning include all types of big data analytics applications, especially those focused on language translation, medical imaging and diagnosis, stock market trading signals, network security and image recognition.

Use cases of deep learning include the following:

- Customer experience. Deep learning models are already being used for chatbots. As the technology continues to mature, deep learning is expected to be implemented in various businesses to improve customer experience (CX) and increase customer satisfaction.

- Text generation. Machines are being taught the grammar and style of a piece of text and are then using this model to automatically create a completely new text matching the proper spelling, grammar and style of the original text.

- Aerospace and military. Deep learning is being used to detect objects in satellite imagery to identify areas of interest, as well as safe or unsafe zones for troops.

- Industrial automation. Deep learning is improving worker safety in environments such as factories and warehouses through industrial automation services that automatically detect when a worker or object is getting too close to a machine.

- Adding color. Color can be added to black-and-white photos and videos using deep learning models. In the past, this was an extremely time-consuming, manual process.

- Computer vision. Deep learning has greatly enhanced machine vision, providing computers with extreme accuracy for object detection and image classification, restoration and segmentation.

- Recommendation engines. Applications can employ deep learning to track user behavior and generate customized suggestions to assist consumers in discovering new products and services. For example, Netflix, Peacock and other media and entertainment organizations use deep learning to provide personalized video recommendations.

- Online security. Deep learning algorithms can protect against fraud by identifying security issues. For example, these algorithms can detect suspicious login attempts, send notifications and alert users if their chosen password isn't strong enough.

Limitations and challenges

Deep learning systems come with the following downsides as well:

- Only learn through observations. Deep learning systems only know what was in the data on which they trained. If a user has a small amount of data or it comes from one specific source that isn't necessarily representative of the broader functional area, the models don't learn in a generalizable way.

- Potential for biases. If a model trains on data that contains biases, the model reproduces those biases in its predictions. Bias in machine learning has been a vexing problem for deep learning programmers, as models learn to differentiate based on subtle variations in data elements. Often, the factors a model determines to be important aren't made clear to the programmer. This means, for example, a facial recognition model might make determinations about people's characteristics based on things such as race or gender without the programmer being aware.

- Learning rate sensitivity. If the learning rate is too high, the model converges too quickly, producing a less-than-optimal solution. If the rate is too low, the process can get stuck, making it even harder to reach a solution.

- Hardware requirements. Multicore high-performance graphics processing units (GPUs) and other similar processing units are needed to train models efficiently and in a reasonable amount of time. However, these units are expensive and use large amounts of energy. Other hardware requirements include ample RAM and a hard disk drive or solid-state drive for storage.

- Requires large amounts of data. The more powerful and accurate models need more parameters, which, in turn, require more data or large amounts of continuous data.

- Lack of multitasking. Once trained, deep learning models become inflexible and can't handle multitasking. They can deliver efficient and accurate results but only to one specific problem. Even solving a similar problem would require retraining the system.

- Lack of reasoning. Applications that require reasoning -- such as programming or applying the scientific method -- as well as long-term planning and algorithm-like data manipulation are beyond what current deep learning techniques can do reliably, even with large amounts of data.

Deep learning on premises vs. cloud

When considering deep learning infrastructure, organizations often debate whether to go with cloud-based services or on-premises options. Both options have pros and cons.

Cloud-based deep learning offers scalability and access to advanced hardware such as GPUs and tensor processing units, making it suitable for projects with varying demands and rapid prototyping. It also eliminates the need for large upfront investments in hardware.

On-premises deep learning options provide greater control over data security and can be more cost-effective over time for consistent, high-capacity needs. These setups also enable customized configurations.

Many organizations also opt for a third, hybrid option, in which models are tested on premises but deployed in the cloud to gain the benefits of both environments. Ultimately, the choice between on-premises and cloud-based deep learning depends on factors such as budget, scalability, data sensitivity and the specific project requirements.

Deep learning vs. machine learning

Both deep learning and ML are subsets of AI, but they take different approaches. The main differences between the two include the following:

- Deep learning is a subset of ML that differentiates itself by the way it solves problems.

- ML involves training algorithms to learn from data and make predictions or decisions without being explicitly programmed for specific tasks.

- ML requires a domain expert to identify the most relevant features.

- Deep learning learns features incrementally, which reduces the need for domain expertise. However, deep learning algorithms take longer to train than traditional ML algorithms, which often need only a few seconds to a few hours. The reverse can be true during testing. Deep learning algorithms can take less time to run tests than some ML algorithms, whose test time increases along with the size of the data.

- ML doesn't require the same costly, high-end machines and high-performing GPUs that deep learning does.

- Instances where deep learning becomes preferable include situations where there's a large amount of data, a lack of domain understanding for feature introspection or complex problems, such as speech recognition and NLP.

- Many data scientists choose traditional ML over deep learning due to its superior interpretability, or the ability to make sense of the generated results. ML algorithms are also preferred when the amount of data is small.

Potential applications of deep learning in the future

Deep learning is used in both emerging and common technologies. The following are some key areas where deep learning is expected to make strides:

- Healthcare innovations. Deep learning is used in the medical field to detect delirium in critically ill patients. Cancer researchers are using deep learning to automatically detect the presence of cancer cells. Deep learning is also set to transform healthcare by improving diagnostic accuracy and providing personalized treatments. For example, future applications could involve predictive analytics for disease outbreaks, real-time health monitoring via wearable devices and AI-driven virtual health assistants offering tailored medical advice.

- Autonomous cars. Self-driving cars use deep learning to automatically detect objects, such as road signs or pedestrians.

- Social media. Social media platforms can use deep learning for content moderation, combing through images and audio.

- Transfer learning and few-shot learning. Transfer learning, which involves applying a model trained on one task to a related task, is becoming more popular. The emerging field of few-shot learning, which focuses on training models with minimal labeled data, could reduce the need for extensive data preprocessing and large training data sets.

- Smart cities. Deep learning can propel the development of smart cities by optimizing traffic management, energy usage and public safety. AI systems can analyze traffic patterns to minimize congestion, manage energy resources more effectively and enhance surveillance for improved security.

- Edge AI. There has been a growing trend to deploy AI at the edge due to the increase in the computational power of devices. For example, deep learning models are increasingly utilized on mobile devices, internet of things devices and on-premises servers. This reduces latency and enhances privacy by enabling local data processing.

- Deep dreaming. Deep dreaming uses deep neural networks to generate new images from a set of input images. The results are often surreal or dream-like, making the technique well suited to creating new artwork or enhancing existing images.

- Emotional intelligence. Although computers can't replicate human emotions, deep learning can improve their ability to understand moods by analyzing patterns such as tone shifts and facial expressions. Some companies are using deep learning to interpret vocal and facial signals, while others analyze customer service interactions to assess emotional intelligence and provide real-time feedback for better engagement.

- Interpretable AI. Interpretable AI is the future of deep learning and refers to models and systems in AI whose decision-making processes can be understood and explained by humans. Interpretable AI enhances transparency, trust and accountability in AI systems, enabling users to understand how models reach their conclusions, making it easier to identify and correct errors or biases. As deep learning becomes more integrated into critical applications, interpretability will be crucial for ethical and effective use.
