How to assess and prioritize insider threat risk

Dealing with the human element in security is tough, but critical. This primer describes the types of insider threats and how to use a risk matrix to assess and rank them by importance.

Many published reports and surveys suggest that people within your organization are responsible for anywhere between 30% and 90% of your cybersecurity woes. That's a big range, and even the lower end is a large enough percentage to worry about. But it's also a very broad statement, which is perhaps why no one seems able to agree on how much risk humans within the organization actually pose.

So, let's go on a journey to assess the real threats and when you need to focus on people more than the latest advanced persistent threat (APT).

What is an insider threat?

Many times, the human threat is referred to as the insider threat. What comes to mind when you see the words "insider threat?" Chances are, you're picturing Edward Snowden. He introduced the Snowden effect, becoming the poster child for anyone selling insider threat products for years. The story was simple: If a rogue insider could cause immense damage to the NSA, what chance would a normal organization stand against their own Snowdens?

But insider threats are nothing new. During the height of the Cold War, many spies defected to opposing sides, taking with them national secrets and expertise right from under the noses of their spy bosses. As a result, many counter techniques were developed and deployed to keep an eye on insiders with valuable knowledge or skills to prevent would-be defectors from escaping or passing over information.

But many organizations don't hold state secrets, and their employees have no rival country to defect to. So, for organizations outside government, let's try defining an insider threat.

First, let's define an insider. One way of defining it could be any individual with legitimate access within the corporate perimeter -- be it physical or virtual -- including permanent and temporary employees and third-party contractors, as well as third-party support companies and outsourced service providers.

Next, let's define what we mean by a threat. Typically, a threat is defined as something or someone exploiting a vulnerability in a target. In this case, we should reframe it away from the traditional definition to someone who abuses the trust the organization places in them.

Therefore, we can summarize the insider threat as someone who misuses the legitimate access granted to them for the purposes of self-interest that could potentially harm the organization.

However, this definition hasn't taken into consideration motive or intent. Differentiating malicious insider behavior from user error, or even legitimate activity, can be a challenge.

For example, a user is seen downloading several files onto their personal device. It could be they are about to resign and want to take some information with them to their next job. Alternatively, it could be a hardworking and loyal employee who wants to catch up with some work over the weekend. Or worse still, it could be that the user's account has been compromised and is under the control of an attacker masquerading as an insider.

Types of insiders

With this in mind, we can break down insiders into three broad categories:

- Non-malicious insiders are those users who perform actions that have no ill intent but can nevertheless cause harm to an organization. Such actions could include user error, such as running commands against a production environment believing it is a development environment, or losing a company laptop. It can also cover users who are trying to do their jobs by using unapproved tools. Shadow IT falls into this category: users procure or use a cloud application, such as a file-sharing app, to increase productivity but inadvertently expose the company.

- Malicious users are aware of their actions and the negative implications on the organization, yet still pursue those actions. This grouping includes a broad set of users:

  - Users who are leaving the organization may harvest information they believe would be useful to them in future jobs. While they are often aware their actions are in violation of company policy, they often justify these actions with a sense of entitlement.

  - Disgruntled users may seek to cause disruption or damage to company assets. Activists or employees who believe whistleblower processes are insufficient may also react in a similar manner.

  - At the highest level are employees engaged in corporate espionage, providing intellectual property or other sensitive information to competitors, criminal gangs or nation-state sponsored actors.

- Compromised insiders are the final, and often overlooked, category. Typically, these users' credentials have been guessed or captured as part of a targeted attack. Although the actor behind the account is not an employee, activity carried out with legitimate credentials appears to come from one.

The insider risk matrix

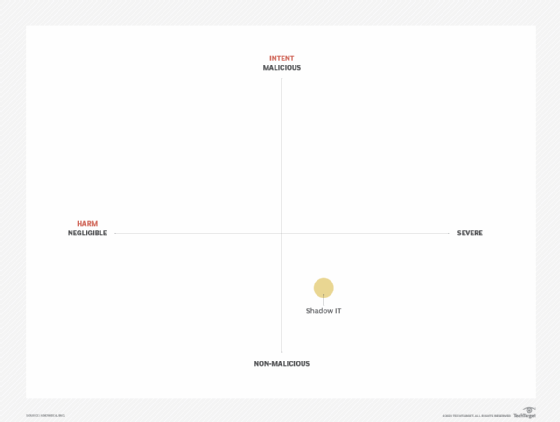

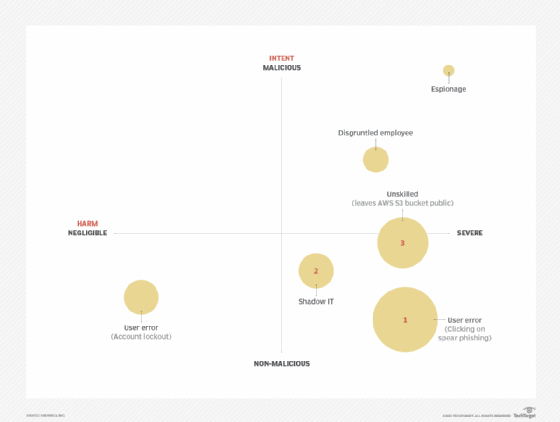

Insider risk factors can be represented in a matrix in which intent is measured against harm. You can plot insider threat scenarios to visualize which are more severe overall by seeing how far they are positioned up and to the right.

For example, a company may deem that the use of shadow IT could allow sensitive company data to be accessed by unauthorized people or exposed during a SaaS provider breach. In this case, the intent would not be malicious because the user was trying to perform their job, yet the consequences could be significant.

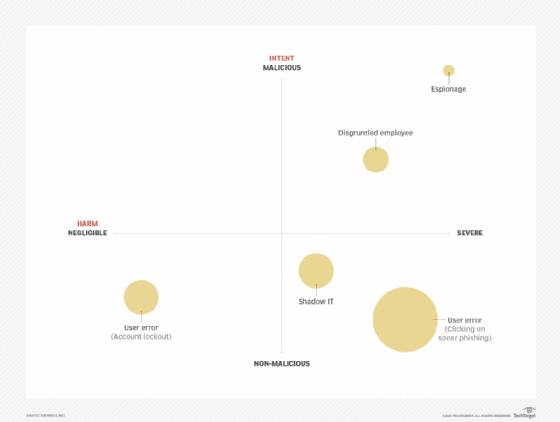

From a risk perspective, intent vs. harm alone doesn't tell the full story. The matrix is missing likelihood, which the bubble chart represents as bubble size.

The bubble size (likelihood) helps you visualize that while espionage can have the biggest impact and is undertaken with the most malicious intent, the likelihood of it occurring is potentially less than that of a disgruntled employee or even shadow IT proliferating within the enterprise.

User error encompasses many activities, none of which are malicious in nature. However, the harm these activities cause could range from negligible (such as an account lockout) to severe (allowing ransomware to infect the organization by clicking on a phishing link).
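As a rough illustration of the matrix, the sketch below places a few of the scenarios named above on intent, harm and likelihood axes. The 1-to-5 scales, the specific numbers, and the `Scenario` and `risk_score` names are assumptions made up for this example, not figures from any report. Multiplying harm by likelihood is one common scoring convention; intent is kept as a separate axis rather than folded into the score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    intent: int      # 1 = accidental ... 5 = deliberately malicious (assumed scale)
    harm: int        # 1 = negligible ... 5 = severe (assumed scale)
    likelihood: int  # 1 = rare ... 5 = frequent (assumed scale; the bubble size)


def risk_score(s: Scenario) -> int:
    """Harm weighted by likelihood; intent stays a separate axis."""
    return s.harm * s.likelihood


# Hypothetical placements, for illustration only.
scenarios = [
    Scenario("espionage", intent=5, harm=5, likelihood=1),
    Scenario("disgruntled employee", intent=4, harm=4, likelihood=2),
    Scenario("shadow IT", intent=1, harm=4, likelihood=4),
    Scenario("account lockout (user error)", intent=1, harm=1, likelihood=5),
]

# Highest score first; ties keep their original order.
ranked = sorted(scenarios, key=risk_score, reverse=True)
for s in ranked:
    print(f"{s.name:30} intent={s.intent} harm={s.harm} "
          f"likelihood={s.likelihood} score={risk_score(s)}")
```

With these made-up numbers, shadow IT outranks espionage: it is low on intent but scores highest once likelihood is taken into account.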

Detecting and preventing insider threats

Perimeter and preventative controls are largely ineffective in detecting or responding to insider threats, as by their very nature these are threats from within.

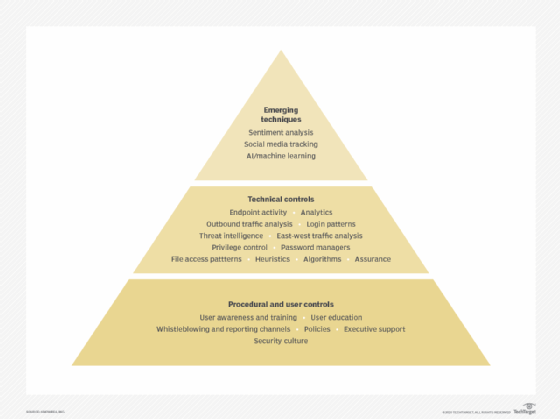

As a result, different techniques should be deployed to address each type of threat based on how it manifests. As with many security controls, the concept of defense in depth can be applied, whereby a collection of procedural, user and technical controls is used to detect, and even prevent, insider threats from materializing.

You can visualize the broad categories of controls in the pyramid below. Procedural and user controls underpin the entire structure; without these in place to form the solid foundation, nothing above them can work.

Procedural and user controls

Procedural and user controls are important for obtaining management support and ensuring policies are acceptable from a legal as well as cultural perspective. Privacy is a discussion topic that comes up frequently, and transparency into how an organization uses the data it collects about employees is required to retain trust. This layer of controls also provides a framework that allows aggrieved employees to escalate issues without resorting to conducting harmful acts against the organization.

Finally, this layer also raises awareness so that employees can detect and report suspicious activity -- such as a phishing email, a suspicious phone call, a stranger in the office without a badge, or a random USB drive dropped in the parking lot.

Combined, procedural and user controls contribute to building and maintaining a positive security culture, which should be one of the goals of most organizations.

Technical controls

Technical controls, represented in the middle layer of the pyramid, have seen a lot of development in recent years. These tools focus on analytical techniques to identify suspicious user activity. Primarily, these controls provide baselines for user activity against past actions, as well as baselines against peer activity to identify outliers. The baselines can be set against logins (times/locations), file or system access, network traffic, or even endpoint activity, among others.
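The baselining idea can be sketched with a simple z-score: compare today's activity with the user's own history and with what their peers did over the same period. Everything here is an assumption for illustration, including the `z_score` and `is_outlier` names, the threshold of 3 standard deviations, and the sample download counts. Commercial tools use far richer models.

```python
from statistics import mean, pstdev


def z_score(value: float, baseline: list[float]) -> float:
    """How many standard deviations `value` sits from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A flat baseline: any deviation at all is infinitely unusual.
        return 0.0 if value == mu else float("inf")
    return (value - mu) / sigma


def is_outlier(value: float, own_history: list[float],
               peer_values: list[float], threshold: float = 3.0) -> bool:
    """Flag activity that is unusual against BOTH the user's past and their peers."""
    return (abs(z_score(value, own_history)) > threshold
            and abs(z_score(value, peer_values)) > threshold)


# Hypothetical daily file-download counts.
own_history = [10, 12, 9, 11, 13, 10, 12]   # this user's previous days
peers_today = [8, 14, 11, 9, 12]            # colleagues in the same role, today

print(is_outlier(60, own_history, peers_today))  # far above both baselines
print(is_outlier(12, own_history, peers_today))  # an ordinary day
```

Requiring both baselines to agree is a design choice that trades some sensitivity for fewer false alarms, for example when a whole team legitimately downloads more than usual at quarter end.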

Threat intelligence can also be an asset in understanding whether outbound traffic is communicating with known command and control servers or other suspicious transfers.
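A minimal sketch of that check, assuming outbound connections are available as (user, destination IP) pairs and the intelligence feed is a list of networks. The `KNOWN_C2_NETWORKS` list and `flag_c2_connections` function are hypothetical, and the addresses are reserved documentation ranges standing in for real indicators.

```python
import ipaddress

# Placeholder indicators using reserved documentation ranges,
# standing in for a real threat-intelligence feed.
KNOWN_C2_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.17/32"),
]


def flag_c2_connections(connections):
    """Return the (user, destination) pairs whose destination matches an indicator."""
    flagged = []
    for user, dest in connections:
        addr = ipaddress.ip_address(dest)
        if any(addr in net for net in KNOWN_C2_NETWORKS):
            flagged.append((user, dest))
    return flagged


# Hypothetical outbound connection log.
outbound = [
    ("alice", "192.0.2.10"),
    ("bob", "203.0.113.45"),
    ("carol", "198.51.100.17"),
]

print(flag_c2_connections(outbound))
```

A match only says that a machine or account talked to a suspicious destination. It does not say whether the user is malicious or compromised, which is where the intent question from earlier comes back in.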

Emerging techniques

Alongside threat intelligence, several new approaches are being developed that can directly or indirectly assist in finding insiders. Social media channels play an ever-increasing role, both in legitimate and not-so-legitimate communications. The ability to monitor these channels, particularly in instances where they are enhanced by specific threat intelligence, greatly increases the chances of isolating activity on social channels that were formerly out of band.

Sentiment analysis is also garnering more interest. It analyzes employee data to understand attitudes and identify where an employee may be disgruntled or have activist tendencies that are contrary to business values.

Assess your threat impact

When we look at the controls pyramid, two things jump out. First, the pyramid by no means represents a comprehensive list of controls. Second, and more important, there are way too many controls to address every aspect of human error and insider threats. Organizations that want to apply controls are also faced with competition for resources, compliance initiatives, slow budget cycles, and many other organizational issues.

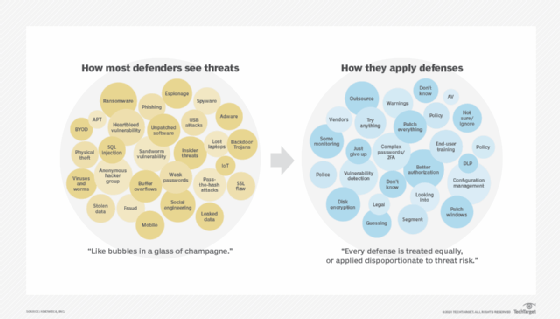

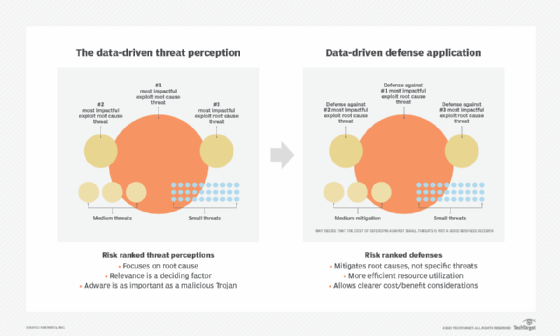

We can address this challenge by putting data in the driver's seat. When we say there are too many threat vectors and too many security controls that need to be put in place, we are basically looking at all threats equally -- and making the dangerous assumption that all remediation needs to be applied equally as depicted in the figure below.

An equal approach is also an extremely inefficient way of doing security. Paraphrasing George Orwell's Animal Farm, "All threats are equal, but some threats are more equal than others."

Yes, it's prudent to want to reduce exposure to external security threats. But it's arguably more sensible to focus your efforts on discerning what the biggest issues are to your business and investing the largest proportion of security controls and spending in that area.

Data-driven defense forces you to completely adjust your thinking. It requires you to re-examine your entire model of threat perception, focusing on root causes rather than individual security episodes. Root causes are determined by three main factors: data, relevance, and the individual experience of those working within the organization, who possess the relevant security knowledge required to make critical judgments.

Once an organization has determined the pressing root causes requiring remediation, teams can then prioritize resources to counter them.

Prioritizing threats based on data

Armed with our newfound knowledge about root causes, we can take a more data-driven approach to dealing with the human element.

Revisiting our insider risk matrix, we can label our top three threats. In this revised example, users clicking on phishing links are the number one threat, shadow IT is number two, and unskilled users who are prone to making configuration errors (such as leaving AWS S3 buckets exposed to the public) are number three.

This example tells us that non-malicious activity on the user's part is a bigger threat than malicious actions.
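One way to arrive at such a ranking is to tally past incidents by root cause. The sketch below assumes a hypothetical incident log in which each entry carries a root cause, a malicious flag and an impact on an assumed 1-to-5 scale; the entries are invented to mirror the example above, not real data.

```python
from collections import Counter

# Hypothetical incident log: (root cause, malicious?, impact on an assumed 1-5 scale).
incidents = [
    ("phishing click", False, 4),
    ("phishing click", False, 5),
    ("phishing click", False, 3),
    ("shadow IT", False, 4),
    ("shadow IT", False, 3),
    ("misconfiguration", False, 5),
    ("data theft by leaver", True, 4),
]

# Sum impact per root cause so frequent and damaging causes both rise to the top.
weighted = Counter()
for cause, _malicious, impact in incidents:
    weighted[cause] += impact

top_three = weighted.most_common(3)
print(top_three)

# Share of total impact that came from non-malicious activity.
total = sum(impact for _, _, impact in incidents)
non_malicious = sum(impact for _, malicious, impact in incidents if not malicious)
print(f"non-malicious share of impact: {non_malicious / total:.0%}")
```

Summing impact rather than just counting incidents is a judgment call; an organization with better loss data could weight by cost or recovery time instead.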

So, in this case, we need to select security controls that can help nudge users in the right direction, either through better tools or by providing them the relevant and appropriate awareness and training they need to adopt more secure practices.

Remediating insider threats and suspicious activity

New techniques are continually being developed to detect and respond to insider threats, but dealing with humans, particularly trusted employees, requires a different strategy and approach than dealing with malware.

While any suspicious email or file can be relatively easily quarantined or blocked until proven otherwise, employees cannot be suspended or fired based on a couple of indicators or mere suspicion, especially when a large portion of suspicious activity can take place outside the realm of IT systems. This means that organizations need to work with HR and legal departments to determine the best strategy to investigate suspicious activity and how to interact with suspected employees.

Ultimately, it's a delicate balancing act. Technology is not sufficiently advanced to fully understand humans and make rational decisions, which is why, in today's enterprise, everyone has a role to play in ensuring the security of the organization and their colleagues. Neglecting to foster a security culture and ignoring the human element is a mistake no organization can afford to make.

About the author

Javvad Malik is a security awareness advocate at KnowBe4, a blogger, event speaker and industry commentator. He is possibly best known as one of the industry's most prolific video bloggers with a fresh perspective on security that speaks to technical and non-technical audiences alike. Prior to joining KnowBe4, Malik was a security advocate at AlienVault and a senior analyst in the Enterprise Security Practice at 451 Research. Prior to joining 451, he was an independent security consultant, with a career spanning more than 12 years working for some of the largest companies across the financial and energy sectors. In addition to authoring and co-authoring several books, Javvad is a co-founder of the Security B-Sides London conference.