
Top 30 DevOps interview questions and answers for 2021

The need for qualified DevOps job candidates continues to grow as more companies adopt the methodology. Review these DevOps questions and answers to prepare for an interview.

DevOps is gaining momentum in organizations of all types and sizes, resulting in a growing need for qualified individuals to fill various DevOps roles. But breaking into the DevOps field is no small feat, and it inevitably means having to interview for one or more positions. During those interviews, candidates will likely be asked an assortment of questions, and they should be able to respond to them confidently and knowledgeably.

A good way for candidates to prepare for an interview is to review example DevOps interview questions in advance. This can guide them to the type of information they need to know, as well as prepare them for answering questions about a range of topics. To help candidates with this process, this article provides 30 interview questions and their possible answers.

Candidates should keep in mind that DevOps is a broad field that requires a wide range of skills and knowledge. These 30 questions offer a good representation of the kind of information that's important for DevOps candidates to understand and can help them prepare for an interview.

DevOps general knowledge

Interviewers are likely to ask general questions about DevOps to gauge the candidate's understanding of fundamental principles and overall concepts.

1. What is DevOps?

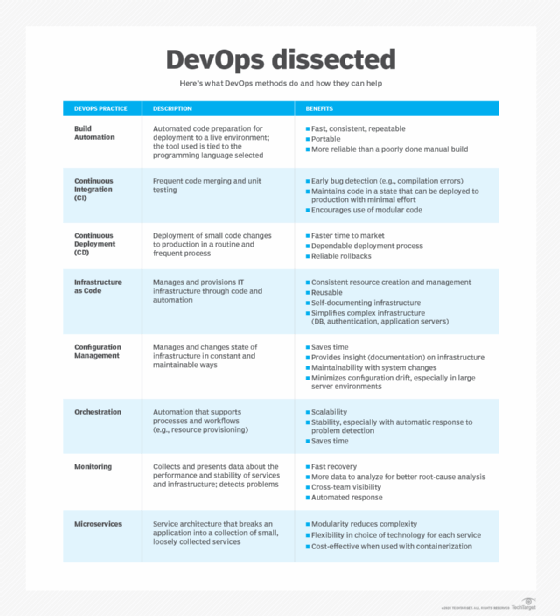

When answering this question, candidates should make it clear what DevOps is and what it is not. DevOps is a cultural shift and the adoption of a specific mindset -- one that emphasizes communication and collaboration among development and IT operations teams, as well as with other stakeholders. DevOps is not an individual, nor is it a specific tool or technology. DevOps typically incorporates common methodologies such as continuous integration, continuous delivery and automation.

2. What problems does DevOps try to solve?

DevOps tries to address many of the issues inherent in traditional application development and delivery methodologies, with the goal of improving operations throughout the application development lifecycle. The following list includes several of the issues that DevOps tries to address.

- The application delivery process is slow, with fixes and updates taking too long.

- Silos between development, operations and other stakeholders make it difficult to work toward common application delivery goals.

- Applications do not run correctly in all environments. They might work fine during development and testing but not when they're deployed in a production environment.

- Problems with an application are often discovered late in the development lifecycle, making them more difficult, time-consuming and costly to resolve.

- The process of identifying and resolving issues can be complex and time-consuming, often leading to unexpected delays and cost overruns.

- Traditional application development and delivery methodologies are full of repetitive and manual tasks that take too long and use up valuable time.

3. What benefits does DevOps offer?

DevOps offers numerous benefits, and candidates should understand what these are and why they make DevOps so valuable. The following list includes several of these benefits:

- Streamlines software delivery and deployment processes

- Reduces the number of silos between IT groups

- Increases communications between team members and other stakeholders

- Incorporates faster feedback, resulting in quicker software improvements

- Automates repetitive, manual tasks, leading to increased efficiency

- Results in less downtime and faster time to market

- Provides an infrastructure for continuous software development and delivery

4. What are some of the challenges that come with implementing DevOps?

Candidates should be aware of the challenges, as well as benefits, of DevOps. The following list describes some of these challenges.

- DevOps can result in IT departmental changes or shifts in personnel and often requires special training or new skills.

- A DevOps environment can raise security and compliance challenges that might require additional resources to address.

- DevOps can be difficult to scale across projects and teams.

- DevOps tools and platforms can be costly and complex, often requiring additional training and support.

- Organizations often implement DevOps while still supporting traditional methodologies, resulting in increased complexity and expense.

- Creating the right DevOps culture can often be a challenge, especially if individuals are resistant to change or if leadership isn't fully onboard.

- Moving from traditional tools and methodologies to DevOps takes careful planning and preparation and can be a complex, time-consuming process, even under the best circumstances.

5. What is shift-left in DevOps?

The concept of shifting left in DevOps is based on the idea that the application development and delivery process can be graphically represented as a workflow that moves from left to right. In traditional approaches to application development, tasks such as testing software are often done later in the development process, nearer to the right end of the graph. But DevOps moves such tasks toward the left end, that is, sooner in the development process. This shift to the left makes it possible to identify issues while they're still more manageable, resulting in faster development cycles and more streamlined operations.

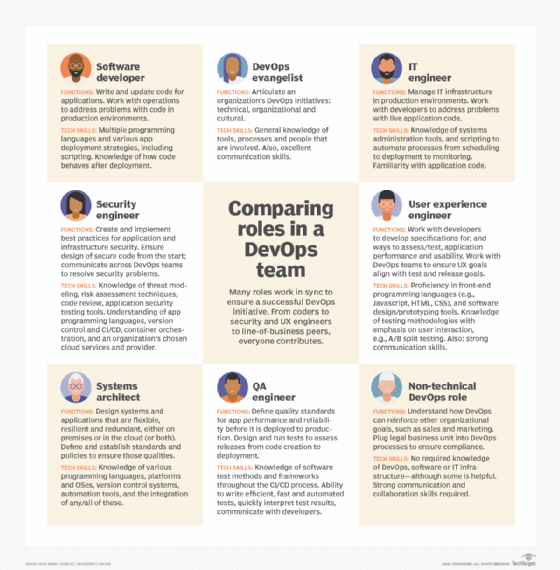

6. What are common DevOps roles?

DevOps roles may vary from one team to the next or they might go by different names. An individual might also perform multiple roles or shift from one role to another. DevOps candidates should have a solid understanding of the different possible roles, no matter which roles they're applying for. The following list includes some of the more common DevOps roles.

- DevOps engineer. Oversees DevOps processes and software development lifecycle and is responsible for fostering open communication and a collaborative environment.

- Software developer. Writes and updates application code and may also create unit tests or infrastructure as code (IaC) instruction sets.

- Software tester. Ensures applications meet quality assurance (QA) standards and can be safely released to production.

- Security engineer. Ensures application and infrastructure security, with a focus on data integrity and compliance.

- User experience (UX) engineer. Ensures applications meet UX expectations and UX goals align with test and release goals.

- Automation engineer. Plans and delivers automation solutions that eliminate manual, repetitive tasks and support the continuous integration/continuous delivery (CI/CD) pipeline.

- Release manager. Oversees the CI/CD pipeline, along with other operations that support building and deploying applications.

- DevOps evangelist. Promotes the organization's DevOps initiatives, while articulating its benefits.

7. How does DevOps differ from Agile?

DevOps and Agile are similar in many respects. They both emphasize small, incremental release cycles and both call for a cultural shift and change in mindset. But they're still different methodologies, and candidates should understand where those differences lie.

- Agile is a software development methodology that emphasizes flexibility, communication and the ability to respond to change, while relying heavily on customer feedback. Unlike traditional development methodologies, such as the waterfall approach, Agile delivers software through small, incremental release cycles with a focus on working software, rather than on thorough documentation.

- DevOps is concerned primarily with bridging the gap between development and operations in order to streamline application development and delivery. DevOps seeks to achieve the same level of flexibility as Agile but extends it to operations. DevOps is also concerned with receiving ongoing feedback throughout the application development and delivery process, which includes internal operations, as well as customer input.

- DevOps and Agile are not mutually exclusive, so there is nothing to prevent organizations from using the two methodologies together.

8. What are soft skills and why are they important to a DevOps team?

Soft skills are as important as technical skills and are critical to a successful DevOps effort. Soft skills are interpersonal skills necessary for individuals to work together effectively and toward a common goal. Candidates must be able to demonstrate that they possess an assortment of soft skills, which can include any of the following:

- Strong communication skills that help break down silos between teams and individuals.

- Willingness and ability to collaborate on projects and solve problems together.

- Ability to empathize with team members and other stakeholders in a way that values their opinions and appreciates their situations.

- Ability to assess situations, think critically and solve problems, taking into account both the big picture and individual details.

- Willingness to embrace and encourage an attitude of shared ownership and team culture.

DevOps methodologies

Questions in this category focus on the processes and tools used to support application development and delivery. With these types of questions, there is typically a strong emphasis on the CI/CD pipeline.

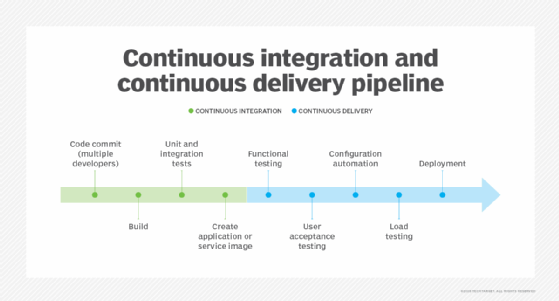

9. What are the differences between continuous integration, continuous delivery and continuous deployment?

Continuous integration, continuous delivery and continuous deployment are all part of the DevOps pipeline, and candidates should have a thorough understanding of how they work and the differences between them.

- Continuous integration. Developers make frequent, isolated changes to the code and then check those changes into source control, which triggers an automated build and testing process. If the changes pass the tests, they're added to the larger code base. If the changes do not pass the tests, they're rolled back so the issues can be resolved.

- Continuous delivery. CD is an extension of CI that automates application delivery to one or more infrastructure environments. Changes that have been committed and have passed the automated tests are considered valid release candidates and are incorporated into the CD process for delivery. In other words, CI focuses on the build and initial code tests, and CD focuses on the operations that occur after the changes have been committed and the application built.

- Continuous deployment. With continuous delivery, code is prepared for the target environment, but the application does not actually go live. For that, the code changes must be manually tested and approved. Continuous deployment, however, makes it possible to automatically test and release the software into a production environment, where it is scaled and monitored, thus avoiding the manual steps inherent in continuous delivery. Not every DevOps environment incorporates continuous deployment.
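
The difference between the three practices can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a real pipeline tool: the stage names, the `run_pipeline` function and the `approved` flag are all hypothetical stand-ins for what a CI/CD server would provide.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineResult:
    """Records which stages ran and where the change ended up."""
    stages_run: list = field(default_factory=list)
    status: str = "pending"


def run_pipeline(tests_pass: bool, continuous_deployment: bool,
                 approved: bool = False) -> PipelineResult:
    result = PipelineResult()

    # Continuous integration: every check-in triggers a build and automated tests.
    result.stages_run += ["build", "automated_tests"]
    if not tests_pass:
        result.status = "rejected"  # the change goes back to the developer
        return result

    # Continuous delivery: the release candidate is delivered to a
    # non-production environment (hypothetically named "staging" here).
    result.stages_run.append("deliver_to_staging")

    if continuous_deployment:
        # Continuous deployment: the release to production is automatic.
        result.stages_run.append("deploy_to_production")
        result.status = "live"
    elif approved:
        # Continuous delivery only: a person signs off before go-live.
        result.stages_run += ["manual_approval", "deploy_to_production"]
        result.status = "live"
    else:
        result.status = "awaiting_approval"
    return result
```

In this sketch, a change that passes its tests stops at `awaiting_approval` under continuous delivery, while the same change goes straight to `live` when continuous deployment is enabled.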

10. What are the benefits of continuous integration?

Continuous integration is an essential component of the DevOps methodology that offers numerous benefits, including:

- Application issues are discovered more quickly and before they're added to the code base.

- The application build and testing operations are automated, repeatable, fast and efficient.

- Every developer can commit often and to the same code base.

- Application updates and fixes are deployed more quickly and with fewer risks.

- All code check-ins are tracked, changes can be rolled back and everyone has access to the latest build.

11. What are the basic phases in a DevOps operation?

DevOps phases are often categorized and identified in different ways, which can make it confusing for candidates trying to come up with the best way to answer this type of question. Even so, the DevOps process typically includes the following phases or phases similar to these:

- Continuous development. Planning and coding the software, as well as creating test cases and automation processes. All code is managed through a source control solution.

- Continuous testing. Ongoing process that ensures an application meets QA, security and compliance standards. Continuous testing goes hand-in-hand with the next phase, continuous integration; however, testing can also occur outside the CI operation.

- Continuous integration. Ongoing process of validating, building and testing the application code as it's checked into source control.

- Continuous delivery. Automated process of delivering release candidates to one or more infrastructure environments.

- Continuous deployment. An optional phase in which the deployment process itself is automated, serving as a continuation of the CD phase.

- Continuous feedback and monitoring. Combination of feedback loops and monitoring tools that track both the application and DevOps processes and provide ongoing input that can be used to improve both. Feedback and monitoring are sometimes treated as two separate phases.

- Continuous operations. The ongoing evaluation of the DevOps infrastructure and application delivery for opportunities to improve and streamline operations.

12. What is the blue-green deployment model?

This is a change management strategy for releasing software code. It requires two identical hardware environments that are configured in exactly the same way, with one environment active and the other inactive.

New code is released to the inactive environment, where it is tested and verified. If the code is considered viable, that environment is made the active one and the other environment becomes the inactive one. If everything continues to work as it should, the update might then be applied to the newly designated inactive environment so it can serve as a failover. On the other hand, the environment might sit idle until the next update, essentially reversing the deployment process (in terms of which environment is updated first).
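
The switch-over logic can be illustrated with a small Python sketch. It is a simplified model, assuming that "switching" is just a matter of changing which environment is marked active; the `BlueGreenRouter` class, its method names and the `verify` callback are hypothetical and do not correspond to any particular load balancer or deployment tool.

```python
class BlueGreenRouter:
    """Toy model: two environments, only one of which receives live traffic."""

    def __init__(self, version: str):
        self.environments = {"blue": version, "green": version}
        self.active = "blue"

    @property
    def inactive(self) -> str:
        return "green" if self.active == "blue" else "blue"

    def release(self, new_version: str, verify) -> bool:
        """Deploy to the inactive environment and switch only if it verifies."""
        target = self.inactive
        self.environments[target] = new_version
        if not verify(new_version):
            return False      # live traffic never reached the new code
        self.active = target  # cut over; the old environment is now idle
        return True

    def rollback(self) -> None:
        """Point traffic back at the previously active environment."""
        self.active = self.inactive


router = BlueGreenRouter("1.0")
router.release("1.1", verify=lambda version: True)
print(router.active, router.environments)
```

Because the previous version is still running in the idle environment after a release, rolling back in this model is a single pointer change rather than a redeployment.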

13. What challenges do configuration management tools address?

Configuration management tools such as Ansible, Chef, Puppet and SaltStack can reduce costs, boost productivity and ensure the continuous delivery of IT services, which is essential to an effective DevOps operation. Although DevOps candidates don't need to have intimate knowledge of every tool on the market, they should have basic familiarity with the more popular tools and understand what problems these tools can help address. Configuration management tools can help address the following challenges:

- Inconsistencies across systems

- Configuration drift

- Manual, repetitive tasks

- Inefficient IT workflows

- Inadequate disaster recovery

- Site unreliability and long downtimes

- Difficulty scaling and maintaining availability

- Lack of visibility and change tracking

- Slow and error-prone infrastructure setups

- Infrastructure complexity and costs
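
Configuration drift, one of the challenges listed above, is easy to picture as a comparison between the settings a system is supposed to have and the settings it actually has. The following Python sketch shows the idea only; the `find_drift` function and the setting names are hypothetical, and real configuration management tools perform this comparison in far more sophisticated ways.

```python
def find_drift(desired: dict, actual: dict) -> dict:
    """Return {setting: (desired_value, actual_value)} for every mismatch.

    A value of None means the setting is absent on that side.
    """
    drift = {}
    for key in desired.keys() | actual.keys():
        want, have = desired.get(key), actual.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical settings for a single web server.
desired = {"nginx_version": "1.24", "max_connections": 1024, "tls": True}
actual = {"nginx_version": "1.24", "max_connections": 512, "debug": True}

for setting, (want, have) in sorted(find_drift(desired, actual).items()):
    print(f"{setting}: expected {want!r}, found {have!r}")
```

A tool that runs a check like this on a schedule, and then corrects whatever it finds, keeps systems consistent without manual inspection.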

14. What are the differences between a Chef cookbook and recipe?

DevOps candidates are not expected to have expert knowledge of all configuration management tools; however, they should understand some of the fundamental aspects of the more popular tools, including Chef. As part of that understanding, candidates should be able to differentiate between a Chef cookbook and recipe.

- A cookbook is a unit of configuration and policy distribution that defines a scenario. The cookbook includes everything needed to support that scenario, including templates, file distributions, attribute values, Chef extensions, and one or more recipes.

- A recipe is the most fundamental configuration element within a cookbook. A recipe is written in the Ruby programming language and is made up mostly of resources. A resource is a configuration policy that describes the desired state for a configuration item and declares the steps needed to bring that item to the desired state. A resource also includes the resource type, such as package, template or service, and it provides any other necessary details. A recipe is always part of a cookbook.

15. What is an Ansible playbook?

Ansible is another popular configuration management tool. One of the most important concepts in Ansible is the playbook. For this reason, candidates should have a basic understanding of what a playbook is and how it works.

A playbook serves as a blueprint for automating IT infrastructure, including operating systems, Kubernetes platforms, security systems and network components. Playbooks are written in YAML and can be used to program server nodes, applications, services and other devices. DevOps teams can save, share or reuse playbooks indefinitely.

A playbook contains one or more plays, each of which maps a group of target hosts to an ordered list of tasks that can be automatically executed against those hosts. Each task calls a module that performs the specific work, and the play and task definitions specify where and under what conditions the task should be executed. Ansible provides thousands of modules for performing a variety of IT tasks, including cloud management, networking, user management, security, communication and configuration management.
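
The relationship between hosts, tasks and modules can be illustrated with a toy runner written in Python. This is not Ansible's API or file format: the `package` and `service` functions, the `play` dictionary and the `run_play` function are hypothetical, and they only mimic the way a task hands its arguments to a module and reports whether anything changed.

```python
# Toy "modules": each receives a host's state plus arguments and
# returns True if it had to change anything on that host.
def package(state: dict, name: str) -> bool:
    if name in state["packages"]:
        return False
    state["packages"].add(name)
    return True


def service(state: dict, name: str) -> bool:
    if name in state["running"]:
        return False
    state["running"].add(name)
    return True


# A play maps a group of hosts to an ordered list of tasks.
play = {
    "hosts": ["web-1", "web-2"],
    "tasks": [
        {"name": "Install nginx", "module": package, "args": {"name": "nginx"}},
        {"name": "Start nginx", "module": service, "args": {"name": "nginx"}},
    ],
}


def run_play(play: dict, inventory: dict) -> dict:
    """Run every task on every host and report 'changed' or 'ok' per task."""
    report = {}
    for host in play["hosts"]:
        report[host] = [
            "changed" if task["module"](inventory[host], **task["args"]) else "ok"
            for task in play["tasks"]
        ]
    return report
```

Running the same play twice against the same hosts reports `ok` for every task the second time, which reflects the idempotent behavior that makes playbooks safe to reuse.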

16. What is a distributed version control system?

A distributed version control system (DVCS) such as Git, Bazaar and Mercurial delivers a local copy of the complete repository to everyone working on that project. Participants carry out commit, branch and merge operations locally and then push their changes to the other users. The DVCS does not require a centralized server to store the repository, as is the case with a centralized version control system such as Subversion.

With a DVCS, team members experience fewer merge conflicts and can merge branches more quickly, and they can work offline when needed. The DVCS also ensures there are multiple backup copies of the repository at any one time. However, the DVCS does not provide the same locking capabilities as a centralized system and might not be as secure because more people have the code, leading to greater exposure and risks.

17. What are the differences between forking and branching in Git?

A source control solution is essential to DevOps processes, and one of the most popular source control tools used in DevOps is Git. Candidates should understand how Git works, as well as fundamental Git concepts, such as the differences between forking and branching:

- Forking. The process of creating a copy of a repository that can be used as a starting point for another project or as a way to experiment with changes without affecting the original repository. The fork is a completely independent project whose changes may or may not be synced back into the original repository. A fork might be maintained indefinitely or used only for a short duration.

- Branching. The process of creating a parallel version of the repository that's contained in the original repository but does not affect the main branch, enabling developers to make changes without impacting that branch. Branching is used extensively in Git to support independent development. For example, developers can create a branch to work on a specific feature within the application. After they've made their changes, they can merge the branch back into the main branch and the new feature will be incorporated into the application.

DevOps-supporting technologies

Questions about supporting technologies are concerned with those platforms, services and methodologies that support DevOps.

18. What are the advantages of infrastructure as code?

DevOps teams routinely use IaC as part of their application development and delivery processes. For this reason, candidates should understand how IaC works and its benefits. The following list includes several of these benefits.

- Infrastructure is defined in code, so it can be stored in source control, providing the same versioning and history benefits as other code.

- Infrastructure is repeatable, making it easier to deploy an application to multiple environments, while providing consistency across those environments.

- Infrastructure deployments can be incorporated into automated processes, reducing manual tasks and simplifying administration, while increasing site reliability.

- DevOps teams can implement infrastructure more quickly, enabling them to deploy their applications to production much faster.

- IaC can increase efficiency and help minimize deployment risks, while reducing deployment costs.

19. What are the differences between the imperative and declarative approaches in IaC?

When automating infrastructure, IaC tools typically take an imperative approach or a declarative approach, although some tools support both. DevOps candidates should understand the differences between these two approaches.

- Declarative. Defines the infrastructure's desired state or outcome, including what resources are needed, but leaves it to the IaC tool to determine the best way to implement the infrastructure.

- Imperative. Defines the specific sequence of steps that the IaC tool should take to achieve the desired configurations. The IaC tool then carries out the commands in the specified order.

The declarative method can simplify configurations, but it doesn't provide the same level of control as the imperative approach, which can be a factor when there are subtle environmental variations. However, the level of control offered by the imperative approach can be daunting with complex implementations, especially if it becomes necessary to change the desired state, which means updating all the steps necessary to reach the new state.
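
A short Python sketch can make the contrast concrete. It assumes infrastructure can be modeled as a set of server names, which is a deliberate oversimplification; the function names and server names are hypothetical and are not drawn from any IaC tool.

```python
def imperative_setup(servers: set) -> set:
    """Imperative: the author spells out every step, in order."""
    servers = set(servers)
    servers.add("web-1")
    servers.add("web-2")
    servers.discard("legacy-1")
    return servers


def declarative_apply(desired: set, current: set) -> tuple:
    """Declarative: the author states the end state; the tool derives the steps."""
    to_create = sorted(desired - current)
    to_delete = sorted(current - desired)
    return to_create, to_delete


# Imperative: the steps themselves are the definition.
print(imperative_setup({"legacy-1"}))

# Declarative: only the desired state is written down.
print(declarative_apply(desired={"web-1", "web-2"}, current={"legacy-1"}))
```

Changing the target in the declarative version means editing only the `desired` set, whereas the imperative version requires rewriting the individual steps, which is the maintenance burden described above.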

20. How are Docker containers and images related?

When people talk about containers, they're often referring to Docker containers, which have been at the heart of the container movement. Candidates should have a strong understanding of how Docker containers work and how they can be used in DevOps. To this end, they should be able to distinguish between Docker images and Docker containers.

- A Docker image is a read-only immutable file that serves as the basis for creating Docker containers. An image does not have state and it never changes. It contains an ordered collection of root filesystem changes and their corresponding execution parameters. In most cases, the filesystems are layered and stacked on top of each other. An image is, essentially, a template that includes the source code, libraries, dependencies and other components necessary for creating containers.

- A Docker container is a runtime instance of a Docker image. It includes the image itself, along with an execution environment and standard set of instructions. A container provides a virtualized runtime environment for isolating applications from the underlying system. The environment is portable and lightweight and can be easily created, started, stopped, moved or deleted. Containers can also run side-by-side on the same system without interfering with each other.

21. What is a Dockerfile?

A Dockerfile is a text document that contains the commands necessary for building a Docker image. By using Dockerfiles, developers don't have to remember how to set up their images each time they create one. Docker can build images automatically by reading the Dockerfile instructions, helping to avoid a lot of manual steps. When creating Dockerfiles, developers should follow best practices such as:

- Use caching effectively to avoid having to rerun build steps unnecessarily.

- Reduce image sizes to speed up deployments and reduce attack surfaces.

- Consider maintainability, such as using official images or making tags more specific.

- Ensure reproducibility by fetching dependencies in a separate step, removing build dependencies or taking other actions.

22. How can Kubernetes benefit DevOps?

Many DevOps environments use containers to deliver their applications. Kubernetes is a popular tool for working with containers. For this reason, candidates should be well versed in how Kubernetes works and its benefits. The following list describes many of these benefits:

- Kubernetes supports the "build once, deploy everywhere" model, providing consistency across development, testing, staging and application environments.

- Application development and deployment are more efficient, leading to greater productivity and faster time to market.

- DevOps teams can easily scale Kubernetes without sacrificing availability.

- Kubernetes can help save money because it can increase productivity, streamline operations, speed up application delivery and use infrastructure resources more efficiently.

- Kubernetes is portable, open source and compatible across different platforms and frameworks, making it a highly flexible solution that can support multi-cloud environments.

- Kubernetes automates container-related operations, offers seamless updates, provides no-downtime deployments and supports IaC.

23. What components make up a Kubernetes cluster?

Because Kubernetes is so widely implemented, DevOps candidates should understand how the platform works and have a basic understanding of the components that make up a Kubernetes cluster, which includes a control plane and one or more worker nodes.

- The control plane manages the worker nodes, makes global decisions about the cluster and responds to cluster events. The control plane is typically hosted on multiple servers that run the components necessary to maintain the cluster and its containers.

- A cluster must include at least one worker node, although most usually include many nodes. Each node runs one or more pods. A pod is the smallest deployable unit in a Kubernetes cluster. It supports one or more containers and includes a specification for how to run the containers. A pod's containers are configured with shared storage and network resources. Each worker node also runs other components needed to maintain the pods and their containers.

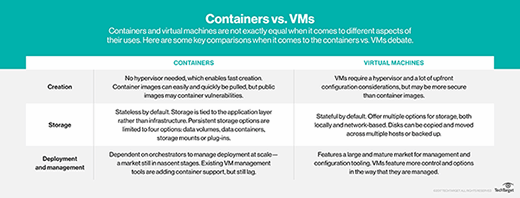

24. How do virtual machines differ from containers?

DevOps teams often rely on both containers and virtual machines, sometimes running containers within VMs. Although they both provide virtual environments for running applications, there are several important differences between them, including:

- A VM runs a complete operating system, including its own kernel. A container is built on top of the host OS and utilizes its kernel.

- Multiple VMs with different operating systems can run on the same host, regardless of the host's OS. Multiple containers on the same host are limited to that host's OS.

- Containers cannot achieve the same level of isolation as VMs, making them less secure.

- Containers are more portable and lightweight than VMs, require fewer resources than VMs and are faster to provision and boot up.

- Because VMs are full systems, maintaining and updating them is more complex than with containers.

- Containers are generally quicker and easier to scale than VMs because they're more lightweight and portable.

25. What are the advantages of using cloud services for DevOps?

Organizations do not have to use cloud services for their DevOps processes, but many find them useful because they offer the technology and flexibility needed to get a DevOps environment up and running in little time and with less effort. Candidates should have a good understanding of the cloud's benefits, which can include any of the following:

- DevOps teams can provision cloud services in a matter of minutes, rather than days or weeks.

- Cloud services make it easy for DevOps teams to scale their operations up and down to meet fluctuating demands.

- DevOps teams can automate many of their operations in cloud environments, providing more time for team members to focus on other efforts.

- Cloud providers maintain and monitor infrastructure, freeing up the DevOps teams for other tasks.

- Major cloud providers support extensive partner ecosystems that make it easy to integrate with third-party tools and services.

- Cloud services make it easier to get started and keep working, resulting in quicker development cycles and faster time to market.

DevOps practices

These questions focus on the day-to-day operations carried out by DevOps team members in their various roles.

26. What is SSH used for?

Secure Shell (SSH), which is also known as Secure Socket Shell, is a network protocol that provides systems administrators with a secure method for accessing a computer over an unsecured network. The protocol supports strong password authentication, public key authentication and encrypted communications over an open network such as the internet. Secure Shell can also refer to the suite of utilities that implement the SSH protocol.

Sys admins use SSH extensively to manage systems and applications remotely. It enables them to log into a computer over a network and execute commands, including the ability to move files among computers. An SSH implementation might also include support for the application protocols used for terminal emulation or file transfers. In addition, administrators can use SSH to create secure tunnels for other application protocols.

27. What is component-based development or componentization?

This is an approach to development that breaks software down into identifiable components that can be developed and deployed independently. After they're deployed, the components are connected together through the use of workflows and network connections and are presented as a single application. The components typically use standard interfaces and conform to common componentization models such as service-oriented architecture (SOA), Common Object Request Broker Architecture (CORBA), Component Object Model+ (COM+) or JavaBeans.

Component-based development can make it easier for developers to collaborate on a single project. Additionally, components that meet well-defined specifications can be reused, helping to speed up development and increase reliability. Not only can DevOps teams get software out faster, but they can also create better quality software. Additionally, component-based development offers greater flexibility. Developers can easily replace or add components as business requirements change, and workflows can be spread across multiple servers, leading to better performance.

28. What types of testing does Selenium support?

Selenium is a popular test automation framework that supports automated testing. Because of its popularity, candidates should be familiar with how Selenium fits into DevOps and the types of testing it supports.

- Functional testing. Determines whether a feature or system is functioning properly and does what it's supposed to do. Functional tests ensure the DevOps team is building the product correctly.

- Acceptance testing. Determines whether a feature or system meets the customer's requirements and expectations. Acceptance testing ensures the DevOps team is building the right product. This type of testing is often considered a subcategory of functional testing.

- Performance testing. Measures how well an application is performing. Selenium supports two categories of performance testing: load testing and stress testing. Load testing determines how well the application works under different levels of expected demand. Stress testing determines how the application behaves when demand exceeds its normal operating capacity.

- Regression testing. Ensures a change, fix or added feature has not broken any existing functionality. Regression testing often requires the re-execution of tests that have already been executed.

Selenium also supports test-driven development (TDD) and behavior-driven development (BDD). With TDD, unit tests drive the design of a feature and development is carried out to make the tests pass. BDD is based on TDD but has the goal of involving all parties in the application's development.
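
The TDD cycle can be shown with a minimal example that uses Python's standard `unittest` module rather than Selenium, so it runs without a browser. The `apply_discount` function and its expected behavior are hypothetical; the point is only the order in which the two pieces are written.

```python
import unittest


# Step 1 (written first): the tests describe the behavior the feature must have.
class TestApplyDiscount(unittest.TestCase):
    def test_percentage_is_subtracted(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_percentages_over_100(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 120)


# Step 2 (written second): just enough code to make those tests pass.
def apply_discount(price: float, percent: float) -> float:
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100
```

Saved to a file, the tests can be run with `python -m unittest`. Before step 2 exists they fail, and development continues until they pass.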

29. How does continuous testing differ from automated testing?

Testing is an essential component of the DevOps process and is integral to successful application delivery. Two important concepts of DevOps testing are automated testing and continuous testing. Candidates should understand the differences between them.

- Automated testing simply refers to the process of automating testing processes that have traditionally been performed manually. Test automation is essential to implementing effective continuous integration because it ensures new or updated code doesn't introduce errors into the application. Automation also makes it possible to run tests repeatedly or run them in parallel. Not surprisingly, automated testing is much faster than manual processes, helping to achieve quicker development cycles.

- Continuous testing typically goes hand-in-hand with automated testing, but they are not one and the same. Continuous testing simply means that testing is ongoing throughout the development and deployment processes, even if it occurs outside the CI/CD pipeline. Continuous testing is typically considered a phase in the DevOps process that ensures an application meets QA, security and compliance standards. Although much of the testing is part of the automated testing included in the CI process, testing can also occur when staging and deploying an application.

30. Which KPIs are important to track?

DevOps teams should track a range of key performance indicators (KPIs) when monitoring their DevOps environments and applications. The following is a list of some of the more common KPIs:

- Application performance

- Change lead time (from inception to production)

- Change volume (number of new user stories or code changes)

- Defect volume and escape rate

- Deployment frequency

- Failed deployments

- Feature prioritization (based on end-user usage)

- Mean time to detection

- Mean time to recovery

- Rate of security test passes
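
Several of these KPIs are simple calculations over deployment and incident records. The following Python sketch shows how three of them might be computed. The sample data, the record layout and the function names are all hypothetical; in practice the figures would come from the team's CI/CD and monitoring tools.

```python
from datetime import datetime, timedelta

# Hypothetical deployment log: (timestamp, succeeded?)
deployments = [
    (datetime(2021, 3, 1, 10, 0), True),
    (datetime(2021, 3, 2, 15, 30), False),
    (datetime(2021, 3, 4, 9, 0), True),
    (datetime(2021, 3, 5, 11, 0), True),
]

# Hypothetical incidents: (detected_at, recovered_at)
incidents = [
    (datetime(2021, 3, 2, 15, 45), datetime(2021, 3, 2, 16, 30)),
    (datetime(2021, 3, 4, 22, 0), datetime(2021, 3, 4, 22, 15)),
]


def deployment_frequency(log, days: int) -> float:
    """Average number of deployments per day over the reporting window."""
    return len(log) / days


def failed_deployment_rate(log) -> float:
    """Share of deployments that failed."""
    failures = sum(1 for _, succeeded in log if not succeeded)
    return failures / len(log)


def mean_time_to_recovery(records) -> timedelta:
    """Average time between detecting an incident and recovering from it."""
    total = sum((end - start for start, end in records), timedelta())
    return total / len(records)


print(deployment_frequency(deployments, days=5))  # 0.8 deployments per day
print(failed_deployment_rate(deployments))        # 0.25
print(mean_time_to_recovery(incidents))           # 0:30:00
```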
