metadata

Often referred to as data that describes other data, metadata is structured reference data that helps to sort and identify attributes of the information it describes. In Zen and the Art of Metadata Maintenance, John W. Warren describes metadata as "both a universe and DNA."

Meta is a prefix that -- in most information technology usages -- means "an underlying definition or description." Metadata summarizes basic information about data, which can make it easier to find, use and reuse particular instances of data.

For example, author, date created, date modified and file size are examples of very basic document file metadata. Having the ability to search for a particular element (or elements) of that metadata makes it much easier for someone to locate a specific document.

In addition to document files, metadata is used for:

- computer files

- images

- relational databases

- spreadsheets

- videos

- audio files

- web pages

The use of metadata on web pages can be very important. The metadata contains descriptions of the page's contents, as well as keywords linked to the content. This metadata is often displayed in search results by search engines, meaning its accuracy and details could influence whether or not a user decides to visit a site. This information is usually expressed in the form of meta tags.

Search engines evaluate meta tags to help decide a web page's relevance. Meta tags were used as the key factor in determining position in a search until the late 1990s. The increase in search engine optimization (SEO) towards the end of the 1990s led to many websites to keyword stuffing their metadata to trick search engines, making their websites seem more relevant than others.

Since then, search engines have reduced their reliance on meta tags, although they are still factored in when indexing pages. Many search engines also try to thwart web pages' ability to deceive their system by regularly changing their criteria for rankings, with Google being notorious for frequently changing its ranking algorithms.

Metadata can be created manually or by automated information processing. Manual creation tends to be more accurate, allowing the user to input any information they feel is relevant or that would help describe the file. Automated metadata creation can be much more elementary, usually only displaying information such as file size, file extension, when the file was created and who created the file.

Metadata use cases

Metadata is created anytime a document, a file or other information asset is modified, including its deletion. Accurate metadata can be helpful in prolonging the lifespan of existing data by helping users find new ways to apply it.

Metadata organizes a data object by using terms associated with that particular object. It also enables objects that are dissimilar to be identified and paired with like objects to help optimize the use of data assets. As noted, search engines and browsers determine which web content to display by interpreting the metadata tags associated with an HTML document.

The language of metadata is written to be understandable to both computer systems and humans, a level of standardization that contributes to better interoperability and integration between disparate applications and information systems.

Companies in digital publishing, engineering, financial services, healthcare and manufacturing use metadata to gather insights on ways to improve products or upgrade processes. For example, streaming content providers automate the management of intellectual property metadata so it can be stored across an array of applications, thus protecting copyright holders while at the same time making music and videos accessible to authenticated users.

The maturity of AI technologies is somewhat easing the traditional burden of managing metadata by automating previously manual processes to catalog and tag information assets.

History and origins of metadata

Jack E. Myers, founder of Metadata Information Partners (now The Metadata Co.), claims to have coined the term in 1969. Myers filed a trademark for the unhyphenated word "metadata" in 1986. Despite this, references to the term appear in academic papers that predate Myers' claim.

In an academic paper published in 1967, Massachusetts Institute of Technology professors David Griffel and Stuart McIntosh described metadata as "a record … of the data records" that result when bibliographic data about a topic is gathered from discrete sources. The researchers concluded that a "meta-linguistic approach," or "meta language," is needed to enable a computer system to properly interpret this data and its context to other relevant pieces of data. Unlike Myers, Griffel and McIntosh treated "meta" as a prefix to "data."

In 1964, an undergraduate computer science major named Philip R. Bagley started work on his dissertation, in which he argued that efforts to "make composite data elements" ultimately rests on the ability to "associate explicitly" to a second and related data element, which "we might term a 'metadata element.'" Although his thesis was rejected, Bagley's work, including his reference to metadata, subsequently was published as a report under a contract with the U.S. Air Force Office of Scientific Research in January 1969.

Types of metadata and examples

Metadata is variously categorized based on the function it serves in information management.

- Administrative metadata allows administrators to impose rules and restrictions governing data access and user permissions. It also furnishes information on required maintenance and management of data resources. Often used in the context of ongoing research, administrative metadata includes such details as date created, file size and type, and archiving requirements.

- Descriptive metadata identifies specific characteristics of a piece of data, such as bibliographic data, keywords, song titles, volume numbers, etc.

- Legal metadata provides information on creative licensing, such as copyrights, licensing and royalties.

- Preservation metadata guides the placement of a data item within a hierarchical framework or sequence.

- Process metadata outlines procedures used to collect and treat statistical data. Statistical metadata is another term for process metadata.

- Provenance metadata, also known as data lineage, tracks the history of a piece of data as it moves throughout an organization. Original documents are paired with metadata to ensure that data is valid or to correct errors in data quality. Checking the provenance is a customary practice in data governance.

- Reference metadata relates to information that describes the quality of statistical content.

- Statistical metadata describes data that enables users to properly interpret and use statistics found in reports, surveys and compendium.

- Structural metadata reveals how different elements of a compound data object are assembled. Structural metadata is often used in digital media content, such as describing how pages in an audiobook should be organized to form a chapter, and how chapters should be organized to form volumes, and so on. The term "technical metadata" is a synonym most closely associated with items in digital libraries.

- Use metadata is data that is sorted and analyzed each time a user accesses it. Based on analysis of use metadata, business can pick out trends in customer behavior and more readily adapt their products and services to meet their needs.

How to use metadata effectively

The accelerated rate of data growth has fueled new interest in the potential business value that can be derived from metadata. A variety of data structures exist that present both opportunities as well as challenges.

Metadata management provides an organizational framework to harmonize discrete data sets stored across various system. It also provides an organizational consensus to describe information, often broken into business, operational and technical data.

Companies implement metadata management to winnow out older data and develop a taxonomy to classify data according to its business value. A component of this is a catalog or central database that serves as a metadata repository, also known as a data dictionary.

In addition to classifying data, metadata management strategies are used to improve data analytics, develop a data governance policy and establish an audit trail for regulatory compliance.

At its core, metadata management is about enabling people to identify the attributes of a particular piece of data using a web-based user interface. The attribute might be the file's name, its author, a customer ID number, and so on. The person requesting the document is thus able to see and understand the different attributes of the data, the enterprise system it resides in and the reasons those attributes were created.

As of November 2020, Alation, ASG, Alex Solutions, Collibra, Erwin, IBM, Informatica, Oracle, SAP and SmartLogic are ranked among leading metadata management platform vendors by IT analyst firm Gartner in its Magic Quadrant for Metadata Management Solutions.

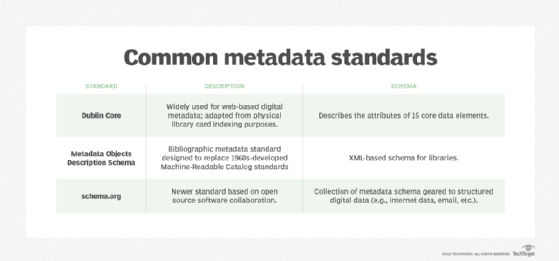

Standardization of metadata

A number of industry standards have been developed to make metadata more useful. These standards ensure consistency on the common language, format, spelling and other attributes to be used to describe data. Each standard is based on a specific schema that provides an overarching structure for all its metadata.

Dublin Core is a widely used general standard originally developed to aid in the indexing of physical library card catalogs. The standard has since been adapted for web-based digital metadata. Dublin Core describes the attributes of 15 core data elements: title, creator, subject, description, publisher, contributors, date, type, format, identifier, source, language, relation, coverage and rights management.

A similar bibliographic metadata standard is Metadata Objects Description Schema, an XML-based schema for libraries, spawned by the Network and Standards Development Office of the U.S. Library of Congress as a successor to Machine-Readable Catalog standards developed in the 1960s.

A newer standard, schema.org, is based on open source software collaboration that provides a collection of metadata schema geared to structured internet data, email and other forms of digital data.

Industry-specific metadata schema

A number of standard metadata schema have been developed to meet the unique requirements of certain disciplines and industry verticals.

Arts and humanities:

- Text Encoding Initiative is a consortium of institutions developing standards that specify encoding methods for representing machine-readable text in digital form.

- VRA Core, jointly developed by the Library of Congress and the Visual Resources Association, is described as "a data standard for the description of works of visual culture as well as the images that document them."

Culture and society:

- Data Documentation Initiative standardizes descriptions of data used in behavioral science and related disciplines.

- Open Archives Language Community, based on Dublin Core, attempts to develop a worldwide virtual repository of language resources.

Sciences:

- Darwin Core is used for sharing information on biological specimens.

- Ecological Metadata Language is a readable XML markup format for sharing data on earth sciences.

- Federal Geospatial Data Committee develops metadata formats for documenting geospatial research data.