leaky bucket algorithm

What is leaky bucket algorithm?

The leaky bucket algorithm is a "traffic shaping" algorithm to reduce the load the transport layer places on the network layer and reduce congestion in the network. Commonly used in asynchronous transfer mode (ATM) networks, the algorithm provides a way to temporarily store a variable number of requests and then organize them into a set-rate output of packets.

Network congestion and traffic shaping

Traffic congestion is a common problem in all networks. When too many packets flow through a network, packet delays or packet losses can occur, both of which degrade the network's performance. This situation is known as congestion.

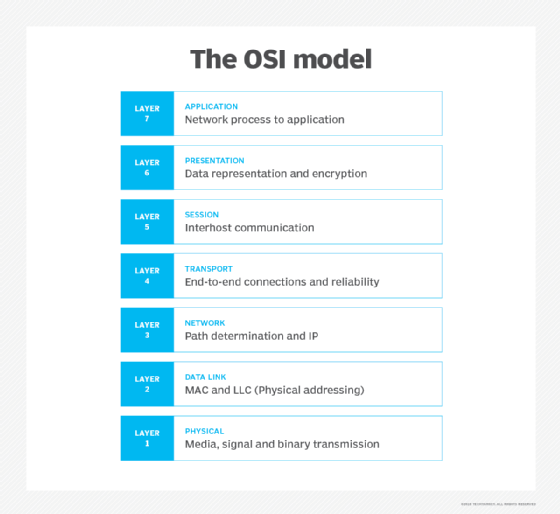

Congestion in a network often happens when the traffic is bursty. In the seven-layer OSI model for networking system communications, the network and transport layers are layer 3 and layer 4, respectively. The two layers are jointly responsible for handling network congestion, which they do by "shaping" the traffic.

Traffic shaping is a congestion management technique. It helps to control the amount of traffic sent to the network and regulates the rate of data transmission. The goal is to reduce the load the transport layer places on the network to reduce congestion and improve network performance. One commonly used method for traffic shaping is the leaky bucket algorithm.

Leaky bucket algorithm explained

The token bucket algorithm and leaky bucket algorithm are two ways to shape network traffic and reduce congestion. The leaky bucket controls both the total amount of traffic and the rate at which it is sent to the network. It can detect both gradually increasing and dramatic memory error increases by comparing how the average and peak data rates exceed set acceptable background amounts.

The algorithm works similarly to the way a real-world leaky bucket holds water: It collects data up to a maximum capacity. The data is released (leaked) from the bucket at a set rate and based on a fixed packet size. When the bucket runs out of data, the leaking stops. If incoming data would overfill the bucket, then the packet is considered "nonconformant," so the new data is not added to the bucket. Data is added to the bucket as space becomes available for conforming packets.

The leaky bucket is used to implement traffic policing and traffic shaping in Ethernet and cellular data networks. It can also be used to control metered-bandwidth internet connections to prevent users from going over the allotted bandwidth for a specified period, thereby avoiding extra charges.

How the leaky bucket algorithm works, with an example

The leaky bucket algorithm is ideal for smoothing out bursty traffic. Just like a hole at the bottom of a water bucket leaks water out at a fixed rate, the leaky bucket algorithm does the same with network traffic. Bursty chunks of traffic are stored in a "bucket" with a "hole" and sent out at a controlled, average rate.

The hole represents the network's commitment to a particular bandwidth. The leaky bucket shapes the incoming traffic to ensure it conforms to the commitment. Thus, regardless of how much data traffic enters the bucket, it always leaves at a constant output rate (the commitment). This mechanism regulates the packet flow in the network and helps to prevent congestion that leads to performance deterioration and traffic delays.

Leaky bucket example. Suppose data enters the network from various sources at different speeds. Consider one bursty source that sends data at 20 Mbps for 2 seconds for a total of 40 Mbps. Then it is silent, sending no data for 5 seconds. Then it again transmits data at a rate of 10 Mbps for 3 seconds, thus sending a total of 30 Mbps. So, in a time span of 10 seconds the source sends 70 Mb data.

However, the network has only committed a bandwidth of 5 Mbps for this source. Therefore, it uses the leaky bucket algorithm to output traffic at the rate of 5 Mbps during the same time period of 10 seconds, which smooths out the network traffic.

Without the leaky bucket algorithm in place, the initial burst of 20 Mbps would have consumed a lot more bandwidth than the network had reserved (committed) for the source, which would have caused congestion and a slowdown in the network.

Implementing the leaky bucket algorithm with a FIFO queue

A first-in, first-out (FIFO) queue enhances communications between applications. It is especially useful when the order of operations and events in the network is critical. Also known as first-come, first-served (FCFS) queuing, FIFO queuing means that the first packet that arrives at a router is also the first to be transmitted. Furthermore, if a packet arrives and the queue -- also known as the buffer space -- is full, it is discarded by the router.

A FIFO queue can be used to implement a leaky bucket algorithm in a network. The queue holds the packets and doesn't allow them to pass if they exceed the bandwidth committed by the network for a source. If the traffic consists of variable-length packets, the output rate is fixed based on the commitment in bytes or bits.

However, if the traffic consists of fixed-size packets, the algorithm will drop the packets arriving at the tail end of the FIFO. This phenomenon, known as a "tail drop," happens regardless of the packet's importance or which flow it belongs to.

Applications of leaky bucket algorithm

The traffic shaping capability of the leaky bucket algorithm is useful for both computing and telecommunications applications. In computing, the bucket is the server, which has fixed processing capability or size. The output that comes from the bucket is the processed data. This data leaves the bucket at a constant rate, depending on the server's processing speed, to smooth out the traffic and prevent bursts.

The algorithm is also implemented to prevent congestion in telecommunications networks. When subscribers sign a contract with a telecom provider, they can use a specific bandwidth within a specified period (per day, per month, etc.) If the subscriber uses more bandwidth than is allocated to them, excess packets will spill out of the bucket. The network will then prevent the subscriber from using more than their allocated bandwidth.

See also: traffic shaping